Adapting GRADE for Reproductive Environmental Health: A Systematic Framework for Evidence Synthesis and Policy


Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on adapting the Grading of Recommendations, Assessment, Development, and Evaluations (GRADE) framework for systematic reviews in reproductive environmental health. It addresses the critical need for valid and transparent evidence grading to translate research on environmental exposures and reproductive/children's health outcomes into policy recommendations [1]. The content covers foundational concepts, methodological application steps tailored to field-specific challenges (such as observational study dominance and lifestage-specific vulnerabilities), strategies for troubleshooting common implementation barriers, and a comparative analysis of GRADE against other evidence grading systems. By synthesizing current methodological surveys and practical case studies, the article aims to equip professionals with the tools to enhance the rigor, consistency, and impact of evidence synthesis in this specialized and high-stakes field [1] [2] [5].

The Imperative for Rigorous Evidence Grading in Reproductive Environmental Health

Systematic reviews are pivotal for translating environmental health research into protective policies. However, a 2024 methodological survey revealed a significant gap: only 9.8% (18 out of 177) of systematic reviews on air pollution and reproductive/children's health employed a formal system for grading the overall body of evidence [1]. This finding underscores a critical lack of standardization in a field whose unique complexities generic evidence assessment tools struggle to address [1].

The dominant framework, the Grading of Recommendations, Assessment, Development, and Evaluations (GRADE), was developed for clinical trials and requires careful adaptation for environmental health questions [1] [2]. The core challenges that necessitate this adaptation include: the predominantly observational nature of studies, which complicates causal inference; the existence of critical developmental windows of susceptibility from preconception through adolescence; and the reality of complex, real-world exposures to chemical mixtures rather than single agents [1] [3]. This guide provides a comparative analysis of methodological approaches and experimental data central to advancing systematic review practices in this specialized field.

Comparison Guide: Evidence Grading Frameworks for Observational Environmental Health

The evaluation of evidence quality is foundational to a systematic review. The table below compares the most commonly applied frameworks, highlighting their adaptation needs for reproductive environmental health [1] [2].

Table: Comparison of Evidence Grading and Study Quality Assessment Frameworks

| Framework Name | Primary Purpose & Origin | Key Domains/Considerations | Modifications Needed for Reproductive/Environmental Health | Data Integration Capability |

|---|---|---|---|---|

| GRADE (Grading of Recommendations Assessment, Development, and Evaluation) | Grading the certainty (quality) of a body of evidence and strength of recommendations. Developed for clinical healthcare [1] [2]. | Risk of bias, inconsistency, indirectness, imprecision, publication bias. Observational evidence starts as "low certainty" [2]. | Requires domain expansion: exposure assessment accuracy, developmental timing, co-exposures, and alternative toxicological evidence (animal, in vitro) [1] [2]. | High. Structured process for moving from evidence to decision (EtD), suitable for integrating multiple evidence streams [2]. |

| Newcastle-Ottawa Scale (NOS) | Assessing the risk of bias (quality) of individual observational studies (case-control, cohort) [1]. | Selection of groups, comparability of groups, ascertainment of exposure/outcome. | Criteria must be refined to evaluate lifestage-specific confounding, exposure windows, and biomarker validity [1]. | Low. Designed for single-study assessment, not for grading an entire evidence body or integrating diverse data types. |

| Risk of Bias in Systematic Reviews (ROBIS) | Assessing the risk of bias in the conduct and synthesis of a systematic review [1]. | Study eligibility, identification/selection, data collection/appraisal, synthesis. | Critical for evaluating how well a review itself addressed field-specific challenges (e.g., exposure timing, mixture effects) [1]. | Not applicable. It is a tool for meta-evaluation of review methodology. |

| US EPA Integrated Risk Assessment Framework | Hazard identification, dose-response, exposure assessment, and risk characterization for chemical regulation. | Evaluates human, animal, and mechanistic evidence to identify hazards and quantify risk. | Primarily a risk assessment, not an evidence-grading system for systematic reviews. Its structure for evidence integration is informative [2]. | Very High. Explicitly designed to integrate epidemiological, toxicological, and mechanistic data. |

Supporting Experimental Data & Protocol: The 2024 methodological survey identified the frameworks above by systematically searching PubMed, Embase, and Epistemonikos for reviews on air pollution and reproductive/child health [1]. The review process involved dual independent screening, data extraction, and application of the ROBIS tool to evaluate the methodological quality of the included systematic reviews themselves [1].

Comparison Guide: Defining and Operationalizing Developmental Windows

Sensitivity to environmental insults varies dramatically across the lifespan. Precise definition of life stages is therefore not just a demographic detail but a core methodological variable affecting exposure assessment, confounding control, and biological plausibility [4].

Table: Harmonized Early-Life Age Groups for Exposure and Risk Assessment

| Life Stage (Descriptor) | Proposed Age Bins (Tier 1 - More Granular) | Consolidated Bins (Tier 2 - For Data-Poor Scenarios) | Key Physiological/Behavioral Rationale |

|---|---|---|---|

| Preterm & Term Newborn | Birth to <1 month; 1 to <3 months [4]. | 0 to <3 months | Immature metabolic and renal clearance; rapid brain development [3]. |

| Infant | 3 to <6 months; 6 to <12 months [4]. | 3 to <12 months | High hand-to-mouth activity; breastfeeding/dietary shifts; increased mobility [4]. |

| Toddler | 1 to <2 years; 2 to <3 years [4]. | 1 to <3 years | High exploration, mouthing behavior; diet resembles adult food; high respiratory rate per body weight [4] [3]. |

| Child | 3 to <6 years; 6 to <11 years [4]. | 3 to <12 years | Continued brain development; higher calorie and water intake per kg than adults; specific activity patterns (e.g., playing close to ground) [4]. |

| Adolescent | 11 to <16 years; 16 to <21 years [4]. | 12 to <18 years | Pubertal hormonal changes; brain maturation (prefrontal cortex); evolving independence and behaviors [4]. |
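
The age bins in the table above can be applied mechanically when harmonizing study populations across reviews. The following is a minimal sketch, not a validated tool: the bin edges are transcribed from the table (converted to months), while the names `TIER1_EDGES`, `TIER2_EDGES`, and `assign_bin` are hypothetical and introduced only for illustration. Note that, as tabulated, Tier 2 ends at 18 years while Tier 1 extends to 21 years, so some ages have a Tier 1 bin but no Tier 2 bin.

```python
from bisect import bisect_right

# Bin edges in months, transcribed from the table above.
# Each bin is [lower, upper): the lower edge is inclusive, the upper exclusive.
TIER1_EDGES = [0, 1, 3, 6, 12, 24, 36, 72, 132, 192, 252]
TIER1_LABELS = [
    "Birth to <1 month", "1 to <3 months", "3 to <6 months", "6 to <12 months",
    "1 to <2 years", "2 to <3 years", "3 to <6 years", "6 to <11 years",
    "11 to <16 years", "16 to <21 years",
]
TIER2_EDGES = [0, 3, 12, 36, 144, 216]
TIER2_LABELS = [
    "0 to <3 months", "3 to <12 months", "1 to <3 years",
    "3 to <12 years", "12 to <18 years",
]


def assign_bin(age_months, edges, labels):
    """Return the label of the bin containing age_months, or None if out of range."""
    if age_months < edges[0] or age_months >= edges[-1]:
        return None
    # bisect_right gives the index of the first edge greater than the age,
    # so the containing bin is one position to the left.
    return labels[bisect_right(edges, age_months) - 1]


if __name__ == "__main__":
    for age in (0.5, 7, 30, 132, 228):
        print(age, assign_bin(age, TIER1_EDGES, TIER1_LABELS),
              assign_bin(age, TIER2_EDGES, TIER2_LABELS))
```

Expressing all edges in months keeps the half-open intervals unambiguous at boundaries such as exactly 12 months, which falls in "1 to <2 years" rather than "6 to <12 months".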

Supporting Experimental Data & Protocol: Research on manganese (Mn) exposure provides a clear example of sex- and window-specific effects. A 2022 study used laser ablation-inductively coupled plasma-mass spectrometry (LA-ICP-MS) to measure Mn concentrations in dentine, creating a retrospective biomarker of exposure during the prenatal, postnatal, and early childhood periods [5]. The participants, adolescents and young adults aged 15-23, underwent resting-state fMRI. The analysis revealed that associations between dentine Mn and functional brain connectivity differed by both the timing of exposure and the sex of the individual [5]. For instance, prenatal Mn was associated with connectivity in the dorsal striatum in males, while postnatal Mn was linked to connectivity in the cerebellum in females, demonstrating distinct critical windows [5].

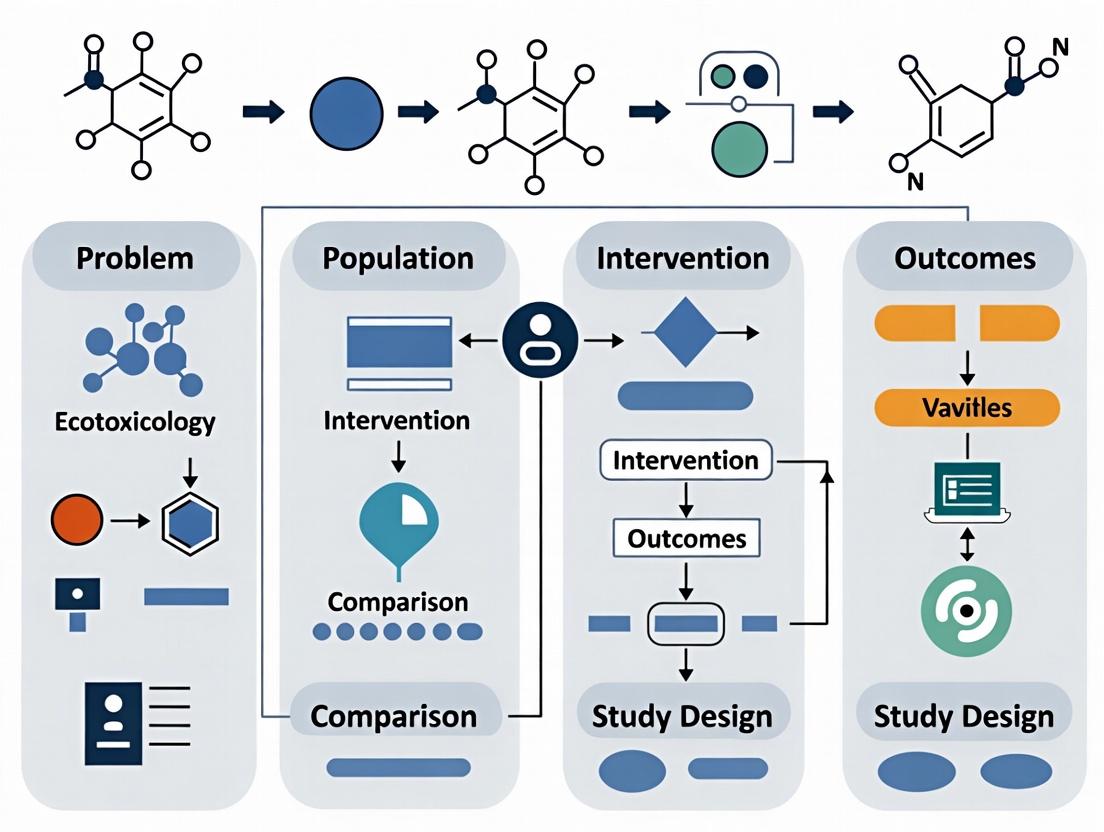

Diagram 1: Workflow for integrating developmental windows into the research lifecycle, from study design to evidence grading in systematic reviews (SR).

Comparison Guide: Methodologies for Assessing Real-World Exposures

Accurately capturing real-world exposures—which are often to low doses of multiple chemicals over variable time windows—is a paramount challenge. The choice of method directly impacts misclassification risk and the ability to detect effects [1].

Table: Comparison of Exposure Assessment Methodologies in Observational Studies

| Methodology Category | Specific Techniques | Key Advantages | Major Limitations for Reproductive Health |

|---|---|---|---|

| Environmental Monitoring | Fixed-site air/water monitors, residential modeling (e.g., land-use regression) [1]. | Objective; can provide long-term trend data; useful for community-level exposure. | May not reflect personal exposure; difficult to link to precise developmental windows (e.g., gestational trimester) [1]. |

| Personal Monitoring & Sensors | Wearable air monitors, GPS loggers, silicone wristbands. | Captures individual-level exposure across micro-environments; improving temporal resolution. | Costly and burdensome for large/longitudinal studies; data processing is complex; historical exposure cannot be measured. |

| Biomonitoring | Measuring chemicals/metabolites in blood, urine, cord blood, breast milk, or dentine [5] [3]. | Integrates all exposure routes; provides internal dose measure; suitable for chemical mixtures. | Often reflects recent exposure (except for persistent chemicals or biomarkers like dentine [5]); complex pharmacokinetics during pregnancy [3]. |

| Exposure Questionnaires & Diaries | Self-reported use of products, dietary habits, occupations, residential history. | Low cost; can capture historical data and exposure sources. | Prone to recall bias; may lack precision for quantitative risk assessment; difficult to validate. |

Supporting Experimental Data & Protocol: The Health Outcomes and Measures of the Environment (HOME) Study is a prospective birth cohort that exemplifies integrated exposure assessment. Researchers collect serial urine samples from pregnant women (e.g., at 16 and 26 weeks' gestation) to measure metabolites of phthalates, bisphenols, and other non-persistent chemicals [3]. This protocol links exposure during specific pregnancy windows to outcomes. Their findings showed that higher prenatal urinary levels of mono-benzyl phthalate were associated with an increased likelihood of maternal hypertensive disorders [3], demonstrating the power of timed biomonitoring to connect exposure windows with health effects.

Diagram 2: Proposed adaptation of the GRADE framework for reproductive environmental health, showing unique downgrade and upgrade considerations [1] [2].

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials and Reagents for Key Methodologies

| Item/Reagent | Primary Function | Application Context |

|---|---|---|

| Silicone Wristbands | Passive sampling devices that absorb a wide range of semi-volatile organic compounds (SVOCs) from the personal environment. | Personal exposure assessment to chemical mixtures (e.g., flame retardants, PAHs) in longitudinal cohort studies [3]. |

| Stable Isotope-Labeled Internal Standards | Mass spectrometry standards used for absolute quantification and to correct for matrix effects and analyte loss. | Essential for high-accuracy biomonitoring of chemical metabolites in complex biological matrices like urine or serum [3]. |

| Laser Ablation System coupled to ICP-MS | Enables precise spatial sampling of solid materials (e.g., teeth, nails) to reconstruct historical exposure timelines [5]. | Creating retrospective exposure biomarkers for metals and other elements to identify critical developmental windows of exposure [5]. |

| Multiplex Immunoassay Kits (e.g., Luminex) | Measure multiple protein biomarkers (cytokines, growth factors, hormones) from a single small-volume sample. | Assessing intermediate molecular phenotypes (e.g., placental growth factor, inflammatory markers) linking exposure to obstetric or developmental outcomes [3]. |

| Certified Reference Materials (CRMs) for Biomonitoring | Matrices (e.g., urine, serum) with certified concentrations of specific analytes, used for quality control and method validation. | Ensuring accuracy and comparability of exposure data across different laboratories and studies, crucial for evidence synthesis. |

This comparison guide objectively evaluates the frameworks and methodologies used to assess evidence within reproductive and environmental health systematic reviews. It is framed within the broader thesis that the Grading of Recommendations, Assessment, Development, and Evaluations (GRADE) framework requires strategic adaptation to address the unique methodological challenges of this field. The analysis reveals a landscape characterized by significant heterogeneity in tool application, persistent gaps in evidence, and a lack of standardized approaches for handling clinical and methodological diversity [6] [7] [8].

Comparative Analysis of Evidence Assessment and Gap Identification Frameworks

A methodological survey of systematic reviews on air pollution and reproductive/child health found that only 9.8% (18 out of 177) employed a formal system to grade the overall body of evidence [6]. Among those that did, reviewers applied 15 distinct tools to assess the internal validity (risk of bias) of individual studies and 9 different systems for grading the collective evidence, often with multiple modifications [6]. This underscores a profound lack of standardization.

The following table compares the adoption and characteristics of the most commonly cited frameworks in this domain:

Table: Adoption and Application of Key Evidence Assessment Frameworks

| Framework Name | Primary Purpose | Reported Use in Reproductive/Environmental Health Reviews [6] | Key Strengths | Noted Limitations for the Field |

|---|---|---|---|---|

| GRADE | Grading quality of evidence & strength of recommendations. | Most commonly used body-of-evidence grading system. | Systematic, transparent, widely recognized. | Default downgrading of observational evidence; not designed for lifestage-specific vulnerabilities or complex exposures [6]. |

| Newcastle-Ottawa Scale (NOS) | Assessing risk of bias in observational studies. | Most commonly used individual-study assessment tool. | Tailored for case-control and cohort studies. | Lacks explicit criteria for environmental exposure assessment or developmental windows [6]. |

| AHRQ Research Gaps Framework [9] [10] | Identifying & characterizing research gaps from systematic reviews. | Provides a structured method to define evidence shortcomings. | Leverages PICOS, integrates with evidence grading, classifies reasons for gaps. | Not a quality assessment tool; used to define future research needs. |

| PICOS Elements (Population, Intervention, Comparison, Outcomes, Study Design) | Formulating research questions & characterizing gaps. | Foundation for many gap frameworks [11] [10]. | Universal applicability, clarifies the scope of evidence. | A descriptive structure, not an evaluative or grading methodology. |

An evaluation of organizations that conduct systematic reviews found that only a minority used an explicit framework to determine research gaps, with variations of the PICO (Population, Intervention, Comparison, Outcomes) framework being the most common basis [10]. The AHRQ-endorsed framework extends PICO with a fifth element, Setting (so that, in this framework, the "S" in PICOS denotes Setting), and classifies the reason for a gap into four categories: (A) Insufficient or imprecise information; (B) Biased information; (C) Inconsistent results or unknown consistency; (D) Not the right information [9] [10]. A scoping review of 139 articles on health research gaps confirmed that knowledge synthesis (such as systematic reviews) is the most frequent method for gap identification, but standard methods for prioritizing and displaying these gaps are still lacking [8].
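
The gap characterization described above (PICOS elements plus a reason code A to D) maps naturally onto a small record type. The sketch below is illustrative only: the class and function names (`GapReason`, `ResearchGap`, `tally_reasons`) and the two example gaps are hypothetical and do not come from the AHRQ materials; only the four reason categories are taken from the text.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class GapReason(Enum):
    """Reason categories for a research gap, as listed in the text above."""
    A = "Insufficient or imprecise information"
    B = "Biased information"
    C = "Inconsistent or unknown consistency results"
    D = "Not the right information"


@dataclass(frozen=True)
class ResearchGap:
    """One gap, characterized by PICOS elements (S = Setting in this framework)."""
    population: str
    intervention: str  # in environmental health this is usually an exposure
    comparison: str
    outcomes: str
    setting: str
    reasons: tuple  # one or more GapReason members


def tally_reasons(gaps):
    """Count how often each reason code appears across a list of gaps."""
    counts = Counter()
    for gap in gaps:
        counts.update(reason.name for reason in gap.reasons)
    return dict(counts)


# Hypothetical example gaps, invented purely to show the structure.
EXAMPLE_GAPS = [
    ResearchGap("Pregnant women, first trimester", "PM2.5", "Lower exposure",
                "Preterm birth", "Urban residential", (GapReason.A, GapReason.C)),
    ResearchGap("Infants 3 to <12 months", "Phthalate mixtures", "Single phthalate",
                "Neurodevelopment", "Home", (GapReason.D,)),
]

if __name__ == "__main__":
    print(tally_reasons(EXAMPLE_GAPS))
```

Allowing several reasons per gap reflects the fact that a single question can be both imprecisely and inconsistently answered; whether a review permits multiple codes per gap is a design choice to fix in the protocol.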

Heterogeneity—the variability in study characteristics and results—is a central challenge. It can be categorized as clinical, methodological, or statistical, with the first two often driving the third [7]. In reproductive environmental health, sources of heterogeneity are particularly pronounced.

Table: Key Sources of Heterogeneity in Reproductive Environmental Health Reviews

| Heterogeneity Category | Specific Sources in Reproductive/Environmental Health | Impact on Evidence Synthesis |

|---|---|---|

| Clinical & Population Heterogeneity | Lifecourse stage (e.g., specific gestational trimester, childhood developmental window) [6]; baseline health, genetics, and comorbidities; variable exposure profiles (dose, duration, mixtures) [6]. | Challenges the pooling of studies; may obscure critical effect modifiers related to vulnerability. |

| Methodological Heterogeneity | Exposure assessment methods (e.g., monitoring data, modeling, personal sensors) [6]; outcome definitions and measurement (e.g., clinical vs. biomarker endpoints); adjustment for different sets of confounders; study design (prospective vs. retrospective cohorts). | Impairs comparability; differences in bias direction and magnitude affect confidence in a pooled estimate. |

| Intervention/Exposure Heterogeneity | Complex, real-world mixtures of pollutants versus single-agent studies [6]; differences in exposure settings (indoor/outdoor, occupational/residential). | Makes it difficult to define a single "intervention" effect, complicating translation to public health guidance. |

A systematic review of guidance on investigating clinical heterogeneity found minimal consensus but common suggestions [7]. These include pre-specifying covariates for investigation in the review protocol, ensuring covariates have a clear scientific rationale, involving clinical experts on the review team, and interpreting findings from subgroup analyses with caution as they are often exploratory [7].
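
To make the link between pre-specified covariates and statistical heterogeneity concrete, the sketch below computes Cochran's Q and the I² statistic overall and within subgroups. This is an assumption-laden illustration: the source does not prescribe a particular statistic, I² is used here only because it is a widely reported summary, the fixed-effect inverse-variance weighting is a simplification, and the study data (log risk ratios by trimester) are invented.

```python
from collections import defaultdict


def cochran_q_i2(effects, std_errors):
    """Inverse-variance pooled estimate, Cochran's Q, and I-squared (percent)."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I-squared is the share of total variation beyond chance, floored at zero.
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return {"pooled": pooled, "q": q, "df": df, "i2": i2}


def by_subgroup(studies, covariate):
    """Repeat the heterogeneity summary within each level of one covariate."""
    groups = defaultdict(list)
    for study in studies:
        groups[study[covariate]].append(study)
    return {
        level: cochran_q_i2([s["effect"] for s in members],
                            [s["se"] for s in members])
        for level, members in groups.items()
    }


# Hypothetical log risk ratios; values are made up for illustration only.
STUDIES = [
    {"effect": 0.10, "se": 0.05, "trimester": "first"},
    {"effect": 0.12, "se": 0.06, "trimester": "first"},
    {"effect": 0.35, "se": 0.07, "trimester": "third"},
    {"effect": 0.30, "se": 0.08, "trimester": "third"},
]

if __name__ == "__main__":
    overall = cochran_q_i2([s["effect"] for s in STUDIES],
                           [s["se"] for s in STUDIES])
    print("overall I2:", round(overall["i2"], 1))
    for level, result in by_subgroup(STUDIES, "trimester").items():
        print(level, "I2:", round(result["i2"], 1))
```

The covariate passed to `by_subgroup` should be one named in the review protocol before the data are seen; a drop in I² within subgroups is suggestive, not confirmatory, in line with the caution about exploratory subgroup analyses.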

Experimental Protocols and Data from Foundational Studies

Protocol 1: Methodological Survey of Evidence Grading Systems

A 2024 methodological survey evaluated how systematic reviews grade evidence on air pollution and reproductive/children's health [6].

- Objective: To identify and evaluate the frameworks used for rating the internal validity of primary studies and for grading the overall body of evidence in this specific field.

- Search & Selection: Researchers searched multiple databases for systematic reviews of observational studies on air pollution and adverse reproductive/child health outcomes published from 1995 onward. From 177 eligible reviews, they included only those (n=18) that explicitly used a published tool to rate the body of evidence [6].

- Data Extraction & Analysis: Two reviewers independently extracted data on the tools used, their application, and any modifications. They analyzed the frequency of use and assessed the tools' applicability to field-specific challenges like lifestage vulnerabilities and exposure assessment [6].

- Key Quantitative Finding: The GRADE framework was the most commonly used system for grading bodies of evidence, while the Newcastle-Ottawa Scale (NOS) was most common for individual study assessment. However, most reviews used no formal system, and applied tools were highly heterogeneous and often modified [6].

Protocol 2: Application of the AHRQ Research Gaps Framework

A 2013 evaluation applied and refined a framework for identifying research gaps from systematic reviews [9].

- Objective: To develop and evaluate a practical framework for the systematic identification and characterization of research gaps.

- Methods: The framework was applied to 50 existing systematic reviews. Concurrently, several Evidence-based Practice Centers (EPCs) used the framework during systematic reviews or Future Research Needs (FRN) projects and provided structured feedback [9].

- Characterization Process: Gaps were characterized using PICOS elements. The reason for each gap was classified as insufficient/imprecise information, biased information, inconsistency, or not the right information [9].

- Key Quantitative Finding: The application to 50 reviews identified approximately 600 unique research gaps. Feedback led to framework revisions, including guidance on handling multiple comparisons and deciding whether to limit gap analysis to questions with a formal strength-of-evidence assessment [9].

Protocol 3: Integrating Heterogeneous Evidence

A foundational 1997 paper outlined the challenge of integrating direct and indirect evidence [12].

- Core Concept: Effective integration requires explicit analytic framework models that break down a broad question into linked sub-questions, each amenable to a systematic review.

- Methodological Approach: It advocates for the use of tabular displays to summarize major findings and the strength of evidence for each sub-question, aiding in the transparent integration of different research pieces for conclusion-making [12].

Visualizing Methodological Relationships and Workflows

The following diagrams map the logical relationships between the core concepts and methodologies discussed.

GRADE Adaptation and Heterogeneity Relationship

Evidence Assessment and Gap Identification Workflow

This table details key methodological resources for researchers conducting systematic reviews in reproductive and environmental health.

Table: Research Reagent Solutions for Evidence Assessment

| Tool/Resource Name | Primary Function | Application in Reproductive Env. Health |

|---|---|---|

| GRADE Framework | Systematically rate quality of a body of evidence and strength of recommendations. | The starting point for evidence grading; requires adaptation for observational studies, lifestages, and exposure complexity [6]. |

| AHRQ Research Gaps Framework | Identify and characterize where and why evidence is insufficient to support conclusions [9] [10]. | Critical for moving from synthesis to agenda-setting. Uses PICOS to define gaps and classifies reasons (A-D), linking to grading exercises. |

| Newcastle-Ottawa Scale (NOS) | Assess risk of bias in non-randomized studies (cohort, case-control). | Commonly used for individual study assessment, but may need supplemental criteria for exposure timing and life-stage specificity [6]. |

| PICOS Worksheet | Formulate the review question and define inclusion criteria with clarity. | Foundational step for any systematic review; essential for structuring the subsequent gap analysis [11] [10]. |

| Heterogeneity Investigation Protocol | Pre-specified plan to explore clinical & methodological sources of variation [7]. | Should be included in review protocol. Involves selecting covariates with scientific rationale (e.g., trimester) and interpreting subgroup analyses cautiously. |

Why GRADE? Exploring the Framework's Core Principles and Potential for Adaptation

In reproductive and children's environmental health research—a field dedicated to understanding the impacts of chemical exposures like air pollutants and endocrine disruptors on fertility, pregnancy, and child development—translating scientific findings into protective public health policies is paramount [6]. This translation relies on systematic reviews that synthesize evidence from often complex observational studies. A 2024 methodological survey of systematic reviews on air pollution and reproductive/child health found that only 9.8% (18 out of 177 reviews) employed a formal system to grade the overall quality, or certainty, of the collective evidence [6]. The Grading of Recommendations, Assessment, Development, and Evaluations (GRADE) framework was the most commonly used system, despite not being designed specifically for this field [6]. This underscores a critical methodological gap and establishes the core thesis: while GRADE provides a foundational, transparent, and structured system for rating evidence certainty, its principled adaptation—not mere application—is essential to address the unique challenges of reproductive environmental health research.

Core Principles of GRADE: A Structured Foundation

GRADE, developed by a global working group, is more than a rating scale; it is a systematic framework for defining questions, synthesizing evidence, and moving from evidence to recommendations [13] [14]. Its core workflow is structured and transparent.

The Standard GRADE Workflow

The GRADE process begins by defining the clinical or public health question, typically using the PICO framework (Population, Intervention, Comparator, Outcome) [13]. For each critical or important outcome identified, a body of evidence is assembled from relevant studies. The framework then adjudicates the certainty of evidence for each outcome separately, acknowledging that quality can vary across outcomes within the same review [13].

A unique and foundational principle of GRADE is its initial study design hierarchy: evidence from randomized controlled trials (RCTs) starts as "high" certainty, while evidence from observational studies starts as "low" certainty [13]. This starting point is then modified by assessing factors that may decrease or increase the certainty rating.

Factors for Decreasing Certainty (Rating Down):

- Risk of Bias: Limitations in study design or execution.

- Inconsistency: Unexplained variability in results across studies.

- Indirectness: Evidence not directly comparing the populations, interventions, or outcomes of interest.

- Imprecision: Wide confidence intervals suggesting uncertainty about the effect estimate.

- Publication Bias: Systematic under- or over-publication of research findings [13] [14].

Factors for Increasing Certainty (Rating Up):

- Large Magnitude of Effect: A very large relative risk reduction or increase.

- Dose-Response Gradient: Evidence of a changing effect with changing exposure level.

- All Plausible Confounding: Certainty increases when all plausible residual confounding would be expected to reduce the demonstrated effect, meaning the observed association is more likely an underestimate than an artifact [13].

The final output is a certainty rating for each outcome—High, Moderate, Low, or Very Low—presented in standardized Evidence Profiles or Summary of Findings tables [13] [14]. For guideline developers, this evidence is then integrated with considerations of values, preferences, and resource use within Evidence-to-Decision (EtD) frameworks to formulate strong or weak recommendations [15].
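
The rating logic described above (a design-based starting level, moved down or up by explicit factors) can be sketched as simple level arithmetic. This is a deliberately simplified illustration and not the GRADE Working Group's software: real GRADE judgments are holistic rather than purely additive, the function name `rate_certainty` and the snake_case domain keys are hypothetical, and the convention that each judgment shifts the rating by 0, 1, or 2 levels is stated here as an assumption.

```python
LEVELS = ("Very Low", "Low", "Moderate", "High")

# Starting positions in LEVELS: RCTs begin at High, observational studies at Low.
START_INDEX = {"rct": 3, "observational": 1}

DOWNGRADE_DOMAINS = {
    "risk_of_bias", "inconsistency", "indirectness",
    "imprecision", "publication_bias",
}
UPGRADE_FACTORS = {"large_effect", "dose_response", "plausible_confounding"}


def rate_certainty(design, downgrades=None, upgrades=None):
    """Return the certainty label for one outcome.

    downgrades / upgrades map a domain name to the number of levels (0, 1, or 2)
    by which that judgment moves the rating.
    """
    downgrades = downgrades or {}
    upgrades = upgrades or {}
    unknown = (set(downgrades) - DOWNGRADE_DOMAINS) | (set(upgrades) - UPGRADE_FACTORS)
    if unknown:
        raise ValueError(f"Unrecognised domains: {sorted(unknown)}")
    steps = list(downgrades.values()) + list(upgrades.values())
    if any(step not in (0, 1, 2) for step in steps):
        raise ValueError("Each judgment must move the rating by 0, 1, or 2 levels")
    index = START_INDEX[design] - sum(downgrades.values()) + sum(upgrades.values())
    # Clamp to the four-level scale: ratings cannot go below Very Low or above High.
    return LEVELS[max(0, min(index, len(LEVELS) - 1))]


if __name__ == "__main__":
    print(rate_certainty("observational", upgrades={"dose_response": 1}))
    print(rate_certainty("rct", downgrades={"risk_of_bias": 1, "imprecision": 1}))
```

Because certainty is rated per outcome, a review would call such a function once for each critical or important outcome and record the judgment dictionaries alongside the result as the audit trail.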

Table 1: Core Principles and Outputs of the GRADE Framework

| Principle | Description | Key Output |

|---|---|---|

| Outcome-Centric Rating | Certainty is rated for each health outcome separately, not for a study as a whole. | Certainty rating (High to Very Low) for each pre-specified outcome. |

| Initial Design Hierarchy | RCTs start as High certainty; observational studies start as Low certainty. | A transparent starting point for all evidence assessments. |

| Structured Modification | Explicit, consistent criteria are used to rate certainty up or down from the initial level. | A documented audit trail for each judgment affecting the final certainty rating. |

| Standardized Presentation | Findings are summarized in structured tables for consistency and transparency. | Evidence Profiles and Summary of Findings tables. |

| Explicit Link to Decisions | For guidelines, evidence is integrated with other criteria in a structured framework. | Evidence-to-Decision (EtD) frameworks leading to graded recommendations [15] [13] [14]. |

Diagram 1: Standard GRADE Workflow for Evidence Certainty

Comparative Analysis: GRADE Versus Common Alternatives in Reproductive Environmental Health

The 2024 methodological survey of air pollution systematic reviews identified a highly heterogeneous landscape of evidence assessment tools [6]. While GRADE was the most common framework for grading bodies of evidence, 15 different tools were used to assess the risk of bias in individual studies, with the Newcastle-Ottawa Scale (NOS) being the most frequent [6]. This highlights a critical distinction: risk-of-bias tools evaluate individual studies, while GRADE evaluates the collective certainty of a body of evidence for a specific outcome.

Table 2: Comparison of Evidence Assessment Frameworks in Reproductive Environmental Health Systematic Reviews

| Framework (Purpose) | Key Characteristics & Application | Advantages for the Field | Limitations for the Field |

|---|---|---|---|

| GRADE (Grading certainty of a body of evidence) | Most common evidence-grading system found; structured, transparent, outcome-specific [6]. | Provides a universal language for certainty; forces explicit reasoning; links evidence to decisions [14]. | Default RCT hierarchy penalizes observational research; standard domains may not capture key field-specific biases [6]. |

| Newcastle-Ottawa Scale (NOS) (Risk of bias for individual observational studies) | Most common individual study assessment tool; assigns stars for selection, comparability, exposure/outcome [6]. | Familiar and widely used; specific to cohort/case-control studies. | Does not evaluate the body of evidence; summary score can be misleading; lacks explicit guidance on field-specific biases. |

| Ad Hoc / Modified Systems (Various purposes) | 9 distinct grading systems were identified, often with substantial modifications to established tools [6]. | Attempt to tailor criteria to the unique challenges of environmental health research. | Loss of standardization and comparability; methods often lack transparency and validation. |

| No Formal System | Majority (~90%) of identified systematic reviews used no formal evidence-grading system [6]. | -- | Severely limits objectivity, transparency, and utility for policy-making. |

The survey's finding that the majority of reviews used no formal grading system reveals a significant methodological weakness [6]. The use of numerous modified systems, while well-intentioned, creates a "Tower of Babel" effect, undermining the consistency needed for policy formulation. GRADE's structured, transparent, and widely recognized process offers a solution to this problem, but its clinical trial-centric origins necessitate adaptation to be fully fit-for-purpose in environmental health [6].

The Case for Adaptation: Addressing Unique Methodological Challenges

The direct application of standard GRADE to reproductive environmental health faces several conceptual and practical hurdles, primarily rooted in the field's reliance on observational epidemiology and its unique research questions [6].

1. The Observational Paradigm vs. The RCT Hierarchy: Environmental exposures (e.g., air pollution, endocrine-disrupting chemicals) cannot ethically be randomly assigned. The field is therefore built on observational studies. GRADE's default position of rating this evidence as "low certainty" at the outset can systematically undervalue the valid causal evidence generated by well-designed epidemiological studies [6]. In practice, this creates a ceiling for evidence certainty that may not reflect true scientific confidence.

2. Complex, Life-Stage-Specific Exposures and Vulnerabilities: Key domains for rating evidence down, such as indirectness and risk of bias, require reinterpretation. Exposure assessment (e.g., estimating personal air pollution exposure from fixed monitors) is a major source of potential misclassification, especially concerning precise developmental windows like gestational trimesters [6]. Vulnerability varies dramatically by life stage, meaning the population (P in PICO) must be precisely defined. Furthermore, health outcomes may have long latency periods, spanning decades from exposure to manifestation [6].

3. Co-Exposure to Complex Mixtures: Real-world exposure involves mixtures of chemicals, while studies often examine single pollutants. This raises questions about the indirectness of the evidence to real-world risk and the potential for synergistic effects not captured by the primary research [6].

4. The Preventive Burden of Proof: Clinical research often seeks to demonstrate a treatment's benefit. In contrast, environmental health aims to demonstrate a hazard to justify protective regulation. Some argue the burden of proof should logically differ, with a greater emphasis on using upward-rating factors like large effects or dose-response to affirm credible hazard signals from observational data [6].

These challenges are not merely theoretical. The methodological survey concluded that existing approaches were "highly heterogeneous in both their comprehensiveness and their applicability," creating an urgent need for a consistent, tailored approach [6].

Diagram 2: Core Research Challenges Driving the Need for GRADE Adaptation

Proposed Protocol for Adapting GRADE: A Principled Approach

Adaptation should not mean abandoning GRADE's rigor but rather thoughtfully contextualizing its principles. The GRADE-ADOLOPMENT model provides a formal process for adopting, adapting, or creating de novo recommendations using Evidence-to-Decision (EtD) frameworks [15]. The following protocol proposes concrete adaptations for systematic reviews in this field, based on the challenges identified above [6].

Modified Initial Rating for Well-Designed Observational Studies

- Protocol: For research questions where RCTs are not ethical or feasible, pre-specify that certain tiers of observational study design (e.g., prospective cohorts with validated exposure assessment and confounder control) may start at "Moderate" rather than "Low" certainty. This decision must be justified explicitly in the review protocol.

- Rationale: Addresses the inherent penalty against observational evidence, acknowledging that a well-conducted observational study can provide more reliable evidence for environmental questions than an infeasible or unethical RCT [6].

Field-Specific Criteria for Rating Evidence Down

- Exposure Assessment Risk of Bias: Develop a supplementary checklist to evaluate exposure measurement. Key items should include: the validity of the exposure model or metric, temporal and spatial alignment with the critical developmental window, and whether exposure misclassification is likely to be differential by outcome status.

- Indirectness due to Life Stage and Mixtures: Judge indirectness not only for population and intervention but explicitly for: 1) the relevance of the studied population or life stage (e.g., animal model, adult human) to the target life stage (e.g., fetus, child), and 2) the extrapolation from single-pollutant studies to the reality of mixture exposures [6].

Emphasis on Criteria for Rating Evidence Up

- Protocol: Apply the "Large Effect" and "Dose-Response" criteria with particular attention. In a preventive context, a large relative risk (e.g., RR > 2.0) from a well-designed observational study may be especially compelling evidence of hazard. Similarly, a clear dose-response gradient across multiple studies significantly reduces the likelihood that the association is due to residual confounding [6] [13].

- Application of "All Plausible Confounding": Systematically evaluate whether identified plausible confounding factors (e.g., socioeconomic status) would bias the effect toward or away from the null. Certainty is strengthened if the effect remains after adjustment or if confounding would likely mask, not create, the observed association.
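As a minimal sketch of how the adapted rating logic above could be recorded in a review protocol, the following Python snippet tracks a pre-specified starting level together with per-domain downgrade and upgrade judgments. The class name, the domain labels, and the simple additive arithmetic are illustrative assumptions, not part of GRADE or of the cited survey; real GRADE judgments are made by reviewers and are not mechanically additive.

```python
from dataclasses import dataclass, field

# Ordered from lowest to highest certainty, following GRADE's four levels.
LEVELS = ["Very Low", "Low", "Moderate", "High"]


@dataclass
class CertaintyJudgment:
    """Hypothetical record of the certainty rating for one outcome."""
    start: str                                      # pre-specified starting level
    downgrades: dict = field(default_factory=dict)  # domain -> levels removed
    upgrades: dict = field(default_factory=dict)    # domain -> levels added

    def final_level(self) -> str:
        # Simplifying assumption: judgments combine additively and are
        # clamped to the four-level scale.
        idx = LEVELS.index(self.start)
        idx += sum(self.upgrades.values()) - sum(self.downgrades.values())
        return LEVELS[max(0, min(idx, len(LEVELS) - 1))]


# Illustrative example: prospective cohorts pre-specified to start at
# "Moderate", downgraded once for exposure misclassification and
# upgraded once for a dose-response gradient.
judgment = CertaintyJudgment(
    start="Moderate",
    downgrades={"exposure_assessment_risk_of_bias": 1},
    upgrades={"dose_response": 1},
)
print(judgment.final_level())  # Moderate
```

Keeping the starting level as an explicit field makes any departure from the default "Low" visible in the record, which matches the requirement that the decision be justified in the protocol.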

Experimental Protocol from the Methodological Survey

The 2024 methodological survey itself provides a blueprint for evaluating evidence grading systems [6].

- Objective: To evaluate systems for grading bodies of evidence used in systematic reviews of environmental exposures and reproductive/children's health.

- Search & Selection: A comprehensive literature search for systematic reviews on air pollution and reproductive/child health outcomes (from conception to age 18) published from 1995 onward. Reviews required a reproducible search, explicit inclusion criteria, quality assessment of included studies, and—critically—an explicit tool for rating the body of evidence [6].

- Data Extraction: Two independent reviewers extracted data on the internal validity tools and evidence grading frameworks used, along with any modifications made to them.

- Analysis: A qualitative synthesis of the heterogeneity, comprehensiveness, and applicability of the identified frameworks was performed [6].

The Scientist's Toolkit: Essential Research Reagent Solutions

Conducting a systematic review with an adapted GRADE approach requires specific "methodological reagents" to ensure rigor and reproducibility.

Table 3: Essential Toolkit for GRADE-Adapted Systematic Reviews in Reproductive Environmental Health

| Tool / Resource | Function in the Review Process | Key Considerations for Adaptation |
|---|---|---|
| Pre-Registered Protocol (e.g., PROSPERO) | Defines PICO questions, search strategy, and, critically, the pre-specified plan for adapting GRADE criteria (e.g., starting certainty for observational studies). | Must explicitly justify any departures from standard GRADE based on the nature of the environmental exposure and population. |
| Specialized Search Hedges | Identifies observational studies in environmental health databases (e.g., PubMed, EMBASE, TOXLINE). | Must include terms for exposure (e.g., "phthalates," "PM2.5") and specific reproductive/developmental outcomes. |
| Risk of Bias Tool for Observational Studies (e.g., modified ROBINS-I) | Assesses internal validity of individual primary studies. | Must be supplemented with field-specific items on exposure assessment accuracy and life-stage relevance [6]. |
| GRADEpro Guideline Development Tool (GDT) | Software to create and manage Summary of Findings tables and Evidence Profiles [14]. | Used to document judgments for both standard and adapted criteria in a transparent, exportable format. |
| Evidence-to-Decision (EtD) Framework | Structures discussion from evidence to recommendation for guideline panels, considering equity, feasibility, and acceptability [15]. | For public health guidelines, must incorporate policy feasibility, regulatory context, and the precautionary principle. |

The translation of scientific evidence into effective public health policy faces particular challenges in the field of reproductive environmental health. This domain investigates the impact of chemical, physical, and biological environmental exposures on fertility, pregnancy, and child development [16]. A growing body of literature demonstrates adverse effects from exposures to substances like air pollutants, endocrine-disrupting chemicals, and heavy metals [17] [16]. However, the pathway from identifying a hazard to implementing protective policy is hindered by the inherent complexity of the evidence.

Research in this field is predominantly observational, as randomized controlled trials (RCTs) of harmful exposures are unethical [6]. This necessitates specialized methods for evaluating evidence strength and addressing biases like confounding and exposure misclassification. Furthermore, vulnerabilities are life-stage-specific, with exposures during critical developmental windows having potentially profound and long-lasting effects that differ from adult exposures [6]. The real-world context also involves complex mixtures of exposures, whereas research often studies single agents [6].

These complexities create a significant barrier for decision-makers. Physicians report a lack of clear, evidence-based information as a key reason for not counseling patients on environmental risks [16]. Policy-makers require a transparent, standardized, and credible summary of the science to justify regulatory action. This is where systematic reviews (SRs) and the frameworks used to grade the certainty of their evidence become critical. This article examines the performance of different evidence synthesis and grading methodologies, arguing that the adaptation of the Grading of Recommendations, Assessment, Development, and Evaluations (GRADE) framework is essential for strengthening policy translation in reproductive environmental health [6].

Performance Comparison: Evidence Grading Frameworks in Practice

A methodological survey of systematic reviews on air pollution and reproductive/child health reveals a fragmented landscape of evidence assessment tools [6]. Among 177 identified SRs, only 18 (9.8%) used a formal system to rate the overall body of evidence. These reviews employed 15 different tools for assessing individual study risk of bias and 9 distinct systems for grading the collective evidence [6].

Table 1: Comparison of Common Evidence Grading Frameworks Applied in Reproductive Environmental Health

| Framework | Primary Origin/Design | Key Strengths for Environmental Health | Key Limitations for Environmental Health | Typical Evidence Output/Rating |
|---|---|---|---|---|
| GRADE | Clinical medicine (interventions) | Systematic, transparent process. Explicit criteria for upgrading/downgrading evidence. Widely recognized [18]. | Default downgrading of observational evidence. Requires adaptation for exposure timing, co-exposures, and life-stage vulnerability [6]. | High, Moderate, Low, Very Low certainty of evidence. |
| Navigation Guide | Adapted from GRADE for environmental health | Specifically designed for environmental exposure questions. Integrates human and animal evidence streams [19]. | Less established than GRADE. Can be resource-intensive to apply fully. | High, Moderate, Low, Very Low certainty (similar to GRADE). |
| IARC Monographs | Carcinogen hazard identification | Rigorous, internationally respected process for hazard ID. Expert judgment integrated with mechanistic data. | Focused solely on carcinogenicity, not other health endpoints. Process is lengthy and not easily applied to individual SRs. | Carcinogenic to humans (Group 1), Probably carcinogenic (Group 2A), etc. |
| OHAT (Office of Health Assessment and Translation) | Evolved from NTP-CERHR; for environmental chemicals | Tailored for evaluating environmental substances. Clear protocol for integrating human and animal evidence [19]. | Like GRADE, may start with a presumption against observational studies. | High, Moderate, Low, or Very Low level of evidence. |

The Newcastle-Ottawa Scale (NOS) for cohort/case-control studies and the GRADE framework were the most commonly used tools for individual studies and bodies of evidence, respectively, despite not being designed specifically for this field [6]. This adoption highlights a demand for structure but also indicates a need for adaptation. The table above summarizes the performance characteristics of key frameworks as applied in recent SRs [6] [19].

Reviews using these frameworks often reached nuanced conclusions that directly inform policy readiness. For instance, an SR on air pollution and autism spectrum disorder (ASD) using the Navigation Guide (a GRADE adaptation) found "moderate" quality evidence for an association with PM2.5, justifying a higher level of concern for policy action [19]. In contrast, an SR on the same topic using a modified IARC approach found only "limited" or "inadequate" evidence for most associations, suggesting more research is needed before regulation [19]. These divergent conclusions from similar evidence bases underscore how the choice and application of the grading framework critically influence the policy message.

Detailed Experimental Protocols: From Biomarker Discovery to Meta-Analysis

Translating evidence into policy relies on a chain of rigorous research methodologies. Below are detailed protocols for two critical types of studies that feed into systematic reviews: exposure biomonitoring (generating primary evidence) and meta-analysis (synthesizing that evidence).

3.1 Protocol for Suspect Screening of Chemicals in Maternal-Cord Blood Pairs

This protocol is designed to identify and prioritize unknown or unexpected chemical exposures during pregnancy, a key data gap in environmental health [20].

- Objective: To perform a non-targeted analysis of paired maternal and cord serum samples to screen for ~3,500 industrial chemicals, confirm their presence, and prioritize those demonstrating ubiquitous exposure or maternal-fetal transfer.

- Sample Collection: Paired maternal blood (collected during labor) and umbilical cord blood (collected after delivery) samples are drawn into serum separator tubes. Samples are centrifuged, and serum is aliquoted and stored at -80°C until analysis [20].

- Chemical Analysis (LC-QTOF/MS):

  - Extraction: 250 µL of serum undergoes protein precipitation with methanol.

  - Instrumentation: Extract is analyzed using an Agilent 1290 UPLC coupled to an Agilent 6550 Quadrupole Time-of-Flight Tandem Mass Spectrometer (QTOF/MS).

  - Chromatography: Separation is achieved on an Agilent Eclipse Plus C18 column (2.1 x 100 mm, 1.8 µm) with a gradient of water and methanol (both with modifiers).

  - Mass Spectrometry: Data is acquired in both positive and negative electrospray ionization (ESI) modes to capture a broad range of chemicals [20].

- Data Processing & Prioritization:

  - Suspect Screening: Acquired mass spectra are matched against a custom database of ~3,500 chemicals using Agilent MassHunter Personal Compound Database and Library (PCDL) software.

  - Prioritization: Detected "suspect" features are filtered and ranked based on: a) detection frequency (>50% of samples), b) correlation in intensity between maternal and cord pairs, and c) intensity differences across demographic groups.

  - Confirmation: Top-priority suspects undergo MS/MS fragmentation. Their spectra are matched against commercial reference standards or spectral libraries for tentative or confirmed identification [20].
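The prioritization step above can be sketched in a few lines of Python. This is an illustrative simplification rather than the cited study's pipeline: it assumes a feature counts as "detected" in a sample when its intensity is greater than zero, it applies only criteria (a) and (b), and the function names and toy intensities are invented for the example.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either series has no variance."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def prioritize(features, min_detect_freq=0.5):
    """Filter and rank suspect features.

    features maps a feature id to a list of (maternal, cord) intensity
    pairs, one per mother-infant pair. A zero intensity is treated as a
    non-detect (an assumption made for this sketch).
    """
    ranked = []
    for feature_id, pairs in features.items():
        intensities = [v for pair in pairs for v in pair]
        detect_freq = sum(v > 0 for v in intensities) / len(intensities)
        if detect_freq <= min_detect_freq:  # criterion (a): >50% of samples
            continue
        maternal = [m for m, _ in pairs]
        cord = [c for _, c in pairs]
        r = pearson(maternal, cord)         # criterion (b): pair correlation
        ranked.append((feature_id, detect_freq, r))
    # Highest maternal-cord correlation first.
    return sorted(ranked, key=lambda item: item[2], reverse=True)


# Toy data: three hypothetical features measured in four maternal-cord pairs.
toy = {
    "feat_A": [(10, 8), (20, 15), (30, 28), (0, 0)],
    "feat_B": [(5, 0), (0, 0), (0, 0), (0, 3)],
    "feat_C": [(10, 30), (20, 20), (30, 10), (40, 5)],
}
for feature_id, freq, r in prioritize(toy):
    print(feature_id, round(freq, 2), round(r, 2))
```

In this toy input, `feat_B` is dropped by the detection-frequency filter, and `feat_A` ranks above `feat_C` because its maternal and cord intensities rise together.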

3.2 Protocol for Conducting a Meta-Analysis on Micro-pollutants and Reproductive Outcomes

This protocol outlines the quantitative synthesis of epidemiological data, a cornerstone of systematic reviews [17].

- Objective: To quantitatively synthesize effect estimates from observational studies examining the association between micro-pollutant exposure (e.g., PM2.5, heavy metals) and specific reproductive outcomes (e.g., preterm birth, reduced sperm concentration).

- Search Strategy: A systematic search of databases (PubMed, Scopus, Web of Science, Embase) is performed using controlled vocabulary and keywords for exposure and outcome. The search strategy is documented with dates and full strings for reproducibility [17].

- Study Selection & Data Extraction: Two reviewers independently screen titles/abstracts and full texts against pre-defined PECO(S) criteria (Population, Exposure, Comparator, Outcome, Study design). Data extracted include: author/year, study design, population characteristics, exposure assessment method, outcome definition, effect estimate (e.g., odds ratio, hazard ratio), 95% confidence interval, and adjusted covariates.

- Statistical Analysis:

  - Effect Measure Pooling: Where studies are sufficiently homogeneous, pooled effect estimates (e.g., Summary Odds Ratios) are calculated using inverse-variance weighted random-effects models (e.g., DerSimonian and Laird method) to account for between-study heterogeneity.

  - Heterogeneity Assessment: Statistical heterogeneity is quantified using the I² statistic (I² > 50% considered substantial).

  - Subgroup & Sensitivity Analysis: Pre-specified analyses are conducted to explore sources of heterogeneity (e.g., by study design, geographic region, exposure assessment method). Influence analysis examines if results are driven by any single study.

  - Publication Bias: Funnel plots and Egger's regression test are used to assess potential small-study effects [17].
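To make the pooling and heterogeneity steps concrete, the sketch below implements a DerSimonian and Laird random-effects model and the I² statistic using only the Python standard library. It assumes each study reports a ratio measure (OR, RR, or HR) with a 95% confidence interval that is symmetric on the log scale; the function name and the example estimates are hypothetical. Subgroup analysis and Egger's test are not covered here.

```python
from math import exp, log, sqrt

Z_95 = 1.96  # normal quantile for a two-sided 95% interval


def dersimonian_laird(estimates, ci_lows, ci_highs):
    """Pool ratio measures with a DerSimonian-Laird random-effects model."""
    y = [log(e) for e in estimates]
    # Standard error recovered from the CI width on the log scale.
    se = [(log(hi) - log(lo)) / (2 * Z_95) for lo, hi in zip(ci_lows, ci_highs)]

    # Fixed-effect (inverse-variance) weights and pooled estimate.
    w = [1 / s ** 2 for s in se]
    sum_w = sum(w)
    y_fixed = sum(wi * yi for wi, yi in zip(w, y)) / sum_w

    # Cochran's Q and the method-of-moments between-study variance (tau^2).
    q = sum(wi * (yi - y_fixed) ** 2 for wi, yi in zip(w, y))
    df = len(y) - 1
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0

    # I^2: share of total variability attributed to heterogeneity, in percent.
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0

    # Random-effects weights add tau^2 to each study's variance.
    w_re = [1 / (s ** 2 + tau2) for s in se]
    sum_w_re = sum(w_re)
    y_re = sum(wi * yi for wi, yi in zip(w_re, y)) / sum_w_re
    se_re = sqrt(1 / sum_w_re)

    return {
        "pooled": exp(y_re),
        "ci": (exp(y_re - Z_95 * se_re), exp(y_re + Z_95 * se_re)),
        "tau2": tau2,
        "I2": i2,
    }


# Hypothetical odds ratios for preterm birth from three cohort studies.
result = dersimonian_laird(
    estimates=[1.10, 1.25, 1.05],
    ci_lows=[1.02, 1.05, 0.95],
    ci_highs=[1.19, 1.49, 1.16],
)
print(result)
```

An I² above 50% from such a calculation would fall under the "substantial" threshold named above and would motivate the pre-specified subgroup and sensitivity analyses.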

Visualizing Systematic Review and Policy Translation Workflows

Systematic Review and Multilevel Policy Translation Pathways

The diagram above illustrates two interconnected pathways. The top cluster depicts the technical systematic review workflow, culminating in a graded evidence statement. This evidence then feeds into the bottom cluster, the multilevel policy translation process, which is non-linear and involves distinct "logics" at each level [21]. Political and administrative logics dominate the macro (national) and meso (regional) levels, where evidence is adopted and adapted into guidelines. The micro (clinical) level is governed by professional logic, where guidelines are ultimately implemented or modified in practice. Successful translation requires negotiation and feedback across all levels and logics [21].

Conducting high-quality systematic reviews and primary studies in reproductive environmental health requires specialized tools. The table below details key resources for exposure assessment, evidence synthesis, and hazard identification.

Table 2: Research Reagent Solutions for Reproductive Environmental Health Systematic Reviews

| Tool/Resource Name | Type/Category | Primary Function in Research | Relevance to Policy Translation |
|---|---|---|---|
| Liquid Chromatography-Quadrupole Time-of-Flight Mass Spectrometry (LC-QTOF/MS) | Analytical Instrumentation | Enables non-targeted "suspect screening" for thousands of chemicals in biological samples (e.g., serum), identifying unknown exposures [20]. | Generates data on emerging contaminants and exposure mixtures, informing priority-setting for future regulation. |
| GRADEpro GDT Software | Evidence Synthesis Software | Facilitates the creation of Summary of Findings (SoF) tables and guides the systematic application of GRADE criteria for rating evidence certainty. | Produces transparent, standardized evidence summaries that are the direct input for guideline development bodies. |
| Cochrane Risk Of Bias In Non-randomized Studies of Interventions (ROBINS-I) | Methodological Tool | Assesses risk of bias in observational studies across seven domains, providing a structured judgment of study limitations [6]. | Critical for justifying the downgrading of evidence certainty in GRADE due to study design limitations, adding rigor to reviews. |
| EPA CompTox Chemicals Dashboard | Chemical Database | A curated database with physicochemical properties, hazard data, and exposure information for ~900,000 chemicals. | Used to identify chemicals for suspect screening databases and to contextualize the potential risks of detected compounds [20]. |
| International Federation of Gynecology and Obstetrics (FIGO) Opinion on Chemical Exposure | Clinical Guidance | A consensus document summarizing evidence and recommending actions for healthcare providers on reproductive environmental health [16]. | Serves as a bridge between evidence synthesis and clinical practice, translating science into actionable advice for practitioners. |

The effective translation of environmental health evidence into protective policy is a critical public health imperative. Systematic reviews are the indispensable engine of this translation, but their output is only as robust as the methodologies they employ. The current landscape, characterized by a proliferation of ad hoc and unadapted grading tools, leads to inconsistent and sometimes unreliable policy messages [6].

The path forward requires the widespread adoption and field-specific adaptation of rigorous frameworks like GRADE. Adaptations must account for the unique challenges of observational environmental research, such as life-stage susceptibility, complex exposure windows, and mixed exposures [6]. Furthermore, the translation process itself must be recognized as a multi-level, iterative endeavor involving political, administrative, and professional actors [21]. By standardizing the synthesis of evidence through robust methodologies and understanding the pathways of its translation, researchers can provide the clear, credible, and actionable science necessary to inform policies that protect reproductive health across generations.

A Stepwise Guide to Applying and Adapting GRADE for Reproductive Health Reviews

A clearly framed research question establishes the structure and delineates the approach for defining objectives, conducting systematic reviews, and developing public health guidance [22]. In environmental health, the PECO framework (Population, Exposure, Comparator, Outcome) serves as the foundational pillar for formulating such questions, particularly when assessing associations between exposures and health outcomes [22] [23]. This framework is instrumental in translating observational research into policy, a process that critically depends on a valid and transparent assessment of the evidence [6].

This framing matters for the broader effort to adapt the Grading of Recommendations, Assessment, Development, and Evaluations (GRADE) framework for systematic reviews in reproductive and children's environmental health. While GRADE is integral to evidence grading, its conventional application favors randomized controlled trials (RCTs) and faces significant challenges in environmental health contexts [6]. These contexts are predominantly observational, involve complex exposures, and focus on protecting vulnerable populations, such as pregnant persons and children, from harms rather than testing clinical interventions for benefit [6]. Consequently, framing the initial PECO question well is the essential first step, because it directly shapes how evidence grading methodologies are subsequently adapted to be fit-for-purpose in this specialized field.

Methodology: Identifying and Evaluating Frameworks

This comparative guide synthesizes information from a systematic evaluation of methodological frameworks. The core analysis draws on two primary sources: a seminal framework for formulating PECO questions [22] and a 2024 methodological survey evaluating systems for grading bodies of evidence in systematic reviews of environmental exposures and reproductive/children's health [6].

The survey [6] employed a rigorous systematic review methodology, adhering to the Preferred Reporting Items for Overviews of Reviews (PRIOR) guidelines. It comprehensively searched for and assessed systematic reviews on air pollution and reproductive/child health to evaluate the frameworks used for rating internal validity and grading bodies of evidence. The survey's inclusion criteria were strictly defined, considering human populations from conception to age 18, exposures to air pollutants, adverse health outcomes, and only systematic reviews that explicitly used a published tool for rating the body of evidence [6]. This methodological rigor provides a current and evidence-based assessment of the state of practice, revealing that only 18 out of 177 (9.8%) identified systematic reviews used formal evidence grading systems, with high heterogeneity in the tools applied [6].

The comparative analysis focuses on the alignment between the PECO question framework and the subsequent stages of evidence synthesis and grading, with constant reference to the specific challenges of reproductive environmental health.

Comparative Analysis: PECO Frameworks and Evidence Grading Systems

The PECO framework is not a one-size-fits-all tool; its application varies based on the research context and the existing knowledge about an exposure-outcome relationship [22]. The following table outlines five paradigmatic scenarios for formulating PECO questions, ranging from initial exploration to decision-informing analysis.

Table 1: Scenarios for Formulating PECO Questions in Environmental Health [22]

| Scenario | Systematic Review or Research Context | Approach to Exposure & Comparator | PECO Example (Hearing Impairment) |
|---|---|---|---|
| 1 | Calculate the health effect; describe dose-response. | Explore the shape of the exposure-outcome relationship. | Among newborns, what is the incremental effect of a 10 dB increase in gestational noise exposure on postnatal hearing impairment? |
| 2 | Evaluate effect of an exposure cut-off, informed by review data. | Use cut-offs (e.g., tertiles) based on distributions in identified studies. | Among newborns, what is the effect of the highest vs. lowest dB exposure during pregnancy on postnatal hearing impairment? |
| 3 | Evaluate association between known exposure cut-offs. | Use cut-offs identified from external or other populations. | Among pilots, what is the effect of occupational noise exposure vs. noise in other occupations on hearing impairment? |
| 4 | Identify an exposure cut-off that ameliorates health effects. | Use existing exposure cut-offs linked to known health outcomes. | Among workers, what is the effect of exposure to <80 dB vs. ≥80 dB on hearing impairment? |
| 5 | Evaluate effect of an achievable intervention cut-off. | Select comparator based on cut-offs achievable through an intervention. | Among the public, what is the effect of an intervention reducing noise by 20 dB vs. no intervention on hearing impairment? |

The choice of PECO scenario directly influences the evidence grading process. The methodological survey [6] found that the Newcastle Ottawa Scale (NOS) and the GRADE framework were the most commonly used tools for rating individual studies and bodies of evidence, respectively. However, neither was developed specifically for environmental health, leading to widespread modifications and highlighting a critical methodological gap.

The table below compares standard application with the necessary adaptations for reproductive environmental health.

Table 2: Comparison of Standard vs. Adapted Evidence Assessment for Reproductive Environmental Health

| Assessment Domain | Standard/Clinical Application | Adaptation for Reproductive Environmental Health | Rationale and Challenge |
|---|---|---|---|
| Study Design Hierarchy | RCTs are ranked highest; observational studies are downgraded. | The default downgrading of observational evidence is challenged [6]. | RCTs are often unethical for harmful exposures. High-quality observational studies (e.g., cohorts) may provide the best available evidence. |
| Risk of Bias/Confounding | Focus on randomization, allocation concealment, blinding. | Must assess spatial vs. temporal comparators, exposure misclassification, lifecourse confounding [6]. | Exposures are not assigned; confounding control is complex. Exposure assessment timing relative to developmental windows is critical [6]. |
| Directness (Population) | Patients with a specific condition. | Must consider vulnerabilities of pregnant persons, fetuses, and children: metabolic rates, detoxification processes, windows of susceptibility [6]. | Physiological differences drastically alter toxicity. Evidence from general adult populations may not be direct. |
| Exposure Assessment | Precise dose of a drug or intervention. | Graded based on methods to quantify complex, real-world exposures (e.g., personal monitors, models), and mixtures [6]. | Exposure misclassification is a major bias. Co-exposure to pollutant mixtures is the norm but hard to model [6]. |
| Outcome Measurement | Clinical endpoints (e.g., mortality, disease incidence). | Includes subtle endpoints like fetal growth reduction, neurodevelopmental scores, and pubertal timing. | Outcomes may have long latency. Measures must be sensitive to developmental disruption. |
| Burden of Proof | Demonstrate a treatment effect (benefit). | Often concerned with demonstrating an adverse effect for harm/safety assessment [6]. | Philosophically different: protecting health vs. improving it. May require equivalence testing to demonstrate "no harm" [6]. |

Experimental Protocols: Methodological Approaches from Systematic Reviews

Protocol 1: Systematic Review with PECO Framework Application

This protocol is derived from the foundational PECO framework article [22].

- Question Formulation: Define the PECO elements precisely. For example: P: pregnant women; E: exposure to ambient PM2.5; C: an incremental increase of 10 μg/m³ in PM2.5; O: incidence of preterm birth [22].

- Scenario Selection: Choose the appropriate PECO scenario from Table 1. An initial review might use Scenario 1 to explore the dose-response relationship.

- Search Strategy: Design a reproducible search for multiple databases using controlled vocabulary and keywords for population, exposure, and outcome.

- Study Selection & Data Extraction: Two independent reviewers screen titles/abstracts and full texts based on PECO-defined eligibility. Data on study design, population characteristics, exposure metrics, outcome measures, effect estimates, and confounders are extracted.

- Evidence Synthesis: For Scenario 1, meta-analysis might model the linear effect per exposure increment. For Scenario 4, studies would be grouped by a specific cut-off (e.g., PM2.5 ≥ WHO guideline value).
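For a Scenario 1 synthesis, studies often report effects per different exposure increments, so estimates need to be placed on a common scale before pooling. The sketch below records the example PECO question and rescales a ratio measure to a 10 μg/m³ increment. It assumes a log-linear exposure-response relationship, which is a modeling choice and not something the PECO framework prescribes; the class, the function name, and the study values are hypothetical.

```python
from dataclasses import dataclass
from math import exp, log


@dataclass(frozen=True)
class PECO:
    """Container for the four elements of a PECO question."""
    population: str
    exposure: str
    comparator: str
    outcome: str


def rescale_ratio(estimate, ci_low, ci_high, reported_increment,
                  target_increment=10.0):
    """Re-express a ratio measure per target_increment of exposure.

    Assumes log-linearity: ratio_target = ratio_reported ** (target / reported).
    """
    factor = target_increment / reported_increment
    return tuple(exp(log(v) * factor) for v in (estimate, ci_low, ci_high))


question = PECO(
    population="Pregnant women",
    exposure="Ambient PM2.5",
    comparator="Incremental increase of 10 ug/m3 PM2.5",
    outcome="Incidence of preterm birth",
)

# Hypothetical study reporting OR 1.05 (95% CI 1.01 to 1.09) per 5 ug/m3.
or_10, low_10, high_10 = rescale_ratio(1.05, 1.01, 1.09, reported_increment=5.0)
print(question.outcome, round(or_10, 4), round(low_10, 4), round(high_10, 4))
```

Recording the increment used by each study at the data-extraction stage makes this harmonization step reproducible.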

Protocol 2: Methodological Survey of Evidence Grading Systems

This protocol is based on the 2024 survey that evaluated grading systems [6].

- Eligibility Criteria: Define the unit of analysis (systematic reviews). Set inclusion criteria for population (human, reproductive/child health), exposure (specific, e.g., air pollutants), outcome (adverse health), and requirement for formal evidence grading tool use [6].

- Search Strategy: Conduct a comprehensive search in biomedical databases for systematic reviews meeting the criteria, with no language restriction but within a defined timeframe (e.g., 1995 onward) [6].

- Screening & Data Extraction: Two reviewers independently screen and extract data using a piloted form. Key data include: the grading framework used (e.g., GRADE, NOS), any modifications made to it, and how domains like exposure assessment or confounding were addressed [6].

- Analysis: Quantify the proportion of reviews using formal grading. Catalog and categorize the distinct tools and modifications. Thematically analyze the reported challenges and adaptations related to environmental health specifics [6].
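The quantitative part of the analysis step can be sketched as a simple tally over the extracted records. The record fields (`grading_tool`, `modified`) and the five toy reviews below are invented for illustration and do not reproduce the survey's extraction form or its data.

```python
from collections import Counter


def summarize_grading(reviews):
    """Summarize how many reviews used a formal grading tool, and which.

    Each review is a dict with an optional "grading_tool" (None if no
    formal system was used) and an optional boolean "modified".
    """
    total = len(reviews)
    graded = [r for r in reviews if r.get("grading_tool")]
    return {
        "n_total": total,
        "n_graded": len(graded),
        "proportion_graded": len(graded) / total if total else 0.0,
        "tool_counts": Counter(r["grading_tool"] for r in graded),
        "n_modified": sum(1 for r in graded if r.get("modified")),
    }


# Toy extraction records (hypothetical).
records = [
    {"id": "SR1", "grading_tool": "GRADE", "modified": True},
    {"id": "SR2", "grading_tool": None},
    {"id": "SR3", "grading_tool": "Navigation Guide", "modified": False},
    {"id": "SR4", "grading_tool": None},
    {"id": "SR5", "grading_tool": None},
]
summary = summarize_grading(records)
print(summary["n_graded"], summary["proportion_graded"])  # 2 0.4
```

The thematic analysis of reported challenges remains a qualitative task and is not captured by a tally of this kind.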

Visualizing the Systematic Review Workflow and Evidence Assessment

The following diagram illustrates the integrated workflow from PECO question formulation through to adapted evidence grading, highlighting critical decision points specific to reproductive environmental health.

Systematic Review Workflow with Environmental Health Adaptations

The assessment of exposure is a central challenge in environmental health that influences multiple stages of the review process, from PECO formulation to final grading.

Exposure Assessment Pathway and Methodological Challenges

Table 3: Essential Methodological Resources for PECO-Based Systematic Reviews

| Tool/Resource | Type | Primary Function in Review | Key Consideration for Reproductive Environmental Health |
|---|---|---|---|
| PECO Framework [22] | Question Formulation Tool | Provides structure for the initial research question using Population, Exposure, Comparator, Outcome. | Guides precise definition of vulnerable populations (P) and complex exposures (E). Scenarios inform analysis approach. |
| GRADE Framework [6] | Evidence Grading System | Rates confidence in a body of evidence across domains (risk of bias, consistency, directness, etc.). | Requires adaptation (Table 2). Default downgrading of observational studies is often inappropriate. |
| ROBINS-E (Risk Of Bias In Non-randomized Studies - of Exposures) | Risk of Bias Tool | Assesses bias in observational exposure studies across seven domains. | Specifically designed for environmental exposures; more fit-for-purpose than generic tools. |
| Newcastle-Ottawa Scale (NOS) [6] | Study Quality Assessment Tool | Assesses quality of case-control and cohort studies based on selection, comparability, and exposure/outcome. | Commonly used but lacks specific items for exposure misclassification or developmental timing. |
| Navigation Guide [22] | Systematic Review Methodology | A rigorous, stepwise method for translating environmental health science into evidence-based conclusions. | Explicitly incorporates PECO and integrates human and non-human evidence. |
| CERQual (Confidence in the Evidence from Reviews of Qualitative research) | Qualitative Evidence Grading | Assesses confidence in findings from qualitative evidence syntheses. | Useful for reviewing implementation or acceptability of interventions (e.g., in sexual and reproductive health (SRH) service delivery [24]). |

Framing a precise research question using the PECO framework is the critical first step in generating reliable evidence for reproductive environmental health. The five PECO scenarios offer a structured approach tailored to different stages of knowledge and decision-making contexts [22]. However, recent methodological research shows that the subsequent step of grading that evidence is hampered when tools such as GRADE, which were designed for clinical interventions, are applied without modification [6]. The path forward requires deliberate and transparent adaptation of these evidence grading systems. Adaptations must account for the primacy of observational evidence, the unique vulnerabilities of developmental life stages, the complexity of real-world exposure assessment, and the fundamental shift in the burden of proof from demonstrating benefit to preventing harm [6]. A research question carefully framed with PECO, followed by an evidence assessment adapted to the realities of environmental exposure science, forms the foundation for systematic reviews that can effectively inform protective public health policies.

Within the critical field of reproductive environmental health, systematic reviews are essential for translating research into protective public health policies [6]. A foundational step in this process is the assessment of risk of bias (RoB) in individual observational studies, which evaluates the internal validity and potential for systematic error in their results [25] [26]. This assessment directly informs the Grading of Recommendations, Assessment, Development, and Evaluations (GRADE) framework, which determines the overall certainty of a body of evidence [6] [27]. However, the unique methodological challenges of environmental exposure studies—such as uncontrolled exposures, critical developmental windows of susceptibility, and complex confounding—necessitate tailored RoB tools [6]. This guide objectively compares the performance of available RoB instruments for observational environmental data, providing a foundation for their application within GRADE-adapted systematic reviews for reproductive and children's health.

Comparison of Risk-of-Bias Tools for Observational Exposure Studies

The selection of an RoB tool significantly influences the outcome and credibility of a systematic review. The table below compares the core characteristics, advantages, and limitations of the primary tools discussed in the literature.

Table: Comparison of Primary Risk-of-Bias Assessment Tools for Observational Environmental Exposure Studies

| Tool Name | Core Approach & Domains | Key Advantages | Documented Limitations & Practical Challenges |
|---|---|---|---|
| ROBINS-E [25] [28] | Adapted from ROBINS-I; assesses bias via comparison to a hypothetical "target" RCT. Domains: Confounding, Selection, Exposure Classification, Departures from Exposure, Missing Data, Outcome Measurement, Selective Reporting. | Provides a structured, domain-based framework. Integrates theoretically with GRADE by allowing studies to start at a "high" certainty rating. | Conceptual mismatch: Ideal RCT is an unrealistic comparator for environmental exposures [25] [6]. Complex & time-consuming: Users report confusion and lengthy assessments [25]. Limited discrimination: Poor at differentiating between single and multiple biases and assessing confounding bias [25]. |
| Newcastle-Ottawa Scale (NOS) [6] [19] | A star-based scoring system for cohort/case-control studies. Domains: Selection, Comparability, Exposure/Outcome. | Simple, familiar, and widely used. Provides a quick, summary score. | Lacks transparency: Summary score obscures specific biases [6]. Not designed for GRADE: Scores do not map clearly to criteria for upgrading/downgrading evidence certainty. Susceptible to subjective scoring. |
| OHAT / Navigation Guide Framework [19] [27] | Tailored for environmental health. Assesses specified RoB domains (e.g., confounding, exposure assessment) and other GRADE factors (e.g., indirectness, imprecision). | Purpose-built for environmental exposures. Promotes transparency by separating RoB from other study quality considerations. | Heterogeneity in application: Multiple modified versions exist, reducing standardization [6] [19]. Requires significant reviewer judgment to implement. |
| GRADE Framework for RoB | Within GRADE, RoB is one of five domains for rating down evidence certainty. Specific criteria for rating observational studies are under development. | Directly integrated into the evidence certainty rating. Flexible, can incorporate insights from other tools. | Non-prescriptive: Does not mandate a specific RoB tool, leading to inconsistency [6]. Default starting point for observational studies is "low certainty," which may be overly penalizing [6] [28]. |

Performance Analysis Based on Experimental Data

Empirical evaluations of these tools, particularly ROBINS-E, reveal critical insights into their performance and usability in real-world systematic reviews.

Table: Summary of Experimental Findings from ROBINS-E Application Studies

| Study Focus | Methodology | Key Findings on Tool Performance | Implications for Reproductive Health Reviews |
|---|---|---|---|
| Large-Scale User Evaluation [25] | Application of ROBINS-E to 74 exposure studies (diet, drugs, environment) by 12 researchers. Collection of structured written and verbal feedback. | Low Practicality: 66% of users reported the tool was "time-consuming and confusing." Limited Validity: Failed to adequately assess key biases like confounding from unmeasured co-exposures. Poor Discriminatory Power: Could not reliably differentiate between moderate and high risk of bias studies. | Highlights the risk of inefficient and inconsistent RoB assessments in complex reviews of exposures like air pollution or endocrine disruptors, where co-exposures are prevalent [6]. |
| Methodological Survey of Air Pollution Reviews [6] | Assessment of 177 systematic reviews on air pollution and reproductive/child health to identify frameworks used for rating evidence. | Tool Fragmentation: 15 distinct RoB tools were identified across the reviews. Low Adoption of Formal Grading: Only 9.8% of reviews used a formal system to rate the overall body of evidence. Dominance of Generic Tools: NOS and GRADE were most common but are not tailored to environmental health. | Demonstrates a severe lack of methodological standardization in the field, compromising the consistency and comparability of conclusions across reviews on critical pregnancy and childhood outcomes. |
| Case Study: Heat Exposure & Maternal Health [29] | A systematic review of 198 studies on heat and maternal/neonatal outcomes, employing meta-analysis and evidence grading. | Tool Adaptation Necessity: The review required bespoke consideration of exposure timing (e.g., trimester-specific windows) and exposure assessment quality, challenges not fully addressed by generic tools. Heterogeneity Challenge: Highlighted significant variation in exposure metrics and study design as a major limitation. | Illustrates that even high-quality reviews must go beyond standard RoB checklists to appraise domain-specific issues like developmental windows of susceptibility and exposure misclassification [6]. |

Detailed Experimental Protocols

To ensure reproducibility and transparent reporting, the following outlines the key methodological protocols derived from the evaluated studies.

Protocol 1: Evaluating a Risk-of-Bias Tool (ROBINS-E)

This protocol is based on the empirical evaluation detailed in [25].

- Team Assembly: Convene a multidisciplinary team of reviewers (epidemiologists, environmental health scientists, systematic review methodologists). Team members should have varying levels of experience.
- Pilot Training & Guidance Development:
  - Select a small sample (e.g., 3-5) of diverse observational exposure studies.
  - All reviewers independently apply the RoB tool to each study.
  - Hold a consensus meeting to resolve discrepancies, document interpretations, and develop supplemental guidance for ambiguous signaling questions.
- Full-Scale Application:
  - Apply the tool to a larger set of studies (e.g., >50) from ongoing systematic reviews.
  - Each study should be assessed independently by at least two reviewers.
  - Use a pre-defined method (e.g., consensus discussion, third-party adjudicator) to resolve disagreements.
- Data Collection & Feedback:
  - Record all RoB judgments in a structured form.
  - Collect standardized written feedback from all reviewers on each domain and the tool overall, noting time taken and specific points of confusion.
  - Conduct a structured group debrief to discuss overarching challenges.
- Analysis:
  - Thematically analyze qualitative feedback to identify major themes (e.g., conceptual issues, usability problems).
  - Quantify inter-rater agreement and time burden.
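The final analysis step, quantifying inter-rater agreement, can be illustrated with a small worked example. The sketch below computes Cohen's kappa for two reviewers who each assign a categorical RoB judgment to the same set of studies. Cohen's kappa is one common agreement statistic and is an assumption here: the source does not state which statistic was used in [25]. The function name `cohen_kappa`, the judgment labels, and the example data are hypothetical.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical judgments on the same studies.

    kappa = (p_observed - p_expected) / (1 - p_expected), where p_expected is
    the agreement expected by chance from each rater's marginal frequencies.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must judge the same non-empty set of studies.")

    n = len(rater_a)
    # Proportion of studies on which the two reviewers gave the same judgment.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: product of the two raters' marginal proportions,
    # summed over categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if p_expected == 1.0:
        # Both raters used a single identical category for every study.
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical confounding-domain judgments from two reviewers (illustrative only).
reviewer_1 = ["low", "low", "high", "high"]
reviewer_2 = ["low", "high", "high", "high"]
print(round(cohen_kappa(reviewer_1, reviewer_2), 2))
```

Because RoB judgments are ordinal, a review team might prefer a weighted kappa that penalizes a low-versus-high disagreement more than adjacent categories; the unweighted form is shown only because it is the simplest to verify by hand.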

Protocol 2: Conducting a Systematic Review with Integrated RoB & GRADE

This protocol synthesizes methods from [6] [29] [30].

- PECO Formulation: Define the structured review question: Population (e.g., pregnant women), Exposure (e.g., ambient PM2.5), Comparator (e.g., lower exposure level), and Outcome (e.g., preterm birth) [31] [30].
- Protocol Registration: Register the review protocol a priori, detailing the PECO, search strategy, and analysis plan.
- Comprehensive Search & Screening: Run systematic searches across multiple databases (e.g., PubMed, Embase), with dual independent screening of titles/abstracts and full texts.
- Data Extraction & Risk-of-Bias Assessment:
  - Extract study data into a standardized form.
  - Apply a pre-selected RoB tool (e.g., tailored OHAT, modified ROBINS-E) to each study outcome. Perform assessments in duplicate.
  - For reproductive health, pay special attention to:
    - Exposure timing: Alignment with biologically plausible windows of susceptibility (e.g., periconception, specific trimesters) [6].
    - Exposure assessment quality: Misclassification differences between modeled and personal measurements [6].
    - Confounding control: Adjustment for critical co-exposures and social determinants.
- Evidence Synthesis & Grading:
  - Perform a meta-analysis if studies are sufficiently homogeneous; otherwise, conduct a narrative synthesis.
  - Use the GRADE framework to rate the certainty of the body of evidence for each outcome. Explicitly document reasons for downgrading (e.g., RoB, inconsistency, indirectness) or upgrading (e.g., dose-response) [6] [27].
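The grading step above, with its explicit record of reasons for downgrading and upgrading, can be sketched as a small bookkeeping routine. The example below is a minimal illustration under stated assumptions, not a definitive implementation of GRADE: real certainty ratings are structured judgments, not arithmetic. The function name `rate_certainty`, the domain keys, the limit of two steps per domain, and the clamping at the ends of the scale are all assumptions introduced for illustration. The starting level is left as a parameter because, as noted above, observational evidence starts at "low" under default GRADE but may start at "high" when ROBINS-E is used.

```python
# Ordered certainty levels, lowest to highest.
LEVELS = ["very low", "low", "moderate", "high"]


def rate_certainty(start, downgrades, upgrades):
    """Return (final_level, documented_reasons) for one outcome.

    start      -- starting certainty level, one of LEVELS
    downgrades -- dict mapping domain name to steps rated down (0, 1, or 2)
    upgrades   -- dict mapping domain name to steps rated up (0, 1, or 2)
    """
    if start not in LEVELS:
        raise ValueError(f"Unknown starting level: {start!r}")
    for steps in list(downgrades.values()) + list(upgrades.values()):
        if steps not in (0, 1, 2):
            raise ValueError("Each domain may move the rating by 0, 1, or 2 steps.")

    index = LEVELS.index(start)
    index -= sum(downgrades.values())
    index += sum(upgrades.values())
    # Assumption: the rating cannot leave the four-level scale.
    index = max(0, min(index, len(LEVELS) - 1))

    # Keep an explicit, human-readable record of every judgment that moved the rating.
    reasons = [f"rated down {n} for {d}" for d, n in downgrades.items() if n]
    reasons += [f"rated up {n} for {d}" for d, n in upgrades.items() if n]
    return LEVELS[index], reasons


# Hypothetical judgments for a single outcome (illustrative values only).
level, reasons = rate_certainty(
    start="low",  # default GRADE starting point for observational evidence
    downgrades={"risk_of_bias": 1, "inconsistency": 0},
    upgrades={"dose_response": 1},
)
print(level, reasons)
```

Returning the reasons alongside the level mirrors the protocol's requirement that each rating decision be documented, so the final certainty can be traced back to specific domain judgments.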

Visualization of Methodological Workflows

Figure: RoB Assessment & GRADE Integration Workflow

Research Reagent Solutions

Table: Essential Methodological Tools for Risk-of-Bias Assessment

| Tool / Framework | Primary Function in RoB Assessment | Application Note |
|---|---|---|
| GRADE Framework [6] [27] [31] | Provides the overarching structure for moving from individual study RoB to a rating for the entire body of evidence. | The "certainty of evidence" rating is the final product that informs policy. RoB assessment is a critical input into this rating. |
| ROBINS-E Tool [25] [28] | A domain-based instrument designed to assess RoB in non-randomized studies of exposures by comparison to a target experiment. | Best used by experienced reviewers who can critically engage with its conceptual foundation. Requires extensive piloting and supplemental guidance. |
| OHAT / Navigation Guide Tool [19] [27] | A risk of bias assessment tool customized for environmental health topics, often integrated with a modified GRADE approach. | A pragmatic choice for environmental health reviews as it addresses exposure-specific concerns, though customization is common. |
| Newcastle-Ottawa Scale (NOS) [6] [19] | A checklist that assigns a star-based score to judge the quality of cohort and case-control studies. | Its simplicity is advantageous for rapid assessment but offers less transparency and guidance for complex bias judgments than domain-based tools. |
| PECO Framework [31] [30] | A structured format (Population, Exposure, Comparator, Outcome) for formulating the primary review question. | A precisely defined PECO is the essential first step that guides all subsequent RoB judgments, especially regarding indirectness of populations, exposures, and outcomes. |

The Grading of Recommendations, Assessment, Development, and Evaluations (GRADE) framework is the most widely adopted tool for grading the quality of evidence and for making clinical recommendations [32]. However, its application in reproductive and children’s environmental health presents distinct challenges that necessitate careful adaptation [6]. This field is characterized by predominantly observational studies, complex exposure assessments, vulnerable populations with lifestage-specific susceptibilities, and a focus on demonstrating the absence of harmful effects for public health protection [6].

A methodological survey of systematic reviews on air pollution and reproductive health found that only 9.8% (18 out of 177) used a formal system to rate the body of evidence [6]. Among those, GRADE was the most commonly used framework, yet it, along with other tools, was not originally designed for the unique contours of environmental health research [6]. This comparison guide evaluates the operationalization of core GRADE domains within this specialized field, contrasting it with alternative approaches and providing a roadmap for its effective adaptation.

Comparative Analysis of Evidence Grading Frameworks

The table below compares how major frameworks approach the grading of a body of evidence, highlighting key differences in terminology, initial ratings, and domain focus that are critical for reproductive environmental health reviews.