Acute vs. Chronic Toxicity Testing: A Strategic Guide for Research and Drug Development


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the distinct yet complementary roles of acute and chronic toxicity testing. It begins by establishing the foundational differences in definitions, temporal dynamics, and primary objectives between these two testing paradigms, anchored in regulatory science and the principle that 'the dose makes the poison.' The guide then details the core methodological frameworks, including standardized in vivo protocols, the strategic integration of sub-chronic studies, and the application of alternative methods aligned with the 3Rs principles (Replacement, Reduction, Refinement). A critical troubleshooting section analyzes common challenges such as inter-species extrapolation, low-concordance target organs, and strategies for determining optimal study duration to avoid unnecessary animal use. Finally, the article explores validation and comparative analysis, focusing on the predictive value of short-term data for long-term risk, the construction of robust weight-of-evidence assessments, and the future of next-generation testing methodologies like organ-on-a-chip and AI-driven predictive toxicology. The conclusion synthesizes the strategic interplay between acute and chronic data in building a complete safety profile and outlines the future trajectory of toxicity testing toward more human-relevant, mechanistic, and efficient systems.

Defining the Divide: Core Concepts, Objectives, and Regulatory Context of Acute and Chronic Toxicity

Within toxicity testing research, the temporal dimension of exposure fundamentally dictates the nature of the biological insult and the experimental approaches required to characterize it. Immediate damage (acute toxicity) results from a single or short-term exposure, producing a rapid, often overt, pathological effect [1]. In contrast, cumulative insult (chronic toxicity) arises from the progressive summation of incremental injury from repeated sub-threshold exposures over extended periods, leading to delayed dysfunction or disease [1]. This distinction is not merely one of timescale but reflects divergent underlying biological mechanisms, risk assessment paradigms, and testing methodologies. This whitepaper, framed within the broader thesis of acute versus chronic toxicity testing, provides an in-depth technical analysis of these core concepts, detailing their defining characteristics, mechanistic bases, and the specialized experimental protocols designed to elucidate them.

Core Definitions and Temporal Frameworks

The classification of toxicity by exposure frequency and duration provides the foundational lexicon for research and regulation [1].

Table 1: Core Characteristics of Immediate Damage vs. Cumulative Insult

| Characteristic | Immediate Damage (Acute Toxicity) | Cumulative Insult (Chronic Toxicity) |
|---|---|---|
| Exposure Profile | Single or multiple exposures within 24 hours [1]. | Repeated exposures over months to years (>3 months) [1]. |
| Onset of Effects | Rapid, often within minutes to hours (e.g., cyanide poisoning) [1]. | Delayed, manifesting after prolonged latent periods (e.g., fibrosis, neuropathy) [1]. |
| Primary Nature of Injury | Often reversible (e.g., narcosis) or catastrophic and irreversible (e.g., corrosive damage) [1]. | Typically progressive and irreversible, involving adaptation, repair, and compensatory mechanisms [1]. |
| Key Testing Endpoints | Mortality (LD₅₀/LC₅₀), severe clinical signs, organ-specific acute failure [2]. | Morbidity, functional decrements (reproduction, growth), pathological change (inflammation, neoplasia), biochemical markers [2] [3]. |
| Typical Risk Assessment Output | Hazard classification, safety thresholds for single exposures [4]. | No Observed Adverse Effect Level (NOAEL), reference doses (RfD), cancer slope factors, lifetime risk estimates [5]. |

Regulatory testing frameworks operationalize these definitions into standardized study durations [2] [3] [6].

Table 2: Standardized Testing Durations in Toxicity Assessment

| Study Type | Typical Duration (Rodents) | Primary Purpose | Regulatory Context |
|---|---|---|---|
| Acute | ≤24 hours exposure [1]. | Identify immediate hazards, determine LD₅₀/LC₅₀ for classification [4]. | Mandatory first-tier testing for chemicals and pesticides [2] [4]. |
| Subacute | ~28 days (repeated dosing) [1]. | Screen for toxicity, inform doses for longer studies. | Often used in pharmaceutical development. |
| Subchronic | 90 days (1-3 months) [1] [6]. | Identify target organs, establish preliminary NOAEL, guide chronic study design [3] [6]. | Standard for food ingredients, pesticides, and general chemicals [3] [6]. |
| Chronic | >6 months, typically 12-24 months [4]. | Characterize cumulative effects, carcinogenic potential, and establish definitive NOAEL for risk assessment. | Required for long-term exposure risk assessment of pesticides, food additives, and environmental contaminants [2]. |

Biological Mechanisms and Pathophysiological Pathways

The divergence between immediate and cumulative outcomes is rooted in distinct, though sometimes overlapping, pathophysiological sequences.

3.1 Mechanisms of Immediate Damage

Immediate toxicity often results from the direct interaction of a toxicant with critical molecular targets at high dose. This includes:

- Excitotoxicity: Massive, acute release of glutamate following insults like traumatic brain injury (TBI) leads to rapid calcium influx and necrotic neuronal death [7].

- Acute Cytotoxicity: Overwhelming oxidative stress or metabolic inhibition, such as from cyanide blocking cytochrome c oxidase, causing catastrophic energy failure [1].

- Direct Tissue Destruction: Corrosive chemicals (e.g., strong acids) causing immediate protein denaturation and cell lysis upon contact [1].

3.2 Mechanisms of Cumulative Insult

Cumulative toxicity involves lower-level, repeated challenges that perturb homeostasis, engaging more complex, persistent pathways:

- Sustained Low-Level Inflammation: Repeated irritant exposure (e.g., on skin) or persistent endogenous inflammatory signals (e.g., post-TBI) lead to chronic inflammation, tissue remodeling, and fibrosis [8] [7].

- Progressive Oxidative Stress: Continuous generation of reactive oxygen/nitrogen species (ROS/RNS) depletes antioxidant defenses, leading to cumulative macromolecular damage implicated in neurodegeneration and aging [7].

- Altered Cell Signaling and Gene Expression: Repeated perturbations can dysregulate normal signaling (e.g., growth factors, hormones), leading to proliferative or degenerative diseases [9].

- Accumulation of Toxicant or Effect: The toxicant itself may accumulate in the body (e.g., heavy metals), or subclinical injury may incrementally accrue until a functional threshold is crossed (e.g., organophosphate-induced neuropathy) [1].

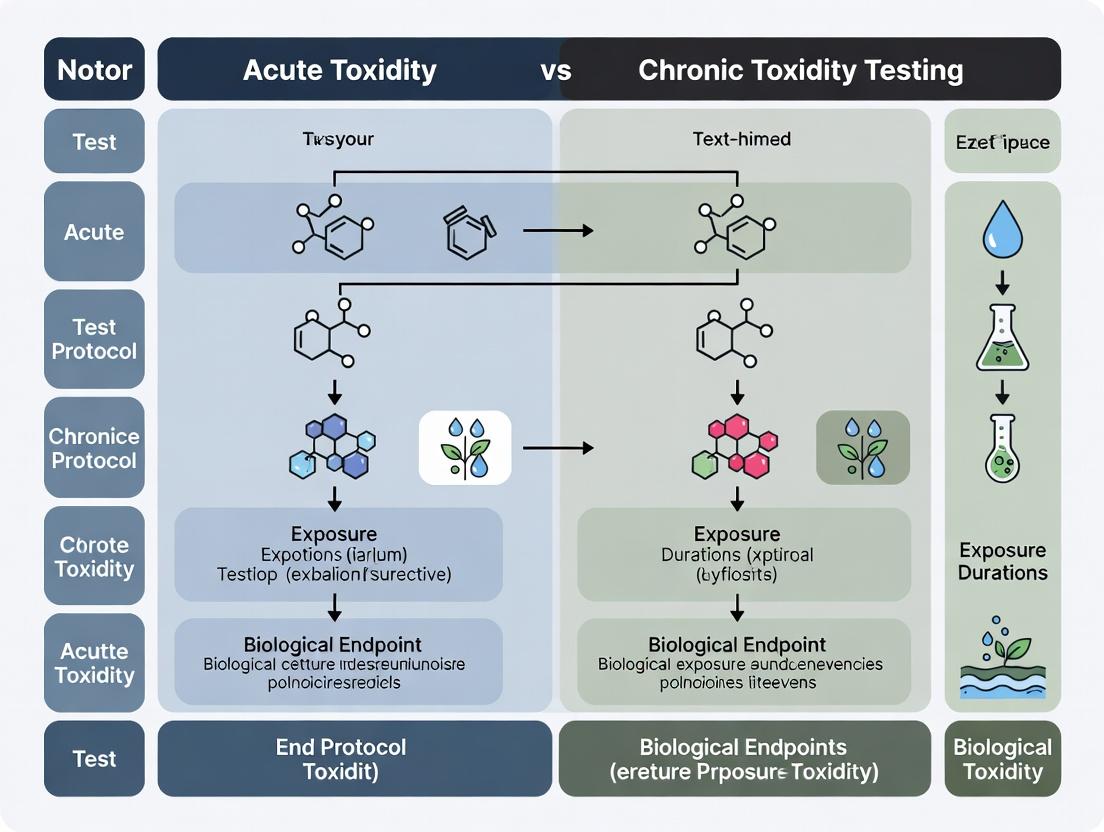

The diagram below illustrates the key divergent and convergent pathways underlying these two toxicity paradigms.

Graph 1: Divergent and Convergent Pathways of Toxicity. This diagram contrasts the linear, high-impact pathways of immediate damage with the cyclical, progressive pathways of cumulative insult. It also shows how a single insult (e.g., TBI) can trigger both acute and chronic cascades that converge on progressive pathology [7].

Experimental Methodologies and Protocols

Protocol for Assessing Immediate Damage: Human Acute Skin Irritation Patch Test

This ethical human test method replaces animal testing for predicting acute chemical skin irritation potential [10] [8].

- Objective: To classify the acute skin irritation potential of a chemical or formulation relative to a benchmark irritant (20% Sodium Dodecyl Sulfate, SDS) [10].

- Test System: Human volunteers under IRB-approved protocols. Subjects with sensitive skin or dermatological conditions are typically excluded [10].

- Graduated Exposure: Patches containing the test material are applied to the skin (typically the back) under occlusion for increasing durations (e.g., 0.5, 1, 2, 3, 4 hours) in a sequential or step-wise design [10].

- Endpoint Assessment: Skin reactions (erythema, edema) are assessed at fixed times (e.g., 1 and 24 hours) after patch removal. A positive response is defined as a clear visible reaction (e.g., uniform erythema) [10].

- Data Analysis: The incidence of positive responses at each time point is plotted. The TR₅₀ (time required to produce a reaction in 50% of subjects) is calculated and compared to the TR₅₀ of 20% SDS. A test material with a significantly shorter TR₅₀ than SDS is classified as an irritant [10].

- Key Results from Detergent Testing: This method successfully ranked product irritancy. Mold removers were most irritating (TR₅₀=0.37h), while powder detergents were least (>16h) [10].
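The TR₅₀ calculation in the Data Analysis step can be sketched as follows. This is a minimal illustration, not the cited study's statistical method: it assumes cumulative response fractions at each patch duration and interpolates linearly on a log-time scale, and the comparison against SDS is a plain point comparison with no significance test. The function names and the incidence data are hypothetical; only the SDS benchmark value (1.81 h) comes from Table 3.

```python
import math

SDS_TR50_H = 1.81  # benchmark TR50 for 20% SDS, as reported in Table 3


def estimate_tr50(times_h, fraction_positive):
    """Interpolate (on a log-time scale) the exposure time at which 50% of
    subjects show a positive reaction. Returns None when the data never
    bracket the 50% level."""
    points = list(zip(times_h, fraction_positive))
    for (t0, p0), (t1, p1) in zip(points, points[1:]):
        if p0 < 0.5 <= p1:
            frac = (0.5 - p0) / (p1 - p0)
            log_t = math.log(t0) + frac * (math.log(t1) - math.log(t0))
            return math.exp(log_t)
    return None


def more_irritating_than_sds(tr50_h, sds_tr50_h=SDS_TR50_H):
    """Point comparison only: a shorter TR50 suggests a stronger irritant.
    A real classification would also require a significance test."""
    return tr50_h is not None and tr50_h < sds_tr50_h


# Hypothetical cumulative incidence for one test material
durations = [0.5, 1, 2, 3, 4]
incidence = [0.0, 0.2, 0.4, 0.6, 0.8]
tr50 = estimate_tr50(durations, incidence)
print(tr50, more_irritating_than_sds(tr50))
```

With these made-up data the 50% level falls between the 2-hour and 3-hour patches, so the estimate is longer than the SDS benchmark and the material would not be flagged as more irritating.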

Table 3: Human Patch Test Results for Product Irritancy Ranking

| Product Category | Average TR₅₀ (Hours) | Classification vs. 20% SDS (TR₅₀=1.81h) |

|---|---|---|

| Mold/Mildew Removers | 0.37 | More Irritating |

| Disinfectants/Sanitizers | 0.64 | More Irritating |

| Liquid Laundry Detergents | 3.48 | Less Irritating |

| Shampoos | 5.40 | Less Irritating |

| Powder Laundry Detergents | >16.00 | Much Less Irritating |

Protocol for Studying Cumulative Insult Interactions: Smog Chamber for Sunlight-Enhanced Toxicity

This system studies how cumulative exposure to sunlight (a physical stressor) modifies the toxicity of chemical mixtures over time [9].

- Objective: To simulate atmospheric aging and quantify how sunlight transforms primary air pollutant mixtures into more toxic secondary products [9].

- System Setup: A large, controlled-environment chamber (smog chamber) is filled with a precise mixture of primary pollutants (e.g., nitrogen oxides, volatile organic compounds). It is equipped with a high-intensity light source simulating solar radiation, and controls for temperature and humidity [9].

- Exposure Generation: The mixture is irradiated for defined periods (e.g., equivalent to 1-day of sunlight). Samples of the chamber atmosphere are drawn at intervals directly into in vitro (e.g., air-liquid interface lung cell cultures) or in vivo exposure systems [9].

- Endpoint Analysis:

  - Chemical: Quantification of the loss of primary pollutants and formation of secondary products (e.g., ozone, formaldehyde, secondary organic aerosols) [9].

  - Biological: Assessment of cytotoxicity, oxidative stress markers, and pro-inflammatory cytokine release in exposed cells. Genomic analyses can show dramatic increases in gene expression perturbations after irradiation (e.g., from 19 to 709 genes altered) [9].

- Interpretation: The experiment demonstrates cumulative risk where the combined insult of chemicals and sunlight over time creates a hazard greater than the sum of its parts [9].

Protocol for Subchronic-to-Chronic Rodent Toxicity Study

The 90-day subchronic study is a cornerstone for identifying cumulative effects and setting doses for chronic studies [3] [6].

- Animals and Design: Young, healthy rodents (at least 20/sex/group) are randomized into control, low, mid, and high-dose groups. Dosing is via diet, gavage, or inhalation for 90 days [6].

- Core In-Life Measurements:

  - Clinical Observations: Twice daily for mortality and morbidity [3].

  - Cage-side Exams: Detailed observations for signs of toxicity (e.g., posture, behavior, secretions) weekly [3].

  - Body Weight and Feed Consumption: Measured weekly to calculate feed efficiency [3].

  - Ophthalmology, Hematology, Clinical Chemistry: Performed pre-study and at termination to assess systemic function [3].

- Terminal Procedures:

  - Necropsy: Full gross examination of all organs [3].

  - Organ Weights: Critical organs (e.g., liver, kidneys, brain, heart, adrenals, gonads) are weighed to detect hypertrophy or atrophy [3].

  - Histopathology: A comprehensive set of tissues (40+ organs) from control and high-dose groups are examined microscopically. If effects are seen, tissues from lower-dose groups are analyzed to establish a dose-response [3].

- Key Output: Identification of the No Observed Adverse Effect Level (NOAEL), the highest dose causing no biologically significant adverse effects, which is used to establish safety margins for human exposure [3].
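The NOAEL determination described in the Key Output step can be illustrated with a short sketch. It assumes that each dose group has already been judged adverse or not adverse by the study pathologist and toxicologist; that expert judgment is the hard part and is not modeled here. The function name, dose values, and units are hypothetical.

```python
def noael_loael(groups):
    """Return (NOAEL, LOAEL) from a list of (dose, adverse) pairs.

    `adverse` is a bool supplied by expert evaluation of the study data.
    The LOAEL is the lowest dose judged adverse; the NOAEL is the highest
    non-zero dose below the LOAEL that was judged not adverse.
    Either value is None when the study does not identify it.
    """
    ordered = sorted(groups)
    adverse_doses = [dose for dose, adverse in ordered if adverse]
    loael = min(adverse_doses) if adverse_doses else None
    clean_doses = [
        dose
        for dose, adverse in ordered
        if not adverse and dose > 0 and (loael is None or dose < loael)
    ]
    noael = max(clean_doses) if clean_doses else None
    return noael, loael


# Hypothetical 90-day study: control, low, mid, high (mg/kg bw/day)
study = [(0, False), (10, False), (50, False), (250, True)]
print(noael_loael(study))
```

Note that a study whose lowest dose is already adverse yields no NOAEL, which is one reason dose selection from shorter studies matters.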

Quantitative Risk Assessment: Bridging Acute and Chronic Data

Quantitative risk assessment (QRA) translates toxicity data into quantitative estimates of risk and is applied differently to acute and chronic endpoints [5].

For Non-Cancer Cumulative Risks (e.g., organ toxicity): The Hazard Quotient (HQ) is calculated for individual chemicals: HQ = Estimated Exposure / Reference Dose (RfD). An HQ < 1 indicates that the risk is considered negligible. For mixtures, the HQs of chemicals affecting the same target organ are summed into a Hazard Index (HI = Σ HQ) [5].

For Cancer Risks (from chronic exposure): The Excess Lifetime Cancer Risk (ELCR) is estimated: ELCR = Estimated Exposure × Inhalation Unit Risk (IUR). Risks below 1 in 1,000,000 (10⁻⁶) are typically considered negligible [5].
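The HQ, HI, and ELCR formulas above can be worked through in a few lines. This is a minimal sketch with hypothetical exposure and reference values; the function names are illustrative, and in practice exposure and reference value must share units (for example, exposure in µg/m³ with an IUR expressed per µg/m³).

```python
def hazard_quotient(exposure, rfd):
    """HQ = estimated exposure / reference dose (same units for both)."""
    return exposure / rfd


def hazard_index(chemicals):
    """HI = sum of HQs for chemicals acting on the same target organ.
    `chemicals` is a list of (exposure, rfd) pairs."""
    return sum(hazard_quotient(exposure, rfd) for exposure, rfd in chemicals)


def excess_lifetime_cancer_risk(exposure_conc, iur):
    """ELCR = estimated exposure concentration x inhalation unit risk."""
    return exposure_conc * iur


# Hypothetical two-chemical mixture sharing a target organ
mixture = [(0.002, 0.01), (0.03, 0.1)]   # (exposure, RfD) in mg/kg/day
hi = hazard_index(mixture)
elcr = excess_lifetime_cancer_risk(0.5, 1e-6)  # µg/m³ and per µg/m³

print(f"HI = {hi:.2f}, below 1: {hi < 1}")
print(f"ELCR = {elcr:.1e}, below 1e-6: {elcr < 1e-6}")
```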

Table 4: QRA Comparing Heated Tobacco Product (HTP) vs. Cigarette Smoke

| Risk Metric | Description | Result for 3R4F Cigarette | Result for HTP Aerosol | Percent Reduction |
|---|---|---|---|---|
| Non-Cancer Hazard Index (HI) | Sum of HQs for respiratory, cardiovascular, etc. effects. | Baseline (1.0) | <0.1 | >90% |
| Total Excess Lifetime Cancer Risk (ELCR) | Sum of ELCRs for all carcinogens measured. | Baseline (1.0) | <0.1 | >90% |

This QRA demonstrates how comparative analysis of emission data, using toxicity reference values, can quantify the potential reduction in cumulative insult from a modified product [5].

The following diagram outlines the integrated workflow from toxicity testing to quantitative risk assessment.

Graph 2: Integrated Workflow from Toxicity Testing to Risk Assessment. This diagram shows the sequential and iterative process of generating toxicity data and using it to derive quantitative risk estimates, which inform regulatory decision-making [2] [5] [6].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 5: Key Reagents and Materials for Toxicity Testing Research

| Category | Item | Function & Application |
|---|---|---|
| In Vivo Model Systems | Rodents (Rat, Mouse) | Primary species for systemic toxicity, carcinogenicity, and reproductive studies [3] [6]. |
| In Vivo Model Systems | Rabbits | Standard model for dermal and ocular irritation testing [4]. |
| In Vivo Model Systems | Aquatic Species (Fathead minnow, Daphnia) | Used for ecotoxicity testing under EPA guidelines [2]. |
| Exposure & Dosing | Gavage Needles & Formulation Vehicles | For precise oral administration of test compounds [3]. |
| Exposure & Dosing | Inhalation Chambers & Nebulizers | For generating controlled atmospheres of aerosols, gases, or vapors for inhalation studies [9]. |
| Exposure & Dosing | Dermal Patches & Occlusive Chambers | For controlled topical application in skin irritation and sensitization tests [10]. |
| Analytical & Clinical Pathology | Automated Hematology Analyzer | To measure red/white blood cell counts, hemoglobin, etc., for systemic toxicity screening [3]. |
| Analytical & Clinical Pathology | Clinical Chemistry Analyzer | To quantify serum enzymes (ALT, AST), electrolytes, and metabolites for organ function assessment [3]. |
| Analytical & Clinical Pathology | ELISA/Multiplex Assay Kits | To quantify biomarkers of effect (e.g., cytokines, liver enzymes, oxidative stress markers) [9] [7]. |
| Histopathology | Tissue Fixatives (e.g., 10% NBF) | To preserve tissue architecture for microscopic evaluation [3]. |
| Histopathology | Automated Tissue Processors & Microtomes | For preparing thin, consistent tissue sections for staining [3]. |
| Histopathology | Special Stains (H&E, Trichrome, IHC markers) | For visualizing general morphology, fibrosis, and specific cell types/proteins [3]. |
| In Vitro & Alternative Methods | Reconstructed Human Epidermis (RhE) Models | For in vitro skin corrosion/irritation testing, replacing animal methods [8]. |
| In Vitro & Alternative Methods | Air-Liquid Interface (ALI) Cell Cultures | For direct, realistic inhalation toxicity testing of air pollutants [9]. |
| In Vitro & Alternative Methods | High-Throughput Screening Assays | For mechanistic toxicity screening on nuclear receptors, enzyme inhibition, etc. |

Temporal Dimensions and Manifestation of Toxic Effects

The fundamental distinction between acute and chronic toxicity represents a cornerstone of chemical safety evaluation, dictating testing strategies, risk assessment models, and regulatory standards. Acute toxicity describes adverse effects occurring within a short time frame (minutes to days) following a single or limited number of exposures, often revealing immediate pathological outcomes like mortality, organ failure, or severe clinical signs [11]. In contrast, chronic toxicity encompasses insidious harm manifesting after prolonged, repeated exposure over a significant portion of an organism's lifespan (months to years), potentially leading to cancer, organ dysfunction, reproductive deficits, or other degenerative diseases [12] [13].

This whitepaper argues that a sophisticated understanding of the temporal dimension—bridging acute insults to chronic outcomes—is critical for advancing predictive toxicology. Relying solely on long-term, high-cost animal studies is increasingly viewed as unsustainable from both ethical and resource perspectives [12] [14]. A modern paradigm integrates mechanistic in vitro assays and computational modeling to elucidate the biological pathways that, when perturbed briefly, initiate a cascade of events culminating in chronic disease. This approach aligns with the global shift toward New Approach Methodologies (NAMs), which seek to provide human-relevant, efficient, and mechanistic data for safety decisions [11] [14]. By framing toxicity within its temporal context, researchers can better identify early key events in adverse outcome pathways, thereby enabling the use of short-term tests to protect against long-term harm.

Core Concepts and Key Parameters

The experimental characterization of toxic effects across different time scales relies on specific, standardized parameters. These metrics serve as the quantitative foundation for hazard identification and risk assessment.

Table 1: Key Parameters in Acute vs. Chronic Toxicity Testing

| Parameter | Acute Toxicity (In Vivo Focus) | Chronic Toxicity (In Vivo Focus) | Modern NAMs Alternative (In Vitro/In Silico) |
|---|---|---|---|
| Primary Metric | LD₅₀/LC₅₀ (Lethal Dose/Concentration for 50% of population), NOAEL (No Observed Adverse Effect Level) | NOAEL, LOAEL (Lowest Observed Adverse Effect Level), BMD (Benchmark Dose) | Transcriptomic Point of Departure (tPOD), In Vitro IC₅₀/EC₅₀, Predicted LC₅₀ [14] |
| Typical Exposure Duration | Single or repeated doses over ≤24 hours [11]. | Continuous or repeated exposure for ≥12 months (rodents) [13]. | Short-term exposure (hours to days) to cells or tissues [14]. |
| Critical Endpoints | Mortality, moribundity, clinical signs, gross pathology. | Body weight/organ weight changes, clinical pathology (hematology, chemistry), histopathology, tumor incidence, reproductive effects [13]. | Cytotoxicity, gene expression changes, pathway perturbation, cellular stress responses [14]. |
| Typical Test System | Young adult rodents (OECD TG 403, 436). | Rodents (two sexes) over a major life stage (OECD TG 452) [13]. | Cell lines (e.g., RTgill-W1), primary cells, engineered tissues (e.g., EpiAirway), co-culture systems [11] [14]. |
| Temporal Insight | Identifies immediate hazards and lethal potency. | Reveals cumulative damage, adaptive responses, and delayed pathogenesis (e.g., carcinogenesis) [12]. | Provides early mechanistic signals that may predict chronic apical outcomes, linking molecular initiation to potential long-term effects [14]. |

The emergence of transcriptomic points of departure (tPODs) is a pivotal development. A tPOD is a statistically derived dose or concentration at which a significant change in global gene expression occurs. Research indicates that tPODs from short-term in vitro exposures can correlate with and often be more protective than traditional chronic in vivo NOAELs, suggesting that molecular initiating events captured early can forecast later adverse outcomes [14].

Experimental Protocols for Temporal Toxicity Assessment

Protocol 3.1 leverages OECD Test Guideline 249 (Fish Cell Line Acute Toxicity: The RTgill-W1 Cell Line Assay) to generate mechanistically rich data for calculating a tPOD, bridging acute in vitro exposure with predictive insights for chronic aquatic toxicity.

1. Cell Culture and Exposure:

- Cell Line: Maintain rainbow trout gill (RTgill-W1) cells in standard culture flasks using L-15 medium supplemented with fetal bovine serum.

- Preparation: Seed cells into 96-well plates at a density optimized for confluence after attachment. Prior to exposure, replace growth medium with a specialized, protein-free exposure medium (L-15/ex).

- Dosing: Prepare a logarithmic series of test chemical concentrations (e.g., eight concentrations in triplicate) in L-15/ex. Include a solvent control (e.g., DMSO) and a positive control (e.g., 3,4-dichloroaniline). Expose cells for the standard 24-48 hour period.

2. RNA Sequencing and Bioinformatics:

- Lysate Collection: After exposure, remove medium and directly lyse cells in the wells with a TRIzol-like reagent. Lysates can be stored at -80°C.

- Library Preparation & Sequencing: Isolate total RNA. Prepare sequencing libraries using a standardized kit (e.g., UPXome). Pool libraries and perform high-throughput sequencing on an Illumina platform to obtain ~20 million reads per sample.

- Differential Expression Analysis: Map sequence reads to the reference genome. Normalize read counts and perform statistical analysis to identify genes whose expression is significantly altered in a dose-dependent manner.

3. tPOD Calculation via Benchmark Dose (BMD) Modeling:

- Data Input: Use the dose-response data for each statistically significant gene.

- Model Fitting: Fit multiple mathematical models (e.g., power, linear, polynomial) to each gene's expression profile. Select the best-fit model based on statistical goodness-of-fit criteria (e.g., lowest Akaike Information Criterion).

- BMD Derivation: Calculate the Benchmark Dose (BMD) for each gene, defined as the dose that causes a predetermined, modest change in expression (e.g., one standard deviation from the control mean).

- Final tPOD: Compile the BMDs for all responsive genes. The tPOD is typically defined as the lower confidence bound of the 10th percentile of these gene-specific BMDs (BMDL₁₀). This value represents a conservative, protective estimate of the dose at which significant biological pathway perturbation begins.
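The BMD and tPOD steps above can be sketched in simplified form. This example makes several loudly stated assumptions: it fits only a straight-line model per gene (no model comparison by AIC), uses a one-standard-deviation benchmark response, and reports the plain 10th percentile of the gene-level BMDs rather than its lower confidence bound. All function names and expression values are hypothetical; dedicated tools such as BMDS or BMDExpress should be used for real analyses.

```python
def fit_slope(doses, responses):
    """Ordinary least-squares slope of response versus dose."""
    mean_x = sum(doses) / len(doses)
    mean_y = sum(responses) / len(responses)
    sxx = sum((x - mean_x) ** 2 for x in doses)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(doses, responses))
    return sxy / sxx


def linear_bmd(doses, responses, control_sd):
    """Dose at which the fitted line shifts expression by one control SD.
    Returns None for a flat (non-responsive) gene."""
    slope = fit_slope(doses, responses)
    if slope == 0:
        return None
    return control_sd / abs(slope)


def percentile(values, q):
    """Percentile with linear interpolation between order statistics (0 <= q <= 1)."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q
    lower = int(pos)
    upper = min(lower + 1, len(ordered) - 1)
    return ordered[lower] + (pos - lower) * (ordered[upper] - ordered[lower])


def tpod_from_gene_bmds(gene_bmds, q=0.10):
    """Simplified tPOD: the q-th percentile of gene-level BMDs (no confidence bound)."""
    usable = [bmd for bmd in gene_bmds if bmd is not None]
    return percentile(usable, q)


# Hypothetical dose-response data for three responsive genes
doses = [0, 1, 2, 4]
genes = {
    "geneA": ([10, 12, 14, 18], 1.0),     # (expression values, control SD)
    "geneB": ([5, 4.5, 4, 3], 0.5),
    "geneC": ([0, 0.25, 0.5, 1.0], 1.0),
}
bmds = [linear_bmd(doses, expr, sd) for expr, sd in genes.values()]
print(bmds, tpod_from_gene_bmds(bmds))
```

Because the tPOD sits in the lower tail of the gene-level BMD distribution, it is driven by the most sensitive genes, which is what makes it a conservative estimate.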

Protocol 3.2 uses a reconstructed human airway tissue model to assess acute inhalation toxicity potential, replacing or refining traditional animal-based LC₅₀ tests.

1. Tissue Model Preparation:

- System: Use a commercial reconstructed human bronchial epithelium model (e.g., EpiAirway). These are 3D, differentiated tissues cultured at an air-liquid interface (ALI).

- Pre-conditioning: Upon receipt, acclimate tissues in provided maintenance medium in a 37°C, 5% CO₂ incubator for 24-48 hours.

2. Air-Liquid Interface (ALI) Exposure:

- Dosing Strategy: Apply the test substance directly to the apical (air) surface of the tissue in a vehicle appropriate for volatile or particulate materials. For liquids, a small volume is carefully pipetted. For gases or aerosols, use specialized exposure chambers.

- Exposure Duration: Expose tissues for a defined period (typically 1-4 hours).

- Post-Exposure Incubation: After exposure, gently wash the apical surface to remove residual test material and return tissues to fresh culture medium for a post-exposure recovery period (e.g., 20-44 hours).

3. Toxicity Endpoint Measurement:

- Viability Assessment: Measure tissue viability using a standard assay like MTT or Alamar Blue, which quantifies mitochondrial activity. Treat tissues with the dye solution for several hours and measure spectrophotometric absorbance or fluorescence.

- Data Analysis: Calculate cell viability as a percentage of the vehicle control. The concentration that reduces viability by 50% (IC₅₀) is determined. This in vitro IC₅₀ can be used in IVIVE (In Vitro to In Vivo Extrapolation) models to predict a potential in vivo LC₅₀ value for risk assessment.

4. Integrated Testing Strategy: This assay is part of a larger framework like the Collaborative Modeling Project for Acute Inhalation Toxicity (CoMPAIT), which aims to develop and validate computational models that predict inhalation LC₅₀ values from chemical structure or in vitro data [11].
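The viability calculation and IC₅₀ determination in the Data Analysis step can be illustrated as follows. This is a minimal sketch that assumes background-corrected assay signals and estimates the IC₅₀ by log-linear interpolation between the two concentrations that bracket 50% viability; a fitted concentration-response model would normally be preferred. Function names, concentrations, and viability values are hypothetical, and the IVIVE step is not shown.

```python
import math


def percent_viability(signal, vehicle_signal, blank=0.0):
    """Viability as a percentage of the vehicle control, after blank subtraction."""
    return 100.0 * (signal - blank) / (vehicle_signal - blank)


def interpolate_ic50(concentrations, viability_pct):
    """Estimate the concentration giving 50% viability by interpolating on a
    log10 concentration scale. Returns None if 50% is never crossed."""
    points = sorted(zip(concentrations, viability_pct))
    for (c0, v0), (c1, v1) in zip(points, points[1:]):
        if v0 >= 50.0 > v1:
            frac = (v0 - 50.0) / (v0 - v1)
            log_c = math.log10(c0) + frac * (math.log10(c1) - math.log10(c0))
            return 10 ** log_c
    return None


# Hypothetical MTT readout for one test substance (arbitrary concentration units)
conc = [1, 10, 100, 1000]
viability = [100.0, 80.0, 20.0, 5.0]
print(interpolate_ic50(conc, viability))
```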

The following outlines the core design elements of a traditional in vivo chronic toxicity study, which remains a regulatory benchmark for assessing long-term effects.

1. Experimental Design:

- Animals: Use young, healthy rodents (typically rats). Assign at least 20 animals per sex per dose group randomly.

- Groups: Include at least three treated dose groups and a concurrent control group. Dose selection is based on subchronic (90-day) study results, with the highest dose intended to elicit toxicity but not excessive mortality.

- Route and Frequency: Administer the test substance daily via diet, drinking water, or oral gavage for a period of 12 months.

2. In-Life Observations and Monitoring:

- Clinical Observations: Perform detailed daily observations for morbidity and mortality. Conduct systematic physical examinations weekly.

- Functional Tests: Monitor food and water consumption. Measure body weight weekly.

- Clinical Pathology: Collect blood samples at interim intervals (e.g., 6 months) and at terminal sacrifice for hematology and clinical chemistry. Perform urinalysis at similar intervals.

3. Terminal Procedures and Histopathology:

- Necropsy: At the end of the 12-month period, perform a full necropsy on all animals. Record gross findings and weights of all major organs.

- Tissue Preservation: Preserve a comprehensive list of organs and tissues (e.g., all gross lesions, brain, heart, liver, kidneys, etc.) in fixative.

- Histopathological Examination: Process fixed tissues, embed in paraffin, section, stain with Hematoxylin and Eosin (H&E), and examine microscopically. This is the most critical endpoint for identifying chronic lesions, pre-neoplastic changes, and tumors.

4. Data Analysis and Reporting:

- Compile and statistically analyze all data (body weight, consumption, clinical pathology, organ weights, histopathology incidence).

- Determine the NOAEL and LOAEL for the study based on the totality of biological effects observed.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Temporal Toxicity Studies

| Item | Function & Application | Example in Protocol |
|---|---|---|
| RTgill-W1 Cell Line | A permanent cell line derived from rainbow trout gills used as a standard model for fish acute and mechanistic toxicity testing [14]. | Protocol 3.1: Serves as the in vitro system for pesticide exposure and tPOD derivation. |
| Reconstructed Human Airway Tissues (EpiAirway) | 3D, differentiated human bronchial epithelial tissues cultured at an air-liquid interface (ALI) to model the human respiratory tract for inhalation toxicity testing [11]. | Protocol 3.2: Used for direct apical exposure to test substances to determine in vitro IC₅₀. |
| Specialized Exposure Medium (L-15/ex) | A protein-free, animal-component-free buffer designed to hold test chemicals in solution without interfering with cell health or chemical bioavailability during in vitro fish cell tests [14]. | Protocol 3.1: Used as the vehicle for diluting and exposing pesticides to RTgill-W1 cells. |
| UPXome / RNA-Seq Library Prep Kits | Commercial kits used to convert isolated total RNA into cDNA libraries compatible with next-generation sequencing platforms for transcriptomic analysis [14]. | Protocol 3.1: Used to prepare sequencing libraries from exposed cell lysates for gene expression profiling. |
| BMD/BMDL Modeling Software | Computational tools (e.g., US EPA's BMDS, BMDExpress) that fit mathematical models to dose-response data to calculate a Benchmark Dose and its lower confidence limit [14]. | Protocol 3.1: Used to analyze transcriptomic dose-response data and calculate the final tPOD (BMDL₁₀). |
| IVIVE (In Vitro to In Vivo Extrapolation) Models | Computational frameworks that convert in vitro concentration-response data to an equivalent in vivo dose, often incorporating pharmacokinetic parameters [11]. | Protocol 3.2: Used to translate in vitro IC₅₀ from airway tissues to a predicted in vivo inhalation LC₅₀. |

Visualizing Pathways and Workflows

- Figure: From Acute Exposure to Chronic Outcome Prediction

- Figure: Transcriptomic Point of Departure (tPOD) Workflow

- Figure: Integrated Testing Strategy for Inhalation Toxicity

Primary Objectives and Regulatory Imperatives for Each Testing Paradigm

In the context of advancing research on acute versus chronic toxicity testing, the distinction between these two paradigms is foundational to chemical and drug safety assessment. Acute toxicity testing evaluates adverse effects from a single or short-term exposure, primarily for hazard identification, classification, and labeling. In contrast, chronic toxicity testing investigates the consequences of prolonged, repeated exposure to identify cumulative organ damage, dose-response relationships, and establish safe exposure limits [15] [16]. The regulatory landscape governing these tests is complex, involving guidelines from agencies like the U.S. Environmental Protection Agency (EPA), Food and Drug Administration (FDA), and international bodies like the Organisation for Economic Co-operation and Development (OECD) [17] [18] [19]. While traditional methods rely heavily on animal models, a significant paradigm shift is underway toward New Approach Methodologies (NAMs)—encompassing in vitro, in chemico, and in silico methods—driven by the principles of the 3Rs (Replacement, Reduction, and Refinement) and the pursuit of more human-relevant data [15] [20]. This guide details the core objectives, regulatory requirements, experimental protocols, and the evolving framework of NAMs for both testing paradigms.

Primary Objectives and Core Requirements of Each Paradigm

The fundamental goals of acute and chronic toxicity testing dictate their design, duration, and regulatory application. The following table summarizes their contrasting primary objectives.

Table 1: Core Objectives of Acute vs. Chronic Toxicity Testing

| Aspect | Acute Toxicity Testing | Chronic Toxicity Testing |
|---|---|---|
| Primary Objective | Identify adverse effects from a single or short-term exposure (≤24 hours) [16]. | Determine effects from prolonged, repeated exposure (usually ≥12 months) [21] [22]. |
| Key Goals | Hazard classification & labeling (e.g., GHS categories) [17]; estimate lethal dose (e.g., LD₅₀/LC₅₀) [17] [16]; identify target organs and species differences [16]; set doses for longer-term studies [16]. | Characterize cumulative toxicity & effects with long latency [21]; establish dose-response relationships & a No-Observed-Adverse-Effect Level (NOAEL) [21] [22]; identify the majority of chronic pathological effects [21]. |
| Typical Study Duration | Single dose; observation for 14 days [16]. | At least 12 months of dosing in rodents [21] [22]. |
| Regulatory Use | Informing product labels and hazard warnings [17]; setting acceptable human exposure limits for single events [17]; risk assessment for accidental exposures [17]. | Supporting long-term human exposure safety (e.g., food additives, drugs) [23] [22]; deriving health-based guidance values (HBGVs) for continuous exposure [15]. |
| Common Test Guidelines | OECD TG 420 (Fixed Dose), 423 (Acute Toxic Class), 425 (Up-and-Down); EPA 870.1100 [16] [18]. | OECD TG 451/452; EPA 870.4100; FDA Redbook IV.C.5.a [18] [22]. |

Regulatory Imperatives and Guidelines

Regulatory requirements for toxicity testing are established by multiple national and international authorities to ensure standardized safety assessments.

Acute Toxicity Regulatory Landscape

In the United States, at least six federal agencies require acute systemic toxicity data for regulatory decisions [17]. The specific requirements and flexibility to use non-animal methods vary.

Table 2: U.S. Agency Requirements for Acute Systemic Toxicity Data [17]

| Agency | Key Legislations | Substances Regulated | Flexibility for Non-Animal Methods |

|---|---|---|---|

| Consumer Product Safety Commission (CPSC) | Federal Hazardous Substances Act | Hazardous consumer products | Some flexibility for classification. |

| Environmental Protection Agency (EPA) | FIFRA, Toxic Substances Control Act (TSCA) | Pesticides, industrial chemicals | Actively implementing alternative approaches (e.g., Up-and-Down Procedure) [24]. |

| Food and Drug Administration (FDA) | Federal Food, Drug, and Cosmetic Act | Food ingredients, color additives, medical devices | For drugs, acute data often subsumed by repeated-dose studies; flexibility exists for other products [17]. |

| Occupational Safety and Health Administration (OSHA) | Occupational Safety and Health Act | Workplace chemicals | Uses data for hazard communication; accepts GHS classification which may be derived from alternatives. |

| Department of Transportation (DOT) | Hazardous Materials Transportation Act | Transported hazardous materials | Requires data for classification; follows internationally accepted test methods. |

Globally, the OECD Test Guidelines provide the standard. Modern guidelines like the Fixed Dose Procedure (OECD TG 420) and the Up-and-Down Procedure (OECD TG 425) use fewer animals (5-9) than the classical LD₅₀ test, and the Fixed Dose Procedure additionally relies on evident toxicity rather than mortality as its endpoint [17] [16]. The EPA promotes a process for establishing and implementing alternative approaches to traditional in vivo acute studies for pesticides, aiming to reduce animal use [24].

Chronic Toxicity Regulatory Landscape

Chronic testing is mandated for substances with potential long-term human exposure. Key guidelines include:

- EPA 40 CFR 798.3260 / OPPTS 870.4100: Requires testing in two mammalian species (one rodent, one non-rodent) for at least 12 months. It specifies detailed requirements for animal numbers, dose selection, and clinical examinations [21] [18].

- FDA Redbook 2000 IV.C.5.a.: Guides chronic toxicity studies for food ingredients in rodents, emphasizing a minimum 12-month study to determine a NOAEL and characterize toxicity [22].

- ICH Guidelines (M3(R2), S4, S6(R1)): Provide internationally harmonized standards for pharmaceuticals. For small molecules, chronic studies typically involve 6 months in rodents and 9 months in non-rodents, though a 6-month non-rodent study is acceptable in the EU [23]. For biologics like monoclonal antibodies, 6-month studies in one pharmacologically relevant species are standard, with ongoing evaluation of whether shorter durations (e.g., 3 months) are sufficient based on a Weight of Evidence (WOE) risk assessment [23].

Experimental Protocols and Methodologies

Standard In Vivo Protocol Specifications

The design of standard animal studies differs significantly between acute and chronic paradigms.

Table 3: Comparative Experimental Design for Standard In Vivo Studies

| Parameter | Acute Toxicity Study (Oral Example) | Chronic Toxicity Study (Typical) |

|---|---|---|

| Species | Usually one rodent species (rat or mouse) [16]. | Two species: a rodent (rat) and a non-rodent (dog) [21]. |

| Animals per Sex per Dose Group | 5-9 (using modern OECD TGs) [17]. | Rodent: ≥20; Non-rodent: ≥4 [21] [22]. |

| Age at Dosing Start | Young adult [16]. | Rodent: 6-8 weeks; Dog: 4-9 months [21]. |

| Dose Groups | Usually 3-5, plus control [16]. | Minimum of 3 dose levels + concurrent control [21]. |

| Dosing Route | Oral, dermal, or inhalation [17]. | Oral (feed, gavage), dermal, or inhalation [21]. |

| Dosing Regimen | Single administration [16]. | Daily (or 5-7 days/week) for ≥12 months [21]. |

| Core Observations | Mortality, clinical signs, body weight, gross necropsy [16]. | Daily clinical signs, weekly body weight, detailed hematology, clinical biochemistry, urinalysis, comprehensive histopathology [21]. |

| Key Endpoint | Lethality or signs of evident toxicity for classification [17] [16]. | NOAEL, target organ toxicity, detailed pathological assessment [21]. |

New Approach Methodologies (NAMs) and Defined Approaches

NAMs represent a paradigm shift from observing apical endpoints in animals to understanding toxicity pathways in human-relevant systems [15] [20].

- Components of NAMs: Include in silico (QSAR, read-across), in chemico (peptide reactivity assays), and in vitro methods (cell-based assays, microphysiological systems (MPS), omics) [15].

- Defined Approaches (DAs): These are fixed combinations of NAMs with a prescribed data interpretation procedure (DIP). Successful examples include OECD TG 497 for skin sensitization, which integrates in chemico and in vitro data, and TG 467 for eye irritation [20]. DAs facilitate regulatory acceptance by ensuring standardized and reproducible assessments.

- Workflow for Systemic Toxicity Assessment: A NAM-based strategy for systemic effects involves a tiered approach: 1) Exposure assessment to define relevant human concentrations; 2) Bioactivity profiling using high-throughput in vitro assays; 3) Mechanistic investigation using omics and pathway analysis; 4) Quantitative in vitro to in vivo extrapolation (QIVIVE) using physiologically based kinetic (PBK) modelling to predict systemic doses; and 5) Risk characterization by comparing bioequivalent doses with exposure estimates [15] [20].

- Validation and Benchmarking: A critical challenge is validating NAMs without defaulting to animal data as the sole "gold standard," given that rodent predictivity for human toxicity is limited (~40-65%) [20]. The focus is shifting toward demonstrating human biological relevance and protective risk assessment rather than replicating animal outcomes [20].
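
Steps 4 and 5 of the tiered workflow above can be illustrated with a deliberately simplified calculation. The sketch below assumes linear kinetics and a single steady-state plasma concentration per unit dose, which is a common simplification of QIVIVE and not a substitute for full PBK modelling. The function names, parameter names, and units are illustrative assumptions, not part of any cited guideline.

```python
def administered_equivalent_dose(ac50_uM: float, css_uM_per_mg_kg_day: float) -> float:
    """Oral dose (mg/kg/day) predicted to produce a steady-state plasma
    concentration equal to the in vitro AC50, assuming linear kinetics."""
    if css_uM_per_mg_kg_day <= 0:
        raise ValueError("steady-state concentration per unit dose must be positive")
    return ac50_uM / css_uM_per_mg_kg_day


def bioactivity_exposure_ratio(aed_mg_kg_day: float, exposure_mg_kg_day: float) -> float:
    """Ratio of the bioactive dose to the estimated human exposure.
    Larger values indicate a wider margin between exposure and bioactivity."""
    if exposure_mg_kg_day <= 0:
        raise ValueError("exposure estimate must be positive")
    return aed_mg_kg_day / exposure_mg_kg_day


# Hypothetical inputs chosen only to show the arithmetic.
aed = administered_equivalent_dose(ac50_uM=10.0, css_uM_per_mg_kg_day=2.0)
ber = bioactivity_exposure_ratio(aed, exposure_mg_kg_day=0.01)
```

How large a ratio counts as adequately protective is a risk-management decision and is not encoded in the sketch.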

Diagram 1: A comparison of the acute and chronic toxicity testing paradigms and their convergence through New Approach Methodologies (NAMs).

Diagram 2: A tiered workflow for implementing New Approach Methodologies (NAMs) in systemic toxicity assessment.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagent Solutions in Toxicity Testing

| Reagent / Assay System | Category | Primary Function in Toxicity Testing |

|---|---|---|

| Reconstructed Human Epidermis (RHE) Models | In Vitro | Replace animal skin for corrosion/irritation testing (OECD TG 431, 439) [16] [19]. |

| Bovine Corneal Opacity and Permeability (BCOP) Assay | Ex Vivo | Identify eye corrosives/severe irritants, reducing rabbit use (OECD TG 437) [16]. |

| ARE-Nrf2 Luciferase Test (e.g., KeratinoSens) | In Vitro | Detect activation of the Keap1-Nrf2 pathway, a key event in skin sensitization (OECD TG 442D) [16] [19]. |

| Direct Peptide Reactivity Assay (DPRA) | In Chemico | Measure covalent binding to skin proteins, a molecular initiating event for sensitization (OECD TG 442C) [16]. |

| 3T3 Neutral Red Uptake Phototoxicity Test | In Vitro | Predict phototoxic potential by comparing cytotoxicity with/without UV light (OECD TG 432) [16]. |

| Rat or Human Liver S9 Fraction | In Vitro | Provide metabolic activation (Cytochrome P450 enzymes) for genotoxicity assays (e.g., Ames test) [18]. |

| Microphysiological Systems (MPS) | In Vitro | Model organ-level function and inter-tissue communication (e.g., liver-chip, kidney-chip) for repeated-dose toxicity assessment [15]. |

| GARDskin Assay | In Vitro | Genomic biomarker-based assay for skin sensitization potency assessment [20]. |

Signaling Pathways and Adverse Outcome Pathways (AOPs)

The AOP framework is a central concept in modern toxicology, linking a molecular initiating event (MIE) through a series of key events (KEs) to an adverse outcome (AO) at the organism level [15]. This framework supports the development of NAMs by identifying measurable KEs that can be tested in vitro.

Diagram 3: The relationship between an Adverse Outcome Pathway (AOP) for skin sensitization and the testing methods that inform its Key Events (KEs).

For example, the well-developed AOP for skin sensitization begins with the MIE of covalent binding to skin proteins [20]. This leads to KE1: keratinocyte inflammatory response (measurable by the KeratinoSens assay), KE2: dendritic cell activation (measurable by the h-CLAT assay), and KE3: T-cell proliferation, culminating in the AO: allergic contact dermatitis. Defined Approaches like OECD TG 497 integrate data from assays targeting these different KEs to make a hazard prediction without animal testing [20] [19].
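
One data interpretation procedure of this kind is a "2 out of 3" rule, in which two concordant results from assays covering different key events determine the hazard call. The sketch below shows only that majority logic. It omits the borderline-range handling and applicability checks that a guideline-conformant assessment would need, and the function and argument names are hypothetical.

```python
from typing import Optional


def two_out_of_three(dpra: Optional[bool],
                     keratinosens: Optional[bool],
                     hclat: Optional[bool]) -> Optional[bool]:
    """Return True (sensitizer), False (non-sensitizer), or None (inconclusive).

    Each argument is True for a positive assay result, False for a negative
    result, and None when the assay was not run or gave no usable result.
    """
    results = [r for r in (dpra, keratinosens, hclat) if r is not None]
    positives = sum(results)
    negatives = len(results) - positives
    if positives >= 2:
        return True
    if negatives >= 2:
        return False
    return None  # fewer than two concordant results


call = two_out_of_three(dpra=True, keratinosens=True, hclat=None)
```

Running only two assays is sufficient when they agree; a third is needed only to break a tie.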

The future of toxicity testing lies in the systematic adoption of NAMs within a Next Generation Risk Assessment (NGRA) framework. This requires:

- Moving beyond one-to-one replacement: Success depends on integrated testing strategies, not finding a single in vitro replacement for each animal test [20].

- Building confidence through fit-for-purpose validation: Demonstrating that NAMs provide protective, human-relevant data for decision-making, with benchmarking against animal data being only one component [15] [20].

- Adapting regulatory frameworks: Shifting from hazard-based classification to exposure-led, risk-based assessment will be necessary to fully leverage NAMs [15] [20].

- Embracing flexibility in chronic testing: For certain modalities like monoclonal antibodies, evidence supports using WOE assessments to justify shorter study durations (e.g., 3-month studies), reducing animal use without compromising safety [23].

The transition from traditional acute and chronic animal tests to a human biology-based NGRA paradigm is not merely a technical challenge but a conceptual evolution. It promises more relevant safety assessments, aligned with both ethical imperatives and scientific progress [15] [20].

The dose-response relationship is the cornerstone principle of toxicology, quantitatively defining the correlation between the magnitude of an administered exposure and the incidence of a specific biological effect [25]. This relationship is typically visualized as a sigmoid curve when the response is plotted against the logarithm of the dose, characterized by a threshold region, a roughly linear phase of increasing effect, and a plateau at maximum response. The scientific and regulatory assessment of chemical safety fundamentally relies on deriving specific metrics from this curve, which differ profoundly based on the temporal nature of the exposure.
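
The sigmoid shape can be made concrete with a log-logistic (Hill) function, one of several models commonly fitted to dose-response data. The sketch below is an illustration under that modelling assumption; the parameter names and default values are choices made for this example.

```python
def hill_response(dose: float, ec50: float, hill_slope: float = 1.0,
                  bottom: float = 0.0, top: float = 1.0) -> float:
    """Fraction of maximal response at a given dose under a Hill model.

    The curve is sigmoid when plotted against log(dose): close to `bottom`
    at low doses, steepest around `ec50`, and approaching `top` at high doses.
    """
    if ec50 <= 0:
        raise ValueError("ec50 must be positive")
    if dose <= 0:
        return bottom
    return bottom + (top - bottom) / (1.0 + (ec50 / dose) ** hill_slope)


# Responses one decade below, at, and one decade above the median effective dose.
low, mid, high = (hill_response(d, ec50=10.0) for d in (1.0, 10.0, 100.0))
```

By construction the response at the median effective dose is halfway between `bottom` and `top`, which is the definition that metrics such as LD50 and EC50 rely on.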

Acute toxicity describes adverse effects occurring within a short time (usually up to 14 days) following a single or multiple exposures over 24 hours or less [26]. Its primary goal is to identify the poisoning potential of a substance, with the lethal dose for 50% of a test population (LD50) being its most iconic metric [27]. In contrast, chronic toxicity results from repeated exposures, often at lower levels, over a significant portion of a test organism's lifespan (months or years) [26]. The objective here shifts from identifying lethality to determining the highest dose that causes no observed adverse effects (NOAEL) or the lowest dose that does (LOAEL), which are then used to establish safe human exposure thresholds [25]. This guide provides an in-depth technical analysis of these core metrics and the experimental frameworks that generate them, situated within the critical research continuum from acute to chronic toxicity testing.

Core Toxicological Metrics: Definitions, Applications, and Distinctions

Key metrics are extracted from dose-response studies to serve specific purposes in hazard identification, classification, and risk assessment. The following table summarizes the defining characteristics of the primary metrics discussed in this guide.

Table 1: Core Toxicological Dose-Response Metrics

| Metric | Full Name | Primary Study Type | Key Purpose | Typical Units |

|---|---|---|---|---|

| LD₅₀ | Median Lethal Dose | Acute Toxicity | Quantify acute lethal potency for hazard classification [25] [27] | mg/kg body weight [25] |

| LC₅₀ | Median Lethal Concentration | Acute Inhalation Toxicity | Quantify acute lethal potency of airborne substances [25] [27] | mg/L (air) or ppm [25] |

| NOAEL | No Observed Adverse Effect Level | Repeated Dose (Chronic) Toxicity | Identify the highest dose without adverse effects for safety threshold derivation [25] | mg/kg bw/day [25] |

| LOAEL | Lowest Observed Adverse Effect Level | Repeated Dose (Chronic) Toxicity | Identify the lowest dose causing adverse effects when NOAEL is not found [25] | mg/kg bw/day [25] |

| EC₅₀ | Median Effective Concentration | Ecotoxicity | Measure potency for non-lethal effects (e.g., immobility, growth inhibition) [25] | mg/L (water) [25] |

LD50 and LC50: The LD50 (Median Lethal Dose) is a statistically derived single dose expected to cause death in 50% of treated animals [25] [27]. It is a standardized measure for comparing the inherent acute toxicity of substances across different chemicals and studies. A lower LD50 value indicates higher acute toxicity [25]. For airborne substances, the LC50 (Median Lethal Concentration) is used, representing the concentration in air causing 50% mortality after a set exposure period (typically 4 hours) [27]. These values are pivotal for Globally Harmonized System (GHS) hazard classification and labeling, including the choice of signal word ("Danger" or "Warning") [25].

NOAEL and LOAEL: In repeated-dose studies (e.g., 28-day, 90-day, or chronic), the NOAEL (No Observed Adverse Effect Level) is identified as the highest tested dose at which there are no biologically significant increases in adverse effects compared to the control group [25]. Effects may occur at this level but are not deemed adverse. The LOAEL (Lowest Observed Adverse Effect Level) is the lowest tested dose where such significant adverse effects are observed [25]. These levels are not inherent properties of the chemical but are determined by the specific design, dosing intervals, and sensitivity of a given study. The NOAEL is the critical point of departure for establishing safe exposure limits for humans, such as the Acceptable Daily Intake (ADI) or Reference Dose (RfD), by applying assessment (uncertainty) factors [25].
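
The step from a NOAEL to a health-based limit is a division by the product of the assessment factors. The sketch below uses the conventional default factors of 10 for interspecies and 10 for intraspecies variability as an assumption; actual assessments may justify different or additional factors (for example, when a LOAEL is used as the point of departure). The function name and parameters are illustrative.

```python
def reference_dose(point_of_departure_mg_kg_day: float,
                   uf_interspecies: float = 10.0,
                   uf_intraspecies: float = 10.0,
                   uf_additional: float = 1.0) -> float:
    """Divide a point of departure (e.g., a NOAEL) by the combined
    uncertainty factors to obtain a limit such as an ADI or RfD."""
    combined = uf_interspecies * uf_intraspecies * uf_additional
    if combined <= 0:
        raise ValueError("uncertainty factors must be positive")
    return point_of_departure_mg_kg_day / combined


# A NOAEL of 5 mg/kg bw/day with the default 100-fold factor.
rfd = reference_dose(5.0)
```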

Comparative Context: The fundamental distinction lies in their endpoints: LD50 measures a severe, acute outcome (death), while NOAEL is based on the spectrum of sub-lethal adverse effects (e.g., organ weight changes, clinical chemistry alterations, histopathological lesions) observed over prolonged exposure. This is illustrated in the comparative data for the insecticide dichlorvos [27]:

- Oral LD₅₀ (rat): 56 mg/kg (a single dose)

- Inhalation LC₅₀ (rat): 1.7 ppm for 4 hours (a single exposure)

These values classify dichlorvos as moderately to highly acutely toxic [27]. In contrast, a hypothetical 90-day study might identify a NOAEL of 0.5 mg/kg/day based on observed cholinesterase inhibition at higher doses, demonstrating how chronic endpoints yield much lower safety thresholds.

Experimental Protocols for Determining Key Metrics

Protocols for Acute Lethality (LD50/LC50)

Traditional LD50 tests involve administering a range of single doses to groups of animals (typically rodents) via the relevant route (oral, dermal, inhalation), followed by a 14-day observation period [27]. Due to animal welfare concerns and the need for reduction and refinement, fixed-dose and sequential methods have largely replaced the classic mortality-driven protocols.

OECD Guideline 420: Fixed Dose Procedure (FDP)

This method uses preset dose levels (5, 50, 300, 2000 mg/kg, and optionally 5000 mg/kg) and aims to identify a dose causing clear signs of toxicity (e.g., evident morbidity) rather than death [28].

- Pre-test: A single animal is dosed at a starting level (often 300 mg/kg). Based on the outcome (mortality or severe signs), the dose for the main test is adjusted.

- Main test: A group of five animals (one sex initially) receives the selected dose. The objective is to find the dose that causes clear evidence of toxicity but not mortality. If this is achieved, the test stops. Otherwise, a higher or lower fixed dose is tested in a new group.

- The result is a categorization of toxicity (e.g., "Category 3" for 300 mg/kg) rather than a precise LD50 value, which is sufficient for hazard classification.

OECD Guideline 425: Up-and-Down Procedure (UDP)

This sequential method is highly efficient, using as few as 6-10 animals to estimate the LD50 and its confidence intervals [28].

- A single animal is dosed at a best-estimate starting point. If it survives, the next animal receives a higher dose; if it dies, the next receives a lower dose. The step size between doses is predefined (e.g., a factor of 3.2).

- This sequential testing continues based on the outcome for each previous animal, following a set stopping rule.

- A statistical model (such as the maximum likelihood method) is applied to the sequence of outcomes to estimate the LD50 and its variance.
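
The up-and-down logic and the likelihood-based estimate can be sketched in a few lines. This is a simplified illustration, not the guideline's software: the stopping rule and confidence-interval calculation are omitted, the dose bounds are placeholder values, and the probit slope is fixed through an assumed `sigma` of 0.5 on the log10 dose scale. All function names are hypothetical.

```python
import math
from typing import Sequence


def next_dose(dose: float, died: bool, factor: float = 3.2,
              lower: float = 1.0, upper: float = 2000.0) -> float:
    """Step down after a death, up after survival, within assumed bounds."""
    proposed = dose / factor if died else dose * factor
    return min(max(proposed, lower), upper)


def _normal_cdf(z: float) -> float:
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def estimate_ld50(doses: Sequence[float], died: Sequence[bool],
                  sigma: float = 0.5) -> float:
    """Grid-search maximum likelihood LD50 under a probit model on log10(dose)."""
    def log_likelihood(mu: float) -> float:
        total = 0.0
        for dose, dead in zip(doses, died):
            p = _normal_cdf((math.log10(dose) - mu) / sigma)
            p = min(max(p, 1e-12), 1.0 - 1e-12)  # avoid log(0)
            total += math.log(p if dead else 1.0 - p)
        return total

    grid = [i / 1000.0 for i in range(0, 4001)]  # log10(LD50) from 0 to 4
    return 10.0 ** max(grid, key=log_likelihood)


# Hypothetical outcome sequence alternating between two dose levels.
doses = [175.0, 550.0, 175.0, 550.0, 175.0, 550.0]
outcomes = [False, True, False, True, False, True]
ld50 = estimate_ld50(doses, outcomes)
```

With survival at the lower dose and death at the higher dose in equal numbers, the estimate falls at the geometric mean of the two dose levels, which is the behaviour expected from a symmetric model on the log scale.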

Protocols for Determining NOAEL/LOAEL

NOAEL and LOAEL are derived from subchronic or chronic repeated-dose toxicity studies. There is no single standardized test, but the study design follows well-established principles.

- Study Design: Groups of animals (typically rodents and non-rodents) are exposed to the test substance daily for a defined period (28 days, 90 days, or 12-24 months) [25] [28]. A minimum of three dose groups plus a concurrent control group is standard.

- Dose Selection: The high dose should induce clear toxicity but not excessive mortality (typically informed by acute or range-finding studies). The low dose should aim to be a NOAEL, and the mid-dose should produce mild, observable effects.

- Endpoint Monitoring: Throughout the study, animals are closely monitored for clinical signs, body weight, food/water consumption, hematology, clinical biochemistry, and organ weights [28]. At termination, a full histopathological examination of tissues and organs is conducted.

- Data Analysis and NOAEL Identification: All collected data are analyzed statistically and biologically. The NOAEL is identified as the highest dose level that does not produce a statistically significant or biologically adverse increase in any toxicological parameter compared to controls. The LOAEL is the lowest dose level at which such adverse effects are observed, which is usually the dose level immediately above the NOAEL.
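
Once each dose group has been judged adverse or not adverse, reading off the NOAEL and LOAEL is mechanical. The sketch below assumes that judgement has already been made by the study pathologist and toxicologist and is supplied as a boolean per dose level; the function name and input format are illustrative.

```python
from typing import Dict, Optional, Tuple


def identify_noael_loael(adverse_by_dose: Dict[float, bool]
                         ) -> Tuple[Optional[float], Optional[float]]:
    """Return (NOAEL, LOAEL) from a mapping of dose level to adverse-effect call.

    The control group (dose 0) is ignored. The LOAEL is the lowest dose with
    an adverse call; the NOAEL is the highest tested dose below the LOAEL.
    """
    doses = sorted(d for d in adverse_by_dose if d > 0)
    adverse_doses = [d for d in doses if adverse_by_dose[d]]
    loael = adverse_doses[0] if adverse_doses else None
    if loael is None:
        noael = doses[-1] if doses else None  # no adverse effect at any dose
    else:
        below = [d for d in doses if d < loael]
        noael = below[-1] if below else None  # adverse even at the lowest dose
    return noael, loael


# Hypothetical study: control plus three dose levels in mg/kg bw/day.
noael, loael = identify_noael_loael({0.0: False, 1.0: False, 10.0: True, 100.0: True})
```

The `None` results make explicit the two cases where a study fails to bracket the threshold: no NOAEL when the lowest dose is already adverse, and no LOAEL when even the highest dose is not.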

The Scientist's Toolkit: Essential Reagents and Materials

Conducting dose-response studies requires standardized reagents, materials, and biological systems to ensure reproducibility, validity, and regulatory acceptance.

Table 2: Key Research Reagent Solutions for Dose-Response Studies

| Category/Item | Function & Purpose | Technical Specifications & Notes |

|---|---|---|

| Test Substance | The chemical agent whose toxicity is being characterized. | Must be of defined purity, stability, and batch consistency. Prepared in a suitable vehicle (e.g., corn oil, methylcellulose, saline) [28]. |

| Vehicle/Formulation Reagents | To dissolve, suspend, or deliver the test substance at the required concentrations without causing toxicity themselves. | Common examples: Carboxymethylcellulose (suspending agent), Tween-80 (emulsifier), Corn Oil (vehicle for lipophilic compounds), Phosphate-Buffered Saline (aqueous vehicle) [28]. |

| Clinical Pathology Kits | For analyzing in-life toxicity biomarkers in blood (hematology) and serum/plasma (clinical chemistry). | Kits for enzymes (ALT, AST), metabolites (creatinine, BUN), ions, and cell counts. Vital for identifying target organ toxicity. |

| Histopathology Reagents | For tissue preservation, processing, staining, and microscopic evaluation to identify morphological changes. | Includes fixatives (10% Neutral Buffered Formalin), embedding media (paraffin), stains (Hematoxylin and Eosin - H&E), and special stains for specific tissues. |

| Validated Animal Models | Biological systems for in vivo toxicity assessment. | Rodents: Specific strains of rats (Sprague-Dawley, Wistar) and mice (ICR, C57BL/6) [28]. Non-rodents: Beagle dogs, minipigs, non-human primates (for advanced studies). |

| Diet & Bedding | Standardized nutrition and housing to minimize variable physiological responses. | Certified, contaminant-free rodent diets. Sterilized corn cob or aspen bedding. Environmental conditions (temp, humidity, light cycle) are strictly controlled. |

Visualizing Concepts and Workflows

Dose-Response Curve with Key Metrics and Application Flow

Workflow for Acute and Chronic Toxicity Assessment

From Protocol to Practice: Standardized Testing Frameworks and Strategic Study Design

Within the comprehensive landscape of toxicological research, the distinction between acute and chronic toxicity is foundational. Acute toxicity refers to adverse effects occurring shortly after a single or otherwise brief exposure to a substance; effects often appear immediately and can be reversible [29]. In contrast, chronic toxicity results from repeated exposures over a longer period, where effects may be significantly delayed and are often irreversible [30] [29]. This technical guide focuses on the evolving paradigms for assessing acute toxicity, a critical endpoint for initial hazard identification, safety labeling, and emergency response planning.

The traditional cornerstone of acute toxicity assessment has been the in vivo determination of the Lethal Dose 50 (LD50)—the dose that causes death in 50% of tested animals [29]. However, driven by scientific, ethical (the 3Rs principles), and regulatory imperatives, the field is undergoing a transformative shift. This shift is marked by the refinement of traditional animal protocols to reduce suffering and animal numbers and, more significantly, by the development and integration of sophisticated in silico (computational) models designed to predict toxicity based on chemical structure. Framing acute toxicity testing within the broader context of chronic toxicity research is essential; while the exposure scenarios and biological endpoints differ, the ultimate goal is a cohesive, mechanism-based understanding of chemical hazard across all timescales of exposure. The progression from acute to chronic testing represents a continuum from identifying immediate hazards to understanding long-term health risks, with emerging alternative methods offering tools applicable across this spectrum [31].

Defining the Scope: Acute versus Chronic Toxicity

A clear understanding of the operational differences between acute and chronic toxicity is prerequisite to discussing testing frameworks. The table below summarizes the key distinguishing characteristics.

Table 1: Core Characteristics of Acute versus Chronic Toxicity

| Characteristic | Acute Toxicity | Chronic Toxicity |

|---|---|---|

| Exposure Pattern | Single, short-term, or brief repeated exposure within 24 hours [29]. | Repeated, long-term exposure over a significant portion of a lifespan (e.g., 12+ months in rodents) [13]. |

| Onset of Effects | Rapid, often immediate or within hours/days of exposure [30]. | Delayed, with effects manifesting after months or years of exposure [29]. |

| Primary Measured Endpoint | Mortality, often quantified by LD50 (oral, dermal) or LC50 (inhalation) [29]. Observations of severe clinical signs. | Morbidity. Focus on functional impairment, organ pathology, tumor formation, and reproductive effects [13]. |

| Typical Testing Objective | Hazard identification, classification, and labeling (e.g., GHS/CLP categories). Emergency response guidance. | Risk assessment for long-term health effects, establishment of safe exposure limits (e.g., Acceptable Daily Intake). |

| Common Test Guidelines (OECD) | TG 423 (Acute Toxic Class Method), TG 425 (Up-and-Down Procedure). | TG 452 (Chronic Toxicity Studies) [13], TG 451 (Carcinogenicity Studies). |

| Example Agents | Cyanide, phenol, high-concentration solvents [30]. | Heavy metals (e.g., arsenic, lead), tobacco smoke, certain persistent organic pollutants [30] [29]. |

The LD50 value remains a central metric for acute oral toxicity. A lower LD50 indicates greater toxicity [29]. Regulatory frameworks like the Globally Harmonized System (GHS) use LD50 ranges to assign hazard categories (Category 1 being the most toxic). Inherent biological variability means that a single chemical's experimentally derived LD50 can span an order of magnitude, which is a useful point of reference when evaluating the performance of predictive models [32].
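
The mapping from an oral LD50 to a GHS category is a simple range lookup. The sketch below uses the GHS acute oral cut-off values of 5, 50, 300, 2000, and 5000 mg/kg body weight; Category 5 is optional and is not adopted in every jurisdiction (the EU CLP Regulation, for example, stops at Category 4). The function name is an illustrative choice.

```python
def ghs_acute_oral_category(ld50_mg_kg: float) -> str:
    """Map an acute oral LD50 (mg/kg body weight) to a GHS hazard category."""
    if ld50_mg_kg <= 0:
        raise ValueError("LD50 must be positive")
    cutoffs = [
        (5.0, "Category 1"),
        (50.0, "Category 2"),
        (300.0, "Category 3"),
        (2000.0, "Category 4"),
        (5000.0, "Category 5"),  # optional category under GHS
    ]
    for upper_bound, category in cutoffs:
        if ld50_mg_kg <= upper_bound:
            return category
    return "Not classified"


category = ghs_acute_oral_category(56.0)  # -> "Category 3"
```

Because experimental LD50 values for one chemical can differ by up to an order of magnitude, a value near a cut-off can plausibly fall into either adjacent category.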

Refined In Vivo Testing Methodologies

Refined in vivo methods aim to minimize animal suffering and reduce the number of animals used while generating reliable data for acute hazard classification.

OECD Test Guideline 423 (Acute Toxic Class Method): This is a stepwise procedure using a small number of animals (typically 3 per step) of a single sex. Animals are dosed sequentially at fixed dose levels (5, 50, 300, and 2000 mg/kg body weight). The outcome is not a precise LD50 but a determination of the dose range that causes mortality, allowing for direct classification into one of the predefined GHS toxicity classes. This method significantly reduces animal use compared to the older, traditional LD50 protocols.

OECD Test Guideline 425 (Up-and-Down Procedure): This statistical method involves dosing animals one at a time or in small groups at a minimum of 48-hour intervals. The dose for each subsequent animal is adjusted up or down based on the outcome (death or survival) of the previous animal. A computer program analyzes the sequence of outcomes to estimate the LD50 and its confidence intervals. This method can further reduce animal numbers, particularly for substances of low or very high toxicity.

Key Considerations: These refined tests are specifically designed for hazard classification and not for providing detailed mechanistic insights into the mode of toxic action. Their continued relevance lies in providing in vivo anchor points for validating non-animal methods and fulfilling specific regulatory requirements where alternative methods are not yet accepted [32].

In Silico Predictive Models for Acute Toxicity

In silico toxicology uses computational models to predict the toxicological effects of chemicals from their molecular structure. For acute toxicity, Quantitative Structure-Activity Relationship (QSAR) models are paramount [33].

Core Modeling Approaches:

- Quantitative Structure-Activity Relationship (QSAR): Mathematical models that correlate descriptors of chemical structure (e.g., molecular weight, lipophilicity, presence of functional groups) with a biological activity, such as LD50 [33].

- Read-Across: A non-quantitative approach where the known properties of one or more "source" chemicals are used to predict the properties of a similar "target" chemical based on structural similarity.

- Machine Learning (ML) & AI: Advanced algorithms (e.g., random forests, neural networks) that can identify complex, non-linear patterns in large chemical datasets to improve predictive accuracy [33] [34].
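
Read-across can be illustrated with a nearest-analogue lookup based on Tanimoto similarity between structural fingerprints. The sketch below represents each fingerprint as a set of integer feature identifiers, uses an arbitrary similarity threshold of 0.7, and works on made-up analogue data. It shows the principle only and is not how any of the tools named in this guide are implemented.

```python
from typing import List, Optional, Set, Tuple

Analogue = Tuple[str, Set[int], str]  # (name, fingerprint, known classification)


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Shared features divided by total distinct features."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def read_across(target: Set[int], sources: List[Analogue],
                min_similarity: float = 0.7) -> Optional[Tuple[str, float, str]]:
    """Return (name, similarity, classification) of the most similar source
    chemical above the threshold, or None when no analogue is close enough."""
    best: Optional[Tuple[str, float, str]] = None
    for name, fingerprint, classification in sources:
        similarity = tanimoto(target, fingerprint)
        if similarity >= min_similarity and (best is None or similarity > best[1]):
            best = (name, similarity, classification)
    return best


# Hypothetical fingerprints and classifications, for illustration only.
sources = [("analogue_A", {1, 2, 3, 4, 5}, "Category 4"),
           ("analogue_B", {1, 9}, "Category 2")]
prediction = read_across({1, 2, 3, 4}, sources)
```

Returning `None` when no analogue passes the threshold keeps the method from silently extrapolating to dissimilar chemistry.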

Leading Tools and Performance:

Table 2: Key In Silico Models for Acute Oral Toxicity Prediction

| Model | Description | Key Output | Reported Performance & Notes |

|---|---|---|---|

| CATMoS (Collaborative Acute Toxicity Modeling Suite) [35] [32] | A freely available, consensus QSAR model within the OPERA suite. Developed via an international consortium. | Predicts GHS categories, EPA categories, and point estimates for LD50 with confidence metrics. | On REACH chemicals, high-reliability predictions match or are adjacent to experimental category [32]. Requires expert judgment for regulatory application; sole reliance can lead to misclassification [35]. |

| AOrTA (Acute Oral Toxicity Alert) [36] | A global QSAR model with additional local models for specific chemical classes (e.g., esters, alcohols). | Predicts CLP/GHS classification categories. Includes prediction refinement based on closest analogues. | Designed for regulatory use under the QSAR Assessment Framework. Uses a high-quality, curated dataset from ECHA dossiers. |

| Leadscope Model Applier [34] | A commercial software platform with extensive toxicology databases and predictive models. | Provides toxicity profiles, including acute oral toxicity predictions aligned with CLP regulations. | 2025.0 release added 2,000+ new curated acute toxicity records from REACH, improving model robustness [34]. |

Critical Model Components:

- Applicability Domain (AD): A defined chemical space within which the model's predictions are considered reliable. Chemicals outside the AD (e.g., inorganic compounds, mixtures, novel scaffolds) have uncertain predictions [32] [36].

- Validation: Models must be internally and externally validated to assess their predictive power and avoid overfitting.

- Expert Judgement: As emphasized in recent evaluations, computational output must be integrated with expert analysis. This includes assessing the quality of the training data, reviewing nearest structural analogues, and evaluating mechanistic plausibility [35] [32]. A pure "black-box" approach is not considered sufficient for regulatory decision-making.
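
One of the simplest ways to define an applicability domain is a descriptor range check: a query chemical is treated as in-domain only if every descriptor lies within the range spanned by the training set. The sketch below implements that bounding-box idea with hypothetical descriptor names and values. Published models typically use more refined definitions, so this is an assumption made for illustration.

```python
from typing import Dict, List


def in_applicability_domain(query: Dict[str, float],
                            training: List[Dict[str, float]]) -> bool:
    """True if every query descriptor lies within the training-set range."""
    if not training:
        return False
    for name, value in query.items():
        values = [row[name] for row in training]
        if not (min(values) <= value <= max(values)):
            return False
    return True


# Hypothetical training descriptors: lipophilicity and molecular weight.
training_set = [{"logp": 0.5, "mol_weight": 120.0},
                {"logp": 3.2, "mol_weight": 310.0},
                {"logp": 1.8, "mol_weight": 205.0}]
inside = in_applicability_domain({"logp": 2.0, "mol_weight": 250.0}, training_set)
outside = in_applicability_domain({"logp": 6.5, "mol_weight": 250.0}, training_set)
```

A prediction for an out-of-domain chemical is not necessarily wrong, but its reliability cannot be inferred from the model's validation statistics.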

Integrated Testing Strategies and Workflows

The future of acute toxicity assessment lies not in a single method but in Integrated Approaches to Testing and Assessment (IATA). These strategies combine multiple lines of evidence (in silico, in vitro, and refined in vivo) within a weight-of-evidence framework to reach a conclusion.

The In Silico Forensic Toxicology Workflow provides a template for a systematic integrated approach [33]:

- Data Curation & Problem Formulation: Gather all existing chemical, toxicological, and study data.

- Model Selection & Computational Analysis: Apply relevant QSAR and read-across tools, ensuring the chemical falls within the models' applicability domains.

- Expert Review & Validation: Critically assess predictions against known biology, analogue data, and any available in vitro data. This step is crucial for regulatory acceptance.

- Targeted Experimental Confirmation: Use computational predictions to guide focused, hypothesis-driven in vitro assays or, if absolutely necessary, a refined in vivo study to resolve uncertainties.

- Final Assessment & Reporting: Synthesize all evidence into a transparent, documented hazard assessment.

Integrated Testing Strategy Workflow [33] [32]

This integrated paradigm underscores the complementary roles of different methods. In silico tools provide rapid, cost-effective screening and mechanistic hypotheses. Targeted in vivo tests provide definitive data for complex or high-priority cases where uncertainties remain. This synergy is central to modern regulatory science, as seen in the EPA's strategic vision for implementing alternative approaches [37].

Market Trends and the Evolving Testing Landscape

The early toxicity testing market, which includes acute toxicity assessment, is experiencing significant growth and transformation, driven by technological and regulatory forces.

Table 3: Market Trends in Early Toxicity Testing

| Trend | Description | Implication for Acute Toxicity |
|---|---|---|
| Market Growth | The global market was valued at $1.47 billion in 2024 and is projected to grow at a CAGR of 8.3% to $2.19 billion by 2029 [31]. | Indicates robust investment and demand for more efficient, predictive testing solutions. |
| Rise of In Silico & NAMs | A major trend is the adoption of in silico models and other New Approach Methodologies (NAMs) [31]. | Directly supports the replacement and reduction of animal use for acute endpoints, aligning with EU REACH goals [35] [32]. |
| Advanced Alternative Models | Emergence of sophisticated platforms like zebrafish embryo screening (e.g., ZBEScreen) and organ-on-a-chip models [31]. | Provides in vivo-like systemic biology in a higher-throughput, more ethical format for screening acute systemic toxicity. |
| Personalized Medicine | Growing focus on tailored therapies increases demand for precise safety assessments [31]. | Pushes toxicity testing towards more mechanistic, pathway-based understanding, bridging acute and chronic effects. |

These trends highlight a clear industrial and scientific shift away from standalone animal tests and towards integrated, knowledge-driven testing strategies. The acquisition of specialized toxicology firms (e.g., Scantox's acquisition of Gentronix in 2024) to expand in silico and genetic toxicology capabilities further exemplifies this shift [31].
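The market projection in Table 3 follows from the standard compound annual growth rate formula, value_end = value_start × (1 + CAGR)^years. The short check below reproduces the arithmetic from the figures quoted in the table; the function name `project_value` is introduced here for illustration only.

```python
def project_value(start: float, cagr: float, years: int) -> float:
    """Compound a starting value at a constant annual growth rate."""
    return start * (1 + cagr) ** years


# Figures from Table 3: $1.47 billion in 2024, 8.3% CAGR, horizon 2029.
projected = project_value(1.47, 0.083, 2029 - 2024)
print(f"{projected:.2f}")  # 2.19 (billion USD)
```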

The framework for acute toxicity testing is evolving from a reliance on observational animal mortality studies to a predictive, science-based paradigm anchored in computational toxicology and defined integrated strategies. As demonstrated by tools like CATMoS and AOrTA, in silico models have achieved a level of performance where they can, with appropriate expert oversight, serve as replacements for in vivo tests in specific regulatory contexts [35] [36]. However, challenges remain, including the need for transparent validation, clear guidance on expert judgment, and expansion of applicability domains to cover more complex chemistries.

Future progress will depend on:

- Enhancing Model Interpretability: Moving beyond "black box" predictions to models that provide mechanistic insights into the Mode of Action (MoA) for acute toxicity [32].

- Bridging Acute and Chronic Effects: Developing integrated models that can use early, acute perturbations in key pathways to predict potential long-term adverse outcomes.

- Regulatory Harmonization: Continued collaboration between industry, academia, and regulators to develop internationally accepted standards for validating and applying these new approaches [37].

In the context of a broader thesis on toxicity testing, acute toxicity assessment is no longer an isolated endpoint but the first, critical node in a network of toxicological understanding. Its modernization through refined in vivo methods and robust in silico models paves the way for a more efficient, ethical, and ultimately more human-relevant safety science ecosystem.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Reagents and Tools for Acute Toxicity Research

| Item / Solution | Function / Purpose | Typical Application |
|---|---|---|
| OECD TG 423 & 425 Protocols | Standardized experimental guidelines for refined in vivo acute oral toxicity testing. | Conducting regulatory-accepted animal studies for hazard classification with minimal animal use. |
| CATMoS or AOrTA Software | Freely available QSAR platforms for predicting acute oral toxicity and GHS/CLP categories. | Initial hazard screening, read-across justification, and as part of a weight-of-evidence assessment [35] [36]. |
| Commercial In Silico Platforms (e.g., Leadscope) | Comprehensive software suites with extensive databases and predictive models for multiple toxicity endpoints [34]. | Generating detailed toxicity profiles, identifying structural alerts, and supporting regulatory submissions. |
| Zebrafish Embryos (Zebrafish Models) | A vertebrate model offering high-throughput, real-time assessment of developmental and systemic toxicity in a whole organism [31]. | Early screening for acute systemic toxicity and organ-specific effects, serving as a bridge between in silico and mammalian in vivo studies. |
| Defined Chemical Libraries for Validation | Curated sets of chemicals with high-quality, reference in vivo acute toxicity data (e.g., from ECHA REACH dossiers). | Benchmarking and validating the performance of new in silico models or integrated testing strategies [32] [36]. |
| Toxicogenomics Assay Kits | Tools for measuring gene expression changes related to specific toxicological pathways (e.g., oxidative stress, inflammation). | Investigating the mechanistic basis of acute toxicity predictions from in silico models or observed in alternative in vivo models. |

Complementary Roles in Modern Toxicology

The assessment of chemical and pharmaceutical safety relies on a tiered toxicological strategy that progresses from acute to sub-chronic and finally chronic studies. This progression frames any comparison of acute and chronic toxicity testing. Acute toxicity studies evaluate adverse effects resulting from a single or short-term exposure, focusing on immediate, often severe outcomes like mortality or overt organ damage [30]. In contrast, chronic toxicity studies investigate the adverse health effects of prolonged, repeated exposure, which may involve subtle, cumulative damage, organ dysfunction, or cancer [30] [38]. Sub-chronic studies, typically lasting 1-3 months, serve as a critical bridge between these two, identifying target organs and providing dose-ranging data to inform the design of longer-term chronic studies [39].

This guide details the technical design of sub-chronic and chronic studies, focusing on the core pillars of species selection, study duration, and endpoint analysis. These studies are mandated to support late-stage clinical trials and new drug applications, providing the data necessary to characterize risks associated with long-term human use [23]. The design must be scientifically rigorous, ethically conscious (adhering to the 3Rs principles: Replacement, Reduction, and Refinement), and compliant with global regulatory guidelines [40] [41].

Strategic Species Selection and Justification

Selecting the most appropriate animal species is the cornerstone of a predictive nonclinical safety program. The primary goal is to use a species that responds to the test substance in a manner pharmacologically and toxicologically relevant to humans [41].

Foundational Principles for Selection

For small molecule pharmaceuticals, the key considerations are comparative pharmacokinetics and metabolism. The species chosen should metabolize the compound in a way that produces a similar profile of active and inactive metabolites as expected in humans [40] [41]. For biologics (e.g., monoclonal antibodies, recombinant proteins), selection is fundamentally based on pharmacological relevance. The test species must express the target epitope with sufficient homology to the human target to allow for meaningful binding and elicit a similar downstream pharmacological response [40] [41]. The use of non-relevant species is discouraged as it may yield misleading results.
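For small molecules, one simple way to make the metabolite comparison concrete is to compute what fraction of the expected human metabolites each candidate species also produces. The sketch below is illustrative only: the metric, the function name `human_metabolite_coverage`, and the metabolite labels (M1 to M5) are assumptions made for this example, not a method prescribed by the cited sources, and a real assessment would also weigh exposure levels and pharmacokinetics.

```python
def human_metabolite_coverage(human: set[str], species: set[str]) -> float:
    """Fraction of expected human metabolites also observed in the species."""
    if not human:
        raise ValueError("human metabolite set must not be empty")
    return len(human & species) / len(human)


# Hypothetical in vitro metabolite profiles (labels are placeholders).
human_profile = {"M1", "M2", "M3", "M4"}
candidates = {
    "rat": {"M1", "M2", "M5"},
    "dog": {"M1", "M2", "M3"},
    "minipig": {"M2"},
}

# Rank candidate species from highest to lowest coverage.
ranked = sorted(
    candidates,
    key=lambda name: human_metabolite_coverage(human_profile, candidates[name]),
    reverse=True,
)
print(ranked)  # ['dog', 'rat', 'minipig']
```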

Industry practice has led to the predominant use of a limited set of species. A collaborative survey by the NC3Rs and the Association of the British Pharmaceutical Industry (ABPI) provided quantitative data on species use across drug modalities [40].

Table 1: Species Selection Patterns by Drug Modality (Based on NC3Rs/ABPI Survey Data) [40]

| Drug Modality | Primary Rodent Species | Primary Non-Rodent Species | % Tested in Two Species | Key Justification Drivers |
|---|---|---|---|---|
| Small Molecules | Rat (Predominant) | Dog (Common), NHP (Case-by-case) | 97% | Metabolism, PK, Regulatory Expectation, Historical Data |
| Monoclonal Antibodies | Rat (Minority, ~17%) | NHP (Majority, ~96%) | ~35% | Pharmacological Relevance (Cross-reactivity), PK/ADA |
| Recombinant Proteins | Rat (~60%) | NHP (~87%), Dog | 80% | Pharmacological Relevance, PK |
| Synthetic Peptides | Rat (~92%) | Dog (~50%), NHP (~50%) | 100% | Pharmacological Relevance, Metabolism |
| Antibody-Drug Conjugates | Rat (~66%) | NHP (100%) | 83% | Pharmacological Relevance, PK/ADA, Toxin Metabolism |

The Species Selection Workflow

The process is iterative and science-driven, moving from in silico and in vitro assessments to in vivo confirmation.

Diagram: A science-driven workflow for selecting toxicology species.

Defining Study Duration and Regulatory Alignment

Study duration is dictated by the intended clinical use and specific regulatory guidelines, with flexibility based on modality and risk assessment.

Standard Duration Requirements

The International Council for Harmonisation (ICH) provides the core guidance. For small molecules (ICH M3(R2)), the standard requires a 6-month study in rodents and a 9-month study in non-rodents to support clinical trials longer than six months [23] [39]. For biologics (ICH S6(R1)), a 6-month study in one pharmacologically relevant species (usually non-rodent) is typically sufficient [23]. Sub-chronic studies are generally 3 months (13 weeks) in duration and support clinical trials up to one month [39].

Evolving Flexibility and Duration Optimization

Recent data and regulatory discussions support more flexible, science-based approaches to reduce animal use and accelerate development:

- Monoclonal Antibodies: An industry consortium analysis found that for over 85% of mAbs, studies of ≥6 months revealed no new toxicities of human concern beyond those seen in shorter (e.g., 3-month) studies [23]. A Weight-of-Evidence (WoE) model has been developed to justify a 3-month study for lower-risk mAbs; it recommended the appropriate strategy in ~90% of cases [23].

- Non-Rodent Duration: A key disparity exists between regions for small molecules (6-month EU vs. 9-month US/Japan). Industry is advocating for wider acceptance of the 6-month non-rodent study globally, aligning with biologic practice and offering significant resource and animal-use reductions [23].

- Oncology Drugs (ICH S9): For advanced cancer therapeutics, shorter durations (e.g., 3 months) in both rodent and non-rodent are generally acceptable [23].

Table 2: Standard and Flexible Chronic Toxicity Study Durations [23] [39]

| Guideline / Modality | Traditional Rodent Duration | Traditional Non-Rodent Duration | Emerging Flexible Approach |
|---|---|---|---|
| ICH M3(R2) (Small Molecules) | 6 months | 9 months (Global) / 6 months (EU) | Advocacy for global 6-month non-rodent study [23]. |
| ICH S6(R1) (Biologics) | Not always required | 6 months | WoE model for 3-month study for lower-risk mAbs [23]. |
| ICH S9 (Advanced Cancer) | 3 months | 3 months | Standard practice. |
| Sub-chronic (General) | 3 months (13 weeks) | 3 months (13 weeks) | Standard practice for clinical support up to 1 month. |
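For planning purposes, the traditional durations in Table 2 can be encoded as a simple lookup. The sketch below is a convenience illustration, not a regulatory tool: the names `Durations`, `TRADITIONAL`, and `non_rodent_duration` are hypothetical, and the values are copied directly from the table above.

```python
from typing import NamedTuple, Optional


class Durations(NamedTuple):
    rodent_months: Optional[int]  # None where a rodent study is not always required
    non_rodent_months: int


# Traditional durations as listed in Table 2.
TRADITIONAL = {
    "ICH M3(R2)": Durations(6, 9),
    "ICH S6(R1)": Durations(None, 6),
    "ICH S9": Durations(3, 3),
    "Sub-chronic": Durations(3, 3),
}


def non_rodent_duration(guideline: str, region: str = "global") -> int:
    """Return the traditional non-rodent study duration in months."""
    durations = TRADITIONAL[guideline]
    # Table 2 lists a 6-month non-rodent study for small molecules in the EU.
    if guideline == "ICH M3(R2)" and region.upper() == "EU":
        return 6
    return durations.non_rodent_months


print(non_rodent_duration("ICH M3(R2)"))        # 9
print(non_rodent_duration("ICH M3(R2)", "EU"))  # 6
```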

Comprehensive Endpoints and Analytical Methodologies

Chronic and sub-chronic studies integrate a wide array of endpoints to detect and characterize adverse effects. The core methodology involves comparing treated groups (low, mid, high dose) to a concurrent control group.

Core In-Life and Terminal Endpoints

- Clinical Observations & Ophthalmology: Daily checks for morbidity/mortality, detailed physical exams weekly, and periodic ophthalmologic examinations [39].

- Body Weight and Food Consumption: Measured and recorded at least weekly. Failure to gain weight normally is a sensitive indicator of systemic toxicity [30].

- Clinical Pathology: Hematology, clinical chemistry, and urinalysis are performed at interim intervals and study termination. Key parameters include liver enzymes (ALT, AST), kidney markers (BUN, creatinine), electrolytes, and blood cell counts [23].

- Anatomic Pathology: The cornerstone of chronic studies. A full necropsy is performed on all animals. Protocol: All major organs are weighed, and weights are reported both as absolute values and relative to body weight and brain weight. Tissues are preserved in 10% neutral buffered formalin, processed, embedded in paraffin, sectioned, stained with Hematoxylin and Eosin (H&E), and examined microscopically by a board-certified veterinary pathologist [39]. Special stains (e.g., Masson's trichrome for fibrosis, immunohistochemistry for specific biomarkers) are used as needed.

- Toxicokinetics: Serial blood sampling to measure drug exposure (AUC, Cmax, Tmax) and confirm dose proportionality. This links observed effects to systemic exposure levels [23].
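The toxicokinetic parameters named above can be derived from a concentration-time profile with a few lines of code. The sketch below is a minimal illustration: it assumes the linear trapezoidal rule for AUC from the first to the last sampling time (the source does not specify a method), and the function name `tk_summary` and the sample data are hypothetical.

```python
from typing import Sequence, Tuple


def tk_summary(times_h: Sequence[float], conc: Sequence[float]) -> Tuple[float, float, float]:
    """Return (AUC, Cmax, Tmax) for one concentration-time profile.

    AUC is computed by the linear trapezoidal rule over the sampled interval.
    """
    if len(times_h) != len(conc) or len(times_h) < 2:
        raise ValueError("need at least two paired time/concentration points")
    points = list(zip(times_h, conc))
    auc = sum(
        (t2 - t1) * (c1 + c2) / 2
        for (t1, c1), (t2, c2) in zip(points, points[1:])
    )
    cmax = max(conc)
    tmax = times_h[list(conc).index(cmax)]  # first time at which Cmax occurs
    return auc, cmax, tmax


# Hypothetical profile: time in hours, concentration in ng/mL.
auc, cmax, tmax = tk_summary([0, 1, 2, 4, 8], [0, 10, 8, 4, 1])
print(auc, cmax, tmax)  # 36.0 10 1
```

Dividing AUC by the administered dose for each dose group and comparing the ratios gives a quick first look at dose proportionality: roughly constant ratios suggest proportional exposure.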

Specialized Endpoints for Chronic Studies

- Recovery Period Assessment: To evaluate the reversibility of findings, a subset of animals from control and high-dose groups is maintained without dosing for a period (e.g., 4-8 weeks) after the main dosing phase concludes, then subjected to full terminal analysis [23] [39].

- Immunogenicity: Critical for biologics. The formation of anti-drug antibodies (ADA) is monitored as it can alter pharmacokinetics, reduce efficacy, or cause immune complex disease [39].

- Biomarkers: Specific, quantifiable indicators of organ injury or pharmacological effect (e.g., cardiac troponins, urinary kidney injury molecule-1) may be incorporated to enhance sensitivity.

Experimental Protocol: A Standard Chronic Toxicity Study Outline

Title: 6-Month Repeated-Dose Oral Toxicity Study of [Test Article] in Sprague-Dawley Rats with a 4-Week Recovery Period.

Objective: To characterize the toxicological profile of [Test Article] following daily oral administration for 6 months.

Test System: Sprague-Dawley rats, 7-8 weeks old at dosing initiation.

Groups: 4 groups (Vehicle Control, Low, Mid, High Dose), 20/sex/group for main study, plus 5/sex/group for recovery (Control and High only).

Dosing: Daily oral gavage, dose volume based on most recent body weight.

Endpoint Schedule:

- Daily: Mortality, clinical signs.

- Weekly: Body weight, food consumption.