Accelerating Evidence Synthesis: Practical Strategies to Reduce Time Requirements for Systematic Reviews in Toxicology

Abstract

Systematic reviews are foundational to evidence-based toxicology but are notoriously time-consuming, with traditional projects taking over a year to complete on average [citation:4]. This creates a critical bottleneck for research and regulatory decision-making. This article synthesizes current strategies to expedite the systematic review process without compromising scientific rigor. It begins by examining the core reasons for extended timelines, including complex problem formulation and the challenge of screening vast literature yields. The discussion then explores modern methodological accelerants, such as AI-assisted screening and 'right-sized' protocols, highlighting the emerging 'human-in-the-loop' model debated at recent toxicology forums [citation:3]. Furthermore, the article provides troubleshooting guidance for common inefficiencies and evaluates validation frameworks, including the COSTER recommendations, to ensure accelerated methods remain reliable [citation:8]. The synthesis aims to equip researchers and drug development professionals with a validated toolkit to produce high-quality, timely evidence syntheses, thereby accelerating the pace of toxicological research and safety assessment.

Why Are Systematic Reviews in Toxicology So Time-Consuming? Analyzing the Core Bottlenecks

The transition from narrative reviews to systematic reviews represents a fundamental shift towards rigor, transparency, and reproducibility in evidence-based toxicology. Pioneered in clinical medicine, systematic reviews provide a methodologically rigorous framework for summarizing all available evidence pertaining to a precisely defined research question [1]. In toxicology, this approach is critical for informing regulatory decisions, risk assessments, and safety evaluations, moving beyond expert opinion to an objective analysis of the collective evidence [2].

Traditional narrative reviews, while valuable for providing broad expert perspectives, often suffer from unstated methodologies, unclear literature selection criteria, and potential for selective citation, increasing the risk of bias and making independent verification difficult [2]. In contrast, a systematic review follows a predefined, peer-reviewed protocol that details every step: from formulating the question and searching multiple literature databases to selecting studies, assessing their quality, and synthesizing findings, sometimes quantitatively via meta-analysis [1]. This process, though historically more resource-intensive, is essential for minimizing error and producing reliable, defensible conclusions that can support high-stakes public health and regulatory decisions [2].

The drive towards systematic reviews in toxicology is part of the broader Evidence-Based Toxicology (EBT) movement, which seeks to apply core principles of evidence-based medicine to toxicological questions [2]. A core challenge, however, has been the significant time and resource investment required. A systematic review often takes more than a year to complete, compared with months for a narrative review, and requires specialized expertise in the subject science, informatics, and data analysis [2]. This article and the technical guidance that follows focus on reducing these time requirements without compromising methodological rigor, using automation, clear protocols, and efficient workflows to make robust evidence synthesis more accessible and timely for researchers and drug development professionals.

Technical Support Center: Troubleshooting Guides and FAQs

This section addresses common practical challenges researchers face when conducting systematic reviews in toxicology, offering solutions grounded in methodological best practices and emerging automation technologies.

FAQ 1: Our review team is overwhelmed by the volume of search results. How can we screen studies more efficiently without missing relevant ones?

- Problem: Initial database searches in toxicology often return thousands of citations. Manual title/abstract screening is the most time-consuming phase of a review [3].

- Solution: Implement semi-automated screening tools that use machine learning (ML).

- Actionable Guide:

- Select a Tool: Choose a dedicated systematic review platform like Rayyan or DistillerSR, which incorporate active learning algorithms [4].

- Train the Algorithm: Start by manually screening a random sample of 100-200 references, labeling them as "Include" or "Exclude."

- Prioritize Screening: The ML algorithm will rank the remaining citations, placing those most similar to your "Include" studies at the top of your screening queue.

- Validate Performance: Continue screening until you pass a point of diminishing returns (e.g., after screening 50 consecutive irrelevant studies). Studies have shown this approach can reduce the number of abstracts needing manual review by 55-64% while maintaining high recall [3].
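The stopping heuristic in the last step can be sketched in a few lines. This is a minimal illustration and not the logic of Rayyan or DistillerSR; the function name `screened_until_stop` is hypothetical, and the default threshold of 50 is simply the example value given above.

```python
from typing import Iterable


def screened_until_stop(decisions: Iterable[bool], max_consecutive_excludes: int = 50) -> int:
    """Return how many ranked records are screened before the stopping rule fires.

    `decisions` holds the human decision for each record (True = include,
    False = exclude), in the order proposed by the ranking model.
    """
    consecutive = 0
    screened = 0
    for is_include in decisions:
        screened += 1
        # Any include resets the run of consecutive excludes.
        consecutive = 0 if is_include else consecutive + 1
        if consecutive >= max_consecutive_excludes:
            break
    return screened
```

A heuristic like this does not guarantee a particular recall, so the threshold used should be reported in the review's methods.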

FAQ 2: We need to update a living systematic review (LSR) but cannot manually re-run searches every month. Is there a way to automate search updates?

- Problem: Maintaining a living review requires constant surveillance for new literature, which is unsustainable manually.

- Solution: Set up automated search alerts and use dedicated LSR platforms.

- Actionable Guide:

- Save and Register Searches: Save your final, executed search string in each database (PubMed, Embase, etc.). Use the "Alert" function to receive weekly or monthly email updates of new results.

- Implement a Dedicated Workflow: Use a review production platform (e.g., DistillerSR) that allows you to save search strings and periodically re-execute them against integrated database APIs, importing new results directly into your screening project.

- Automate Deduplication: Configure the platform to automatically identify and flag duplicate references from the new search results against your already-screened corpus.

- Streamline Integration: New, unique citations are automatically fed into your pre-trained semi-automated screening workflow (see FAQ 1), requiring your team to screen only the highest-priority new entries.
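For teams that script their own updates, the periodic re-execution step might look like the sketch below. It only builds a request URL for the NCBI E-utilities ESearch endpoint and filters out record IDs that were already screened; it is not how DistillerSR or any other platform implements updates. The function names and default values are assumptions for illustration, and NCBI's current usage policies should be checked before sending requests.

```python
from urllib.parse import urlencode

EUTILS_ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def build_update_url(search_string: str, days_back: int = 30, max_records: int = 500) -> str:
    """Build an ESearch URL limited to records added in the last `days_back` days."""
    params = {
        "db": "pubmed",
        "term": search_string,   # the saved, final search string
        "datetype": "edat",      # filter on the date the record entered the database
        "reldate": days_back,    # only records from the last N days
        "retmax": max_records,
        "retmode": "json",
    }
    return f"{EUTILS_ESEARCH}?{urlencode(params)}"


def unseen_ids(fetched_ids: list[str], already_screened: set[str]) -> list[str]:
    """Keep only record IDs that are not yet in the screened corpus."""
    return [record_id for record_id in fetched_ids if record_id not in already_screened]
```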

FAQ 3: How do we handle and synthesize data from highly heterogeneous toxicology studies (e.g., different species, exposure routes, endpoints)?

- Problem: Toxicological evidence is often fragmented across in vivo, in vitro, and in silico streams with diverse experimental designs, making direct synthesis challenging [2].

- Solution: Prioritize a structured qualitative synthesis and use explicit frameworks for evidence integration.

- Actionable Guide:

- Design a Detailed Extraction Template: Before data extraction, create a template that captures all relevant variables: test system (species, cell line, model), test article characterization, exposure regimen, endpoint(s), results, and study reliability indicators.

- Use a Predefined Framework: Employ a framework like OHAT (Office of Health Assessment and Translation) or GRADE (Grading of Recommendations Assessment, Development, and Evaluation) to guide your assessment of the body of evidence [2]. These frameworks provide rules for rating confidence based on risk of bias, consistency, directness, and precision.

- Tabulate and Visualize: Present extracted data in structured tables and evidence maps. Use tables to compare study characteristics and outcomes side-by-side. Create diagrams (e.g., heat maps) to visually represent the presence, direction, and consistency of effects across different evidence streams.

- State Limitations Explicitly: In your synthesis, clearly document the heterogeneity and explain how it influences the interpretability and generalizability of the conclusions. A quantitative meta-analysis may only be appropriate for a highly homogenous subset of studies.
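One way to make the extraction template and evidence map concrete is a small typed record plus a tabulation function. The field names and category labels below are illustrative assumptions, not a prescribed OHAT or GRADE schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ExtractionRecord:
    study_id: str
    evidence_stream: str   # "in vivo", "in vitro", or "in silico"
    test_system: str       # species, cell line, or model
    exposure_route: str
    endpoint: str
    effect_direction: str  # "increase", "decrease", or "no effect"
    risk_of_bias: str      # e.g. "low", "some concerns", "high"


def evidence_map(records: list[ExtractionRecord]) -> Counter:
    """Count studies per (evidence stream, endpoint, effect direction) cell."""
    return Counter((r.evidence_stream, r.endpoint, r.effect_direction) for r in records)
```

The resulting counts can be pivoted into a heat map, with one row per endpoint and one column per evidence stream.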

Experimental Protocols for Key Methodologies

Protocol 1: Conducting a High-Throughput In Vitro to In Vivo Extrapolation (HT-IVIVE) Screening for Hepatotoxicity

- Objective: To rapidly screen a library of chemical compounds for potential human hepatotoxicity using a tiered in vitro-in silico approach.

- Background: This protocol aligns with New Approach Methodologies (NAMs) that reduce time and cost by prioritizing compounds for deeper investigation [5].

- Materials: HepG2 or primary human hepatocyte cultures; 96- or 384-well plates; test compound library; high-content imaging system; LC-MS/MS for analytics; genomic/proteomic analysis tools (optional); IVIVE computational modeling software (e.g., GastroPlus, Simcyp).

- Methodology:

- Tier 1 - Viability Screening: Plate cells in multi-well plates. Treat with a range of compound concentrations (e.g., 1 nM – 100 µM) for 24-48 hours. Assess cell viability using high-throughput assays (ATP content, calcein-AM).

- Tier 2 - Mechanistic Profiling: For compounds showing cytotoxicity, conduct high-content imaging to assess specific injury phenotypes: mitochondrial membrane potential (TMRE staining), oxidative stress (CellROX staining), lipid accumulation (BODIPY staining), and nuclear morphology (Hoechst staining).

- Tier 3 - Transcriptomics/Proteomics: For priority compounds, perform targeted RNA-seq or proteomic analysis to identify perturbed pathways (e.g., oxidative stress response, bile acid metabolism, fibrosis).

- In Silico IVIVE: Use pharmacokinetic modeling software to extrapolate in vitro bioactivity concentrations (e.g., AC50 values) to a human equivalent oral dose. Input parameters include in vitro activity data, compound physicochemical properties, and human physiological parameters.

- Data Integration & Prioritization: Rank compounds based on the potency in vitro, severity of mechanistic alerts, and the magnitude of the extrapolated human dose relative to anticipated exposure.
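The extrapolation and ranking steps can be illustrated with a simplified reverse-dosimetry calculation. The sketch assumes linear kinetics, so that the oral equivalent dose is the in vitro AC50 divided by the steady-state plasma concentration predicted for a 1 mg/kg/day dose. It is not the calculation performed by GastroPlus or Simcyp, which use full physiologically based models; the function names and all example numbers are hypothetical.

```python
def oral_equivalent_dose(ac50_um: float, css_um_at_unit_dose: float) -> float:
    """Oral equivalent dose in mg/kg/day, assuming linear kinetics.

    ac50_um: in vitro half-maximal activity concentration (uM).
    css_um_at_unit_dose: predicted steady-state plasma concentration (uM)
        for a dose of 1 mg/kg/day.
    """
    if css_um_at_unit_dose <= 0:
        raise ValueError("steady-state concentration must be positive")
    return ac50_um / css_um_at_unit_dose


def bioactivity_exposure_ratio(oed_mg_kg_day: float, exposure_mg_kg_day: float) -> float:
    """Margin between the extrapolated dose and the anticipated exposure."""
    if exposure_mg_kg_day <= 0:
        raise ValueError("exposure must be positive")
    return oed_mg_kg_day / exposure_mg_kg_day


def rank_by_margin(compounds: list[tuple[str, float, float, float]]) -> list[tuple[str, float]]:
    """Rank (name, AC50, Css, exposure) tuples; the smallest margin comes first."""
    scored = [
        (name, bioactivity_exposure_ratio(oral_equivalent_dose(ac50, css), exposure))
        for name, ac50, css, exposure in compounds
    ]
    return sorted(scored, key=lambda item: item[1])
```

Compounds with the smallest ratio have the narrowest margin between bioactive dose and anticipated exposure, so they would be prioritized for deeper investigation.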

Protocol 2: Systematic Review with Integrated Risk of Bias (RoB) Assessment for Animal Studies

- Objective: To systematically identify, evaluate, and synthesize evidence from animal studies on a specified toxicological endpoint, with explicit assessment of study reliability.

- Background: This protocol provides a transparent alternative to narrative reviews, directly addressing concerns about bias and reproducibility [1].

- Materials: DistillerSR or Covidence software; pre-published review protocol (PROSPERO registration); SYRCLE's Risk of Bias tool for animal studies; data extraction forms; statistical software for meta-analysis (e.g., R, RevMan).

- Methodology:

- Protocol Development: Define the PECO question (Population, Exposure, Comparator, Outcome). Detail search strategy, inclusion/exclusion criteria, and synthesis plan. Register on PROSPERO.

- Search & Screening: Execute search across MEDLINE, Embase, ToxFile, etc. Use semi-automated screening (see FAQ 1) to select studies.

- Data Extraction & RoB Assessment: Extract study data using a pilot-tested form. Concurrently, two independent reviewers assess each study using the SYRCLE RoB tool, which covers sequence generation, blinding, selective outcome reporting, and other biases. Disagreements are resolved by consensus.

- Evidence Synthesis: Group studies by outcome and species/strain. Perform meta-analysis if studies are sufficiently homogeneous. If not, perform a qualitative synthesis weighted by the RoB assessment. Use the GRADE approach for animal studies to rate the overall confidence in the evidence.

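- Reporting: Follow the PRISMA reporting guideline for the review itself to ensure complete transparency of methods and findings. The ARRIVE guidelines, which address the reporting of primary animal experiments, can serve as a reference when judging how completely the included studies are reported.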
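For the evidence synthesis step, the decision about whether studies are "sufficiently homogeneous" is often informed by a heterogeneity statistic. The sketch below shows a standard fixed-effect inverse-variance pooling with Cochran's Q and I²; it is an illustration only, the function name is hypothetical, and a real analysis would normally use R or RevMan as listed in the materials.

```python
import math


def pool_fixed_effect(effects: list[float], std_errors: list[float]) -> tuple[float, float, float, float]:
    """Return (pooled effect, pooled SE, Cochran's Q, I-squared in percent)."""
    if len(effects) != len(std_errors) or not effects:
        raise ValueError("effects and std_errors must be non-empty and equal in length")
    weights = [1.0 / se ** 2 for se in std_errors]  # inverse-variance weights
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I-squared: share of variability beyond what chance alone would produce.
    i_squared = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return pooled, pooled_se, q, i_squared
```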

Table 1: Summary of Key Efficiency Metrics for Systematic Review Automation

| Automation Technology | Application Phase | Reported Efficiency Gain | Key Benefit |
|---|---|---|---|
| Machine Learning (Rayyan, ASReview) | Title/Abstract Screening | 55-64% reduction in abstracts to screen [3]; Work Saved over Sampling at 95% recall (WSS@95%) up to 90% [3] | Drastically reduces the most labor-intensive phase |
| Natural Language Processing (NLP) | Data Extraction | Variable; can auto-extract PICO elements from text | Reduces human error and time in manual extraction |
| Living Systematic Review Platforms | Search Updates & Integration | Enables monthly updates instead of full re-review | Maintains review currency with manageable effort |
| Automated De-duplication | Reference Management | Near-instantaneous processing of thousands of citations | Eliminates a tedious manual task |
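The WSS@95% metric in the table can be computed from a ranked list of records with known labels. The sketch below follows the usual definition, the fraction of records left unscreened when the target recall is reached minus the fraction that random sampling would be expected to leave at that recall; the function name is hypothetical.

```python
import math


def work_saved_over_sampling(ranked_labels: list[bool], recall: float = 0.95) -> float:
    """WSS at the given recall for records listed in model-ranked order.

    ranked_labels: True for relevant records, False for irrelevant ones.
    """
    n = len(ranked_labels)
    total_relevant = sum(ranked_labels)
    if n == 0 or total_relevant == 0:
        raise ValueError("need at least one record and one relevant record")
    # Number of relevant records that must be found (epsilon guards float error).
    needed = max(1, math.ceil(recall * total_relevant - 1e-9))
    found = 0
    for screened, is_relevant in enumerate(ranked_labels, start=1):
        found += is_relevant
        if found >= needed:
            return (n - screened) / n - (1.0 - recall)
    raise AssertionError("unreachable: all relevant records are in the list")
```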

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Modern Toxicology

| Item | Function in Toxicology Research | Example/Notes |
|---|---|---|
| Primary Human Hepatocytes | Gold-standard in vitro model for hepatic metabolism and toxicity studies; maintain cytochrome P450 activity. | Essential for hepatotoxicity and metabolism studies; cryopreserved formats increase accessibility. |
| High-Content Screening (HCS) Assay Kits | Multiplexed assays to quantify multiple cellular endpoints (viability, oxidative stress, apoptosis) simultaneously via automated imaging. | Kits for mitochondrial health, DNA damage, and steatosis accelerate mechanistic profiling. |
| ToxCast/Tox21 Bioactivity Library | Publicly available database of high-throughput screening results for thousands of chemicals across hundreds of biological pathways. | Serves as a primary data source for predictive modeling and chemical prioritization [5]. |
| Physiologically Based Kinetic (PBK) Modeling Software | In silico tools to perform IVIVE, predicting tissue concentrations and pharmacokinetics in humans from in vitro data. | Software like GastroPlus or Simcyp is critical for translating NAMs data to human-relevant doses [5]. |
| Systematic Review Automation Software | Platforms that streamline and partially automate literature screening, data extraction, and reporting. | Tools like DistillerSR or Rayyan are fundamental for implementing efficient, reproducible evidence synthesis [4] [3]. |
| SYRCLE's Risk of Bias Tool | A specialized tool to assess the methodological quality and risk of bias in animal studies, improving evidence reliability assessment. | Critical for ensuring rigor in systematic reviews of preclinical toxicology data [2]. |

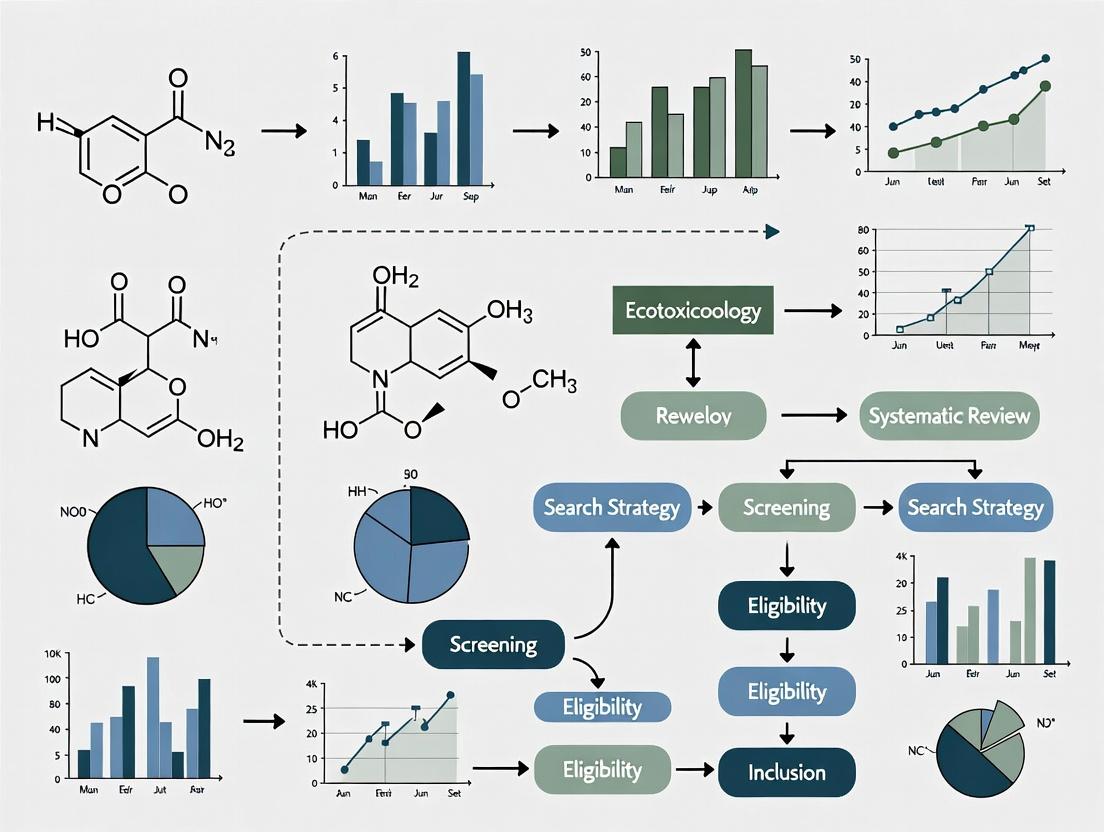

Visualizing Workflows and Decisions

Diagram: Traditional vs. Automated Systematic Review Workflow

Diagram: Decision Path for Selecting a Review Strategy

Systematic reviews represent the gold standard for evidence synthesis in toxicology, offering a transparent, reproducible, and methodologically rigorous means to summarize available evidence on a precisely framed research question [2]. In the broader movement toward evidence-based toxicology (EBT), systematic reviews are essential for informing regulatory decisions and policy [2]. However, this rigor comes at a significant cost: time. Compared to traditional narrative reviews, which may be completed in months, a full systematic review typically requires more than one year to complete and demands expertise not only in the scientific subject matter but also in systematic review methodology, literature search strategies, and data analysis [2].

This time burden is a major obstacle to the wider adoption of systematic reviews in toxicology. The process is inherently labor-intensive, involving steps such as developing protocols, executing comprehensive searches across multiple databases, screening thousands of references, extracting data, and performing critical appraisals [2]. This article quantifies this burden, presents data on average durations, and provides a technical support center with targeted strategies and tools designed to drastically reduce the time and resource requirements for conducting high-quality systematic reviews in toxicology.

Quantitative Analysis: Time and Resource Data

The following tables summarize the comparative time investment and break down the distribution of effort across a typical systematic review project in toxicology.

Table 1: Comparison of Narrative vs. Systematic Review Characteristics and Time Burden

| Feature | Narrative Review | Systematic Review |
|---|---|---|
| Research Question | Broad and often informal [2]. | Specified, focused, and explicit [2]. |
| Literature Search | Not typically specified or comprehensive [2]. | Comprehensive, multi-database search with explicit strategy [2]. |
| Study Selection | Unspecified, often subjective [2]. | Explicit, pre-defined selection criteria [2]. |
| Quality Assessment | Usually absent or informal [2]. | Critical appraisal using explicit criteria [2]. |
| Synthesis | Qualitative summary [2]. | Qualitative and often quantitative (meta-analysis) summary [2]. |
| Typical Duration | Months [2]. | >1 year [2]. |
| Required Expertise | Subject matter science [2]. | Science, systematic review methods, literature search, data analysis [2]. |
| Relative Cost | Low [2]. | Moderate to High [2]. |

Table 2: Quantified Time Investments and Potential Savings in Key Systematic Review Stages

| Review Stage | Traditional Manual Process (Estimated Time) | Optimized Process with Automation Tools | Key Supporting Evidence & Tools |
|---|---|---|---|
| De-duplication of Search Results | Manual checking can be a major time sink, with variable accuracy (Recall: ~88.65%) [6]. | Automated tools like Deduklick can achieve near-perfect accuracy (Recall: ~99.51%, Precision: ~100%) in minutes [6]. | Deduklick uses NLP and rule-based algorithms to normalize metadata and calculate similarity scores [6]. |
| Title/Abstract Screening | Reviewers manually screen thousands of citations, a process taking weeks [7]. | Machine learning classifiers can prioritize likely relevant articles, reducing manual screening by ≥50% for some topics [7]. | A voting perceptron-based classifier was shown to effectively triage articles for drug efficacy reviews [7]. |
| Full-Text Review & Data Extraction | Extremely time-consuming, requiring detailed reading and data entry from PDFs. | AI-assisted tools can help with data location and extraction, though human verification remains critical. | Workflow optimization principles emphasize eliminating redundant steps and parallelizing tasks [8]. |
| Overall Project Timeline | Median time to submission can be around 40 weeks for a team [6]. | Strategic automation can save hundreds of person-hours, potentially reducing timelines by weeks [6]. | Integration of tools, clear process ownership, and continuous monitoring are key [8] [9]. |

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

Q1: Our initial literature search from multiple databases returns over 10,000 references, and de-duplication seems overwhelming. What is the most efficient and reliable method? A: Manual de-duplication, often using features in reference managers, is time-consuming and prone to error, with studies showing a sensitivity (recall) as low as 51-57% for tools like EndNote when used alone [6]. We recommend using a dedicated, automated de-duplication tool such as Deduklick [6]. This tool uses natural language processing (NLP) to normalize metadata (authors, journals, titles) and a rule-based algorithm to identify duplicates with superior accuracy (99.51% recall, 100% precision) [6]. This transforms a days-long manual task into one that can be completed in minutes with greater reliability.

Q2: We need to screen thousands of abstracts for relevance. Are there valid ways to use automation to speed this up without missing key studies? A: Yes. Machine learning-based classification systems can act as a "triage" tool. These systems are trained on your initial manual screening decisions to learn what distinguishes a relevant from an irrelevant citation for your specific review question [7]. In practice, such systems have been shown to reduce the number of abstracts needing manual review by 50% or more for certain topics while aiming to maintain 95% recall (i.e., missing at most 5% of relevant studies) [7]. This allows your team to focus manual effort on the most promising citations.

Q3: How can we accurately measure and document the time spent on our review to identify bottlenecks? A: Accurately measuring time use is challenging. For structured activities like team meetings, use passive data collection (e.g., calendar records of duration and attendance) [10]. For irregular tasks like screening or data extraction, active data collection is needed. The most reliable method is to integrate time tracking into existing workflows; if screeners already use a web-based platform, adding a time-logging function is efficient [10]. When asking team members for self-reported estimates, frame questions around "typical" tasks (e.g., "How long does it take to screen a typical abstract?") rather than recalling aggregate time over a vague period, as this yields more accurate data [10].
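Once time is being logged, a simple aggregation is enough to show where effort concentrates. The sketch below assumes each log entry is a hypothetical `(stage, minutes)` pair exported from whatever platform the team uses.

```python
from collections import defaultdict


def minutes_per_stage(log_entries: list[tuple[str, float]]) -> dict[str, float]:
    """Sum logged minutes per review stage, largest total first."""
    totals: dict[str, float] = defaultdict(float)
    for stage, minutes in log_entries:
        totals[stage] += minutes
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))
```

The first key in the returned dictionary is the stage that consumed the most logged time, which is a natural starting point for a bottleneck review.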

Q4: Our review process feels chaotic, with unclear hand-offs and frequent delays. How can we structure our workflow? A: Implement the core principles of workflow optimization [8] [9].

- Map Your Current Workflow: Visually diagram each step from protocol to publication, noting who is responsible and average time taken [8].

- Identify Bottlenecks: Look for stages where work piles up, such as a single person responsible for quality checking all extractions [8].

- Set Clear Goals & Owners: Assign a dedicated owner to each major stage (e.g., screening, extraction) who is responsible for monitoring progress [8].

- Eliminate Redundancies: Question if every approval step or data re-entry is necessary [8].

- Automate Repetitive Tasks: Implement tools for de-duplication, initial screening prioritization, and report generation where possible [8] [7] [6].

- Monitor and Iterate: Use a shared dashboard to track progress against timelines and hold regular brief check-ins to solve problems quickly [8] [9].

Q5: We are concerned about the quality and consistency of data extraction, which is slow. What can we do? A: Quality and speed in data extraction are improved through standardization and training.

- Develop a Detailed, Piloted Extraction Form: Create a data extraction form in your chosen software (e.g., Covidence, Rayyan, SRDR+) with explicit, coded fields. Pilot this form on 5-10 studies by all extractors to ensure instructions are clear.

- Dual Extract a Subset: Have two reviewers independently extract data from a critical subset of studies (e.g., 20%). Calculate inter-rater reliability, discuss discrepancies to align understanding, and then proceed with single extraction (plus spot-checking) for the remainder.

- Use Text Mining Assistants: Emerging AI tools can help locate specific data points (e.g., sample size, dose) within PDFs, but all outputs must be meticulously verified by a human expert. The primary gain is in navigation, not cognition.
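For the dual-extraction step, inter-rater reliability on a categorical field is commonly summarized with Cohen's kappa. A minimal sketch, assuming each reviewer's decisions are supplied as equal-length lists of labels (the function name is hypothetical):

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected by chance, from each rater's label frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n ** 2
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)
```

Kappa corrects raw percent agreement for the agreement expected by chance, so it is more informative than percent agreement when one category dominates.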

Troubleshooting Guide for Common Technical Issues

Problem: Search strategy is too broad, yielding an unmanageable number of irrelevant results.

- Solution: Consult with an information specialist (librarian) experienced in systematic reviews. Use controlled vocabularies (MeSH, Emtree) and field tags effectively. Apply validated methodology filters for study design (e.g., for animal studies) but be aware they may not be as robust as those for clinical trials [2].
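Structured search strings are easier to review and tighten when they are assembled from concept blocks: synonyms joined with OR inside a concept, and concepts joined with AND. The sketch below builds such a string; the function names are hypothetical, and the terms in the usage example are illustrative only, not a validated strategy.

```python
def or_block(terms: list[str]) -> str:
    """Join the synonyms for one concept with OR."""
    if not terms:
        raise ValueError("each concept needs at least one term")
    return "(" + " OR ".join(terms) + ")"


def build_query(concepts: list[list[str]]) -> str:
    """Join concept blocks with AND."""
    return " AND ".join(or_block(terms) for terms in concepts)


# Illustrative usage with PubMed-style field tags:
query = build_query([
    ['"bisphenol A"[tiab]', "BPA[tiab]"],
    ['"Liver"[MeSH Terms]', "hepat*[tiab]"],
])
```

Adding a further concept block, for example a study-design filter, narrows the result set without rewriting the existing blocks.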

Problem: Managing and sharing PDFs and extraction data across the team is disorganized.

- Solution: Use a dedicated systematic review project management platform. These cloud-based platforms (e.g., Covidence, Rayyan, DistillerSR) centralize references, enable blind screening with conflict resolution, host custom extraction forms, and provide audit trails.

Problem: The team is stuck on assessing the risk of bias (quality) of non-randomized animal studies.

- Solution: Avoid applying tools designed for human clinical trials (e.g., Cochrane RoB) without modification. Use a tool developed for toxicological and animal evidence, such as the Office of Health Assessment and Translation (OHAT) Risk of Bias Rating Tool or SYRCLE's risk of bias tool for animal studies. Ensure all reviewers are trained on the tool using practice studies.

Experimental Protocols & Methodologies for Time-Reduction Strategies

Protocol 1: Implementing an Automated De-duplication Workflow Using Deduklick

Objective: To remove duplicate citations from merged search results with maximum accuracy and minimal manual effort.

Materials: Merged citation file in RIS format; access to Deduklick or similar automated de-duplication software.

Procedure:

- Export the complete, merged set of citations from all database searches into a single RIS file.

- Upload the RIS file to the Deduklick platform.

- The algorithm automatically processes the references:

- Preprocessing: Normalizes author names, journal titles, DOIs, and page numbers; translates non-English titles [6].

- Similarity Calculation: Computes similarity scores between all references using the Levenshtein distance [6].

- Rule-Based Clustering: Clusters similar references and applies conservative, pre-defined rules to mark duplicates, prioritizing the retention of unique references [6].

- Download the output, which includes two folders: one with unique references and one with the identified duplicates.

- Quality Check (Recommended): Manually review a random sample of the "duplicates" folder (e.g., 50 references) to verify they are true duplicates. Also, check the "unique" folder for any obvious remaining duplicates (e.g., from a key study known to be in the results).
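The normalization and similarity steps can be illustrated with a small, self-contained sketch. This is not Deduklick's implementation: it compares titles only, uses a plain Levenshtein distance scaled to a 0-1 similarity, and the 0.9 threshold and all function names are assumptions chosen for illustration.

```python
import re
import unicodedata


def normalize_title(title: str) -> str:
    """Lowercase, strip accents and punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    text = re.sub(r"[^a-z0-9 ]+", " ", text.lower())
    return " ".join(text.split())


def levenshtein(a: str, b: str) -> int:
    """Edit distance computed row by row with dynamic programming."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(previous[j] + 1, current[j - 1] + 1, previous[j - 1] + cost))
        previous = current
    return previous[-1]


def similarity(title_a: str, title_b: str) -> float:
    """Similarity in [0, 1] between two normalized titles."""
    a, b = normalize_title(title_a), normalize_title(title_b)
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1.0 - levenshtein(a, b) / longest


def likely_duplicates(titles: list[str], threshold: float = 0.9) -> list[tuple[int, int]]:
    """Return index pairs whose title similarity meets the threshold."""
    return [
        (i, j)
        for i in range(len(titles))
        for j in range(i + 1, len(titles))
        if similarity(titles[i], titles[j]) >= threshold
    ]
```

The pairwise comparison is quadratic in the number of references, so for libraries of many thousands of records a production tool would need blocking or clustering, and would compare additional fields such as DOI, authors, and pages.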

Protocol 2: Implementing Machine Learning-Assisted Abstract Screening

Objective: To reduce the manual abstract screening workload by training a classifier to prioritize likely relevant citations.

Materials: Reference management software; a machine learning screening tool (e.g., Rayyan AI, Abstrackr); a set of at least 500 initially screened references (labeled as "Include" or "Exclude").

Procedure:

- Initial Manual Screening & Training Set: After de-duplication, a reviewer screens a substantial, random sample of abstracts (e.g., 20-30% or a minimum of 500) against the inclusion/exclusion criteria, making a definitive decision for each.

- Model Training: Import these labeled references into the chosen AI tool. The tool's algorithm (e.g., a support vector machine or perceptron model) learns the linguistic patterns associated with "included" vs. "excluded" references [7].

- Prediction & Prioritization: The model then predicts the relevance of the remaining unscreened abstracts. It ranks them from most likely to least likely to be relevant.

- Prioritized Screening: Reviewers screen the "likely relevant" pile first. The system can be set to halt screening after a consecutive batch of low-relevance abstracts (e.g., 100) yields no inclusions, allowing the team to confidently discard the lowest-ranked portion. All "Include" decisions must be made by a human.

- Validation: The performance of the model (recall, precision) should be monitored and reported in the review's methods section.
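The validation step amounts to comparing the model's flagged records with human decisions on a labeled set. A minimal sketch, assuming records are identified by hypothetical string IDs:

```python
def recall_precision(human_includes: set[str], model_flagged: set[str]) -> tuple[float, float]:
    """Return (recall, precision) of the model against human include decisions."""
    true_positives = len(human_includes & model_flagged)
    recall = true_positives / len(human_includes) if human_includes else 0.0
    precision = true_positives / len(model_flagged) if model_flagged else 0.0
    return recall, precision
```

Recall is the figure that matters most for a systematic review, since a missed relevant study cannot be recovered later, whereas low precision only costs extra screening time.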

Diagram 1: Automated Screening Workflow. This workflow integrates machine learning to prioritize abstracts, potentially reducing manual screening burden [7].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Tools and Resources for Efficient Systematic Reviews

| Item Name | Category | Function & Application | Key Benefit for Time Reduction |
|---|---|---|---|
| Deduklick [6] | Software Algorithm | Automated de-duplication of citation libraries using NLP and rule-based clustering. | Replaces days of manual work with minutes of processing at near-perfect accuracy. |
| Machine Learning Classifiers [7] | AI Tool | Ranks citations by predicted relevance based on training from initial screening decisions. | Can halve the manual screening workload by allowing reviewers to focus on high-probability articles. |
| Systematic Review Platforms (e.g., Covidence, Rayyan) | Project Management Software | Cloud-based platforms for collaborative reference screening, data extraction, and conflict resolution. | Centralizes workflow, eliminates version control issues, and provides structured progress tracking. |
| OHAT Risk of Bias Tool | Methodological Tool | A standardized tool for assessing risk of bias in human and animal studies of toxicology. | Provides a consistent, pre-defined framework for critical appraisal, speeding up and standardizing evaluations. |
| Reference Management Software with API (e.g., EndNote, Zotero) | Citation Software | Manages references and PDFs; APIs allow integration with other screening and analysis tools. | Serves as the central repository and can automate data flow between search databases and review platforms. |
| Text Mining / NLP Libraries (e.g., spaCy, scispaCy) | Programming Library | For developing custom scripts to locate and extract specific data points from PDF text. | Automates the most tedious aspects of full-text review and data extraction (requires technical expertise). |

Optimized Workflow Diagrams for Systematic Reviews

Diagram 2: Optimized Systematic Review Workflow. This end-to-end workflow highlights phases where automation and structured project management are integrated to reduce time burden [8] [7] [6].

In the field of toxicology, where the timely assessment of chemical safety is critical for public health and regulatory decision-making, the systematic review (SR) is a cornerstone of evidence synthesis [11]. However, conducting a traditional SR is a notoriously resource-intensive endeavor, often requiring 12 months or more to complete [12]. This significant time investment creates a major bottleneck, delaying the translation of research into safety guidelines and effective policies.

This technical support center is designed within the context of a thesis focused on reducing time requirements for systematic reviews in toxicology research. It targets the most labor-intensive phases—searching, screening, and data extraction—by providing researchers, scientists, and drug development professionals with practical troubleshooting guides, optimized protocols, and evidence-based strategies to enhance efficiency without compromising rigor [13] [14].

Technical Support Center: FAQs for Systematic Review Workflows

This section addresses common logistical and methodological questions faced by researchers undertaking systematic reviews.

Q1: How long does a systematic review actually take, and why is it so time-consuming? A traditional systematic review is a major research project that typically takes at least 12 months to conduct from protocol development to final manuscript [12]. The timeline is extensive due to the rigorous, multi-stage process designed to minimize bias. The most labor-intensive phases are the systematic searching of multiple databases, the manual screening of thousands of records and full-text articles, and the detailed extraction of data from included studies [13] [15].

Q2: Why is a team essential, and what roles are required? A team is essential to avoid bias, distribute the significant workload, and provide necessary expertise [12]. A typical team should include:

- Content Experts: Provide deep knowledge of the toxicology field.

- Methodology Experts: Design the review protocol and ensure rigorous standards.

- Information Specialist/Librarian: Develop and execute comprehensive, reproducible search strategies across multiple databases [12].

- Data Manager: Handle references and data using specialized software.

- Statistician: Conduct meta-analyses if required.

Q3: What is the role of an information specialist or librarian, and should they be an author? A librarian or information specialist is crucial for developing a high-quality, reproducible search strategy—a foundational step in the SR. Their role includes advising on databases, designing complex search syntax, managing results, and documenting the entire search process [12]. According to systematic review standards, contributing to key methodological steps like the search strategy warrants authorship [12].

Q4: What is the difference between a systematic review and a scoping review? Choosing the right review type is critical for managing scope and workload.

- Systematic Review: Aims to answer a specific, focused research question (e.g., "What is the effect of chemical X on liver enzyme Y in rodent models?"). It involves pre-defined eligibility criteria, rigorous quality assessment of studies, and often a quantitative synthesis (meta-analysis) [15].

- Scoping Review: Aims to map the key concepts and evidence base for a broader topic (e.g., "What in vitro methodologies exist for assessing the hepatotoxicity of perfluoroalkyl substances?"). It has broader inclusion criteria, does not typically include quality assessment, and results in a narrative or thematic synthesis [15].

Table 1: Comparison of Systematic Review and Scoping Review Characteristics [15].

| Indicator | Systematic Review (SR) | Scoping Review (ScR) |

|---|---|---|

| Purpose | Answer a specific research question by summarizing existing evidence. | Map existing literature, identify key concepts, sources, and knowledge gaps. |

| Research Question | Focused and clearly defined. | Broader, exploratory, or multi-part. |

| Inclusion Criteria | Narrow and defined a priori. | Broader and more flexible. |

| Quality Assessment | Required (critical appraisal). | Optional. |

| Synthesis | Quantitative (meta-analysis) or qualitative synthesis of results. | Narrative/descriptive synthesis to map evidence. |

Q5: What are the core steps in conducting a systematic review? The process follows a standardized sequence to ensure completeness and transparency [13] [12]:

- Frame the research question.

- Develop and register a protocol.

- Conduct a comprehensive, systematic literature search.

- Screen records (title/abstract, then full-text) against eligibility criteria.

- Critically appraise the risk of bias in included studies.

- Extract relevant data from included studies.

- Synthesize and analyze the evidence (narratively or via meta-analysis).

- Interpret findings and report the review.

Troubleshooting Common Workflow Bottlenecks

Problem 1: Unmanageable Search Yield

- Symptoms: Initial database searches return an overwhelming number of records (e.g., tens of thousands), making screening infeasible.

- Solutions:

- Refine the PICO: Revisit and narrow your Population, Intervention/Exposure, Comparator, and Outcome elements with your team [14].

- Consult a Specialist: Work with an information specialist to add relevant, precise MeSH/Emtree terms and apply appropriate search filters (e.g., by study type, species) [12].

- Pilot Search Strategy: Test and refine your search in one database before running it across all sources.
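One way to make search refinement repeatable is to assemble the Boolean string from explicit concept blocks, so that narrowing a PECO element means editing one list. The sketch below is illustrative only: the terms are hypothetical placeholders, `build_query` is not part of any library, and the `[tiab]` title/abstract field tag is PubMed syntax that would need translating for other databases.

```python
def build_query(concepts):
    """Join synonyms with OR inside each concept block and AND across blocks."""
    blocks = []
    for terms in concepts.values():
        if not terms:
            continue  # an empty block would otherwise produce "()"
        tagged = [f'"{term}"[tiab]' for term in terms]
        blocks.append("(" + " OR ".join(tagged) + ")")
    return " AND ".join(blocks)


# Hypothetical concept blocks for a pilot search (placeholders, not a validated strategy).
concepts = {
    "exposure": ["perfluorooctanoic acid", "PFOA"],
    "outcome": ["steatosis", "liver weight"],
    "population": ["rat", "rats"],
}

print(build_query(concepts))
```

Adding a concept block narrows the search; adding synonyms to a block broadens it. Logging the dictionary alongside the yield from each pilot run gives the team a record of why the final string looks the way it does.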

Problem 2: Inconsistent Screening and Low Inter-Rater Reliability

- Symptoms: High disagreement between independent reviewers during the title/abstract or full-text screening phase, leading to delays and need for extensive reconciliation.

- Solutions:

- Develop a Detailed Codebook: Create explicit, written inclusion/exclusion criteria with practical examples and edge-case rules before screening begins [13].

- Conduct Calibration Exercises: All reviewers should independently screen the same pilot set of 50-100 records, compare results, discuss discrepancies, and refine the codebook until high agreement (e.g., Kappa > 0.8) is achieved.

- Use Dedicated Software: Employ systematic review software (e.g., Covidence, Rayyan) which is designed to manage blinded, dual screening workflows and track decisions.
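The agreement threshold in the calibration exercise can be checked with a few lines of code. The sketch below computes Cohen's kappa for two reviewers from their decisions on the same pilot set; the decision lists are invented for illustration, and screening platforms may report this statistic directly.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of records.")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    labels = set(count_a) | set(count_b)
    # Chance agreement: product of each rater's marginal proportions, summed over labels.
    expected = sum(count_a[label] * count_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Invented pilot set of 100 records: 94 agreements, 6 disagreements.
reviewer_1 = ["include"] * 20 + ["exclude"] * 80
reviewer_2 = ["include"] * 18 + ["exclude"] * 2 + ["exclude"] * 76 + ["include"] * 4

kappa = cohens_kappa(reviewer_1, reviewer_2)
print(f"kappa = {kappa:.2f}")
```

In this invented example raw agreement is 94% but kappa is about 0.82, which shows why kappa is the better calibration target when most records are excluded: high raw agreement is partly produced by chance.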

Problem 3: Slow, Error-Prone Data Extraction

- Symptoms: The data extraction phase takes weeks, data forms are incomplete or inconsistent, and validation between extractors reveals numerous discrepancies.

- Solutions:

- Design a Pre-Piloted Extraction Form: Build a structured form in your SR software or spreadsheet before extraction starts. It should include clear definitions for every variable.

- Automate Where Possible: For high-volume, repetitive tasks, investigate automation tools. For example, in omics-based toxicology reviews, automated workstations can process 192 samples in the time it takes to manually process 50, drastically reducing hands-on time and improving consistency [16].

- Extract in Duplicate: Have two reviewers extract data independently from each study, then resolve differences. This is mandatory for key outcome data.

Optimizing the Labor-Intensive Phases: Protocols and Visual Workflows

The Traditional Systematic Review Workflow

The following diagram illustrates the standard, largely manual workflow, highlighting stages where time burdens are highest.

Diagram 1: Traditional SR workflow with high-intensity phases.

Optimized Workflow with Integrated Automation & NAMs

This diagram models a modern, efficient workflow for toxicology SRs that integrates automation and New Approach Methodologies (NAMs) to target bottlenecks [11] [16].

Diagram 2: Optimized workflow leveraging technology and NAMs.

Detailed Protocol: Implementing Semi-Automated Screening

This protocol targets the most labor-intensive screening phase [13] [14].

- Objective: To reduce the manual burden of title/abstract screening by using machine learning prioritization while maintaining methodological rigor.

- Materials: Systematic review software with AI screening capabilities (e.g., ASReview, Rayyan AI), pre-piloted inclusion/exclusion codebook.

- Methodology:

- Seed Set Creation: After de-duplication, reviewers independently screen a random sample of at least 200 records. These human-coded "relevant" and "irrelevant" records form the seed set to train the algorithm.

- Algorithm Training & Prioritization: The AI model is trained on the seed set. It then re-ranks the entire unscreened bibliography, placing records it predicts as relevant at the top of the screening queue.

- Human-in-the-Loop Screening: Reviewers screen the AI-prioritized list sequentially. The model continuously updates its predictions based on new human decisions.

- Stopping Rule: Screening can be stopped using a pre-defined rule (e.g., after reviewing 100 consecutive irrelevant records), with the understanding that a small proportion of relevant records in the unscreened tail may be missed—a trade-off for efficiency that must be justified in the protocol.

- Validation: A second reviewer should screen a sample of records from the AI-excluded tail to quantify the potential miss rate and validate the stopping rule.
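The stopping rule and the tail validation step can be sketched as follows. This is a simplified illustration under stated assumptions: it takes the reviewers' decisions in the order the tool presented them (it does not reproduce any tool's ranking model), and it uses a Wilson score interval, which is one reasonable choice rather than a prescribed method, to bound the proportion of relevant records left in the unscreened tail.

```python
import math


def screen_until_stop(ranked_decisions, max_consecutive_irrelevant=100):
    """Apply the consecutive-irrelevant stopping rule.

    ranked_decisions: human decisions (True = relevant) in prioritized order.
    Returns (records_screened, relevant_found).
    """
    run = screened = found = 0
    for is_relevant in ranked_decisions:
        screened += 1
        if is_relevant:
            found += 1
            run = 0  # a relevant record resets the run of irrelevant ones
        else:
            run += 1
            if run >= max_consecutive_irrelevant:
                break
    return screened, found


def tail_miss_rate(sample_decisions, z=1.96):
    """Observed proportion of relevant records in a tail sample and its Wilson upper bound."""
    n = len(sample_decisions)
    if n == 0:
        raise ValueError("The validation sample is empty.")
    p = sum(sample_decisions) / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return p, centre + half


# Toy example with a threshold of 3 instead of 100 to keep it short.
print(screen_until_stop([True, False, True] + [False] * 5, max_consecutive_irrelevant=3))

# Second reviewer finds no relevant records in a random sample of 100 tail records.
rate, upper = tail_miss_rate([False] * 100)
print(f"observed = {rate:.3f}, upper 95% bound = {upper:.3f}")
```

A sample with zero relevant records does not prove the tail is empty; the upper bound shows how large the miss rate could plausibly be, which is the figure to report when justifying the stopping rule in the protocol.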

The Scientist's Toolkit: Research Reagent Solutions for Modern Toxicology Reviews

Modern toxicology systematic reviews increasingly incorporate evidence from New Approach Methodologies (NAMs) like in vitro and omics studies [11] [16]. Understanding the key tools in these studies is essential for accurate data extraction and appraisal.

Table 2: Key Research Reagent Solutions in Omics-Driven Toxicology [16].

| Item | Function in Toxicology Research | Role in Systematic Review Efficiency |

|---|---|---|

| Automated Liquid Handling Workstations | Perform high-throughput, precise pipetting for sample preparation (e.g., metabolite extraction). | Enables generation of large, consistent NAM datasets. Reviewers extract data from studies with standardized, high-quality methods. |

| Targeted & Untargeted Metabolomics Assays | Quantify known metabolites or discover novel metabolic changes in response to toxicants. | Provides rich, mechanistic outcome data for synthesis. Automated data output formats can facilitate machine-readable extraction. |

| Multi-Omics Integration Platforms | Combine data from metabolomics, transcriptomics, and proteomics for a systems-level view of toxicity. | Presents complex, structured data that can be appraised and extracted as cohesive units, revealing adverse outcome pathways. |

| Model Organisms (Zebrafish, Daphnia) | Provide human-relevant toxicology data in a high-throughput, ethically preferable system. | Expands the evidence base beyond rodent studies. Data from standardized models may be more homogeneous, aiding synthesis. |

| Cohort Samples & Biobanks | Provide well-characterized biological samples for analyzing real-world exposure effects. | Allows reviewers to include human observational data with biomarker validation, bridging NAMs and population health outcomes. |

Deconstructing the systematic review timeline reveals that the phases of screening and data extraction impose the greatest manual burden on researchers [13] [12]. The thesis that these time requirements can be reduced is supported by tangible strategies: forming skilled multi-disciplinary teams, leveraging specialized software for workflow management, and strategically implementing automation—particularly in screening and in processing data from advanced NAMs [12] [16].

The future of efficient evidence synthesis in toxicology lies in hybrid workflows that combine irreplaceable human expertise in critical appraisal and interpretation with technological tools that handle repetitive, high-volume tasks. By adopting the troubleshooting guides, optimized protocols, and toolkit awareness outlined in this support center, researchers can accelerate the pace of safety evaluation, thereby more swiftly informing regulatory science and protecting public health [11].

Central Thesis: Traditional systematic reviews in toxicology are critically hampered by time-intensive processes, often taking over a year to complete, which delays risk assessment and decision-making [2]. This technical support center provides targeted solutions for accelerating these reviews by addressing three core complexities: formulating precise PECO criteria, efficiently mapping multiple evidence streams, and integrating data for toxicological specificity.

Troubleshooting Guide 1: PECO Criteria Formulation

A poorly constructed PECO (Population, Exposure, Comparator, Outcomes) statement is the most common source of delay, leading to irrelevant search results, unnecessary screening labor, and ambiguous inclusion decisions [17].

Frequently Asked Questions (FAQs)

Q1: Our initial literature search is returning far too many irrelevant results. Where did we go wrong?

- A: This typically indicates an overly broad PECO, especially in the Exposure (E) or Outcomes (O) domains. Reframe your question using specific scenarios. For example, instead of "What is the effect of chemical X?", ask "What is the effect of an exposure to ≥ 80 mg/kg/day of chemical X compared to < 10 mg/kg/day on liver weight in adult female Sprague-Dawley rats?" [17]. This specificity sharply focuses the search.

Q2: How do we define a meaningful "Comparator" for an environmental exposure?

- A: The comparator is frequently the most challenging element. Use a framework to select the most appropriate approach based on what is known [17]. See the table below for common scenarios and corrections.

Q3: We need to integrate both animal and human studies. How do we frame a single PECO?

Common PECO Errors and Corrections

Table 1: Troubleshooting common PECO formulation errors based on established frameworks [17].

| Error Type | Vague Example | Corrected, Actionable Example | Scenario Applied [17] |

|---|---|---|---|

| Uncertain Comparator | "Effect of noise exposure..." | "Effect of the highest noise exposure tertile compared to the lowest tertile..." | Scenario 2: Using data-driven cut-offs. |

| Unquantified Exposure | "Effect of high dose of chemical Y..." | "Effect of an oral dose of ≥ 100 mg/kg/day of chemical Y..." | Scenario 4: Using a pre-defined toxicological cut-off. |

| Unspecific Outcome | "Effect on neurological health..." | "Effect on motor coordination as measured by rotarod latency to fall..." | Applicable to all scenarios; requires precise endpoint definition. |

Experimental Protocol: Systematic Review Problem Formulation

Objective: To develop a precise, answerable PECO question that minimizes downstream screening workload.

Materials: Internal expertise, existing assessment reports (e.g., ATSDR profiles, IRIS plans [19]), exposure data.

Methodology:

- Assemble Team: Include a subject matter expert, a systematic review methodologist, and a risk assessor/end-user.

- Gather Context: Review previous assessments for the chemical or endpoint of interest to understand known effect levels and data gaps [19] [20].

- Draft PECO Elements: Independently draft proposed definitions for P, E, C, and O.

- Scenario Workshop: Using the five-scenario framework [17], debate which scenario matches your review's purpose (e.g., exploring association vs. defining a safe level).

- Iterate and Pilot: Finalize the PECO statement and test it with a pilot literature search in one database. Refine if precision or recall is inadequate.
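The final "Iterate and Pilot" step implies measuring precision and recall for the pilot search. A minimal sketch, assuming the team holds a benchmark list of studies it already knows are relevant (for example, from a previous assessment) and has screened a small sample of the pilot results; the identifiers and function names are hypothetical.

```python
def benchmark_recall(retrieved_ids, benchmark_ids):
    """Share of known-relevant benchmark studies that the pilot search retrieved."""
    benchmark = set(benchmark_ids)
    if not benchmark:
        raise ValueError("The benchmark set is empty.")
    return len(benchmark & set(retrieved_ids)) / len(benchmark)


def sample_precision(sample_decisions):
    """Share of a screened sample of retrieved records judged relevant (True)."""
    if not sample_decisions:
        raise ValueError("The screened sample is empty.")
    return sum(sample_decisions) / len(sample_decisions)


# Hypothetical identifiers standing in for database record IDs.
retrieved = {"rec01", "rec02", "rec03", "rec17"}
benchmark = ["rec01", "rec02", "rec40", "rec41"]

print("recall vs. benchmark:", benchmark_recall(retrieved, benchmark))
print("precision in sample:", sample_precision([True, False, False, False]))
```

Low recall against the benchmark suggests the PECO terms are too narrow or are missing synonyms; low precision suggests the Exposure or Outcome blocks are still too broad.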

Diagram 1: PECO Formulation and Refinement Workflow

Troubleshooting Guide 2: Systematic Evidence Mapping (SEM)

When a full systematic review is too resource-intensive, or the evidence base is vast and complex, a Systematic Evidence Map (SEM) provides a rapid alternative to visualize the landscape of available research and identify key studies for deeper analysis [19].

Frequently Asked Questions (FAQs)

Q1: What is the difference between a full systematic review and an SEM?

- A: An SEM focuses on cataloging and visualizing the availability of studies (e.g., by chemical, outcome, study type) with limited data extraction. A full review involves detailed data extraction, study evaluation, and evidence synthesis for a narrow question [19]. An SEM is a strategic scoping tool that can reduce the time for a subsequent full review.

Q2: How can an SEM accelerate the update of an existing toxicity assessment?

- A: When updating an assessment (e.g., for uranium [20]), an SEM can be applied only to new literature published since the last review. The map quickly identifies which health outcomes have new data, allowing assessors to focus their effort on re-evaluating only those endpoints, rather than re-reviewing the entire evidence base.

Q3: Should we evaluate study quality in an SEM?

- A: Typically, no. The primary goal is mapping coverage. However, a "fit-for-purpose" SEM can include a high-level study evaluation (e.g., reporting quality) to help prioritize certain studies for further review [19].

Key Metrics for SEM Efficiency

Table 2: Quantitative benchmarks for planning and executing a Systematic Evidence Map [19] [2] [21].

| Metric | Typical Range / Value | Implication for Time Savings |

|---|---|---|

| Time to complete full SR | Often > 1 year [2] | Baseline for comparison. |

| SEM as % of full SR time | Approximately 30-50% | Significant acceleration for landscape analysis. |

| Screening speed with ML tools | Can reduce screening time by ~50% [21] | Critical for large evidence bases. |

| Studies tagged as 'Supplemental' | Can be >50% of retrieved records [19] | Efficiently sidelines in vitro, NAMs, PK studies for later consideration. |

Experimental Protocol: Conducting a Fit-for-Purpose SEM

Objective: To rapidly create an interactive inventory of mammalian in vivo and epidemiological studies for a chemical or class of chemicals.

Materials: Systematic review software (e.g., DistillerSR, Rayyan), machine learning classifiers for screening (optional) [21], visualization software (e.g., Tableau, R Shiny).

Methodology:

- Define Broad PECO & Supplemental Categories: Use a broader PECO than a full review. Pre-define supplemental categories (e.g., in vitro, toxicokinetics, NAMs) [19].

- Search & Screen with Automation: Execute search strings. Use machine learning tools to prioritize likely relevant records for manual screening [21].

- Tag and Categorize: For studies meeting the PECO, tag key metadata (species, exposure duration, health system). Assign supplemental studies to their category.

- Visualize: Create interactive charts and tables showing study count by health outcome, species, and study type. Public examples from IRIS assessments serve as templates [19].

- Identify Key Studies: Use the visualization to identify clusters of studies for potential full review and obvious data gaps.
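The tagging and visualization steps reduce to a cross-tabulation of study counts. The sketch below is a plain-Python stand-in for the interactive dashboards named in the materials; the records and field names are invented for illustration.

```python
from collections import Counter

# Invented tagged records; field names are illustrative, not a required schema.
studies = [
    {"id": "S1", "health_system": "hepatic", "study_type": "mammalian in vivo"},
    {"id": "S2", "health_system": "hepatic", "study_type": "mammalian in vivo"},
    {"id": "S3", "health_system": "hepatic", "study_type": "epidemiology"},
    {"id": "S4", "health_system": "renal", "study_type": "mammalian in vivo"},
    {"id": "S5", "health_system": "hepatic", "study_type": "in vitro (supplemental)"},
]


def count_studies(records, row_key, col_key):
    """Count studies for every (row, column) combination of two tags."""
    return Counter((record[row_key], record[col_key]) for record in records)


def render_map(counts, corner=""):
    """Render the counts as a tab-separated grid; a zero cell marks a data gap."""
    rows = sorted({row for row, _ in counts})
    cols = sorted({col for _, col in counts})
    lines = ["\t".join([corner] + cols)]
    for row in rows:
        cells = [str(counts.get((row, col), 0)) for col in cols]
        lines.append("\t".join([row] + cells))
    return "\n".join(lines)


counts = count_studies(studies, "health_system", "study_type")
print(render_map(counts, corner="health_system"))
```

Cells with high counts point to clusters that may justify a full review, and zero cells flag evidence gaps. The same table can be fed to a visualization tool once the tags are stable.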

Diagram 2: Systematic Evidence Mapping Process

Troubleshooting Guide 3: Integrating Multiple Evidence Streams

Modern toxicology must integrate traditional animal studies, human epidemiology, and New Approach Methodologies (NAMs). This integration is complex but essential for human-relevant conclusions and can be streamlined with structured frameworks [18].

Frequently Asked Questions (FAQs)

Q1: How do we combine evidence from streams with very different levels of validity (e.g., epidemiology vs. in vitro)?

- A: Do not combine them directly. Use a weight-of-evidence approach. Evaluate the strength (quality, consistency) of each stream independently. A graphical framework can plot a qualitative probability of causation for each line of evidence, leading to a deliberative, integrated conclusion [18].

Q2: How should we handle the severe missing data common in clinical toxicology studies (e.g., overdose cases)?

- A: Acknowledge and model the uncertainty. Pharmacometric methods can treat unknown doses or timing as random variables within estimated bounds. For example, Bayesian models have incorporated patient history veracity scores to inform prior distributions for dose in overdose cases [22].

Q3: Can NAMs data really replace animal studies in a systematic review for risk assessment?

- A: Currently, NAMs are best used as supplemental and supporting evidence to strengthen biological plausibility or fill specific mechanistic gaps. Their use for direct hazard identification in regulatory reviews requires a validated, standardized framework which is still under development [23].

Comparison of Evidence Streams for Integration

Table 3: Characteristics and integration considerations for primary evidence streams in toxicology [22] [18] [23].

| Evidence Stream | Key Strength | Common Limitation for Integration | Handling Strategy in Review |

|---|---|---|---|

| Human Epidemiology | Direct human relevance, identifies associations. | Confounding, exposure misclassification, often lacking precise dose data. | Assess bias rigorously; use for hazard identification. |

| Mammalian In Vivo | Controlled exposure, full-system biology. | Interspecies extrapolation uncertainty, high cost limits replicates. | Source for dose-response; evaluate translational relevance. |

| New Approach Methods (NAMs) | Human-relevant cells/pathways, high-throughput. | Uncertain predictive validity for apical outcomes, lack of standardized protocols. | Track as supplemental evidence; assess for mechanistic support [19] [23]. |

| Toxicokinetics/PBPK | Informs dose extrapolation across species/routes. | Model complexity, parameter uncertainty. | Use to convert external doses to target tissue doses for comparison. |

Experimental Protocol: Weight-of-Evidence Integration Framework

Objective: To reach a transparent, consensus conclusion on the likelihood of causation by integrating multiple, heterogeneous evidence streams.

Materials: Completed evaluations of individual studies from each evidence stream, graphical plotting tool.

Methodology:

- Independent Stream Assessment: For each evidence stream (e.g., human, animal, NAMs), have reviewers assess the internal validity, consistency, and relevance of the constituent studies.

- Assign Probability Estimate: For each stream, based on the assessment, agree on a qualitative probability estimate (e.g., "Unlikely," "Possible," "Probable," "Highly Probable") that the exposure causes the outcome.

- Graphical Plotting: Plot each stream's probability estimate on a simple graph (evidence stream vs. probability). This visualizes the contribution of each line [18].

- Deliberative Integration: The review team discusses the plot. Is there convergence? Does a strong signal in one stream outweigh weak or conflicting signals in others? Reach a consensus on the integrated conclusion.

- Document Rationale: Explicitly document how the evidence from each stream contributed to the final conclusion, explaining the reasoning behind the integration.
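The plotting step can be approximated in plain text before a graphical version is built. The sketch below treats the four qualitative categories from the protocol as an ordinal scale and draws one bar per evidence stream. The stream estimates are invented, and the bar length only encodes rank order, not a numeric probability.

```python
# Ordinal scale taken from the categories listed in the protocol above.
SCALE = ["Unlikely", "Possible", "Probable", "Highly Probable"]


def plot_streams(estimates):
    """Return a text chart with one bar per evidence stream.

    estimates: mapping of stream name -> qualitative category from SCALE.
    Raises ValueError if a category is not on the scale.
    """
    width = max(len(name) for name in estimates)
    lines = []
    for stream, category in estimates.items():
        rank = SCALE.index(category) + 1  # 1 = Unlikely ... 4 = Highly Probable
        bar = ("#" * rank).ljust(len(SCALE))
        lines.append(f"{stream.ljust(width)} | {bar} | {category}")
    return "\n".join(lines)


# Invented consensus estimates for illustration.
print(plot_streams({
    "Human epidemiology": "Possible",
    "Mammalian in vivo": "Probable",
    "NAMs": "Possible",
}))
```

Seeing the streams side by side makes convergence or conflict visible, but the integrated conclusion still comes from the team's deliberation and not from any arithmetic on the ranks.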

Diagram 3: Framework for Integrating Multiple Evidence Streams

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key digital, experimental, and data resources for accelerating toxicological systematic reviews.

| Tool Category | Specific Item / Solution | Primary Function in Accelerating Reviews |

|---|---|---|

| Digital & Software Tools | Machine Learning Classifiers (e.g., SWIFT-Review, ASySD [21]) | Prioritize records during screening, reducing manual effort by up to 50%. |

| Digital & Software Tools | Systematic Review Management Platforms (e.g., DistillerSR, Rayyan) | Streamline collaborative screening, deduplication, and data extraction workflows. |

| Digital & Software Tools | Interactive Visualization Software (e.g., R Shiny, Tableau) | Create live evidence maps and dashboards for real-time data exploration [19] [21]. |

| Experimental Model Systems | Defined New Approach Methodologies (NAMs) [23] | Provide rapid, human-relevant mechanistic data to support or refute in vivo findings. |

| Experimental Model Systems | Transcriptomics/High-Throughput Screening Data | Offered as supplemental evidence to identify potential modes of action and sensitive endpoints [19]. |

| Data Sources & Repositories | EPA CompTox Chemicals Dashboard [19] | Central source for chemical identifiers, properties, and associated study references. |

| Data Sources & Repositories | Systematic Online Living Evidence Summaries (SOLES) [21] | Provides continuously updated, pre-processed evidence bases for specific research domains. |

Technical Support Center: Streamlining Systematic Reviews for Toxicology

Welcome, Researcher. This technical support center is designed to help you navigate the methodological challenges of conducting rigorous yet efficient systematic reviews (SRs) in toxicology and environmental health. The core tension is between the comprehensive rigor demanded by evidence-based science and the practical feasibility of completing reviews in a timely and resource-conscious manner [2]. The guidance below, framed within a thesis on reducing time requirements, provides actionable protocols, troubleshooting, and tools to optimize your workflow.

Systematic reviews are inherently more resource-intensive than traditional narrative reviews. The following table summarizes key comparative data, illustrating the initial investment required for an SR.

Table 1: Comparison of Narrative vs. Systematic Review Characteristics and Resource Use [2]

| Feature | Narrative Review | Systematic Review | Implication for Time Management |

|---|---|---|---|

| Typical Timeframe | Months | >1 year (usually) | SRs require a significantly longer initial commitment. |

| Research Question | Broad, often informal | Specified and precise (PICO/PECO) | A precise protocol prevents scope creep but requires upfront time. |

| Literature Search | Not specified, often limited | Comprehensive, explicit strategy across multiple databases | Searching, deduplication, and documentation are major time sinks. |

| Study Selection | Not specified, expert choice | Explicit criteria, often dual screening | Dual independent screening increases rigor but doubles person-hours. |

| Quality Assessment | Usually absent or informal | Critical appraisal using explicit tools | Adds a substantial analytical step not present in narrative reviews. |

| Synthesis | Qualitative summary | Qualitative + often quantitative (meta-analysis) | Statistical synthesis requires specialized skills and software. |

| Expertise Required | Subject matter expertise | Subject matter, SR methodology, data analysis | Need for a multidisciplinary team or training adds complexity. |

A critical, often overlooked temporal factor is the expiration date of evidence. Research landscapes evolve, and the clinical or regulatory relevance of findings can diminish over time [24]. This is especially pertinent in fast-moving fields or when reviewing technologies (e.g., sequencing platforms) that rapidly become obsolete. A review taking two years to complete may be outdated upon publication. Therefore, feasibility is not just about saving resources, but about ensuring the review's conclusions remain relevant [24].

Experimental Protocols for Accelerated Reviews

Adhering to a structured protocol is non-negotiable for rigor. The following workflows detail two approaches: the gold-standard comprehensive SR and a streamlined "Rapid Review" variant designed for efficiency.

Protocol 1: Comprehensive Systematic Review (COSTER Framework)

This protocol follows the Conduct of Systematic Reviews in Toxicology and Environmental Health Research (COSTER) recommendations, an expert consensus covering 70 practices across eight domains [25].

Protocol Development & Registration:

- Action: Define a precise PECO question (Population, Exposure, Comparator, Outcome). Write and publicly register a detailed protocol (e.g., on PROSPERO or Open Science Framework) specifying all methods [25].

- Time-Saving Tip: Use a pre-filled protocol template from COSTER or OHAT to ensure completeness and avoid later revisions [2] [25].

Search & Retrieval:

- Action: Develop a search strategy with a librarian/information specialist. Search multiple databases (e.g., PubMed, Embase, TOXLINE, Web of Science). Document the full search syntax and dates [2].

- Time-Saving Tip: Use automated deduplication tools (e.g., EndNote, Covidence, Rayyan) immediately after exporting search results.
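To show what automated deduplication does in principle, the sketch below keeps the first record per DOI, or per normalized title and year when no DOI is present. It is a deliberately simple illustration with invented records and placeholder DOIs. The tools named above use more tolerant matching, so this is not a description of how any of them work.

```python
import re


def normalize_title(title):
    """Lower-case the title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first record for each DOI, else for each (normalized title, year)."""
    seen = set()
    unique = []
    for record in records:
        doi = (record.get("doi") or "").strip().lower()
        if doi:
            key = ("doi", doi)
        else:
            key = ("title", normalize_title(record.get("title", "")), record.get("year"))
        if key in seen:
            continue  # duplicate of an earlier export
        seen.add(key)
        unique.append(record)
    return unique


# Invented export from two databases; DOIs are placeholders.
records = [
    {"title": "Effects of X on liver.", "year": 2020, "doi": "10.1000/ABC"},
    {"title": "Effects of X on Liver", "year": 2020, "doi": "10.1000/abc"},
    {"title": "Chemical Y: a review", "year": 2019, "doi": ""},
    {"title": "Chemical Y - A Review", "year": 2019},
    {"title": "Chemical Z", "year": 2021},
]

print(len(deduplicate(records)), "unique records")
```

A known limitation of this sketch is that a record exported with a DOI will not match the same record exported without one, so a manual check of near-duplicates remains worthwhile.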

Screening & Selection:

- Action: Conduct dual-independent screening of titles/abstracts and full texts against pre-defined eligibility criteria. Resolve conflicts via consensus or a third reviewer [2].

- Time-Saving Tip: Use systematic review software with AI-assisted prioritization to expedite initial screening, though final decisions must remain with human reviewers.

Data Extraction & Risk of Bias:

- Action: Use a calibrated, pilot-tested data extraction form. Perform dual-independent extraction for critical fields. Assess risk of bias/study quality using standardized tools (e.g., OHAT Risk of Bias Tool, SYRCLE for animal studies) [2].

- Time-Saving Tip: Develop detailed, unambiguous extraction guidelines to minimize reviewer discussion and reconciliation time.

Synthesis, Analysis & Reporting:

- Action: Synthesize findings narratively. Conduct meta-analysis if studies are sufficiently homogeneous. Grade the confidence in the evidence (e.g., using GRADE adapted for toxicology). Report following PRISMA guidelines [2].

- Time-Saving Tip: Use meta-analysis software (e.g., RevMan, Stata, or the R metafor package) with pre-scripted analysis code for efficiency.
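As an illustration of what "pre-scripted analysis code" can look like, the sketch below implements a standard inverse-variance random-effects pool with the DerSimonian-Laird estimate of between-study variance. It is written in plain Python for transparency and does not use or reproduce the API of RevMan, Stata, or metafor; the effect sizes and variances are invented.

```python
import math


def random_effects_pool(effects, variances):
    """DerSimonian-Laird random-effects pooled estimate.

    effects: per-study effect sizes (e.g., standardized mean differences).
    variances: per-study sampling variances (all > 0).
    """
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("Need at least two studies with matching variances.")
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / sum_w
    # Cochran's Q measures dispersion of study effects around the fixed-effect mean.
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    c = sum_w - sum(w * w for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c)  # between-study variance, truncated at zero
    re_weights = [1.0 / (v + tau2) for v in variances]
    pooled = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    se = math.sqrt(1.0 / sum(re_weights))
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {
        "pooled": pooled,
        "se": se,
        "ci95": (pooled - 1.96 * se, pooled + 1.96 * se),
        "tau2": tau2,
        "q": q,
        "i2": i2,
    }


# Invented data for three studies.
result = random_effects_pool([0.30, 0.55, 0.10], [0.02, 0.05, 0.03])
print({key: result[key] for key in ("pooled", "se", "tau2", "i2")})
```

Keeping such a script under version control before data extraction finishes means the synthesis step becomes a re-run, not a rebuild, when late studies are added.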

Protocol 2: Streamlined Rapid Review

This modified protocol strategically limits scope or methods to produce evidence summaries in a shorter timeframe (e.g., 3-6 months), useful for emerging issues or internal decision-making.

Focused Protocol:

- Action: Restrict the PECO question (e.g., to a single, most relevant outcome or a key species). Use a published SR protocol as a template and modify.

- Feasibility Gain: Narrow scope directly reduces the search, screening, and data extraction burden.

Targeted Search:

- Action: Limit the number of databases searched. Apply date restrictions (e.g., last 10 years). Restrict to core journals. May exclude grey literature or non-English language studies.

- Feasibility Gain: Drastically reduces the volume of records to screen and manage.

- Acknowledged Limitation: Increases risk of missing relevant evidence.

Accelerated Screening:

- Action: Use a single screener for titles/abstracts, with a second reviewer checking a random sample. Use a "liberal accelerated" method where only one reviewer needs to include a study for full-text retrieval.

- Feasibility Gain: Reduces person-hours by approximately 50%.

- Acknowledged Limitation: Slightly increases risk of missing eligible studies.
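The approximate 50% saving can be checked with simple arithmetic. The sketch below compares full dual screening with single screening plus a second-reviewer sample. The record count, the half-minute per record, and the 10% check fraction are assumptions chosen for illustration, and time spent resolving conflicts is ignored.

```python
def screening_hours(n_records, minutes_per_record, second_reviewer_fraction):
    """Person-hours when one reviewer screens all records and a second screens a fraction.

    second_reviewer_fraction = 1.0 corresponds to full dual screening.
    """
    if not 0 <= second_reviewer_fraction <= 1:
        raise ValueError("Fraction must be between 0 and 1.")
    return n_records * minutes_per_record * (1 + second_reviewer_fraction) / 60


# Assumed workload: 5,000 records at 0.5 minutes per title/abstract.
dual = screening_hours(5000, 0.5, 1.0)   # both reviewers screen everything
rapid = screening_hours(5000, 0.5, 0.1)  # second reviewer checks a 10% sample
saving = 1 - rapid / dual

print(f"dual: {dual:.1f} h, rapid: {rapid:.1f} h, saving: {saving:.0%}")
```

Under these assumptions the saving is 45%, and it approaches 50% only as the checked sample shrinks toward zero, so the size of the verification sample is the main lever in the trade-off between speed and the risk of missed studies.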

Simplified Data Extraction:

- Action: Single reviewer extraction, with verification of critical data (e.g., outcomes, dose) by a second reviewer. Use a streamlined extraction form focused on key PECO elements.

- Feasibility Gain: Significantly speeds up the most labor-intensive phase.

Narrative Synthesis & Summary:

- Action: Forgo formal meta-analysis and complex evidence grading. Present a structured narrative summary and table of findings, clearly stating conclusions are based on a rapid methodology.

- Feasibility Gain: Eliminates the need for advanced statistical expertise and time-consuming analysis.

Decision Pathway for Systematic Review Scoping

The Scientist's Toolkit: Essential Research Reagent Solutions

Efficiency in systematic reviewing relies on digital and methodological "reagents." The following table lists essential tools for key stages of the review process.

Table 2: Research Reagent Solutions for Efficient Systematic Reviews

| Tool Category | Specific Tool/Resource | Function & Purpose | Feasibility Benefit |

|---|---|---|---|

| Protocol & Registration | PROSPERO, Open Science Framework | Publicly register review protocol to reduce duplication, lock methods, and ensure transparency [25]. | Prevents wasted effort on existing reviews; minimizes post-hoc method changes. |

| Search & Deduplication | EndNote, Zotero, Covidence, Rayyan | Manage bibliographic records, automatically identify and remove duplicate references. | Saves hours of manual work in the initial phase. Rayyan's AI features can assist with initial screening prioritization. |

| Screening & Selection | Covidence, Rayyan, Abstrackr | Web-based platforms for dual-independent title/abstract and full-text screening with conflict resolution. | Streamlines collaboration, automatically tracks inclusion/exclusion decisions, and generates PRISMA flow diagrams. |

| Data Extraction | Custom Google/Excel Forms, SRDR+, Covidence | Structured, pilot-tested forms for consistent and efficient data capture from included studies. | Ensures completeness, reduces error, and facilitates data sharing and analysis. |

| Risk of Bias Assessment | OHAT Risk of Bias Tool, SYRCLE's RoB Tool, ROBINS-I | Standardized tools to evaluate methodological quality and risk of bias in toxicological and epidemiological studies [2]. | Provides a consistent, transparent framework for a critical review step. |

| Evidence Synthesis | RevMan (Cochrane), Stata, R (metafor, meta packages) | Perform statistical meta-analysis, create forest plots, and assess heterogeneity. | Automates complex calculations and standardizes visual output of synthesized data. |

| Reporting | PRISMA Checklist, SR Template from COSTER/OHAT | Ensure complete and transparent reporting of the systematic review process and findings [2] [25]. | Guides writing to meet journal and methodological standards, reducing revision rounds. |

Troubleshooting Guides & FAQs

Troubleshooting Guide: Common Scoping and Workflow Issues

Problem: The literature search yields an unmanageably large number of records (e.g., >10,000).

- Cause: The research question (PECO) is too broad. Search terms lack specificity.

- Solution: Refocus the PECO question. Add more specific MeSH/Emtree terms or keywords related to a critical component (e.g., a specific outcome like "steatosis"). Consider applying a date filter if justified [24]. Pilot your search strategy and adjust.

Problem: Screening is taking far longer than projected.

- Cause: Eligibility criteria are vague, leading to excessive deliberation. Reviewers are not adequately calibrated.

- Solution: Revise eligibility criteria to be more operational (e.g., define "chronic exposure" as >90 days). Pilot the screening process on a batch of 50-100 records with all reviewers, discuss conflicts to establish consensus, and refine the guidelines before proceeding [2].

Problem: Included studies are too heterogeneous for meaningful synthesis.

- Cause: The scoping was overly inclusive, combining different species, exposure routes, or outcome measures.

- Solution: It is methodologically sound to present a narrative synthesis organized by sub-groups (e.g., by species or study design). Clearly state that quantitative meta-analysis was not appropriate due to heterogeneity. This is a common outcome in toxicological SRs [2].
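The decision not to pool can be supported with a heterogeneity statistic. The following is a minimal sketch, assuming each study reports an effect estimate and its variance; it computes Cochran's Q and the I² statistic with inverse-variance weights. The source does not name a specific statistic, and the function name and the three example studies are hypothetical.

```python
def heterogeneity(effects, variances):
    """Return Cochran's Q, its degrees of freedom, and I^2 (in percent)."""
    if len(effects) != len(variances) or len(effects) < 2:
        raise ValueError("Need matching effects and variances for at least two studies.")
    weights = [1.0 / v for v in variances]  # inverse-variance weights
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2 is the share of total variation attributed to between-study differences.
    i_squared = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, df, i_squared


# Hypothetical effect estimates (e.g., standardized mean differences) and variances.
q, df, i2 = heterogeneity([0.2, 0.5, 0.9], [0.04, 0.04, 0.04])
print(f"Q = {q:.2f} on {df} df, I^2 = {i2:.1f}%")
```

In practice the R packages listed in the tools table (metafor, meta) report these quantities directly; the sketch only makes the arithmetic visible.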

Problem: The team lacks specific methodological expertise (e.g., in meta-analysis or statistical software).

- Cause: Systematic reviewing requires a multi-skilled team.

- Solution: Consult the community or a collaborator. Many online forums and professional networks exist for systematic reviewers. For complex analysis, consider collaborating with a biostatistician from the outset. Using tools with strong support communities (like R) can be beneficial [26] [27].

Frequently Asked Questions (FAQs)

Q1: How can I justify a "rapid review" methodology to a journal or regulatory body?

- A: Complete transparency is key. Publish a protocol stating it is a "rapid review" and explicitly describe the methodological streamlining decisions (e.g., limited databases, date restrictions, single screening). Clearly discuss these as limitations in the discussion section. The COSTER guidelines acknowledge that "the extent of the search may vary" based on purpose and resources [25]. The justification is the trade-off for timeliness [24].

Q2: What is the single biggest time sink in a systematic review, and how can I optimize it?

- A: The screening stage (title/abstract and full-text) is often the most labor-intensive. Optimization strategies include: 1) Using robust SR software (Covidence, Rayyan) for collaboration and tracking, 2) Investing significant time upfront to develop crystal-clear, unambiguous eligibility criteria, and 3) For rapid reviews, considering validated accelerated screening methods where a second reviewer only checks a sample of exclusions [2].
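The sample-check approach in point 3 can be sketched as follows, assuming screening decisions are available as a mapping from record ID to "include" or "exclude". The function name, the 25% fraction, and the record IDs are hypothetical; the actual sampling fraction should be fixed in the protocol.

```python
import math
import random


def sample_exclusions_for_check(decisions, fraction=0.25, seed=0):
    """Pick a reproducible random sample of excluded record IDs for a second reviewer."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1].")
    excluded = sorted(rid for rid, decision in decisions.items() if decision == "exclude")
    if not excluded:
        return []
    k = max(1, math.ceil(fraction * len(excluded)))
    # A seeded generator keeps the sample reproducible for the audit trail.
    return sorted(random.Random(seed).sample(excluded, k))


# Hypothetical first-reviewer decisions: 40 exclusions and 10 inclusions.
decisions = {f"rec{i:03d}": ("include" if i % 5 == 0 else "exclude") for i in range(1, 51)}
to_verify = sample_exclusions_for_check(decisions, fraction=0.25, seed=42)
print(len(to_verify), "exclusions selected for second-reviewer verification")
```

Recording the seed and the fraction alongside the review files lets the verification sample be regenerated if the process is later questioned.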

Q3: How do I handle the "grey literature" (theses, reports, conference abstracts) to be both rigorous and efficient?

- A: Grey literature is crucial for reducing publication bias but is time-consuming to search and obtain. A balanced approach is to search key sources relevant to your field (e.g., specific government agency websites like EPA, EFSA; dissertation databases) but not to exhaustively search every possible outlet. Document which grey sources you searched. The COSTER recommendations provide specific guidance on this challenge [25].

Q4: The evidence base for my toxicological question includes a mix of human, animal, and in vitro studies. How do I synthesize this?

- A: This is a hallmark challenge in evidence-based toxicology. Do not force a quantitative synthesis across evidence streams. Instead, follow a structured narrative synthesis: present the human, animal, and in vitro evidence in separate sections or tables. Then, use a framework like the OHAT/IRIS approach to integrate the streams, discussing the consistency, biological plausibility, and coherence of findings across them to reach an overall conclusion on hazard [2].

Systematic Review Workflow Troubleshooting Logic

Modern Accelerants: AI, Streamlined Protocols, and Tools to Expedite the Review Process

Technical Support Center: Troubleshooting Systematic Reviews in Toxicology

Welcome to the technical support center for toxicology research synthesis. This resource is designed to help researchers, scientists, and drug development professionals overcome common methodological challenges, reduce time burdens, and enhance the rigor of their systematic reviews through strategic problem framing and the iterative refinement of PECO (Population, Exposure, Comparator, Outcome) questions [17] [2].

Troubleshooting Guides

This section provides structured solutions for common problems encountered during the systematic review process.

Problem 1: Unfocused Research Question Leading to Unmanageable Scope

- Symptoms: An overwhelming number of search results, inconsistent study designs in the retrieved literature, inability to define clear inclusion/exclusion criteria.

- Root Cause: A poorly framed research question that is too broad or vague [2].

- Solution - Apply the PECO Framework Iteratively:

- Draft Initial PECO: Write a first draft defining each element.

- Pilot Search: Conduct a preliminary literature search with the draft PECO.

- Analyze & Refine: Review the results. Is the volume of studies too large? Are the studies irrelevant? Refine the PECO elements (e.g., narrow the population, specify the exposure metric) based on the evidence found [17].

- Repeat: Iterate the Pilot Search and Analyze & Refine steps until the search yields a focused, relevant, and manageable set of studies. This iterative process is more efficient than a single, broad search followed by manual sifting.
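Each refinement round is easier to repeat if the draft PECO is held as data and the search string is regenerated from it. The sketch below assumes a PubMed-style syntax with the `[tiab]` (title/abstract) field tag; the function name `build_query` and the example terms are hypothetical, and a real strategy would also add controlled vocabulary with an information specialist's input.

```python
def build_query(peco_terms, field="tiab"):
    """OR the synonyms within each PECO element, then AND the elements together."""
    blocks = []
    for element in ("population", "exposure", "comparator", "outcome"):
        terms = peco_terms.get(element, [])
        if not terms:
            continue  # elements with no search terms are simply skipped
        joined = " OR ".join(f'"{term}"[{field}]' for term in terms)
        blocks.append(f"({joined})")
    return " AND ".join(blocks)


# Hypothetical draft PECO terms for a pilot search.
draft = {
    "population": ["rats", "rat"],
    "exposure": ["Chemical X"],
    "outcome": ["liver weight", "steatosis"],
}
query = build_query(draft)
print(query)
```

Narrowing an element then means editing one list and rerunning the pilot search, which also leaves a record of how the question evolved.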

Problem 2: Difficulty Defining a Meaningful Comparator (C) in Exposure Studies

- Symptoms: Uncertainty about what constitutes an appropriate control group, leading to inconsistent synthesis of data.

- Root Cause: Unlike intervention studies (PICO), exposure studies often lack a clear "no intervention" comparator [17].

- Solution - Use the PECO Scenario Framework: Select and define your comparator based on your review's context and the available data [17].

- Table: PECO Comparator Scenarios for Toxicology

| Scenario & Context | Approach to Define Comparator | Example PECO Question (Toxicology Focus) |
|---|---|---|
| 1. Explore Dose-Response | Incremental increase in exposure level [17]. | In laboratory rats, what is the effect of a 0.5 mg/kg/day increase in oral exposure to Chemical X on liver weight? |
| 2. Compare Exposure Extremes | Highest vs. lowest quantile of exposure found in the literature [17]. | In occupational cohorts, what is the effect of exposure to the highest quartile of airborne particulate matter compared to the lowest quartile on pulmonary function? |
| 3. Use an External Standard | A known exposure threshold from other research or regulation [17]. | In a human population, what is the effect of serum perfluorooctanoic acid (PFOA) levels ≥ 20 ng/mL compared to < 20 ng/mL on thyroid hormone levels? |
| 4. Evaluate a Mitigation Target | An exposure level achievable through an intervention [17]. | In a contaminated community, what is the effect of an intervention that reduces soil arsenic by 50% compared to pre-intervention levels on neurological development in children? |


Problem 3: Slow Manual Screening of Search Results

- Symptoms: The screening phase takes months, delaying the entire review process.

- Root Cause: Reliance on manual screening of thousands of titles/abstracts.

- Solution - Integrate Automation Tools:

- Acknowledge the Time Burden: The average systematic review takes ~67 weeks [28].

- Adopt a Supported Tool: Use specialized software (e.g., Covidence, Rayyan, DistillerSR) for the screening phase, the stage at which 79% of tool users report applying such tools [28].

- Invest in Training: The primary barrier to adoption is lack of knowledge [28]. Utilize tutorials and documentation to overcome this initial hurdle. These tools can save significant time and increase accuracy [28].

Frequently Asked Questions (FAQs)

Q1: What is the single biggest time-saving step in conducting a systematic review?

A1: Investing time in iteratively framing and refining the research question using the PECO framework before finalizing the protocol. A precise PECO directly informs an efficient search strategy and clear eligibility criteria, preventing wasted effort on irrelevant studies downstream [17] [2].

Q2: How does a systematic review for toxicology differ from one for clinical medicine?

A2: Toxicology reviews face specific challenges, including integrating multiple evidence streams (in vivo, in vitro, in silico), extrapolating across animal species and strains to human outcomes, and assessing complex exposures and mixtures. The PECO framework is adapted from clinical medicine's PICO to better handle these nuances, particularly in defining Exposure and Comparator [17] [2].

Q3: We have limited resources. Can we still do a systematic review?

A3: Yes, by strategically focusing the scope. A highly focused PECO question will yield a more manageable number of studies to process. Furthermore, leveraging free or institutional-access automation tools for screening can reduce personnel time. The key is rigorous methodology within a defined scope, not volume [2] [28].

Q4: How do we handle variability in how outcomes are measured across studies?

A4: This must be addressed at the protocol stage. Your PECO should define the outcome (O) with as much specificity as possible (e.g., "serum alanine aminotransferase (ALT) concentration as a continuous measure" rather than "liver injury"). During screening and data extraction, document all variants and plan a sensitivity analysis or qualitative synthesis if meta-analysis is not feasible due to heterogeneity [2].

Detailed Methodologies & Protocols

Protocol 1: Iterative PECO Refinement for Protocol Development

This protocol formalizes the troubleshooting solution for Problem 1.

- Assemble Team: Include a librarian/information specialist.

- Brainstorm Draft PECO: Based on initial knowledge, draft the four elements.

- Develop Preliminary Search: Translate the draft PECO into a search string for a primary database (e.g., PubMed/TOXLINE).

- Execute and Analyze Search: Run the search. Record the number of results. Randomly sample 50-100 records. Assess relevance.

- Refine PECO: Based on sample analysis, refine elements. For example, if sampled studies use "Sprague-Dawley rats," specify the species in P. If exposure is measured in "plasma," specify this in E.

- Lock PECO: After 2-3 iterations, when search results are consistently relevant, finalize the PECO and proceed to the full, registered protocol [17].
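The sample-and-assess step can be made quantitative. As a minimal sketch, assuming a random sample of pilot records has been judged relevant or not, the code below computes a Wilson score interval for the proportion of relevant records and projects it onto the full yield. The function name and all counts are hypothetical, and `z=1.96` corresponds to an approximately 95% interval.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("Require n > 0 and 0 <= successes <= n.")
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half_width, centre + half_width


# Hypothetical pilot: 30 of 100 sampled records judged relevant, 8,000 records retrieved.
total_records = 8000
low, high = wilson_interval(30, 100)
print(f"Relevant share: {low:.1%} to {high:.1%}")
print(f"Projected relevant records: {round(low * total_records)} to {round(high * total_records)}")
```

Comparing this interval across iterations gives a simple indication of whether a refinement actually raised the share of relevant records, rather than only reducing the total count.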

Protocol 2: Implementing a Semi-Automated Title/Abstract Screening Workflow

This protocol addresses Problem 3.

- Tool Selection & Setup: Choose a screening tool (e.g., Covidence). Import all deduplicated search results.

- Calibration: All reviewers independently screen the same set of 50-100 records using the eligibility criteria based on the locked PECO. Resolve conflicts to ensure consistent understanding.

- Dual-Blind Screening: Reviewers screen titles/abstracts independently within the tool. The tool automatically highlights conflicts.

- Conflict Resolution: The team meets to adjudicate conflicts. The tool's interface streamlines this process.

- Progress Monitoring: Use the tool's dashboard to track screening progress and reviewer workload [28].
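The first step of this protocol assumes the search results are already deduplicated. Reference managers and screening tools normally handle this, but the underlying logic can be sketched as below, assuming each record is a dictionary with optional `doi`, `title`, and `year` keys. The field names, the matching rule, and the example records (including the DOIs) are hypothetical.

```python
import re


def normalize_title(title):
    """Lowercase a title and collapse punctuation and whitespace for matching."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first record per DOI, or per normalized title and year when no DOI exists."""
    seen = set()
    unique = []
    for record in records:
        doi = (record.get("doi") or "").strip().lower()
        if doi:
            key = ("doi", doi)
        else:
            key = ("title", normalize_title(record.get("title", "")), record.get("year"))
        if key in seen:
            continue  # duplicate of an earlier record
        seen.add(key)
        unique.append(record)
    return unique


# Hypothetical export merged from two databases.
records = [
    {"title": "Chemical X and Liver Weight in Rats", "year": 2019, "doi": "10.1000/abc123"},
    {"title": "Chemical X and liver weight in rats.", "year": 2019, "doi": "10.1000/ABC123"},
    {"title": "Hepatic Steatosis After Oral Exposure", "year": 2021, "doi": ""},
    {"title": "Hepatic steatosis after oral exposure", "year": 2021},
]
unique_records = deduplicate(records)
print(f"{len(records)} records reduced to {len(unique_records)}")
```

This simple rule will not match a record that carries a DOI in one database and lacks it in another, so a manual check of near-duplicates is still advisable.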

Visualizations: Workflows and Pathways

Toxic Course in Drug-Induced Phospholipidosis

Table: Core Knowledge Domains & Methodological Tools for Efficient Reviews

| Category | Item | Function & Relevance to Efficient Systematic Reviews |
|---|---|---|
| Conceptual Foundation [29] | Mechanisms of Toxicity | Working knowledge is essential for accurately defining exposure (E) and outcome (O) in PECO, and for interpreting synthesized results. |
| | Risk Assessment | Core to the application of review findings. Informs the framing of PECO questions aimed at decision-making (e.g., Scenarios 3-5) [17]. |
| | Toxicokinetics (Absorption, Distribution, Metabolism, Excretion) | Critical for evaluating internal dose and biological relevance in exposure studies, guiding comparator selection. |
| Methodology [2] | Systematic Review Guidelines (e.g., OHAT, Navigation Guide) | Provide toxicology-specific protocols for conducting reviews, reducing time spent designing methods from scratch. |
| | Study Design & Critical Appraisal | Working knowledge allows for efficient development of inclusion/exclusion criteria and quality assessment checklists. |
| Efficiency Tools [28] | Screening Software (e.g., Covidence, Rayyan) | Automates and manages the title/abstract and full-text screening process, saving significant time and reducing error. |
| | Dedicated Review Platforms (e.g., DistillerSR) | Integrates multiple systematic review steps (screening, data extraction, risk of bias) into one managed environment. |
| | Reference Management (e.g., EndNote, Zotero) | Essential for handling large bibliographies, deduplication, and citation. |

Systematic reviews are foundational to evidence-based toxicology, synthesizing data to inform safety assessments, risk analysis, and regulatory decisions for chemicals, pharmaceuticals, and environmental agents [30]. However, the traditional manual process is notoriously slow, often taking over a year to complete, creating a critical bottleneck in translating research into public health guidance [31]. The proliferation of New Approach Methodologies (NAMs) and the increasing volume of published studies further exacerbate this challenge, making comprehensive evidence synthesis a daunting task [30].