Accelerating Ecotoxicology Reviews: A 2025 Guide to Automated Screening Tools and AI Workflows


Abstract

Systematic reviews in ecotoxicology face unique challenges, including interdisciplinary terminology, diverse study methodologies, and a vast, growing literature base. This article provides a comprehensive guide for researchers and scientists on leveraging digital tools to automate the labor-intensive screening phase. We explore the foundational need for automation, detail the application of leading software and AI-assisted methods, address practical troubleshooting and optimization strategies, and present comparative validations of current technologies. The goal is to empower research teams to conduct more efficient, transparent, and reproducible evidence syntheses, ultimately accelerating the integration of toxicological evidence into environmental and biomedical decision-making.

Why Automate? Defining the Need and Tools for Ecotoxicology Systematic Reviews

Technical Support Center: Troubleshooting Systematic Review Screening

This technical support center addresses common operational challenges researchers face when screening literature for systematic reviews (SRs) in ecotoxicology. The guidance is framed within a thesis exploring tools for automating this screening process, focusing on overcoming hurdles posed by interdisciplinary jargon and diverse methodologies [1].

Frequently Asked Questions (FAQs)

Q1: Our screening process is overwhelmed by the volume of papers from different fields (e.g., chemistry, ecology, hydrology). How can we manage this complexity efficiently? A: The volume and diversity are central challenges in ecotoxicology [1]. Implement a structured screening workflow and leverage AI-assisted tools designed for multi-disciplinary corpora. Begin by using a platform like Sysrev, which introduces machine learning to increase the accuracy and efficiency of the review process [2]. For very large datasets, consider a multi-agent AI system like InsightAgent, which partitions the literature corpus based on semantic similarity, allowing parallel processing of different disciplinary clusters [3].

Q2: Reviewers from different disciplines interpret the same eligibility criteria differently, leading to inconsistencies. How can we standardize screening? A: This is a known issue where interdisciplinary terminology leads to variable interpretation [1]. The solution is a three-step protocol: First, hold calibration meetings to develop a unified, written glossary of key terms (e.g., bioavailability, LC50, biomagnification) [4] [5]. Second, translate these agreed-upon eligibility criteria into a precise prompt for a fine-tuned Large Language Model (LLM) [1]. Third, use the AI model to perform a first-pass screening on all articles, ensuring a consistent application of the baseline criteria, which human reviewers can then verify.

Q3: We are considering an AI tool for screening. What are the critical performance metrics, and what accuracy can we realistically expect? A: The critical metrics are recall (sensitivity) and precision, together with their harmonic mean, the F1 score. Agreement with human experts is typically measured using Cohen's Kappa for two raters or Fleiss' Kappa for multiple raters [1]. Realistic performance varies by task: a recent AI agent system demonstrated a 47% improvement in F1 score for article identification with user interaction [3]. Another study using a fine-tuned ChatGPT model reported "substantial agreement" at the title/abstract stage and "moderate agreement" at the full-text stage compared to human reviewers [1]. Expect to iteratively refine the AI model with expert feedback to achieve optimal results.
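As a rough illustration of the metrics named in Q3, the sketch below computes precision, recall, and F1 from paired include/exclude decisions. The function name `screening_metrics` and the toy labels are assumptions made for this example; they are not taken from any of the cited tools.

```python
def screening_metrics(human, model):
    """Compare model decisions with human labels (True = include)."""
    if len(human) != len(model):
        raise ValueError("label lists must have the same length")
    # Count true positives, false positives, and false negatives.
    tp = sum(h and m for h, m in zip(human, model))
    fp = sum((not h) and m for h, m in zip(human, model))
    fn = sum(h and (not m) for h, m in zip(human, model))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"precision": precision, "recall": recall, "f1": f1}


# Toy data: eight records screened by a human expert and by a model.
human = [True, True, True, False, False, False, False, True]
model = [True, True, False, True, False, False, False, True]
metrics = screening_metrics(human, model)
print(metrics)
```

In screening, recall is usually the metric to protect, because a missed relevant study cannot be recovered later, whereas a false inclusion is caught at full-text review.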

Q4: How do we choose the right database or knowledgebase for ecotoxicology data extraction after screening? A: For curated ecotoxicology data, the EPA ECOTOX Knowledgebase is an essential resource. It contains over one million test records for more than 12,000 chemicals and 13,000 species [6]. Use its advanced SEARCH and EXPLORE features to filter data by specific endpoints, species, and test conditions. For human exposure assessment data to support risk assessment, refer to systematic scoping reviews that identify and evaluate accessible computational tools and models [2].

Troubleshooting Guides

Problem: Low Inter-Rater Reliability (IRR) During Manual Title/Abstract Screening

Symptoms: Low Cohen's Kappa scores among reviewers, frequent disagreements during consensus meetings, unpredictable inclusion/exclusion decisions.

Diagnosis: Inconsistent application of eligibility criteria due to ambiguous terminology or a lack of shared understanding of interdisciplinary concepts.

Solution:

- Pause Screening & Refine Criteria: Hold a dedicated calibration workshop with the review team.

- Create a Decision Tree: Visually map the inclusion/exclusion criteria with specific examples from pilot articles.

- Develop a Disambiguation Glossary: Build a living document defining ambiguous terms (e.g., "adverse effect," "chronic exposure," "model") as they are used in the context of your review [4] [5].

- Pilot Test Revised Protocol: Screen a new batch of 50-100 articles independently. Calculate IRR again. Repeat the calibration, decision-tree, glossary, and pilot steps until IRR reaches an acceptable level (e.g., Kappa > 0.6).

- Implement AI-Assisted Consistency Check: Use a fine-tuned LLM to screen the same batch and compare its decisions to the reconciled human decisions to identify any remaining systemic ambiguities in the criteria [1].
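To make the IRR check in the pilot step concrete, here is a minimal sketch of Cohen's Kappa for two reviewers. The function name `cohens_kappa` and the ten example decisions are illustrative assumptions; dedicated statistics packages offer equivalent, better-tested routines.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's Kappa for two raters labelling the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(rater_a)
    # Observed agreement: share of records with identical labels.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement by chance, from each rater's label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


reviewer_1 = ["include"] * 4 + ["exclude"] * 6
reviewer_2 = ["include"] * 3 + ["exclude"] * 6 + ["include"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
print(round(kappa, 3))
```

In this toy batch the reviewers agree on 8 of 10 records, yet Kappa falls just under the 0.6 example threshold, which shows why raw percent agreement can overstate consistency.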

Problem: Poor Recall or Precision from an AI Screening Tool

Symptoms: The AI model is missing too many relevant papers (low recall) or including too many irrelevant ones (low precision).

Diagnosis: The model has not been adequately trained or fine-tuned on domain-specific, labeled data representative of your research question.

Solution:

- Audit the Training Data: Ensure your labeled dataset (used for fine-tuning) is of high quality, balanced, and reflects the interdisciplinary scope of your review.

- Optimize Hyperparameters: Adjust the model's technical settings. For an LLM like GPT, key parameters include:

  - Temperature (0.1-0.5): A sampling setting applied at inference. Lower values give more deterministic, consistent outputs during screening.

  - Top_p (0.8-0.95): A sampling setting applied at inference that controls the diversity of predicted tokens.

  - Epochs (3-10): A fine-tuning setting. Increase if the model is underfitting, decrease if it is overfitting [1].

- Implement a Multi-Agent or Ensemble Approach: If using a single agent, consider switching to a framework like InsightAgent, which uses multiple AI agents to process different semantic clusters of literature in parallel, improving overall accuracy [3].

- Integrate Human-in-the-Loop Feedback: Use an interactive platform where human experts can correct the AI's screening decisions in real-time. This feedback should be used to continuously re-train and improve the model.

Problem: Difficulty Synthesizing Findings from Methodologically Diverse Studies

Symptoms: Inability to perform meaningful meta-analysis, qualitative synthesis feels fragmented, results from different study types (e.g., field monitoring vs. lab microcosms) appear contradictory.

Diagnosis: This is a fundamental challenge in interdisciplinary ecotoxicology reviews [1]. The screening phase did not adequately categorize studies by methodology for later synthesis.

Solution:

- Tag During Screening: During the full-text screening phase, implement additional tagging for key methodological variables (e.g., study_type: field_observational, lab_experimental, computational_model; scale: mesocosm, watershed, population).

- Use a Structured Data Extraction Tool: Employ tools that force extraction into predefined fields related to methods (e.g., test organism life stage, exposure duration, measured endpoints) rather than free text. The ECOTOX Knowledgebase data structure is a good model [6].

- Synthesize by Methodological Group: Structure your results synthesis not just by outcome, but by methodological approach. Clearly state how different methods contribute to the overall weight of evidence, acknowledging the strengths and limitations of each [7].
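The tagging and grouping steps above could be represented with a small structured record, as in the sketch below. The class and field names (`TaggedStudy`, `StudyType`, `exposure_duration_days`) are hypothetical choices for illustration and do not mirror the ECOTOX schema or any screening platform's export format.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class StudyType(Enum):
    FIELD_OBSERVATIONAL = "field_observational"
    LAB_EXPERIMENTAL = "lab_experimental"
    COMPUTATIONAL_MODEL = "computational_model"


@dataclass(frozen=True)
class TaggedStudy:
    """One included study with predefined methodological fields."""
    study_id: str
    study_type: StudyType
    scale: str  # e.g. "mesocosm", "watershed", "population"
    test_organism: Optional[str] = None
    exposure_duration_days: Optional[float] = None


def group_by_study_type(studies):
    """Group study IDs by methodology for later synthesis."""
    groups = defaultdict(list)
    for study in studies:
        groups[study.study_type].append(study.study_id)
    return dict(groups)


studies = [
    TaggedStudy("S1", StudyType.LAB_EXPERIMENTAL, "mesocosm", "Daphnia magna", 2.0),
    TaggedStudy("S2", StudyType.FIELD_OBSERVATIONAL, "watershed"),
    TaggedStudy("S3", StudyType.LAB_EXPERIMENTAL, "population", "zebrafish embryo", 4.0),
]
groups = group_by_study_type(studies)
print(groups)
```

Using an enumeration rather than free text for `study_type` prevents spelling variants from splitting one methodological group into several.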

Experimental Protocols for Cited Screening Methodologies

- Objective: To consistently apply interdisciplinary eligibility criteria for title/abstract screening in an SR.

- Materials: Access to OpenAI API (GPT-3.5 Turbo or similar), a corpus of article titles/abstracts in a manageable format (CSV/JSON), a labeled training dataset of 100-200 articles reviewed by experts.

- Procedure:

- Expert Calibration: Reviewers independently screen a pilot set of articles, resolve disagreements, and finalize eligibility criteria.

- Prompt Engineering: Translate the final eligibility criteria into a clear, structured LLM prompt (e.g., "You are a systematic review screener. Based on the following title and abstract, determine if the study is relevant. Criteria: [List]. Respond only with 'Include' or 'Exclude.'").

- Model Fine-Tuning: Use the OpenAI fine-tuning API with your labeled dataset. Suggested hyperparameters: epochs=4, learning_rate_multiplier=0.1, batch_size=8 [1].

- Stochastic Sampling & Decision: Run the fine-tuned model on each article multiple times (e.g., 15 runs) due to inherent stochasticity. Take the majority vote as the final decision [1].

- Validation: Apply the model to a held-out test set of expert-screened articles. Calculate agreement metrics (Cohen's Kappa, F1 score).
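The prompt-construction and majority-vote steps of this procedure can be sketched as follows. The model call is deliberately replaced by a stub: `make_stub_classifier` is a hypothetical stand-in that returns "Exclude" on every third call, so the example runs without any API access. In practice `classify` would wrap a call to your fine-tuned model; that wrapper is not shown here.

```python
from collections import Counter

# Prompt wording follows the example given in the procedure above.
PROMPT_TEMPLATE = (
    "You are a systematic review screener. Based on the following title and "
    "abstract, determine if the study is relevant. Criteria: {criteria}. "
    "Respond only with 'Include' or 'Exclude.'\n\n"
    "Title: {title}\nAbstract: {abstract}"
)


def build_prompt(criteria, title, abstract):
    """Fill the screening prompt with the agreed eligibility criteria."""
    return PROMPT_TEMPLATE.format(
        criteria="; ".join(criteria), title=title, abstract=abstract
    )


def majority_vote(classify, prompt, runs=15):
    """Call the classifier `runs` times; return the top label and its vote share."""
    votes = Counter(classify(prompt) for _ in range(runs))
    decision, count = votes.most_common(1)[0]
    return decision, count / runs


def make_stub_classifier():
    """Hypothetical stand-in for a stochastic model call (no API used)."""
    calls = {"n": 0}

    def classify(prompt):
        calls["n"] += 1
        return "Exclude" if calls["n"] % 3 == 0 else "Include"

    return classify


prompt = build_prompt(
    ["aquatic invertebrates", "reports a toxicity endpoint"],
    "Microplastic effects on Daphnia magna",
    "We measured 48-h mortality after exposure to polystyrene particles.",
)
decision, share = majority_vote(make_stub_classifier(), prompt, runs=15)
print(decision, round(share, 2))
```

An odd number of runs avoids ties between two labels, and the vote share can be logged as a rough indicator of how borderline a record is.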

- Objective: To rapidly screen and synthesize a very large, multidisciplinary corpus for an SR.

- Materials: InsightAgent or similar multi-agent framework, full-text or abstract corpus.

- Procedure:

- Corpus Mapping & Partitioning: The system projects the literature corpus into a Radial-based Relevance and Similarity (RSS) map, where article position indicates relevance (center) and semantic similarity (clusters) [3].

- Cluster Assignment: The map is partitioned into distinct semantic clusters (e.g., using K-means). Each cluster is assigned to a dedicated AI agent.

- Parallel Agent Processing: Each agent explores its cluster, starting from the most relevant (central) articles. It reads, summarizes, and makes screening decisions based on the review's objectives.

- Human Oversight & Interaction: Researchers monitor the agents' "trajectories" on the visual map. They can intervene to redirect an agent, adjust its focus, or correct its decisions, providing real-time expert feedback.

- Evidence Synthesis: Agents generate interim summaries for their clusters. A final synthesis agent or the human team integrates these into a cohesive review, supported by a provenance tree tracing claims back to source articles [3].
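As a simplified illustration of the cluster-assignment and center-first ordering described in this procedure, the sketch below builds one reading queue per cluster, sorted by relevance. It assumes cluster labels and relevance scores have already been computed elsewhere; the function `build_agent_queues` and the tuple layout are hypothetical and are not InsightAgent's actual interface.

```python
from collections import defaultdict


def build_agent_queues(articles):
    """Build one queue per cluster, most relevant article first.

    `articles` is an iterable of (article_id, cluster_id, relevance) tuples.
    """
    by_cluster = defaultdict(list)
    for article_id, cluster_id, relevance in articles:
        by_cluster[cluster_id].append((relevance, article_id))
    return {
        cluster: [aid for _, aid in sorted(items, key=lambda x: -x[0])]
        for cluster, items in by_cluster.items()
    }


articles = [
    ("a1", "hydrology", 0.42),
    ("a2", "chemistry", 0.91),
    ("a3", "hydrology", 0.88),
    ("a4", "chemistry", 0.35),
    ("a5", "ecology", 0.67),
]
queues = build_agent_queues(articles)
print(queues)
```

Each queue could then be handed to a separate worker, which is the sense in which the clusters are processed in parallel.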

- Objective: To conduct a broad scoping review of available methods and tools (e.g., for exposure assessment).

- Materials: Sysrev web platform or similar (DistillerSR, Rayyan), predefined PICOS (Population, Intervention, Comparator, Outcome, Study) criteria.

- Procedure:

- Platform Setup: Upload retrieved references to the platform. Define inclusion/exclusion fields and screening forms.

- AI-Assisted Priority Screening: Screen an initial random subset of references (e.g., 3,000) with the platform's AI "watching." The AI learns to predict the likelihood of inclusion.

- Prioritized Screening: Screen the remaining references in order of the AI-predicted likelihood of inclusion (e.g., >45% likelihood). This increases the rate of finding relevant papers quickly [2].

- Dual Verification: Maintain a human verification step, especially for lower-probability articles, to ensure recall.

- Data Extraction & Mapping: Use the platform's tools to extract and chart key data (e.g., tool names, chemical classes, exposure routes) from included studies to map the available evidence [2].
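The prioritized-screening and dual-verification steps amount to ranking records by predicted inclusion likelihood and splitting them at a threshold. The sketch below assumes each record already carries a probability under the hypothetical key `p_include`; how a given platform exposes its predictions will differ.

```python
def prioritize(records, threshold=0.45):
    """Rank records by predicted inclusion likelihood and split at a threshold.

    Returns (high, low): `high` is screened first; `low` is kept for the
    human verification pass that protects recall.
    """
    ranked = sorted(records, key=lambda r: r["p_include"], reverse=True)
    high = [r for r in ranked if r["p_include"] > threshold]
    low = [r for r in ranked if r["p_include"] <= threshold]
    return high, low


records = [
    {"id": "r1", "p_include": 0.91},
    {"id": "r2", "p_include": 0.12},
    {"id": "r3", "p_include": 0.58},
    {"id": "r4", "p_include": 0.45},
]
high, low = prioritize(records)
print([r["id"] for r in high], [r["id"] for r in low])
```

Low-probability records are retained rather than discarded, so the verification step can still sample from them.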

Table 1: Performance Comparison of AI Screening Methodologies

| Methodology | Reported Efficiency Gain | Key Strength | Primary Challenge | Best Suited For |
|---|---|---|---|---|
| Fine-Tuned LLM [1] | Substantial agreement with humans (Kappa) | High consistency in applying complex criteria | Requires quality labeled data for tuning | Reviews with clear, complex eligibility rules |
| Multi-Agent AI (InsightAgent) [3] | Completes SR in ~1.5 hours vs. months | Handles large, diverse corpora via parallel processing | System complexity; requires interactive oversight | Large, interdisciplinary reviews |
| AI-Prioritized Screening (Sysrev) [2] | Increased relevant hit rate during screening | Efficiently prioritizes workload for human screeners | Less autonomous; still human-dependent | Scoping reviews & large-scale evidence mapping |

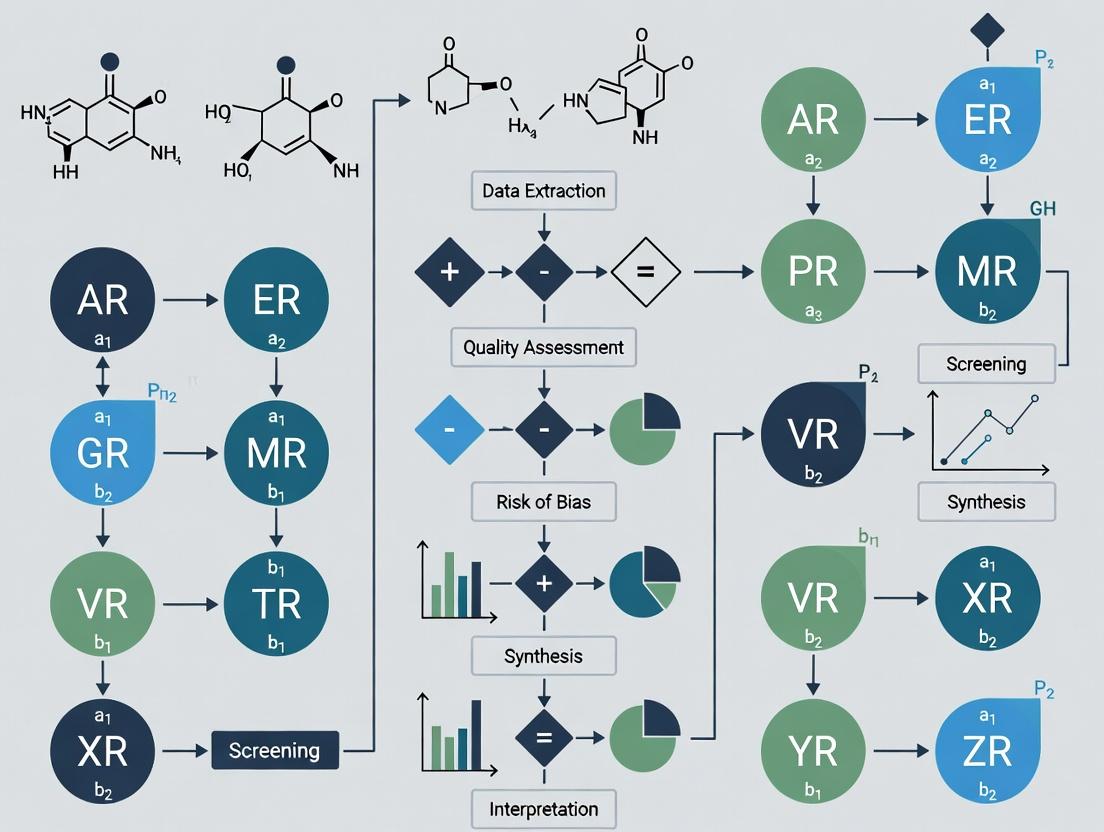

Visualizations of Screening Workflows and Relationships

Diagram 1: Ecotoxicology Systematic Review Screening Workflow

Diagram 2: AI-Human Collaborative Screening System Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Digital Tools & Platforms for Ecotoxicology Review Screening

| Tool/Resource Name | Type | Primary Function in Screening | Key Consideration |
|---|---|---|---|
| Sysrev [2] | Web Platform | Integrates machine learning to prioritize and manage the screening process for systematic/scoping reviews. | Effective for evidence mapping and reviews with clear, categorical inclusion data. |
| InsightAgent [3] | Multi-Agent AI Framework | Partitions literature by semantics for parallel AI agent processing, with human-in-the-loop visualization. | Designed for rapid synthesis of large corpora; requires technical setup and interactive oversight. |
| Fine-Tuned LLM (e.g., GPT) [1] | AI Model | Provides consistent, automated application of complex eligibility criteria to titles/abstracts/full texts. | Performance depends heavily on the quality of training data and prompt engineering. |
| EPA ECOTOX Knowledgebase [6] | Curated Database | Provides pre-extracted toxicity data for chemicals and species; useful for validating scope and informing criteria. | Not a screening tool per se, but a critical resource for defining relevant endpoints and understanding data landscape. |
| Explainable AI (XAI) Principles [8] | Conceptual Framework | Guides the selection and implementation of AI tools that provide transparent, interpretable decisions for auditing. | Critical for maintaining scientific rigor and trust when using "black box" AI models in high-stakes reviews. |
| Interdisciplinary Glossary [4] [5] | Documentation | Serves as an agreed-upon reference to align team understanding of key toxicological and ecological terms. | A simple but foundational tool to mitigate the core challenge of interdisciplinary jargon [1]. |

Technical Support Center: Troubleshooting Automated Screening Tools

Frequently Asked Questions (FAQs)

Q1: Our automated screening tool (e.g., ASReview, Rayyan with AI) is flagging too many irrelevant studies in the 'included' set after the first training round. What went wrong? A: This is often due to unrepresentative or insufficient initial training data. The algorithm may be overfitting to your first few relevance judgments.

- Solution: Re-initialize the screening and provide a more balanced "prior knowledge" set. Manually identify and include 5-10 highly relevant ("golden") studies and 10-15 clearly irrelevant studies before starting active learning. This teaches the model the boundaries of your inclusion criteria more effectively.

Q2: During dual-reviewer screening with an AI-assisted tool, how do we resolve discrepancies when the AI's prediction heavily influenced one reviewer? A: The AI should be an aid, not an arbitrator. Implement a blinded reconciliation phase.

- Protocol: 1) Both reviewers screen independently with AI suggestions hidden. 2) Compare results. 3) For conflicting decisions, reveal the AI's prediction score and the other reviewer's decision. 4) Discuss the study's abstract/full text against the protocol's PICO criteria to make a final, consensus decision. This minimizes automation bias.

Q3: We are using a text classifier (e.g., in DistillerSR, SWIFT-Review) and performance seems poor for our ecotoxicology topic. How can we improve it? A: Ecotoxicology-specific terminology may not be well-represented in general models.

- Solution: Create and apply a custom synonym dictionary or thematic lexicon. For example, map all variant terms ("Daphnia magna," "D. magna," "water flea") to a standardized key. Augment your training data by including studies from known, relevant systematic reviews in your field to improve contextual understanding.
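A custom synonym dictionary of the kind described in Q3 can be applied as a text-normalization pass before classification. The sketch below uses regular expressions as one possible approach; the `LEXICON` entries and the function names are illustrative assumptions, and a real lexicon would be far larger and built with domain experts.

```python
import re

# Illustrative lexicon: standardized key -> variant spellings.
LEXICON = {
    "daphnia_magna": ["Daphnia magna", "D. magna", "water flea"],
    "lc50": ["LC50", "LC 50", "median lethal concentration"],
}


def compile_lexicon(lexicon):
    """Compile one case-insensitive pattern per standardized key."""
    patterns = []
    for key, variants in lexicon.items():
        # Try longer variants first so multi-word terms match in full.
        ordered = sorted(variants, key=len, reverse=True)
        alternatives = "|".join(re.escape(v) for v in ordered)
        # Lookarounds keep matches from starting or ending inside a word.
        pattern = re.compile(rf"(?<!\w)(?:{alternatives})(?!\w)", re.IGNORECASE)
        patterns.append((pattern, key))
    return patterns


def normalize(text, patterns):
    """Replace every known variant with its standardized key."""
    for pattern, key in patterns:
        text = pattern.sub(key, text)
    return text


patterns = compile_lexicon(LEXICON)
normalized = normalize("The 48-h LC 50 for D. magna (water flea) was low.", patterns)
print(normalized)
```

Normalizing before training means the classifier sees one token per concept instead of learning each spelling separately.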

Q4: Our screening workflow keeps stalling at the deduplication stage, with many false positives. A: Standard deduplication often fails with preprints, conference abstracts, and different database export formats.

- Troubleshooting Guide:

- Pre-Process Exports: Ensure all imports are in the same format (e.g., RIS) and from consistent sources.

- Use Fuzzy Matching: Enable settings for matching on "Title + Author + Year" with a similarity threshold (e.g., 90-95%).

- Manual Check Pass: Sort the "duplicate groups" by confidence score and manually verify the top 50-100 groups. This trains you to identify common false-positive patterns (e.g., series reports).

- Protocol Note: Document your exact deduplication settings (software, fields, algorithm) in your methods section for reproducibility.
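The fuzzy "Title + Author + Year" matching in this guide can be approximated with Python's standard `difflib` module, as sketched below. The record layout (`title`, `authors`, `year`), the 0.92 threshold, and the helper names are assumptions for illustration; screening tools use their own, often more sophisticated, matching algorithms.

```python
import re
from difflib import SequenceMatcher


def dedup_key(record):
    """Build a normalized 'title + first author surname + year' string."""
    title = re.sub(r"[^a-z0-9 ]", "", record["title"].lower())
    title = " ".join(title.split())  # collapse repeated whitespace
    surname = record["authors"][0].split(",")[0].strip().lower()
    return f"{title} {surname} {record['year']}"


def find_duplicates(records, threshold=0.92):
    """Return (i, j, score) for record pairs at or above the threshold.

    Pairwise comparison is quadratic, so this suits small batches only.
    """
    keys = [dedup_key(r) for r in records]
    pairs = []
    for i in range(len(keys)):
        for j in range(i + 1, len(keys)):
            score = SequenceMatcher(None, keys[i], keys[j]).ratio()
            if score >= threshold:
                pairs.append((i, j, round(score, 3)))
    return pairs


records = [
    {"title": "Effects of microplastics on Daphnia magna reproduction",
     "authors": ["Smith, J."], "year": 2021},
    {"title": "Effects of Microplastics on Daphnia magna Reproduction.",
     "authors": ["Smith, John"], "year": 2021},
    {"title": "Copper toxicity in zebrafish embryos",
     "authors": ["Lee, K."], "year": 2019},
]
pairs = find_duplicates(records)
print(pairs)
```

Returning the score with each pair supports the manual check pass, since groups can be sorted by confidence before verification.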

Q5: How do we validate that our AI-assisted screening process did not miss key studies? A: You must perform a validation check, often called a "stopping rule" verification.

- Detailed Methodology:

- After the AI-assisted screening is complete (e.g., after screening 20% of the corpus), take a random sample of all records excluded by the tool.

- The sample size should be statistically justified (e.g., 95% confidence level, 5% margin of error). For 10,000 excluded records, sample ~370.

- Screen this sample of "excluded" studies at the full-text level against your eligibility criteria.

- Calculate the proportion of missed relevant studies. If this proportion is below an acceptable threshold (e.g., <1%), you can confidently stop. If it meets or exceeds the threshold, add the newly found relevant studies to the training data, retrain the model, and continue screening.
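The sample size quoted in this methodology is consistent with the standard formula for estimating a proportion with a finite population correction. The sketch below reproduces that arithmetic under the usual conservative assumption p = 0.5; the function names and the fixed random seed are illustrative choices.

```python
import math
import random


def validation_sample_size(population, z=1.96, margin=0.05, p=0.5):
    """Sample size for a proportion, with finite population correction.

    z=1.96 corresponds to a 95% confidence level; p=0.5 is the most
    conservative assumption about the true proportion.
    """
    n0 = (z ** 2) * p * (1 - p) / margin ** 2
    return math.ceil(n0 / (1 + (n0 - 1) / population))


def draw_validation_sample(excluded_ids, seed=42):
    """Draw a reproducible random sample of excluded records."""
    size = min(validation_sample_size(len(excluded_ids)), len(excluded_ids))
    return random.Random(seed).sample(excluded_ids, size)


excluded_ids = [f"rec{i}" for i in range(10_000)]
sample = draw_validation_sample(excluded_ids)
print(len(sample))  # 370
```

Recording the seed alongside the sample makes the validation step reproducible for anyone auditing the review.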

Quantitative Data on the Screening Burden

Table 1: Time and Cost Implications of Manual vs. Automated Screening

| Metric | Manual Screening (Traditional) | AI-Assisted Screening (Active Learning) | Data Source & Context |
|---|---|---|---|
| Screening Time | 100% (Baseline) | Reduced by 50-90%. Typically requires screening only 10-25% of the total corpus to identify 95% of relevant studies. | Simulation studies across biomedical domains. |
| Cost Per Review | High. Primarily driven by personnel time (weeks to months of salary). | Substantially Lower. Reduces person-hours by a proportional amount to time saved. Software costs are fixed. | Economic analyses of systematic review production. |
| Human Error Rate (Missed Studies) | Estimated 5-10% inconsistency rate between independent human reviewers. | Can be reduced to <1-2% when used with a proper validation stop rule (see FAQ #5). | Studies on inter-rater reliability in environmental health reviews. |
| Optimal Use Case | Necessary for very small datasets (<100 records) or when criteria are highly complex and non-textual. | Essential for large-scale reviews (>1000 records). Most beneficial in the title/abstract phase. | Best practice guidelines from CEEDER, SRDB. |

Experimental Protocol: Implementing an AI-Assisted Screening Workflow

Title: Protocol for a Dual-Reviewer, AI-Powered Title/Abstract Screening Phase in Ecotoxicology.

Objective: To efficiently and accurately screen a large bibliographic dataset (n>5000) for relevance to a predefined PICO question using active learning.

Materials: See "The Scientist's Toolkit" below.

Methodology:

Preparation:

- Develop and register the systematic review protocol (including PICO).

- Export search results from all databases into RIS files.

- Import all files into the chosen screening tool (e.g., ASReview).

- Perform automated deduplication using fuzzy matching on title, author, and year.

Prior Knowledge Injection (Critical Step):

- The review team manually identifies and labels 5-10 key relevant studies and 10-15 clearly irrelevant studies from the corpus. These are loaded into the tool as the initial training set.

Active Learning Screening Loop:

- The AI model (e.g., naive Bayes, SVM) is trained on the initial set.

- The tool presents one record at a time, ranked by its predicted probability of relevance.

- Two independent reviewers screen each presented record, blinded to each other's decision and the AI's prediction score. They label each as "relevant" or "irrelevant."

- Each new decision is added to the training data, and the model is updated in real-time.

- This loop continues until the pre-defined stopping rule is triggered.

Stopping Rule & Validation:

- A stopping rule is pre-defined (e.g., after 100 consecutive irrelevant records).

- Upon triggering the rule, execute the validation methodology described in FAQ #5.

- If the validation passes, the title/abstract phase is complete. Proceed to full-text retrieval.
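The example stopping rule above (a run of consecutive irrelevant records) is simple to check programmatically. The sketch below assumes screening decisions arrive in the order records were presented; the function name `stopping_index` and the toy label sequence are illustrative.

```python
def stopping_index(labels, window=100):
    """Return how many records were screened when the rule triggers.

    `labels` holds screening decisions in presentation order
    (True = relevant). Returns None if the rule never triggers.
    """
    consecutive = 0
    for screened, relevant in enumerate(labels, start=1):
        # Any relevant record resets the run of irrelevant ones.
        consecutive = 0 if relevant else consecutive + 1
        if consecutive >= window:
            return screened
    return None


# Two early relevant hits, then 100 irrelevant records in a row.
labels = [True, False, True] + [False] * 100 + [True]
print(stopping_index(labels))  # 103
```

A heuristic like this only signals when to pause; the sampled validation of excluded records is what supports the decision to stop.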

Reconciliation:

- For records where reviewers disagreed during the active learning phase, a third reviewer adjudicates based on the PICO criteria, with the AI score remaining hidden.

Visualizations

Diagram 1: AI-Assisted Systematic Review Workflow

Diagram 2: Human-AI Interaction in Screening Decision

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Automated Screening in Ecotoxicology

| Tool / Resource | Function & Explanation |
|---|---|
| ASReview (Open Source) | Core active learning screening software. Allows for custom model selection and is highly flexible for research on screening automation itself. |
| Rayyan (Freemium) | Web-based tool with a user-friendly interface and basic AI assistance. Excellent for collaborative screening across institutions. |
| DistillerSR (Commercial) | Full-featured, enterprise-level systematic review management software with advanced AI, deduplication, and workflow customization. |
| SYRCLE's Toolbox | A set of tools and guidelines specifically for animal studies, crucial for adapting PICO criteria for ecotoxicology models. |
| EndNote / Zotero | Reference managers for initial collection and deduplication before import into specialized screening tools. |
| PubMed / ETOX DB APIs | Programmatic access to database entries allows for reproducible search strategies and bulk data retrieval. |
| Custom Ecotoxicology Lexicon | A pre-defined list of standardized terms (species, chemicals, endpoints) to improve text mining accuracy. |
| Reporting Guideline (PRISMA) | The PRISMA checklist and flow diagram template are essential for reporting the modified, AI-assisted screening method transparently. |

This technical support center is designed for researchers, scientists, and drug development professionals conducting systematic reviews in ecotoxicology. It provides targeted troubleshooting guides and FAQs to help you overcome common challenges when implementing automation tools for screening studies. The content is framed within a broader thesis on enhancing the efficiency and reliability of evidence synthesis through technological innovation [9] [10].

Troubleshooting Workflow for Automation Tools

Adopting a structured approach is critical when diagnosing issues with systematic review automation. The following workflow, adapted from established technical troubleshooting methodologies, provides a logical progression from problem identification to resolution [11].

Frequently Asked Questions (FAQs) and Troubleshooting Guides

Tool Selection and Implementation

Problem: I'm overwhelmed by the number of tools available (e.g., Covidence, Rayyan, DistillerSR). How do I choose the right one for my ecotoxicology review? [9] [12]

- Solution: Base your selection on project needs. For a full-process platform, consider Covidence or DistillerSR, which manage screening, data extraction, and quality assessment [12]. If you need a free, collaborative screener, Rayyan is an excellent starting point [9]. For reviews incorporating AI-based prioritization, explore tools like EPPI-Reviewer or SWIFT-Review [12]. Always start with a pilot test on a small subset of your references.

Problem: My team and I are self-taught on an automation tool, and we lack confidence. This is a common barrier to adoption [9]. Where can we find reliable training?

- Solution: First, consult the official knowledge base and tutorial videos provided by the tool developer. Second, seek out method papers that validate the tool's use (see Table 1). Third, engage with the research community through forums or networks like the International Collaboration for the Automation of Systematic Reviews (ICASR).

Workflow and Process Efficiency

Problem: The promised time savings from automation aren't materializing. Our screening phase is still taking too long.

- Solution: This often stems from a poorly configured workflow. Ensure you are using the tool's deduplication functions before screening begins. For AI-powered tools, the "work saved" is not immediate; you must first manually screen a sufficient batch of references (often 500-1000) to train the algorithm before it can effectively prioritize the remainder [10]. Re-evaluate your inclusion/exclusion criteria for clarity.

Problem: We have discrepancies in how different reviewers apply labels during screening, undermining the AI model's learning.

- Solution: Before full screening, conduct a calibration exercise. All reviewers should independently screen the same 50-100 abstracts, compare decisions, and discuss disagreements to refine the protocol. Use your tool's feature to resolve conflicts formally. This establishes consistency, which is crucial for both manual and automated screening accuracy [12].

Technical Performance and Accuracy

Problem: Our AI-based screening tool is excluding too many relevant studies (high false negatives). How do we improve recall?

- Solution: High recall (minimizing false negatives) is paramount [10]. First, check if your training set is representative and large enough. Second, adjust the tool's confidence threshold to be more inclusive. Third, consider a hybrid approach. A study found that a rules-based filter looking for the co-occurrence of key study characteristics (like Exposure and Outcome) in an abstract achieved 98% recall, potentially outperforming some standard ML classifiers [10]. You can apply such a filter before or after AI screening as a safety check.

Problem: The tool's performance seems erratic and different from validation studies we've read.

- Solution: Remember that tool performance is question- and corpus-dependent. An AI model validated on clinical trial abstracts may not perform as well on ecotoxicological observational studies due to different writing conventions and terminology. Treat validation study metrics as a guide, not a guarantee. Continuously monitor your project's specific performance metrics.

Data and Collaboration

Problem: We need to transfer data (e.g., screened references) from one tool to another, but we're worried about losing information.

- Solution: Always export and back up your data at each major stage. Most tools allow export in RIS or CSV formats. When transferring, map fields carefully and perform a spot-check on a sample of records post-import to ensure fidelity. Document all data handling steps for reproducibility [12].

Problem: Collaboration features in our tool are clunky, causing version control issues and communication gaps within the team.

- Solution: Clearly define and document your collaboration protocol. Assign roles (e.g., who can make final inclusion decisions). Use the tool's built-in commenting or conflict resolution modules for all discussions related to specific studies to maintain an audit trail. Schedule regular sync-ups outside the tool to discuss process issues.

The table below summarizes key features of major automation tools, based on survey data and technical evaluations [9] [12] [10].

Table 1: Comparison of Major Systematic Review Automation Tools

| Tool Name | Primary Screening Methodology | Key Features & Integration | Reported User Adoption & Notes |
|---|---|---|---|
| Covidence | Manual screening with AI prioritization (in some versions) | Manages title/abstract screening, full-text review, risk of bias (RoB), data extraction. Integrates with reference managers. | Top-used tool (45% of respondents). Commonly abandoned (15%), indicating potential usability challenges [9]. |
| Rayyan | Manual screening with ML-based ranking and deduplication | Free, collaborative web app for blinding and resolving conflicts during screening. | Used by 22% of respondents; also highly abandoned (19%), suggesting users may outgrow its initial features [9]. |
| DistillerSR | Configurable manual screening with AI assist | Highly customizable forms for screening and data extraction, strong compliance and audit trail features. | Robust platform for large-scale reviews; noted as abandoned by 14% of users [9]. |
| EPPI-Reviewer | Manual screening with active learning (AI prioritization) | Supports complex review types (e.g., meta-narrative, framework synthesis). Code is open-source. | Part of the "Big Four" comprehensive platforms. Known for active learning capabilities [12]. |
| JBI SUMARI | Manual screening | Supports systematic reviews, umbrella reviews, and scoping reviews across diverse fields. | Developed by the Joanna Briggs Institute; part of the comprehensive platform suite [12]. |
| PECO/EO Rule-Based Filter [10] | Automated exclusion based on missing key characteristics | Uses NLP to detect if Exposure and Outcome terms are absent from an abstract. | Not a standalone tool, but a method. Research demonstrated 93.7% exclusion rate with 98% recall, offering a high-recall pre-screening filter [10]. |

Experimental Protocol: PECO-Based Automated Screening

The following protocol details a validated, rules-based methodology for automating the initial screening of observational studies in fields like ecotoxicology. This approach can be implemented using text-mining software (e.g., the General Architecture for Text Engineering - GATE) or as a pre-processing step before using commercial screening tools [10].

Detailed Methodology [10]:

Search & Corpus Creation: Execute your systematic search strategy in relevant databases (e.g., PubMed, Web of Science, Environment Complete). Import results into a reference manager, remove duplicates, and export the titles and abstracts of all unique references into a plain text format suitable for text mining.

Development of Characteristic Dictionaries: For your specific review question, create controlled vocabularies.

- Population (P): Terms describing the organisms or systems studied (e.g., "Daphnia magna," "zebrafish embryo," "soil microbiome").

- Exposure (E): Terms for the chemical or stressor (e.g., "microplastic," "herbicide," "heavy metal," "temperature stress").

- Outcome (O): Terms for the measured effects (e.g., "mortality," "growth inhibition," "gene expression," "reproductive success").

- Confounders (C): (Optional) Terms for adjusting factors. The source study found that confounder terms were rarely stated in abstracts and that including them reduced screening efficiency [10].

Text Mining and Rule Execution: Using a text-mining platform (e.g., GATE), implement a rule-based algorithm. The algorithm parses each abstract sentence, identifies key nouns and phrases, and matches them against the P, E, C, O dictionaries. The output is a simple binary code for each abstract indicating the presence or absence of phrases from each category.

Application of Screening Threshold: Apply a pre-defined inclusion rule. The validation study found the most effective rule was: "Include a study for manual screening only if the algorithm detects terms for both Exposure (E) AND Outcome (O) in the abstract." Studies missing either E or O terms are automatically excluded. This rule achieved a recall of 98%, meaning it missed only 2% of truly relevant studies, while saving approximately 90% of the manual screening workload [10].

Validation and Manual Review: The final step is to manually screen the subset of studies flagged as "includes" by the algorithm. It is critical to document the performance of the automated step (calculating its recall and precision against a small, manually screened sample) in your systematic review methods section.
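The E AND O rule described above can be sketched in a few lines of Python. This is a simplified illustration, not the GATE pipeline from the validation study: it uses plain case-insensitive keyword matching instead of sentence parsing, and the dictionaries, record IDs, and abstracts are hypothetical placeholders.

```python
import re

# Hypothetical, deliberately tiny dictionaries; a real review needs curated vocabularies.
EXPOSURE_TERMS = ["microplastic", "herbicide", "heavy metal", "temperature stress"]
OUTCOME_TERMS = ["mortality", "growth inhibition", "gene expression", "reproductive success"]


def contains_any(text, terms):
    """True if any term starts at a word boundary in the text (case-insensitive)."""
    lowered = text.lower()
    return any(re.search(r"\b" + re.escape(term), lowered) for term in terms)


def eo_rule(abstract):
    """Return binary presence codes for E and O plus the screening decision."""
    has_e = contains_any(abstract, EXPOSURE_TERMS)
    has_o = contains_any(abstract, OUTCOME_TERMS)
    decision = "manual_screening" if (has_e and has_o) else "auto_exclude"
    return {"E": int(has_e), "O": int(has_o), "decision": decision}


# Made-up example records.
abstracts = {
    "rec1": "Daphnia magna exposed to microplastics showed increased mortality after 48 h.",
    "rec2": "A survey of herbicide sales in three agricultural regions.",
    "rec3": "",  # a missing abstract is auto-excluded under this rule
}
results = {rec_id: eo_rule(text) for rec_id, text in abstracts.items()}
for rec_id, result in results.items():
    print(rec_id, result)
```

Note that the empty abstract is excluded automatically, which is why missing abstracts should be checked before the rule is applied.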

The Researcher's Toolkit: Essential Materials & Reagents

Table 2: Key Research Reagent Solutions for Automated Screening Experiments

| Item | Function in the Experimental Protocol | Notes & Considerations |
|---|---|---|
| Reference Corpus | The primary "reagent": A cleaned, deduplicated set of study titles and abstracts in machine-readable format (e.g., XML, JSON, plain text). | Quality is critical. Ensure abstracts are correctly matched to citations. Missing abstracts will be auto-excluded, potentially lowering recall. |
| Characteristic Dictionaries | Controlled vocabularies defining key concepts (P, E, O) for the NLP algorithm. Act as specific "detection probes." | Must be developed with domain expertise. Start from MeSH terms or authoritative glossaries. Requires iterative refinement and testing. |
| Text-Mining Software (e.g., GATE) | The "instrument" for executing the rule-based screening protocol. Processes the corpus using the dictionaries and linguistic rules. | GATE is open-source and provides a framework for developing custom processing pipelines. Alternatively, scripts can be written in Python (using NLTK, spaCy) or R. |
| Gold Standard Test Set | A subset of references (min. 50-100) that have been definitively classified (include/exclude) by human experts. | Used to calibrate dictionaries and validate the algorithm's performance (calculate recall/precision). Essential for reporting methodology. |
| Deduplication Tool | A pre-processing tool to remove duplicate records from multiple database searches. | Built into many reference managers (EndNote, Zotero) and systematic review platforms (Covidence, Rayyan). Critical for an accurate workflow. |
| Reporting Checklist (PRISMA) | A guideline framework for transparently reporting the entire review process, including the use of automation tools. | Using automation affects the PRISMA flow diagram. You must report the number of records excluded by the automation tool and its performance [12]. |
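Where a reference manager is not available, the deduplication step listed in the table can be approximated with a short script. The sketch below is an assumption-laden illustration: it treats records as dictionaries with hypothetical `title`, `year`, and `doi` keys, matches on normalized DOI first and on normalized title plus year second, and does no fuzzy matching. The DOIs shown are made up.

```python
import re


def normalize_doi(doi):
    """Lower-case a DOI and strip common URL or 'doi:' prefixes."""
    doi = (doi or "").strip().lower()
    return re.sub(r"^(https?://(dx\.)?doi\.org/|doi:\s*)", "", doi)


def normalize_title(title):
    """Lower-case a title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", (title or "").lower()).strip()


def deduplicate(records):
    """Keep the first record seen for each DOI or (title, year) key."""
    seen, unique = set(), []
    for rec in records:
        keys = []
        doi = normalize_doi(rec.get("doi"))
        if doi:
            keys.append(("doi", doi))
        title = normalize_title(rec.get("title"))
        if title:
            keys.append(("title", title, rec.get("year")))
        if any(key in seen for key in keys):
            continue  # duplicate of an earlier record
        seen.update(keys)
        unique.append(rec)
    return unique


# Made-up records from two hypothetical database exports.
records = [
    {"title": "Microplastic effects on Daphnia magna", "year": 2021, "doi": "10.1000/abc123"},
    {"title": "Microplastic Effects on Daphnia magna.", "year": 2021, "doi": "https://doi.org/10.1000/ABC123"},
    {"title": "Microplastic effects on Daphnia magna", "year": 2021, "doi": ""},
    {"title": "Herbicide toxicity in zebrafish embryos", "year": 2019, "doi": None},
]
unique_records = deduplicate(records)
print(len(records), "->", len(unique_records))
```

Records with neither a DOI nor a title are kept rather than merged, since there is nothing reliable to match on.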

For researchers conducting systematic reviews in ecotoxicology, the Ecotoxicology (ECOTOX) Knowledgebase is an indispensable, publicly available resource for streamlining the initial evidence-gathering phase [6]. It is a comprehensive, curated database that provides information on the adverse effects of single chemical stressors on ecologically relevant aquatic and terrestrial species [6]. By compiling peer-reviewed test results into a structured, searchable format, ECOTOX addresses one of the most time-consuming steps in systematic reviews: the identification and collation of relevant toxicity data.

The database is curated from over 53,000 scientific references, encompassing more than one million test records for over 13,000 species and 12,000 chemicals [6]. This vast repository allows researchers to rapidly access toxicity benchmarks, inform ecological risk assessments, and support chemical registration processes without starting literature searches from scratch [6]. Within the context of automating systematic review screening, tools like ECOTOX serve as a critical pre-filtered data layer. They reduce the volume of primary literature that must be manually screened by sophisticated AI-driven tools (e.g., SWIFT-Active Screener, EPPI-Reviewer) in later stages, thereby accelerating the entire evidence synthesis workflow [12] [13].

The following table summarizes the core attributes and relevance of the ECOTOX Knowledgebase to automated systematic reviewing:

Table: The ECOTOX Knowledgebase as a Foundational Resource for Automated Screening

| Attribute | Description | Role in Systematic Review Automation |
|---|---|---|
| Data Scope | >1M test records; 13K species; 12K chemicals; from 53K references [6]. | Provides a massive, pre-identified corpus of relevant studies, reducing initial search burden. |
| Source Quality | Data abstracted from peer-reviewed literature via exhaustive search protocols [6]. | Ensures data quality and reliability for the downstream review process. |
| Key Functionality | Search by chemical, species, or effect; advanced filtering; data visualization [6]. | Enables rapid, targeted queries to gather a precise subset of data for a review question. |
| Regulatory Utility | Used to develop water quality criteria, ecological risk assessments, and support TSCA evaluations [6]. | Directly supports regulatory-focused systematic reviews common in ecotoxicology. |
| Integration Potential | Data can be exported for use in other screening and analysis tools [6]. | Serves as a high-quality data feed for dedicated systematic review software platforms. |

Core Workflow: Integrating Curated Databases with Active Learning Tools

The most efficient modern systematic reviews in ecotoxicology combine the breadth of curated databases with the intelligent prioritization of active learning screening tools. This integration creates a hybrid workflow that significantly enhances efficiency.

The foundational step involves using the ECOTOX Knowledgebase to execute a precise, high-recall query based on the review's PICO criteria (Population/Plant, Intervention/Chemical, Comparator, Outcome) [6] [14]. The resulting set of literature citations and associated test records forms the initial corpus. This corpus is then imported into an active learning systematic review platform like SWIFT-Active Screener or EPPI-Reviewer [12] [13]. These platforms use machine learning models that learn from a reviewer's initial inclusion/exclusion decisions. They subsequently prioritize the remaining unscreened documents, pushing the most likely-to-be-relevant articles to the top of the queue [13]. This allows reviewers to identify the majority of relevant articles after screening only a fraction of the total list, achieving significant time savings [13].

Diagram: Integrated Workflow for Semi-Automated Evidence Gathering. This process combines the targeted data retrieval of curated databases with the intelligent prioritization of active learning tools to streamline screening [6] [13].

Technical Support Center: Troubleshooting Guides & FAQs

This section addresses common technical and methodological challenges researchers face when using curated databases and automation tools for systematic reviews.

FAQ 1: Data Retrieval and Handling

Q1: My query in the ECOTOX Knowledgebase returned an overwhelming number of results. How can I refine it to be more manageable for screening? A: An overly broad result set undermines efficiency. Use ECOTOX's 19 available filter parameters strategically [6]. Start by applying filters for the most critical aspects of your review protocol:

- Effect/Endpoint: Filter for the specific toxicity endpoints (e.g., "LC50," "mortality," "reproduction").

- Test Duration: Differentiate between acute and chronic studies.

- Exposure Medium: Specify aquatic (freshwater/saltwater) or terrestrial.

- Species Taxonomic Group: Filter to your relevant groups (e.g., "fish," "aquatic invertebrate").

Export the refined list and use it as the primary corpus for your screening tool. This targeted approach reduces noise and improves the performance of subsequent active learning algorithms [14] [13].

Q2: How do I handle the export from ECOTOX to ensure compatibility with my systematic review software (e.g., Covidence, SWIFT-Active Screener)? A: Compatibility is key for a smooth workflow. ECOTOX allows you to customize output selections from over 100 data fields during export [6]. For a seamless import into most screening tools:

- Ensure you export the core citation metadata (Author, Title, Journal, Year, DOI/PMID) as a standard format like .csv or .ris.

- The ECOTOX-specific test data (species, chemical, effect values) can be exported in a separate file. This detailed data is crucial for the subsequent data extraction phase after screening.

- Consult the "Help" section of your chosen systematic review software for specific import formatting requirements. Most modern tools accept standard bibliographic formats [12] [13].
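If a screening tool accepts only RIS while the citation metadata was exported as CSV, a small conversion script can bridge the two. The following is a minimal sketch: the CSV column names (`Author`, `Title`, `Journal`, `Year`, `DOI`), the semicolon-separated author convention, and the sample citation are all assumptions for illustration, not the actual ECOTOX export layout, so adjust them to your real file.

```python
import csv
import io

# Hypothetical export; real column headers may differ.
CSV_TEXT = """Author,Title,Journal,Year,DOI
"Smith, J.; Lee, K.",Chronic toxicity of Chemical X to Daphnia magna,Example Journal,2020,10.1000/example1
"""


def row_to_ris(row):
    """Render one citation row as a RIS journal-article record."""
    lines = ["TY  - JOUR"]
    for author in row["Author"].split(";"):
        if author.strip():
            lines.append(f"AU  - {author.strip()}")
    lines.append(f"TI  - {row['Title']}")
    lines.append(f"JO  - {row['Journal']}")
    lines.append(f"PY  - {row['Year']}")
    if row.get("DOI"):
        lines.append(f"DO  - {row['DOI']}")
    lines.append("ER  - ")  # end-of-record tag
    return "\n".join(lines)


rows = list(csv.DictReader(io.StringIO(CSV_TEXT)))
ris_text = "\n\n".join(row_to_ris(row) for row in rows)
print(ris_text)
```

Import a handful of converted records into the screening tool first and confirm that authors, titles, and DOIs land in the expected fields before converting the full export.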

FAQ 2: Integration with Screening Automation

Q3: The active learning model in my screening tool doesn't seem to be prioritizing relevant articles accurately. What could be wrong? A: Poor model performance often stems from an inadequate or biased initial "seed" set. The active learning model relies on your initial screening decisions to learn [13]. To fix this:

- Screen a Larger, Random Seed Set: Before relying on prioritization, screen a randomly selected batch of 100-200 articles. This gives the model a more representative foundation.

- Ensure Consistent Application of Inclusion Criteria: Review your protocol. Inconsistency in early decisions confuses the model. Dual screening on the seed set can improve consistency.

- Check Corpus Quality: If your initial corpus from ECOTOX is still too broad or off-topic, the model will struggle. Return to ECOTOX and refine your search with additional filters [6] [13].

Q4: How do I know when to stop screening with an active learning tool? When is it safe to assume I've found all relevant articles? A: You should not stop screening simply because relevant articles stop appearing consecutively. Reliable active learning tools like SWIFT-Active Screener incorporate a statistical recall estimation model [13]. This model continuously estimates the number of relevant articles remaining in the unscreened pile. A common best practice is to set a stopping threshold, such as screening until the model estimates with high confidence that over 95% of all relevant articles have been found. This provides an objective, data-driven stopping point instead of an arbitrary one [13].

FAQ 3: Regulatory and Validation Context

Q5: How can I validate that my semi-automated review process using these tools is robust enough for regulatory submission (e.g., for REACH, TSCA)? A: Regulatory acceptance hinges on transparency and methodological rigor. Your review protocol must pre-specify the use of these tools. Key steps include:

- Documenting Search & Filtering: Precisely record all ECOTOX search terms and filters used [6].

- Describing the Automation Method: Detail the active learning tool used, the seed set size, and the stopping criterion (e.g., 95% estimated recall) [13].

- Performing Quality Checks: Even with automation, a human quality check is essential. Plan to double-screen a random sample (e.g., 10-20%) of the excluded records to validate the model's accuracy and ensure no relevant studies were missed. This quality assurance data should be reported [12].
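The quality check on excluded records can be made reproducible by drawing the audit sample with a fixed random seed and reporting that seed in the methods. The sketch below assumes hypothetical record IDs and a 10% sample; the extrapolation is a simple point estimate with no confidence interval, so treat it as a rough indicator only.

```python
import math
import random


def draw_audit_sample(excluded_ids, fraction=0.10, seed=2025):
    """Draw a reproducible random sample of excluded record IDs for double-screening."""
    n = max(1, math.ceil(len(excluded_ids) * fraction))
    rng = random.Random(seed)  # fixed seed so the sample can be re-created and reported
    return sorted(rng.sample(sorted(excluded_ids), n))


def estimate_missed(n_excluded, n_sampled, n_relevant_in_sample):
    """Extrapolate the sample's miss rate to the whole excluded pile (point estimate)."""
    miss_rate = n_relevant_in_sample / n_sampled
    return miss_rate, round(miss_rate * n_excluded)


excluded = [f"rec{i:04d}" for i in range(1, 501)]  # 500 hypothetical excluded records
sample = draw_audit_sample(excluded, fraction=0.10)

# Suppose the second reviewer finds 1 relevant study in the audited sample.
rate, projected = estimate_missed(len(excluded), len(sample), n_relevant_in_sample=1)
print(len(sample), rate, projected)
```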

Q6: Are there other key EPA tools that complement ECOTOX in the evidence gathering and review process? A: Yes, the EPA's CompTox suite offers complementary tools. A critical one is the EPI Suite, a screening-level tool that estimates physical/chemical properties and environmental fate [15]. While ECOTOX provides observed toxicity data, EPI Suite's ECOSAR module can predict aquatic toxicity for chemicals with little or no available experimental data using Structure-Activity Relationships (SARs) [15]. This can be useful for prioritizing chemicals for review or filling data gaps. However, per EPA guidance, EPI Suite estimates "should not be used if acceptable measured values are available" [15].

Successful automation of systematic reviews requires a combination of specialized digital tools and a clear understanding of the experimental data being synthesized. The table below outlines key resources.

Table: Research Reagent Solutions: Digital Tools & Experimental Data Components

| Tool / Resource Name | Type | Primary Function in Review Automation | Key Consideration for Ecotoxicology |
|---|---|---|---|
| ECOTOX Knowledgebase [6] | Curated Database | Provides pre-identified, structured toxicity data as a high-quality starting corpus for screening. | Contains ecologically relevant species data. Must be queried carefully to align with review PICO. |
| SWIFT-Active Screener [13] | Active Learning Screening Software | Uses machine learning to prioritize references during title/abstract screening, drastically reducing workload. | Effective performance depends on a well-defined initial corpus (e.g., from ECOTOX). |
| EPPI-Reviewer, Covidence, DistillerSR [12] | Comprehensive Systematic Review Platform | Manages the entire review pipeline (screening, data extraction, risk of bias) in a collaborative, online environment. | Ensure the platform's data extraction forms can capture ecotoxicology-specific fields (e.g., test species, endpoint, exposure regime). |
| EPA EPI Suite (ECOSAR) [15] | Predictive (QSAR) Tool | Provides predicted ecotoxicity values for data-poor chemicals, aiding in prioritization or gap analysis. | A screening-level tool only. Predictions must be clearly distinguished from experimental data in the review. |
| Toxicity Test Data (from primary studies) | Experimental Evidence | The fundamental material for synthesis. Includes details on species, chemical, concentration, duration, endpoint, and measured effect. | Critical to extract all relevant metadata (e.g., OECD test guideline, water chemistry) for use in sensitivity and bias analyses. |
| Digital Object Identifier (DOI) | Reference Identifier | Enables reliable linking between curated database records, screening tool imports, and full-text documents. | Verifying DOIs during the initial data export/import phase prevents matching errors later. |

Experimental Protocol: Validating an Automated Screening Workflow

To empirically assess the efficiency gain from integrating a curated database with an active learning screener, researchers can follow this validation protocol.

Title: Protocol for Benchmarking a Semi-Automated Screening Workflow in Ecotoxicology Systematic Reviews.

Objective: To compare the screening efficiency and recall accuracy of a traditional screening approach versus a hybrid (ECOTOX + Active Learning) approach for a defined review question.

Materials: Access to the ECOTOX Knowledgebase [6], a licensed active learning screening tool (e.g., SWIFT-Active Screener [13]), a standard reference management tool.

Method:

- Define a Test Review Question: Select a focused question (e.g., "What are the chronic toxicity values of Chemical X to freshwater invertebrates?").

- Create a Gold Standard Reference Set: Manually perform an exhaustive, traditional systematic search across multiple bibliographic databases (e.g., PubMed, Scopus, Web of Science) for the test question. Have two independent reviewers screen all retrieved records to establish a final "gold standard" set of included studies.

- Execute the Hybrid Workflow:

- Benchmarking Analysis:

- Primary Outcome (Efficiency): Record the total number of records screened in the hybrid workflow before stopping. Calculate the percentage reduction in screening effort compared to the total number screened in the traditional method.

- Primary Outcome (Accuracy): Compare the final set of included studies from the hybrid workflow against the "gold standard" set. Calculate recall (percentage of gold standard studies found) and precision (percentage of included studies that are relevant).

- Validation: A valid hybrid workflow should achieve recall ≥ 95% while demonstrating a screening workload reduction of ≥ 50% compared to the traditional approach.
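The benchmarking outcomes and the validity thresholds above reduce to a few set operations. The sketch below is one possible way to compute them; the function name, study IDs, and screening counts are hypothetical, and the thresholds default to the 95% recall and 50% workload reduction stated in the protocol.

```python
def benchmark(gold_included, hybrid_included, n_screened_traditional, n_screened_hybrid,
              min_recall=0.95, min_reduction=0.50):
    """Compare a hybrid workflow's included studies against the gold standard set."""
    gold, found = set(gold_included), set(hybrid_included)
    true_positives = gold & found
    recall = len(true_positives) / len(gold)        # share of gold-standard studies found
    precision = len(true_positives) / len(found)    # share of hybrid includes that are relevant
    reduction = 1 - n_screened_hybrid / n_screened_traditional
    return {
        "recall": recall,
        "precision": precision,
        "workload_reduction": reduction,
        "valid": recall >= min_recall and reduction >= min_reduction,
    }


# Hypothetical example: 40 gold-standard studies; the hybrid workflow finds 39 of them
# plus one study that is not in the gold standard.
gold_set = [f"s{i}" for i in range(1, 41)]
hybrid_set = [f"s{i}" for i in range(1, 40)] + ["x1"]
report = benchmark(gold_set, hybrid_set, n_screened_traditional=4000, n_screened_hybrid=1200)
print(report)
```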

This protocol provides a framework for researchers to validate their own automated processes, ensuring they are both efficient and trustworthy for informing regulatory decisions and ecological risk assessments [16] [17].

Technical Support Center: HTS & Computational Toxicology Platform

Frequently Asked Questions (FAQs)

Q1: What are the most common causes of high false-positive rates in my high-throughput screening (HTS) assay for endocrine disruption? A: High false-positive rates in endocrine HTS (e.g., ER/AR transactivation assays) are frequently due to: 1) Compound interference (auto-fluorescence, quenching), 2) Cytotoxicity at test concentrations masking specific activity, 3) Non-specific binding to assay components, and 4) Edge effects in microplates due to evaporation. Implement counter-screens (viability assays) and use orthogonal assay confirmation.

Q2: How do I handle and process large, heterogeneous data streams from multiple HTS and high-content screening (HCS) platforms for systematic review? A: Utilize a structured data pipeline: 1) Ingestion: Use standardized formats (e.g., AnIML, ISA-TAB). 2) Normalization: Assess plate quality with control-based metrics (Z'-factor, Z-factor) and apply robust statistical normalization (B-score). 3) Integration: Employ a centralized database with ontology-based tagging (e.g., ECOTOX, ChEBI). Automation tools like SWIFT-Review or ASReview can then be applied to the curated dataset for screening prioritization.

Q3: Why is my concentration-response curve fitting unstable when deriving AC50 values for ToxCast/Tox21 data?

A: Unstable fits often stem from: 1) Insufficient data points across the critical effect range, 2) High variability in replicate measurements, 3) Inappropriate model selection (e.g., using Hill model for non-monotonic data). Ensure at least 10 concentrations with triplicate reads, and use suite-fitting algorithms (like those in the R tcpl package) that test multiple models and flag ambiguous fits.
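To make the idea of fitting a Hill model concrete, the sketch below fits one by a coarse grid search over AC50 and slope, solving for the top asymptote in closed form. It is an illustration only and is not the tcpl algorithm: the parameter grid, the zero-baseline Hill form, and the noise-free synthetic data are all assumptions chosen to keep the example short and dependency-free.

```python
import math


def hill(conc, top, ac50, slope):
    """Hill model: response rises from 0 to `top`, half-maximal at `ac50`."""
    return top / (1 + (ac50 / conc) ** slope)


def fit_hill_grid(concs, responses):
    """Coarse least-squares grid search; returns (sse, top, ac50, slope)."""
    best = None
    log_lo, log_hi = math.log10(min(concs)), math.log10(max(concs))
    for i in range(101):
        ac50 = 10 ** (log_lo + (log_hi - log_lo) * i / 100)
        for slope in (0.5, 1.0, 1.5, 2.0, 3.0):
            # For fixed ac50 and slope, the best `top` is a linear least-squares solution.
            basis = [hill(c, 1.0, ac50, slope) for c in concs]
            top = sum(b * r for b, r in zip(basis, responses)) / sum(b * b for b in basis)
            sse = sum((top * b - r) ** 2 for b, r in zip(basis, responses))
            if best is None or sse < best[0]:
                best = (sse, top, ac50, slope)
    return best


# Ten concentrations (arbitrary units) with noise-free synthetic responses.
concs = [0.01, 0.03, 0.1, 0.3, 1, 3, 10, 30, 60, 100]
responses = [hill(c, 100.0, 1.0, 1.0) for c in concs]
sse, top, ac50, slope = fit_hill_grid(concs, responses)
print(round(top, 3), round(ac50, 3), slope)
```

With real, noisy replicates, a large residual error or an AC50 estimate pinned at the edge of the tested range would be a reasonable signal to flag the fit as ambiguous.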

Q4: What are the key validation steps when applying a machine learning model to predict in vivo toxicity from in vitro HTS data? A: Critical steps include: 1) External Validation: Testing on a wholly independent compound set not used in training. 2) Applicability Domain Assessment: Defining the chemical space where predictions are reliable. 3) Performance Metrics: Reporting AUC-ROC, precision-recall, and confusion matrices. 4) Mechanistic Plausibility: Ensuring predictions align with known adverse outcome pathways (AOPs).

Troubleshooting Guides

Issue: Low Assay Robustness (Z' < 0.5) in a Cell-Based Viability HTS.

- Check 1: Confirm cell viability and passage number at time of seeding. Use cells within passage 5-20.

- Check 2: Verify liquid handler precision for nanoliter dispensing. Perform dye-based dispense verification.

- Check 3: Monitor incubation conditions (CO2, temperature, humidity) for consistency.

- Check 4: Re-optimize positive/negative control concentrations. A shallow control response window lowers Z'.

Issue: Inconsistent Readout from a High-Content Imaging Cytotoxicity Assay.

- Step 1: Check for fluorescence crosstalk between channels. Include single-stain controls and adjust emission filters.

- Step 2: Validate focus stability across the plate. Use autofocus offset maps or whole-well focusing algorithms.

- Step 3: Standardize image analysis pipeline. Use supervised machine learning for segmentation (e.g., CellProfiler Analyst) to adapt to morphological changes induced by compounds.

Issue: Failure in Automated Data Extraction for Systematic Review Screening.

- Step 1: Audit the source PDFs. Poor OCR quality is the primary cause. Use pre-processors to enhance PDF image quality or seek native text versions.

- Step 2: Refine your natural language processing (NLP) query. Overly broad terms increase false positives; too specific increases false negatives. Iterate on a test set.

- Step 3: Implement active learning feedback. Tools like RobotAnalyst or Abstrackr allow user feedback on relevance, which retrains the classifier in real time to improve subsequent screening.

Table 1: Performance Metrics of Common HTS Assays in Tox21 Portfolio

| Assay Target (PubChem AID) | Avg. Z'-Factor | Signal-to-Noise Ratio | False Positive Rate (%) | False Negative Rate (%) |
|---|---|---|---|---|
| Nrf2 Response (743077) | 0.72 | 12.5 | 4.2 | 7.8 |
| p53 Activation (743079) | 0.65 | 8.2 | 6.1 | 9.5 |
| Mitochondrial Tox (743122) | 0.58 | 6.8 | 8.5 | 12.3 |

Table 2: Comparison of Automation Tools for Systematic Review Screening

| Tool Name (Version) | Recall (%) | Precision (%) | Workload Savings (%) | Supported File Formats |
|---|---|---|---|---|
| SWIFT-Review (v2.0) | 98.5 | 35.7 | ~70 | PDF, TXT, MEDLINE, RIS |
| ASReview (v1.0) | 99.1 | 30.2 | ~90 | CSV, RIS, TSV, Excel |
| RobotAnalyst (v1.0) | 96.8 | 42.1 | ~75 | PDF, PubMed IDs |
| DistillerSR (Enterprise) | 95.0* | 50.0* | ~60* | All major formats |

*Values based on published case studies; tool uses both NLP and manual rules.

Detailed Experimental Protocols

Protocol 1: HTS Assay for Cytotoxicity (ATP Content)

- Plate Seeding: Seed HEK293 or HepG2 cells in 1,536-well plates at 1,000 cells/well in 5 µL growth medium. Incubate (37°C, 5% CO2) for 24 h.

- Compound Addition: Using a pintool or acoustic dispenser, transfer 23 nL of test compound from a 10 mM DMSO stock. Include controls: DMSO only (negative), 100 µM Staurosporine (positive cytotoxic).

- Incubation: Incubate compound with cells for 48 hours.

- ATP Detection: Add 3 µL of CellTiter-Glo 2.0 reagent. Shake orbitally for 2 min, incubate at RT for 10 min to stabilize luminescent signal.

- Readout: Measure luminescence on a plate reader (integration time: 0.5-1 sec/well).

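- Analysis: Normalize raw luminescence: $\%\,\text{Viability} = \dfrac{\text{RLU}_{\text{sample}} - \text{RLU}_{\text{positive}}}{\text{RLU}_{\text{negative}} - \text{RLU}_{\text{positive}}} \times 100$. Calculate $Z' = 1 - \dfrac{3\,(\text{SD}_{\text{positive}} + \text{SD}_{\text{negative}})}{\left|\text{Mean}_{\text{positive}} - \text{Mean}_{\text{negative}}\right|}$.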
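The two formulas in the Analysis step translate directly into code. The sketch below uses made-up luminescence values for the control wells and assumes the sample standard deviation (n - 1 denominator) for the SD terms, which the protocol does not specify.

```python
from statistics import mean, stdev


def percent_viability(rlu_sample, rlu_positive_mean, rlu_negative_mean):
    """Scale raw luminescence so the positive control is 0% and the DMSO control is 100%."""
    return (rlu_sample - rlu_positive_mean) / (rlu_negative_mean - rlu_positive_mean) * 100


def z_prime(positive_wells, negative_wells):
    """Z' = 1 - 3*(SD_pos + SD_neg) / |mean_pos - mean_neg|."""
    spread = 3 * (stdev(positive_wells) + stdev(negative_wells))
    window = abs(mean(positive_wells) - mean(negative_wells))
    return 1 - spread / window


# Hypothetical control wells (relative luminescence units).
negative = [98000, 100000, 102000]   # DMSO only
positive = [1800, 2000, 2200]        # cytotoxic positive control

viability = percent_viability(51000, mean(positive), mean(negative))
zp = z_prime(positive, negative)
print(round(viability, 1), round(zp, 3))
```

A plate with Z' below 0.5 would normally be flagged for the robustness checks described in the troubleshooting guide.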

Protocol 2: Building an Active Learning Model for Abstract Screening

- Data Preparation: Compile a corpus of title-abstract records from a PubMed/Web of Science search. Manually label a seed set (e.g., 100 records) as "relevant" or "irrelevant."

- Feature Extraction: Convert text to TF-IDF (Term Frequency-Inverse Document Frequency) vectors using n-grams (1-2 words).

- Model Initialization: Use a naive Bayes or SVM classifier within an active learning framework (e.g., ASReview software).

- Iterative Screening: The model ranks remaining abstracts by relevance probability. Reviewer screens the top 10-20 records, providing new labels.

- Model Update: The classifier retrains after each batch of labeled records.

- Stopping Criterion: Continue until a pre-defined threshold is met (e.g., 100 consecutive irrelevant abstracts).
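The loop in Protocol 2 can be illustrated with a dependency-free toy implementation. This sketch is not ASReview's implementation: it uses a multinomial naive Bayes classifier over raw unigram and bigram counts (TF-IDF weighting is omitted for brevity), a hypothetical eight-record corpus, a batch size of 2, and a stopping rule of 3 consecutive irrelevant records. The `oracle` function stands in for the human reviewer.

```python
import math
from collections import Counter


def ngrams(text):
    """Unigrams and bigrams from lower-cased, whitespace-split text."""
    words = text.lower().split()
    return words + [" ".join(pair) for pair in zip(words, words[1:])]


def train_nb(docs, labels):
    """Multinomial naive Bayes with Laplace smoothing over n-gram counts."""
    counts = {0: Counter(), 1: Counter()}
    for doc, label in zip(docs, labels):
        counts[label].update(ngrams(doc))
    vocab = set(counts[0]) | set(counts[1])
    priors = {c: (labels.count(c) + 1) / (len(labels) + 2) for c in (0, 1)}
    return counts, vocab, priors


def relevance_score(model, doc):
    """Log-odds that a document is relevant (higher means screen sooner)."""
    counts, vocab, priors = model
    total = {c: sum(counts[c].values()) + len(vocab) for c in (0, 1)}
    score = math.log(priors[1]) - math.log(priors[0])
    for term in ngrams(doc):
        if term in vocab:
            score += math.log((counts[1][term] + 1) / total[1])
            score -= math.log((counts[0][term] + 1) / total[0])
    return score


def active_screen(corpus, seed_labels, oracle, batch_size=2, stop_after=3):
    """Rank, screen a batch, retrain; stop after `stop_after` consecutive irrelevant records."""
    labels = dict(seed_labels)
    consecutive_irrelevant = 0
    while len(labels) < len(corpus) and consecutive_irrelevant < stop_after:
        ids = list(labels)
        model = train_nb([corpus[i] for i in ids], [labels[i] for i in ids])
        unscreened = [i for i in corpus if i not in labels]
        ranked = sorted(unscreened, key=lambda i: relevance_score(model, corpus[i]), reverse=True)
        for rec_id in ranked[:batch_size]:
            labels[rec_id] = oracle(rec_id)  # the human decision
            consecutive_irrelevant = 0 if labels[rec_id] else consecutive_irrelevant + 1
            if consecutive_irrelevant >= stop_after:
                break
    return labels


# Hypothetical corpus; `truth` plays the role of the reviewer's judgment.
corpus = {
    "a": "microplastic exposure increased mortality in daphnia magna",
    "b": "annual report on regional fertilizer sales",
    "c": "microplastic exposure reduced growth in zebrafish embryo",
    "d": "daphnia magna mortality after microplastic ingestion",
    "e": "economic analysis of fertilizer sales and crop prices",
    "f": "regional report on tourism and hotel prices",
    "g": "survey of annual museum attendance",
    "h": "policy analysis of regional transport funding",
}
truth = {"a": 1, "b": 0, "c": 1, "d": 1, "e": 0, "f": 0, "g": 0, "h": 0}
labels = active_screen(corpus, {"a": 1, "b": 0}, oracle=lambda rec_id: truth[rec_id])
print(sorted(i for i, label in labels.items() if label == 1), len(labels), "of", len(corpus))
```

In this toy run the relevant records surface in the first batch and screening stops before the whole corpus is read. A consecutive-irrelevant rule is a heuristic, so in a real review its threshold should be justified and reported.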

The Scientist's Toolkit: Research Reagent Solutions

| Item (Supplier Example) | Function in HTS/Toxicology |
|---|---|
| CellTiter-Glo 2.0 (Promega) | Luminescent ATP quantitation for viability/cytotoxicity. |
| Beta-lactamase Reporter Gene Cell Lines (Thermo Fisher) | Engineered cells for nuclear receptor screening (Tox21). |
| HuMo-DC (Hµrel) | Human primary cell co-culture for immunotoxicity screening. |
| UPLC-MS/MS System (Waters, Agilent) | Quantitative analytical chemistry for exposure assessment. |
| 1,536-Well Microplates (Corning) | Ultra-high-throughput assay format. |
| Echo 650 Acoustic Dispenser (Labcyte) | Contactless, precise transfer of compounds/DMSO. |
| CellProfiler (Broad Institute) | Open-source HCS image analysis software. |
| tcpl R Package (US EPA) | Curve-fitting and data analysis pipeline for ToxCast data. |

From Setup to Synthesis: A Practical Workflow for Automated Screening

Within the demanding landscape of ecotoxicology research, the synthesis of evidence through systematic reviews is paramount for chemical safety assessment and regulatory decision-making. However, the traditional process is labor-intensive, often involving the manual screening of thousands of studies [18]. This article establishes a foundational technical support center, framed within a thesis on automation tools, to empower researchers in developing robust protocols and formulating precise eligibility criteria—the critical first steps toward implementing efficient, automated screening workflows.

Technical Support Center: Troubleshooting Guides & FAQs

This section addresses common challenges researchers encounter when initiating a systematic review with an eye toward automation.

Q1: How do I formulate a precise research question and eligibility criteria suitable for automation?

- Answer: Use a structured framework. The PICOST (Population, Intervention, Comparators, Outcome, Study design, Time period) framework is recommended for formulating the review question [19]. In ecotoxicology, this often adapts to PECO (Population, Exposure, Comparator, Outcome) [18]. Eligibility criteria must directly extend from these components. For automation, clarity is non-negotiable. Ambiguous criteria will confuse both human screeners and machine learning algorithms. Precisely define each element (e.g., "fathead minnow (Pimephales promelas) larvae" not just "fish"; "measured concentration of Bisphenol-A" not just "BPA exposure").

Q2: What are the key elements of a review protocol, and why is it critical for automated screening?

- Answer: A protocol is an a priori plan that minimizes bias and ensures reproducibility. Key elements include: administrative details; background and rationale; clearly defined PICOST/PECO question; explicit eligibility criteria; detailed search strategy for all databases; descriptions of the study selection process, data extraction, and risk-of-bias assessment methods; and a data synthesis plan [20]. For automation, the protocol is the source code. It defines the "rules" (eligibility criteria) that automated tools will help enforce and the workflow they will accelerate. It must be registered (e.g., PROSPERO) before screening begins [21].

Q3: Our initial search yields too many results. How can we refine it without compromising comprehensiveness?

- Answer: This is a common issue. First, ensure your eligibility criteria are sufficiently specific. Next, collaborate with a research librarian to troubleshoot the search strategy [21]. Use Boolean operators (AND, OR, NOT) effectively, but use NOT with caution to avoid accidentally excluding relevant studies [21]. Leverage "benchmark articles"—key papers you know should be included—to test your search string's sensitivity [21]. Finally, understand that high sensitivity is expected; a broad search retrieving many irrelevant articles is a prime use case for automation tools that can prioritize or exclude records.
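Testing a search string against benchmark articles amounts to checking which known-relevant identifiers appear in the retrieved set. A minimal sketch, assuming records are compared by DOI and using made-up DOIs:

```python
def benchmark_sensitivity(benchmark_ids, retrieved_ids):
    """Return the share of benchmark articles retrieved and the list of those missed."""
    benchmark = {i.strip().lower() for i in benchmark_ids}
    retrieved = {i.strip().lower() for i in retrieved_ids}
    missed = sorted(benchmark - retrieved)
    sensitivity = (len(benchmark) - len(missed)) / len(benchmark)
    return sensitivity, missed


# Hypothetical DOIs of five benchmark papers and of the records a draft search returned.
benchmark_dois = ["10.1000/b1", "10.1000/b2", "10.1000/b3", "10.1000/b4", "10.1000/b5"]
search_results = ["10.1000/B1", "10.1000/b2", "10.1000/b3", "10.1000/b5", "10.1000/other"]

sensitivity, missed = benchmark_sensitivity(benchmark_dois, search_results)
print(sensitivity, missed)
```

Any benchmark article in the `missed` list points to a term or synonym that the search string should probably cover.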

Q4: What tools are available to assist with the screening phase, and how do they work?

- Answer: Several tools use machine learning to expedite screening. They typically require an initial set of human decisions ("training") and then predict the relevance of remaining records.

- Rayyan: A free, widely-used web tool that facilitates collaborative screening and uses a relevancy classifier to prioritize citations [18] [22].

- ASReview: An open-source tool that actively learns from your inclusion/exclusion decisions to present the most relevant records next [22].

- SWIFT-Review: Provides interactive literature prioritization and categorization using text mining [22].

- PICO Portal: A platform that assists with deduplication, screening, and highlighting PICO concepts in text [22].

Q5: We found an existing systematic review on a similar topic. Should we proceed?

- Answer: Conducting a duplicative review should be avoided [21]. First, thoroughly search for published reviews and protocols in registries like PROSPERO. If you find an overlapping review, assess its currency, scope, and quality. Your work is justified only if you are addressing a new question, updating outdated evidence, or using a significantly different methodology [21]. A librarian can help you navigate this decision [23].

Q6: How long does a systematic review typically take, and how does automation change this?

- Answer: A full systematic review typically takes 12 to 18 months [24]. The most time-consuming phase is screening titles and abstracts [18]. Automation tools do not perform the review for you but can significantly reduce this burden. Evidence suggests machine learning classifiers can reduce the number of abstracts needing manual screening by 30-70% [18], potentially saving weeks or months of work.

Performance Data & Experimental Protocols

Quantitative Comparison of Screening Approaches

The following table summarizes the performance of different screening methodologies, highlighting the efficacy of rule-based automation.

Table 1: Performance Comparison of Systematic Review Screening Methods

| Screening Method | Core Principle | Typical Work Saved | Key Advantage | Primary Limitation |
|---|---|---|---|---|
| Manual Screening | Human review of all titles/abstracts | 0% (Baseline) | High judgment capability; handles ambiguity. | Extremely time-consuming and labor-intensive [18]. |
| ML-Powered Prioritization (e.g., Rayyan) | Ranks studies by relevance using word similarity [18]. | Not fixed; accelerates finding includes. | Reduces time to first inclusion; good for early stopping. | Does not fully automate exclusion; final recall uncertain. |
| Rule-Based Automated Exclusion (PECO Detection) [18] | Excludes studies lacking predefined key characteristics. | Up to 93.7% (for EO rule) | High, quantifiable work reduction; transparent logic. | Dependent on quality of abstracts and extraction rules. |
| High-Throughput Ecotoxicology Paradigms [25] | Applies lab automation (e.g., fluidics, imaging) to in vitro/vivo bioassays. | N/A (Primary research) | Generates standardized, machine-readable toxicity data. | Not a screening tool for literature; generates new data for future reviews. |

Detailed Experimental Protocol: Automated Screening via PECO Extraction

This protocol, based on published research [18], details steps to implement a rule-based automated screening module.

Objective: To automatically exclude studies from a systematic review search results that have a high probability of being irrelevant, based on the absence of key PECO (Population, Exposure, Comparator, Outcome) elements in their abstracts.

Materials & Software:

- Reference Set: Bibliographic records (title and abstract) from systematic review search, exported in XML or text format.

- Text Engineering Platform: General Architecture for Text Engineering (GATE) or similar natural language processing software [18].

- Custom Dictionaries & Rules: Domain-specific dictionaries for Exposure and Outcome terms relevant to the review (e.g., chemical names, ecological endpoints). Semantic rules to identify phrases describing Population and Comparator/Confounders.

Procedure:

- Training Set Creation: From the total references, identify a subset of included and excluded studies from a pilot manual screen (e.g., 20-50 studies) [18].

- Dictionary Development: Using the training set, manually curate comprehensive lists of keywords and phrases for Exposure and Outcome. For example, for a review on microplastics, exposure terms would include "microplastic," "nanoplastic," "polyethylene," etc.

- Rule Development in GATE: Create "JAPE" (Java Annotation Patterns Engine) transducers in GATE. These are grammatical rules that identify patterns indicative of PECO elements.

- Example Rule for Exposure: Match a phrase where a term from the Exposure dictionary is preceded by verbs like "exposed to," "treated with," or measurements like "concentration of."

- Algorithm Validation: Run the extraction algorithm on the training set. Calculate precision (the percentage of extracted phrases that are correct) and recall (the percentage of all relevant phrases that were found). Iteratively refine dictionaries and rules until performance is acceptable (e.g., F-score >85%) [18].

- Application to Full Dataset: Process all retrieved abstracts through the validated GATE pipeline. The output is a structured annotation marking text spans for each PECO element.

- Apply Screening Threshold Rule: Implement a logical rule to tag studies for inclusion or exclusion. For example, the highly effective "EO rule": IF (Exposure term is detected) AND (Outcome term is detected) THEN tag for manual screening; ELSE tag for automated exclusion [18].

- Human Verification: Manually screen all studies tagged for inclusion by the algorithm, plus a random sample (e.g., 10%) of excluded studies to validate rule performance and calculate final recall/work saved.
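The dictionary matching, the EO rule, and the audit sample described above can be sketched in plain Python. This is a minimal illustration using regular expressions, not the GATE/JAPE pipeline from [18]; the term lists, the cue phrases, and the function names `eo_rule` and `audit_sample` are assumptions made for the example.

```python
import random
import re

# Hypothetical mini-dictionaries; a real review would curate far larger lists.
EXPOSURE_TERMS = ["microplastic", "nanoplastic", "polyethylene"]
OUTCOME_TERMS = ["mortality", "growth", "reproduction"]
EXPOSURE_CUES = ["exposed to", "treated with", "concentration of"]


def _term_pattern(terms):
    # Whole-word match with an optional plural "s".
    return r"\b(?:" + "|".join(re.escape(t) for t in terms) + r")s?\b"


# A cue phrase followed, within at most three intervening words, by an exposure term.
EXPOSURE_RE = re.compile(
    r"\b(?:" + "|".join(re.escape(c) for c in EXPOSURE_CUES) + r")\s+(?:\w+\s+){0,3}?"
    + _term_pattern(EXPOSURE_TERMS),
    re.IGNORECASE,
)
OUTCOME_RE = re.compile(_term_pattern(OUTCOME_TERMS), re.IGNORECASE)


def eo_rule(abstract):
    """Tag an abstract: both Exposure and Outcome detected -> manual screening."""
    has_exposure = EXPOSURE_RE.search(abstract) is not None
    has_outcome = OUTCOME_RE.search(abstract) is not None
    return "manual_screening" if (has_exposure and has_outcome) else "auto_exclude"


def audit_sample(excluded_ids, fraction=0.10, seed=0):
    """Draw a reproducible random sample of auto-excluded records for human checking."""
    ids = list(excluded_ids)
    if not ids:
        return []
    k = max(1, round(len(ids) * fraction))
    return random.Random(seed).sample(ids, k)
```

A fixed seed keeps the audit sample reproducible, which helps when the verification step has to be reported in the review's methods section.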

Detailed Protocol: High-Throughput Phenotypic Profiling (Cell Painting) for Ecotoxicology

This protocol aligns with emerging automation in primary research, which generates data for future reviews [25] [26].

Objective: To perform high-content, automated screening of chemical toxicity using morphological profiling in non-human vertebrate cell lines.

Materials & Reagents:

- Cell Line: Fish or other environmentally relevant vertebrate cell line (e.g., RTgill-W1 from rainbow trout).

- Staining Reagents: Cell Painting cocktail: dyes for nuclei (Hoechst), endoplasmic reticulum (concanavalin A), nucleoli and cytoplasmic RNA (SYTO 14), F-actin (phalloidin), Golgi apparatus and plasma membrane (wheat germ agglutinin), and mitochondria (MitoTracker) [26].

- Automated Equipment: Robotic liquid handler, automated cell culture incubator, high-content imaging microscope (e.g., ImageXpress).

- Software: Image analysis software (e.g., CellProfiler) for feature extraction.

Procedure:

- Cell Seeding & Treatment: Using a robotic liquid handler, seed cells into 384-well microplates. After adherence, treat cells with a concentration gradient of the test chemical(s) and appropriate controls (vehicle, positive cytotoxicant).

- Staining & Fixation: At exposure endpoint (e.g., 48h), automate the steps of fixation, permeabilization, and staining with the Cell Painting dye cocktail.

- Automated Imaging: Plates are automatically loaded into a high-content imager, which acquires high-resolution fluorescent images from multiple sites per well across all channels.

- Morphological Feature Extraction: Images are processed by CellProfiler. The software identifies individual cells and measures ~1,500 morphological features per cell (size, shape, texture, intensity) across the organelle stains.

- Data Analysis & Bioactivity Profile: The multidimensional data is normalized and analyzed. Treatments causing significant morphological changes are identified, and clustering analysis groups chemicals with similar phenotypic "fingerprints," inferring potential mechanisms of action.
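The normalization and profile-comparison step can be illustrated with a small standard-library sketch. It assumes per-treatment feature vectors have already been aggregated from the CellProfiler output, uses a robust z-score against vehicle-control wells as one common normalization choice, and uses pairwise cosine similarity as a simple stand-in for the clustering analysis; the function names and data layout are hypothetical.

```python
import math
import statistics


def robust_z(values, controls):
    """Scale values by the median and MAD of the vehicle-control wells."""
    med = statistics.median(controls)
    mad = statistics.median(abs(c - med) for c in controls)
    scale = 1.4826 * mad or 1.0  # fall back to 1.0 if the controls are constant
    return [(v - med) / scale for v in values]


def normalize_profiles(profiles, control_wells):
    """profiles: {treatment: [feature values]}; control_wells: list of feature lists."""
    n_features = len(control_wells[0])
    normalized = {name: [] for name in profiles}
    for j in range(n_features):
        ctrl = [well[j] for well in control_wells]
        for name, feats in profiles.items():
            normalized[name].append(robust_z([feats[j]], ctrl)[0])
    return normalized


def cosine(u, v):
    """Cosine similarity between two normalized profiles (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0
```

Treatments whose normalized profiles have a cosine similarity close to 1 share a phenotypic "fingerprint" in this simplified view; a production analysis would typically add feature selection and a proper clustering method.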

Diagram: Workflow for Automated Systematic Review Screening

Diagram: High-Throughput Ecotoxicology (HITEC) Paradigm

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Automated Screening & High-Throughput Ecotoxicology

| Item Category | Specific Tool/Reagent | Function in Protocol Development & Automation |
|---|---|---|
| Protocol & Project Management | PRISMA Checklist [19] [24] | Provides evidence-based minimum reporting items for protocols and reviews, ensuring completeness and transparency. |
| Eligibility Criteria Framework | PICOST / PECO Template [18] [19] | Provides a structured framework to define the research question and operationalize eligibility criteria for both humans and algorithms. |
| Text Mining & NLP Engine | General Architecture for Text Engineering (GATE) [18] | An open-source platform for building custom text processing pipelines to extract PECO and other key concepts from abstracts. |
| Machine Learning Screening | ASReview / Rayyan [22] | ASReview (open-source) and Rayyan (free-to-use) apply machine-learning prioritization to screening queues (active learning in ASReview), reducing manual workload. |
| High-Throughput Bioassay | Cell Painting Assay Cocktail [26] | A multiplexed fluorescent dye set that labels multiple organelles, enabling high-content morphological profiling for chemical bioactivity screening. |
| Automated Imaging & Analysis | High-Content Imager & CellProfiler [25] [26] | Hardware and software for automated, quantitative capture and analysis of cellular phenotype images, generating rich datasets for toxicity prediction. |
| Reference Management | EndNote, Rayyan [18] [22] | Tools for deduplicating search results, managing citations, and facilitating collaborative screening among review team members. |

Technical Support Center: Troubleshooting for Automated Screening Tools

This support center addresses common issues encountered when implementing AI-powered tools for automating the title/abstract screening phase of systematic reviews in ecotoxicology. Effective tool selection hinges on matching software capabilities to your project's specific scale, team structure, and review complexity.

Frequently Asked Questions (FAQs)

Q1: The AI model in our screening software (e.g., ASReview, Rayyan AI) is performing poorly, consistently prioritizing irrelevant studies. What steps should we take? A: Poor AI performance often stems from insufficient or biased initial training data. Follow this protocol:

- Pause live screening. Revert to the software's "exploration" or "training" mode.

- Re-evaluate your seed set. Manually screen a random sample of 50-100 records. Label them as "relevant" or "irrelevant" with high confidence.

- Ensure balance. If your topic is niche, aim for at least 15-20 relevant studies in the seed set. The AI needs clear positive examples.

- Re-train the model. Use this curated, balanced seed set to initiate or re-train the AI model. The software should now have a better foundational understanding of your inclusion criteria.

- Resume screening. Monitor the "relevancy ranking" as you screen; relevant studies should cluster toward the top.
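Before re-training, the composition of the seed set can be checked with a few lines of code. The sketch below is tool-independent and assumes the labels have been exported as 1 (relevant) or 0 (irrelevant); the function name and the default threshold of 15, taken from the guidance above, are illustrative.

```python
def seed_set_report(labels, min_relevant=15):
    """Summarize a seed set given labels of 1 (relevant) or 0 (irrelevant)."""
    labels = list(labels)
    n_relevant = sum(labels)
    return {
        "n_records": len(labels),
        "n_relevant": n_relevant,
        "prevalence": n_relevant / len(labels) if labels else 0.0,
        "enough_positives": n_relevant >= min_relevant,
    }
```

If `enough_positives` is false, adding known relevant studies (for example, from a prior review on the topic) is usually a faster remedy than screening more random records.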

Q2: Our multi-reviewer team is experiencing conflicts and inconsistencies in labeled records when using collaborative screening platforms. How can we resolve this? A: This is a workflow and calibration issue, not solely a software bug.

- Implement a pre-screening calibration exercise. Before the main screening, all reviewers independently screen the same pilot set of 50-100 articles using the shared platform.

- Calculate Inter-Rater Reliability (IRR). Use the platform's reporting tool or export data to calculate Cohen's Kappa or Percent Agreement.

- Hold a conflict resolution meeting. Discuss disagreements on the pilot set to clarify and refine the inclusion/exclusion criteria.

- Configure software settings. Enable "conflict resolution" workflows where disputed records are flagged for a third reviewer or lead investigator to make a final judgment. Ensure all users are assigned to the correct project phase.
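When the platform does not report inter-rater reliability directly, both statistics can be computed from exported decisions. The following is a minimal sketch for two reviewers, assuming each export is a list of labels in the same record order; the function names are illustrative.

```python
from collections import Counter


def percent_agreement(r1, r2):
    """Share of records on which both reviewers made the same decision."""
    return sum(a == b for a, b in zip(r1, r2)) / len(r1)


def cohens_kappa(r1, r2):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    if len(r1) != len(r2) or not r1:
        raise ValueError("need two equally long, non-empty label lists")
    n = len(r1)
    p_o = percent_agreement(r1, r2)
    c1, c2 = Counter(r1), Counter(r2)
    # Chance agreement from each reviewer's marginal label frequencies.
    p_e = sum(c1[k] * c2[k] for k in c1.keys() | c2.keys()) / (n * n)
    if p_e == 1.0:
        return 1.0  # both reviewers used one identical label throughout
    return (p_o - p_e) / (1 - p_e)
```

Kappa is generally more informative than percent agreement in screening, because most records are excluded and two reviewers can agree often by chance alone.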

Q3: We need to customize our screening workflow to include a specific data extraction field (e.g., "LOE: Level of Evidence") immediately after inclusion. How can we achieve this without breaking the workflow? A: Most advanced tools (e.g., DistillerSR, Sysrev) allow for custom form creation.

- Access the study/form designer. Navigate to the project administration settings.

- Add a custom field. Create a new field with the label "LOE." Define the answer type (e.g., dropdown: "I, II, III, IV" or numeric).

- Apply conditional logic. Set the field to become mandatory only after the "Title/Abstract Screening" decision is set to "Include." This ensures screeners are not burdened with it prematurely.

- Test the workflow. Use a test project or a few dummy records to verify that the field appears and behaves as intended at the correct stage.

Comparative Performance Data of Common Screening Tools

Table 1: Feature Comparison of Selected Systematic Review Automation Tools Relevant to Ecotoxicology

| Tool Name | Core AI/ML Capability | Collaboration Features | Customization Level | Ideal Project Scale |
|---|---|---|---|---|
| ASReview | Active Learning (Prioritization) | Limited (Basic sharing) | Low (Open-source; can modify code) | Small to Medium, single-reviewer focus |
| Rayyan | AI Suggestions & Deduplication | Strong (Multi-reviewer, blinding, conflict resolution) | Medium (Custom tags, filters) | Medium to Large, collaborative teams |
| DistillerSR | AI Rank & Relevance Scoring | Enterprise-grade (Complex roles, audit trails) | High (Custom forms, workflows, reporting) | Large, regulatory-compliant reviews |
| Sysrev | AI Classifier & Prioritization | Strong (Dashboards, task assignment) | High (Custom data extraction forms) | Medium to Large, interdisciplinary teams |

Experimental Protocol: Benchmarking AI Tool Performance

Objective: To empirically evaluate the workload savings offered by an AI-powered prioritization tool compared to traditional random screening for an ecotoxicology systematic review.

Methodology:

- Dataset Preparation: A validated benchmark dataset of approximately 5,000 citation abstracts from an existing ecotoxicology review is imported into the test software (e.g., ASReview). The "ground truth" inclusion/exclusion labels are hidden from the algorithm.

- Seed Set Creation: A random sample of 25 records (containing ~5 relevant studies) is selected to simulate the initial manual screening effort and used to train the AI model.

- Simulated Screening: The experiment runs in simulation mode. The AI model prioritizes the remaining records. The software records the cumulative number of relevant studies found (recall) versus the cumulative number of records screened.

- Control Arm: A separate simulation is run where records are presented in a random order.

- Outcome Measurement: The primary metric is Work Saved over Sampling at 95% recall (WSS@95): the share of records a reviewer does not have to screen to reach 95% recall, minus the 5% that random-order screening would save on average at the same recall level.

Example Results: In a simulation, the AI-prioritized order may achieve 95% recall after screening only 30% of the total dataset, whereas random order is expected to require screening about 95% of it. Therefore, WSS@95 = 95% - 30% = 65% workload reduction.
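The WSS@95 calculation can be reproduced from any ranked list of ground-truth labels. The sketch below assumes the simulation output is a list of 1 (relevant) and 0 (irrelevant) in the order the tool presented the records; the function names are illustrative and not part of any tool's API.

```python
import math


def records_to_reach_recall(ranked_labels, target=0.95):
    """Number of records screened, in ranked order, until the target recall is reached."""
    total_relevant = sum(ranked_labels)
    if total_relevant == 0:
        raise ValueError("no relevant records in the benchmark")
    # Small epsilon guards against floating-point noise before rounding up.
    needed = math.ceil(target * total_relevant - 1e-9)
    found = 0
    for screened, label in enumerate(ranked_labels, start=1):
        found += label
        if found >= needed:
            return screened
    return len(ranked_labels)


def wss(ranked_labels, target=0.95):
    """Work Saved over Sampling: fraction left unscreened minus (1 - target recall)."""
    n = len(ranked_labels)
    screened = records_to_reach_recall(ranked_labels, target)
    return (n - screened) / n - (1 - target)
```

For a ranking that reaches 95% recall after 30% of the records, `wss` returns 0.70 - 0.05 = 0.65, matching the worked example above.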

Diagram: AI-Powered Screening Simulation Protocol

The Scientist's Toolkit: Research Reagent Solutions for Automated Review

Table 2: Essential Digital "Reagents" for an Automated Screening Experiment

| Item | Function in the Experiment | Example/Note |
|---|---|---|
| Benchmark Dataset | A pre-labeled collection of citations (relevant/irrelevant) used to validate and compare AI tool performance. | e.g., A publicly available systematic review dataset from the field of environmental toxicology. |
| Active Learning Algorithm | The core AI "engine" that queries the next most informative record to label, optimizing the discovery of relevant studies. | e.g., Support Vector Machines (SVM), Naïve Bayes, or neural networks embedded in tools like ASReview. |
| Deduplication Module | Identifies and merges duplicate citations from multiple databases (e.g., PubMed, Scopus, Web of Science) to prevent bias. | A critical pre-processing step in Rayyan, DistillerSR, and others. |
| Inter-Rater Reliability (IRR) Calculator | A statistical module (often built into collaboration tools) that quantifies screening consistency between reviewers (e.g., Cohen's Kappa). | Essential for ensuring protocol adherence in team-based screening. |
| PRISMA Flow Diagram Generator | A reporting tool that automatically populates the PRISMA flowchart based on screening decisions logged in the platform. | Saves significant time during the manuscript writing phase (feature in DistillerSR, Sysrev). |

Troubleshooting Guides & FAQs

Q1: After importing my references from EndNote, many records appear to be missing. What could be the cause? A: This is commonly due to duplicate records being automatically removed by the platform. Both Covidence and DistillerSR have strict deduplication protocols upon import. First, check the import report summary. If the issue persists, ensure your EndNote library exports all relevant fields (including abstracts) in a compatible format like RIS or PubMed XML. A preliminary deduplication in a reference manager before import can prevent unexpected record loss.
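To see in advance how many records a platform is likely to drop, a rough deduplication pass can be run on the exported records. The sketch below is not the algorithm used by Covidence, DistillerSR, or EndNote; it assumes each record is a dictionary with hypothetical `doi`, `title`, and `year` keys and matches on DOI first, then on a normalized title plus year.

```python
import re
import unicodedata


def normalize_title(title):
    """Lowercase, strip accents and punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode()
    return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()


def dedup_key(record):
    """Prefer the DOI as key; fall back to normalized title plus year."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return ("doi", doi)
    return ("title", normalize_title(record.get("title", "")), record.get("year"))


def deduplicate(records):
    """Return (unique, dropped), keeping the first occurrence of each key."""
    seen, unique, dropped = set(), [], []
    for rec in records:
        key = dedup_key(rec)
        (dropped if key in seen else unique).append(rec)
        seen.add(key)
    return unique, dropped
```

Comparing `len(dropped)` with the platform's import report indicates whether the "missing" records are plausible duplicates. One limitation of this simple keying is that a record with a DOI and a copy of the same record without one will not be matched.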

Q2: During title/abstract screening, the "Maybe" or "Conflict" pile is growing too large, slowing down progress. How can we refine our criteria? A: A large uncertain pile often indicates screening criteria that are too vague. Pause screening and conduct a "calibration exercise." Have all screeners independently review the same 50-100 records from the "Maybe" pile, then meet to discuss discrepancies. Use this discussion to clarify and explicitly rewrite inclusion/exclusion rules, adding specific examples. Update the platform's screening form with these new decision trees before proceeding.

Q3: We are experiencing significant lag or timeout errors when trying to screen references in Rayyan. What steps can we take? A: Rayyan's performance can degrade with very large review projects (>10k references) or when using many complex keywords/filters simultaneously. First, try clearing your browser cache or switching to a different browser (Chrome/Firefox are recommended). If the issue persists, break your project into smaller, manageable phases (e.g., screen by year of publication). For persistent issues with large datasets, consider platforms like Covidence or DistillerSR, which are engineered for higher-volume commercial research.