Accelerating Ecotoxicology Research: A Comprehensive Guide to FAIR Data Principles for Scientists and Drug Developers

Abstract

This article provides a comprehensive overview of FAIR (Findable, Accessible, Interoperable, Reusable) data principles tailored for ecotoxicology researchers and drug development professionals. It explores foundational concepts, methodological applications, troubleshooting strategies, and validation techniques to enhance data integrity, reproducibility, and collaboration [3] [6] [8]. By integrating FAIR principles, ecotoxicology can advance scientific discovery, support regulatory compliance, and foster innovation in environmental health research [2] [10].

Demystifying FAIR: Foundational Principles and Their Critical Role in Ecotoxicology

The Genesis and Core Tenets of FAIR Data Principles

Ecotoxicology research, which investigates the effects of toxic chemicals on biological organisms and ecosystems, generates complex, multi-scale data. This spans from molecular pathways and single-species bioassays to complex field studies and population modeling. The increasing volume, velocity, and variety of this data present a significant stewardship challenge [1]. Historically, valuable datasets have been siloed, poorly described, and formatted in ad-hoc ways, rendering them difficult to find, interpret, or integrate for new analyses or meta-studies. This undermines scientific reproducibility, hampers the reuse of costly experimental data, and ultimately slows progress in environmental risk assessment and regulatory science.

The FAIR Guiding Principles (Findable, Accessible, Interoperable, Reusable), formally published in 2016, were conceived to address this exact crisis in data management across the sciences [1] [2]. Their genesis lies in a 2014 workshop in Leiden, Netherlands, where stakeholders from academia, industry, and publishing convened to develop guidelines for enhancing the reusability of digital assets [2]. A cornerstone of the FAIR principles is their emphasis on machine-actionability—the capacity of computational systems to automatically find, access, interoperate, and reuse data with minimal human intervention [1] [3]. This is not merely about human readability but about preparing data for the computational age, enabling advanced analytics, artificial intelligence, and large-scale data integration essential for tackling modern ecotoxicological questions [4].

This whitepaper details the genesis and core tenets of the FAIR principles, framing them within the specific needs and workflows of ecotoxicology research. It provides a technical guide for implementing these principles to transform data from a scattered byproduct into a foundational, enduring, and reusable asset for the community.

The Genesis and Philosophical Foundation

The FAIR principles emerged from a clear recognition of a growing problem: data was becoming both the lifeblood of scientific discovery and a potential liability due to poor management. The seminal 2016 paper by Wilkinson et al. in Scientific Data codified a set of community-developed guidelines that shifted the focus from data sharing as an endpoint to data reusability as the ultimate objective [4] [2].

A critical philosophical underpinning of FAIR is the distinction between FAIR data and Open Data. Data can be FAIR without being openly accessible to the public [4] [5]. For ecotoxicology, which often deals with sensitive location data, proprietary chemical structures, or confidential regulatory studies, this distinction is vital. FAIR principles ensure that even restricted data, when accessed by authorized researchers or systems, is structured and described to be optimally usable. Conversely, data can be openly available (e.g., dumped in a public repository without rich metadata) but not FAIR, severely limiting its utility [4]. The principles also complement other frameworks like the CARE principles (Collective Benefit, Authority to Control, Responsibility, Ethics) for Indigenous data governance, highlighting that technical excellence (FAIR) must be paired with ethical stewardship [4].

Table 1: Foundational Concepts of FAIR Data Principles

| Concept | Definition | Relevance to Ecotoxicology |
|---|---|---|
| Machine-Actionability | The capacity of computational systems to find, access, interoperate, and reuse data autonomously [1] [3]. | Enables high-throughput toxicity prediction, cross-study meta-analysis, and automated workflow integration. |
| Metadata | Data that provides structured information about other data (the who, what, when, where, why, and how) [3]. | Essential for describing experimental conditions, test organisms, chemical dosing, and environmental parameters critical for interpretation. |
| Persistent Identifier (PID) | A globally unique and permanent reference to a digital object (e.g., DOI, Handle) [1] [6]. | Uniquely and permanently identifies a dataset, bioassay protocol, or a chemical sample, preventing ambiguity and link rot. |
| Interoperability | The ability of data or tools from disparate sources to work together with minimal effort [3]. | Allows integration of chemical fate data, genomic response data, and field ecological monitoring data for a systems-level view. |
| Provenance | Information about the origin, history, and processing steps of data [3]. | Tracks data lineage from raw instrument output through quality control and analysis, which is crucial for regulatory acceptance and reproducibility. |

The Core Tenets: A Detailed Breakdown for Ecotoxicology

The four pillars of FAIR provide a structured framework for enhancing data utility.

3.1 Findable

The first step to reuse is discovery. For data to be findable, it must be equipped with machine-readable metadata and a globally unique, persistent identifier (PID) such as a Digital Object Identifier (DOI) [1] [7]. In ecotoxicology, this means datasets should be registered in searchable repositories (e.g., ESS-DIVE, BCO-DMO, or domain-specific resources like the US EPA's CompTox Chemicals Dashboard) rather than languishing on lab servers [8]. Rich metadata should include standardized keywords (e.g., from the ECOTOXicology Knowledgebase ontology), the tested chemical (using an InChIKey or CAS RN), test species, and endpoints measured [3].
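As a minimal sketch of what "rich metadata plus a PID" might look like in practice, the Python snippet below builds a dataset record and checks it for the findability elements named above. The field names and the placeholder DOI are hypothetical and do not follow any particular repository schema; the InChIKey shown is that of water and is used purely as a well-formed example.

```python
# Hypothetical field names; a real repository defines its own metadata schema.
REQUIRED_FIELDS = ("identifier", "title", "keywords", "chemical", "species", "endpoints")


def missing_findability_fields(record: dict) -> list[str]:
    """Return required fields that are absent or empty in a metadata record."""
    return [name for name in REQUIRED_FIELDS if not record.get(name)]


record = {
    "identifier": "doi:10.xxxx/placeholder",  # placeholder, not a real DOI
    "title": "Acute toxicity of a test chemical to Pimephales promelas",
    "keywords": ["ecotoxicology", "acute toxicity"],
    "chemical": {"inchikey": "XLYOFNOQVPJJNP-UHFFFAOYSA-N"},  # water, example only
    "species": "Pimephales promelas",
    "endpoints": [],  # left empty on purpose so the check reports it
}

print(missing_findability_fields(record))
```

A check like this could run before deposit so that incomplete records are caught while the experimenter still remembers the missing details.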

3.2 Accessible

Accessibility stipulates that once a user finds the desired data's metadata and identifier, they can retrieve the data using a standardized, reliable protocol [1] [6]. This often involves APIs (Application Programming Interfaces) for programmatic access. Importantly, metadata should remain accessible even if the underlying data is deprecated or access is restricted [3]. For sensitive ecotoxicology data (e.g., from confidential business information studies), the principle requires clear authentication and authorization protocols, not necessarily open access [4] [5].
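To illustrate retrieval "by identifier over a standard protocol", the sketch below prepares an HTTPS request that asks the DOI resolver for machine-readable metadata through content negotiation. It assumes a DOI registered with DataCite (the media type is DataCite-specific), uses a placeholder DOI, and treats the bearer token as a stand-in for whatever authorization scheme a restricted repository actually uses. The request is built but deliberately not sent.

```python
from typing import Optional
from urllib.request import Request

# Media type for DataCite JSON metadata; other registries may need a different one.
DATACITE_JSON = "application/vnd.datacite.datacite+json"


def build_metadata_request(doi: str, token: Optional[str] = None) -> Request:
    """Prepare (but do not send) an HTTPS request for a DOI's metadata."""
    headers = {"Accept": DATACITE_JSON}
    if token:
        # Restricted records: authenticate rather than assume open access.
        headers["Authorization"] = f"Bearer {token}"
    return Request(f"https://doi.org/{doi}", headers=headers)


req = build_metadata_request("10.xxxx/placeholder")  # placeholder DOI
print(req.full_url, req.get_header("Accept"))
```

Passing the prepared request to `urllib.request.urlopen` would perform the actual retrieval; keeping construction separate makes the protocol details easy to inspect and test offline.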

3.3 Interoperable

Interoperable data uses shared languages and vocabularies to allow integration with other datasets. This is paramount in ecotoxicology for combining data across studies, chemicals, or species. Key practices include:

- Using controlled vocabularies and ontologies (e.g., Environment Ontology (ENVO), Phenotype And Trait Ontology (PATO), Chemical Entities of Biological Interest (ChEBI)) to describe terms unambiguously [3].

- Using standard, machine-readable data formats (e.g., JSON-LD, RDF, well-structured CSV following templates) over proprietary or custom binary formats [3].

- Including qualified references to other related data (e.g., linking a toxicity result to the specific chemical structure in PubChem and the test protocol in a methods repository) [1].
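The practices above can be sketched in a few lines of Python: ontology terms are written as compact identifiers (CURIEs) and expanded to resolvable IRIs, then serialized as JSON. The record layout is a hypothetical, JSON-LD-style illustration rather than a complete JSON-LD document (it has no `@context`), and the two terms used (ChEBI water, NCBI Taxonomy zebrafish) were chosen only because their identifiers are widely known.

```python
import json

OBO_BASE = "http://purl.obolibrary.org/obo/"


def curie_to_iri(curie: str) -> str:
    """Expand an OBO-style CURIE such as 'CHEBI:15377' into its PURL."""
    prefix, local_id = curie.split(":", 1)
    return f"{OBO_BASE}{prefix}_{local_id}"


# Hypothetical record layout; keys other than "@id" are illustrative only.
result = {
    "chemical": {"@id": curie_to_iri("CHEBI:15377"), "label": "water"},
    "organism": {"@id": curie_to_iri("NCBITaxon:7955"), "label": "Danio rerio"},
    "endpoint_label": "LC50",
}

print(json.dumps(result, indent=2))
```

Storing the IRI next to a human-readable label lets software match terms exactly while people can still read the record.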

3.4 Reusable

Reusability is the ultimate goal, demanding that data is richly described with the clarity and context needed for replication or novel application. This extends beyond basic metadata to include [6] [7]:

- Clear Licensing: An explicit data usage license (e.g., Creative Commons, Open Data Commons) defines the terms of reuse [2].

- Detailed Provenance: A complete history of the data's origin and processing steps [3].

- Community Standards: Adherence to domain-specific reporting standards, such as the newly developed community (meta)data reporting formats for environmental science, which provide templates for data like water chemistry or soil respiration measurements [8].

- Accurate, Relevant Attributes: Comprehensive documentation of methodologies, units, measurement precision, and quality control steps [3].
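One lightweight way to capture licensing and provenance together is a processing log in which each step records a checksum of its output. The sketch below is an assumption about how such a log could be structured, not a standard such as PROV-O; the field names and the toy data are invented for illustration.

```python
import hashlib
from datetime import datetime, timezone


def provenance_entry(step: str, data: bytes, license_id: str) -> dict:
    """Describe one processing step with a checksum of its output."""
    return {
        "step": step,
        "sha256": hashlib.sha256(data).hexdigest(),
        "license": license_id,  # e.g. an SPDX identifier
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


raw = b"conc_mg_L,mortality\n0.1,0\n1.0,3\n"  # invented toy data
cleaned = raw.replace(b"mortality", b"n_dead")  # header renamed to a template term

log = [
    provenance_entry("raw instrument export", raw, "CC-BY-4.0"),
    provenance_entry("header normalised", cleaned, "CC-BY-4.0"),
]

for entry in log:
    print(entry["step"], entry["sha256"][:12])
```

Because the checksum changes whenever the bytes change, a later user can verify that the file they hold corresponds to a specific step in the documented history.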

Implementing FAIR: Protocols and a Framework for Ecotoxicology

Moving from principle to practice requires a structured approach. The following protocol, adapted from successful community frameworks in environmental science, provides an actionable pathway [8] [9].

4.1 Experimental Protocol: Adopting Community Reporting Formats

A proven methodology for achieving interoperability and reusability is the development and use of community-centric (meta)data reporting formats [8]. These are templates and guidelines for consistently formatting specific data types.

- Objective: To structure ecotoxicology datasets for seamless integration and reuse by standardizing both data and metadata formatting.

- Materials: Raw experimental data, spreadsheet or database software, access to relevant ontologies (e.g., OBO Foundry), a trusted data repository (e.g., ESS-DIVE, Zenodo).

- Procedure:

  1. Identify Relevant Standards: Before data collection, search for existing reporting formats or standards in ecotoxicology and adjacent fields (e.g., the formats for water chemistry or amplicon sequences developed for environmental science) [8].

  2. Create a Data Crosswalk: Map the variables and metadata you plan to collect to terms in existing ontologies. Identify gaps where community standards are lacking [8].

  3. Use and Adapt Templates: Employ existing reporting format templates. If none exist for your specific data type (e.g., a novel behavioral bioassay), draft a new template using a generic framework. Define a minimal set of required metadata fields (e.g., chemical identifier, concentration, exposure duration, species/strain, endpoint, statistical n) and optional fields for rich context [8].

  4. Iterate with Community Feedback: Share draft templates with collaborators or at community workshops to refine variable definitions and ensure utility [8].

  5. Document and Publish the Format: Finalize the format with clear instructions. Publish the format template itself in a repository with a PID to enable citation and version control [8].

  6. Apply the Format: Structure all collected data according to the final template. Use vocabulary terms from ontologies in metadata fields.

  7. Deposit Data: Submit the formatted dataset and its rich metadata to a FAIR-aligned repository, ensuring it receives a persistent identifier [3].
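The "apply the format" step lends itself to an automated check before deposit. The following sketch validates a CSV file against the minimal required fields listed in the procedure; the column names, the `CHEM-001` identifier, and the sample rows are hypothetical and would be replaced by whatever the agreed template specifies.

```python
import csv
import io

# Minimal required fields taken from the procedure; column names are hypothetical.
REQUIRED = ["chemical_id", "concentration", "exposure_duration_h", "species", "endpoint", "n"]


def validate_rows(csv_text: str) -> list[str]:
    """Return human-readable problems found in a template-formatted CSV."""
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []
    problems = [f"missing column: {col}" for col in REQUIRED if col not in header]
    if problems:
        return problems
    for line_no, row in enumerate(reader, start=2):  # line 1 is the header
        for col in REQUIRED:
            if not (row[col] or "").strip():
                problems.append(f"line {line_no}: empty {col}")
    return problems


sample = (
    "chemical_id,concentration,exposure_duration_h,species,endpoint,n\n"
    "CHEM-001,1.0,24,Pimephales promelas,survival,10\n"
    "CHEM-001,,24,Pimephales promelas,survival,10\n"
)
print(validate_rows(sample))
```

Reporting line numbers rather than failing on the first error lets a researcher fix a whole spreadsheet in one pass.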

Table 2: Common Challenges and Strategic Solutions in FAIR Implementation [4] [5]

| Challenge | Prevalence / Impact | Strategic Solution for Ecotoxicology |
|---|---|---|
| Fragmented Data Systems & Formats | High. Labs use diverse instruments and software, creating silos. | Adopt Laboratory Information Management Systems (LIMS) or middleware that export data in standardized, machine-readable formats. Use consolidated platforms for data warehousing [5]. |
| Lack of Standardized Metadata | Very High. Free-text descriptions are common and unparseable. | Implement metadata templates (e.g., reporting formats) mandatory for data submission. Employ data stewards to assist researchers [2] [8]. |
| High Cost of Transforming Legacy Data | Significant. Retrofitting old data is resource-intensive. | Prioritize FAIRification for high-value legacy datasets with reuse potential. Seek dedicated funding for curation projects. Focus on making new data FAIR from the outset [2]. |
| Cultural Resistance & Lack of Skills | Major barrier. FAIR is perceived as a burden with unclear reward. | Integrate FAIR training into graduate programs. Institutions must recognize data management as a scholarly contribution and provide professional support staff [3]. |
| Ambiguous Data Ownership & Governance | Creates compliance and audit risk, especially with multi-partner projects. | Develop clear, project-specific data governance agreements upfront. Define roles for data stewards, custodians, and lifecycle owners [5]. |

Implementing FAIR principles is facilitated by a growing ecosystem of tools and resources.

- Metadata Standards and Ontologies: The Environment Ontology (ENVO) for habitats and environmental materials; Chemical Entities of Biological Interest (ChEBI) for small molecules; Phenotype And Trait Ontology (PATO) for measured outcomes; the ECOTOX Ontology for specific toxicological concepts.

- Data Repositories: ESS-DIVE for integrated environmental systems science data; Zenodo or Figshare for general-purpose, citable archiving; GBIF for biodiversity and species occurrence data; US EPA CompTox Chemicals Dashboard for chemical property and toxicity data.

- FAIRification Tools: FAIR Data Point software for publishing metadata; OpenRefine for data cleaning and reconciliation with ontologies; ISA (Investigation-Study-Assay) tools for creating standardized metadata.

- Implementation Frameworks: The FAIR Process Framework provides a six-step guide (Discovery, Understanding, Planning, Co-developing, Strategy, Implementing) for organizational adoption [9]. The Three-point FAIRification Framework (from GO FAIR) offers a practical "how-to" guideline [1].

The FAIR principles represent a fundamental shift in scientific culture, treating data as a primary, reusable research output. For ecotoxicology, embracing FAIR is not an administrative burden but a strategic imperative to enhance reproducibility, accelerate discovery through data fusion, and maximize the return on investment from complex and expensive environmental studies. The journey to becoming FAIR requires commitment, resources, and community collaboration, often facilitated by data stewards—professionals specializing in data management and curation [2].

The future of FAIR lies in increased automation (e.g., AI-assisted metadata generation), deeper semantic interoperability (FAIR 2.0), and the concept of FAIR Digital Objects—bundles of data, metadata, and code that are independently actionable [2] [10]. As funders and publishers increasingly mandate FAIR-aligned data practices, the ecotoxicology community that leads in implementing these principles will be best positioned to generate robust, credible, and impactful science for environmental protection.

Why FAIR is Transformative for Ecotoxicology and Environmental Health Research

Ecotoxicology and environmental health research are at a critical juncture. The field faces a dual challenge: an ever-expanding list of environmental chemicals requiring safety assessment and a well-documented crisis in research reproducibility that leads to wasted resources and delayed policy action [11]. This is compounded by data that are often siloed in incompatible formats, described with inconsistent terminology, and lack the detailed metadata necessary for validation or reuse. The Findable, Accessible, Interoperable, and Reusable (FAIR) principles provide a transformative framework to overcome these obstacles, shifting the paradigm from data as a private research output to a public, foundational asset for the entire scientific community.

The imperative for FAIR is not merely theoretical. In drug discovery, making a large-scale toxicology database (eTOX) more FAIR directly increased its potential for reuse and sharing, which can lower drug attrition rates, reduce animal testing, and accelerate novel drug development [12]. Similarly, in environmental health, the preregistration of studies through platforms like the FAIR Environmental and Health Registry (FAIREHR) enhances transparency and harmonizes data collection from the outset, enabling more robust exposure assessments and policy decisions [13] [14]. This article details how the systematic application of FAIR principles—through standardized reporting, persistent identifiers, and interoperable metadata—is revolutionizing experimental workflows, empowering computational toxicology, and building a sustainable, collaborative future for environmental science.

Table 1: Documented Impact of FAIR Implementation in Toxicology and Environmental Health

| Project/Initiative | Domain | Key FAIR Achievement | Quantified or Projected Benefit |
|---|---|---|---|
| eTOX IMI Project [12] | Predictive Toxicology | Increased FAIRness level from 25% to 50% via chemical identifier standardization and ontology mapping. | Enables broader sharing/reuse of 8.8 million pre-clinical data points; potential to lower drug attrition and reduce animal testing. |
| FAIREHR Platform [13] [14] | Human Biomonitoring (HBM) | Prospective harmonization of HBM metadata via a preregistration registry using the Minimum Information Requirements for HBM (MIR-HBM). | Enhances comparability of global HBM studies, supports machine discoverability, and strengthens the science-to-policy interface. |
| EFSA on Effect Models [15] | Regulatory Risk Assessment | Framework for interpreting FAIR principles for mechanistic effect models used in pesticide risk assessment. | Leads to a more efficient model review process and better integration of advanced models into regulatory workflows. |

Deconstructing FAIR: Core Principles and Their Technical Implementation

The FAIR principles establish a continuum of requirements that ensure data are machine-actionable and ready for reuse by humans. Their implementation in ecotoxicology requires domain-specific standards, tools, and a shift in research culture.

Findable: The foundation of data reuse is discoverability. This is achieved by assigning Globally Unique and Persistent Identifiers (PIDs) to both datasets and key entities within them (e.g., chemicals, organisms, samples). For example, the FAIRification of the eTOX database involved converting chemical files to commonly accepted standards and extracting formal identifiers [12]. Resources like the Research Organization Registry (ROR) provide PIDs for institutions, further clarifying provenance [16]. Rich, standardized metadata must then be registered in searchable repositories.

Accessible: Data and metadata should be retrievable by their identifier using a standardized, open communication protocol. This does not necessarily mean "open access"; data can be accessible under well-defined authorization procedures. The key is that the protocol itself is open, free, and universally implementable. Platforms like the Information Platform for Chemical Monitoring (IPCHEM) exemplify this by providing standardized access to human biomonitoring data [13].

Interoperable: This is the most technical pillar, requiring data to integrate with other datasets and applications. It is achieved through the use of controlled vocabularies, ontologies, and community-developed reporting formats. For instance, the FAIREHR platform uses a harmonized metadata schema based on MIR-HBM to ensure different studies collect compatible data [13]. The environmental health community utilizes standards like the Tox Bio Checklist (TBC) and Toxicology Experiment Reporting Module (TERM) to describe in vivo studies [11]. Tools like the ISA (Investigation, Study, Assay) framework and the CEDAR workbench provide structured platforms to collect this interoperable metadata [11].
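The nesting that the ISA framework describes can be pictured with plain Python dataclasses. This is only an illustrative sketch of the Investigation, Study, Assay hierarchy; it does not use the ISA tools API, and every class name, field name, and example value here is an assumption made for the illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Assay:
    measurement: str  # what is measured
    technology: str   # how it is measured


@dataclass
class Study:
    title: str
    organism: str
    assays: list[Assay] = field(default_factory=list)


@dataclass
class Investigation:
    title: str
    studies: list[Study] = field(default_factory=list)


def count_assays(investigation: Investigation) -> int:
    """Total number of assays across all studies in an investigation."""
    return sum(len(study.assays) for study in investigation.studies)


inv = Investigation(
    title="Example endocrine disruption investigation",
    studies=[
        Study(
            title="Waterborne exposure",
            organism="Pimephales promelas",
            assays=[Assay("plasma hormone level", "ELISA"), Assay("gene expression", "qPCR")],
        )
    ],
)
print(count_assays(inv))
```

Keeping study-level context (organism, design) separate from assay-level detail (measurement, technology) is what allows metadata from different experiments to be compared field by field.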

Reusable: The ultimate goal is to optimize data reuse. This depends on the other three principles and adds the requirement of rich, domain-relevant context. Data must be released with a clear usage license and detailed provenance, describing how the data were generated. The FAIRplus Cookbook provides reusable "recipes" (e.g., for chemical identifier conversion or ontology mapping) that codify best practices for FAIRification, directly supporting this principle [12].

Table 2: Key Reporting Standards and Tools for FAIR Environmental Health Data [11]

| Standard/Tool | Full Name | Primary Purpose | Relevance to Ecotoxicology |
|---|---|---|---|
| TBC | Tox Bio Checklist | Minimum information for toxicogenomics and other toxicology data. | Specifically designed for environmental health; captures study design and biology. |
| TERM | Toxicology Experiment Reporting Module | Reporting module for toxicology experiments (OECD). | Developed for regulatory toxicology; applicable to standardized ecotoxicity tests. |
| ISA Framework | Investigation, Study, Assay | A metadata tracking framework to manage an increasingly diverse set of life science experiments. | Structures complex environmental health study metadata to enhance interoperability. |
| CEDAR | Center for Expanded Data Annotation and Retrieval | A metadata management platform based on semantic web technology. | Enables creation of smart, ontology-based metadata forms for experimental data. |

A Blueprint for Action: Experimental Protocol for a FAIR-Enabled qAOP Study

The following protocol, based on published research using quantitative Adverse Outcome Pathways (qAOPs), demonstrates how FAIR principles can be embedded into a concrete ecotoxicology experiment. The study aims to predict in vivo endocrine disruption from in vitro data by leveraging the AOP for aromatase inhibition leading to reproductive impairment in fish (AOP-Wiki #25) [17].

1. Study Preregistration & Data Management Planning:

- Action: Before experimentation, register the study design in a public registry like FAIREHR [13] [14]. Create a Data Management Plan (DMP) outlining how all digital objects (raw data, metadata, code, models) will be made FAIR.

- FAIR Rationale: Ensures findability and combats publication bias. The DMP ensures reusability by pre-defining provenance, formats, and licenses.

2. Chemical Selection & Identifier Assignment:

- Action: Select test chemicals (e.g., letrozole, imazalil) from a source like the EPA ToxCast dashboard. For each chemical, obtain and record standardized identifiers (CAS No., DSSTox CID, InChIKey, SMILES) at the start [17].

- FAIR Rationale: Unique, persistent identifiers make chemicals findable and interoperable across databases, enabling linkage to other hazard data.
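Identifiers recorded at the start of a study are only useful if they are well-formed, so a quick syntactic check at data entry can help. The sketch below validates the block layout of a standard InChIKey and the check digit of a CAS Registry Number. It confirms format only; it cannot tell whether an identifier actually belongs to the intended chemical, which still requires a lookup in an authoritative database.

```python
import re

INCHIKEY_RE = re.compile(r"[A-Z]{14}-[A-Z]{10}-[A-Z]")


def is_wellformed_inchikey(key: str) -> bool:
    """Check only the 14-10-1 uppercase block layout of a standard InChIKey."""
    return INCHIKEY_RE.fullmatch(key) is not None


def is_valid_cas(cas: str) -> bool:
    """Verify the check digit of a CAS Registry Number such as '7732-18-5'."""
    if not re.fullmatch(r"\d{2,7}-\d{2}-\d", cas):
        return False
    digits = cas.replace("-", "")
    body, check = digits[:-1], int(digits[-1])
    # Weights count up from 1, starting at the digit just left of the check digit.
    total = sum(int(d) * weight for weight, d in enumerate(reversed(body), start=1))
    return total % 10 == check


print(is_valid_cas("7732-18-5"))  # water
print(is_wellformed_inchikey("XLYOFNOQVPJJNP-UHFFFAOYSA-N"))  # water
```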

3. In Vitro Aromatase Inhibition Assay:

- Methodology: Conduct a concentration-response assay using fathead minnow (Pimephales promelas) ovarian aromatase enzyme or cell line.

- FAIR-Compliant Metadata: Describe the assay using terms from ontologies (e.g., BioAssay Ontology). Report exact concentrations, exposure time, temperature, and positive/negative controls. Express potency as an AC50 (the concentration producing 50% of the maximal response) and report it in a structured table. Link the raw analytical data (e.g., plate reader outputs) to the processed results.
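For readers unfamiliar with how an AC50 is read off a concentration-response series, the sketch below estimates it by log-linear interpolation between the two tested concentrations that bracket 50% response. This is a deliberately simple stand-in for illustration, not the curve-fitting procedure of the cited study (a full analysis would typically fit a Hill-type model), and the numbers are invented toy data.

```python
import math


def interpolate_ac50(concs: list[float], responses: list[float]) -> float:
    """Estimate the concentration giving 50% response by log-linear interpolation.

    concs must be ascending and positive; responses are percent of maximal effect.
    """
    points = list(zip(concs, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo != r_hi and min(r_lo, r_hi) <= 50.0 <= max(r_lo, r_hi):
            frac = (50.0 - r_lo) / (r_hi - r_lo)
            log_ac50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ac50
    raise ValueError("responses do not bracket 50%")


# Invented toy data (concentration in µM, percent inhibition); not study results.
concs = [0.01, 0.1, 1.0, 10.0]
inhibition = [5.0, 20.0, 80.0, 95.0]
print(round(interpolate_ac50(concs, inhibition), 3))
```

Whatever estimation method is used, recording it alongside the AC50 value in the metadata is what makes the number interpretable by others.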

4. In Vivo Fathead Minnow Exposure:

- Methodology: Expose female fathead minnows to a logarithmic concentration series of the chemical in water (e.g., five concentrations plus a control) for 24 hours [17].

- FAIR-Compliant Metadata:

- Organism: Use a taxonomy identifier (e.g., NCBI:txid90988 for Pimephales promelas).

- Exposure: Document water chemistry (pH, hardness, temperature), exposure regimen (static/renewal), and measured versus nominal concentrations.

- Sample Collection: Record precise sampling times and methods for blood (for plasma E2) and tissue (liver for vtg mRNA, ovary for cyp19a1a and fshr mRNA).

- Endpoints: Measure plasma 17β-estradiol (E2) via ELISA, hepatic vitellogenin (vtg) mRNA via qPCR, and ovarian cyp19a1a and fshr mRNA via qPCR [17].

5. Data Integration & qAOP Modeling:

- Action: Express in vitro chemical potency relative to a reference inhibitor (e.g., fadrozole) as Fadrozole Equivalents (FAD-EQ). Use this as input to a published qAOP mathematical model to predict in vivo E2 reduction. Compare predictions to measured E2 values [17].

- FAIR Rationale: Using a standardized unit (FAD-EQ) and a publicly accessible, well-described model enhances interoperability and reusability of the analysis. The entire dataset supports the reusability of the AOP itself.
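The text does not spell out how FAD-EQ is calculated, so the sketch below assumes the usual relative-potency convention: potency is the ratio of the reference AC50 to the test chemical's AC50, and an exposure concentration is scaled by that ratio. Both function names and all numbers are illustrative assumptions, and the cited study's exact formulation may differ.

```python
def relative_potency(ac50_reference: float, ac50_test: float) -> float:
    """Potency of a test chemical relative to the reference inhibitor (assumed ratio)."""
    if ac50_reference <= 0 or ac50_test <= 0:
        raise ValueError("AC50 values must be positive")
    return ac50_reference / ac50_test


def fad_eq(exposure_conc: float, ac50_fadrozole: float, ac50_test: float) -> float:
    """Convert an exposure concentration into fadrozole-equivalent units."""
    return exposure_conc * relative_potency(ac50_fadrozole, ac50_test)


# Invented numbers for illustration only; all values share one concentration unit.
print(fad_eq(exposure_conc=100.0, ac50_fadrozole=0.5, ac50_test=5.0))
```

Under this assumption, a chemical ten times less potent than fadrozole contributes one tenth of its concentration in fadrozole equivalents, which is the quantity passed to the qAOP model.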

6. Data Deposition & Publication:

- Action: Deposit all raw and processed data in a public repository (e.g., Gene Expression Omnibus for omics data, Zenodo for diverse data). Use the repository's tools to create rich metadata, linking to the preregistration record. Publish the model code on a platform like GitHub. Cite all datasets and software with their PIDs in the resulting manuscript.

- FAIR Rationale: This final step fulfills all FAIR principles, making every digital object findable via its PID, accessible via the repository, interoperable via shared formats, and reusable via complete provenance and licensing.

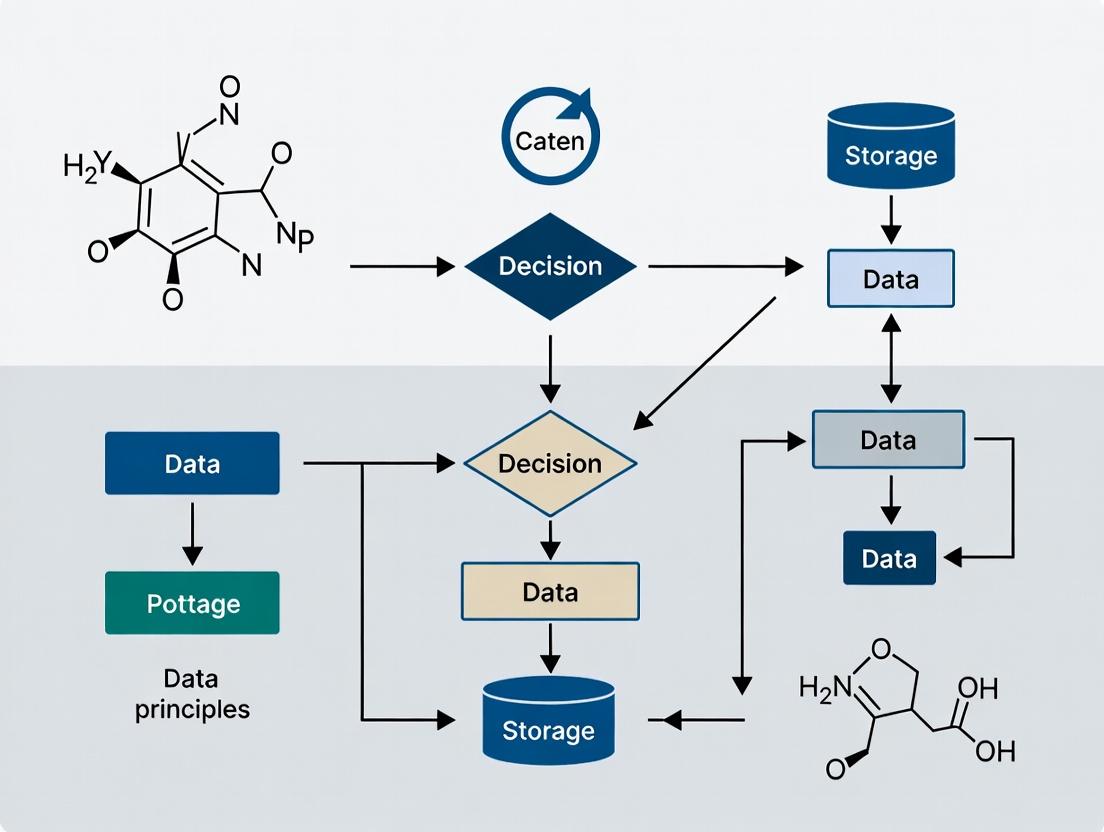

Diagram 1: FAIR Implementation Workflow for Ecotoxicology Studies. This workflow illustrates the integration of FAIR principles into the research lifecycle, from planning to reuse [13] [11] [17].

Building a FAIR-compliant ecotoxicology study requires both traditional laboratory materials and new digital resources. This toolkit lists essential items for conducting and documenting a study like the qAOP investigation for aromatase inhibitors described above [17].

Table 3: Research Reagent Solutions for a FAIR qAOP Study on Aromatase Inhibition

| Reagent / Resource | Specification / Example | Function in the Study |
|---|---|---|
| Test Organism | Fathead minnow (Pimephales promelas), reproductively mature females. | In vivo model organism for assessing endocrine disruption. |
| Reference Chemical | Fadrozole hydrochloride (CAS 102676-47-1). | Potent, specific aromatase inhibitor used to calibrate the in vitro assay and as a baseline for FAD-EQ calculation. |
| Test Chemicals | Letrozole, Imazalil, Epoxiconazole (with CAS No., DSSTox CID). | Chemicals with suspected aromatase-inhibiting activity to test the qAOP prediction. |
| In Vitro Assay System | Recombinant fathead minnow aromatase enzyme or ovarian cell preparation. | System for measuring the molecular initiating event (aromatase inhibition) potency (AC50). |
| qPCR Assay Kits | Assays for cyp19a1a, vtg, fshr, and housekeeping genes (e.g., ef1a). | Quantification of gene expression changes as key event responses in tissues. |
| Hormone ELISA Kit | 17β-Estradiol (E2) ELISA kit, validated for fish plasma. | Measurement of a critical physiological key event (circulating estrogen level). |
| Metadata Collection Tool | ISA framework configuration or CEDAR template based on TBC/TERM. | Tool to structure and collect standardized experimental metadata. |
| Chemical Identifier Database | EPA CompTox Chemicals Dashboard, NORMAN Network. | Authoritative source to obtain persistent identifiers (DTXSID, InChIKey) and properties for test chemicals. |
| Data Repository | Public domain repository (e.g., Zenodo, GEO, BCO-DMO). | Platform for the permanent, citeable deposition of datasets, models, and metadata with a PID. |

Transformative Outcomes: From Data Silos to Predictive Science

The rigorous implementation of FAIR principles catalyzes a fundamental transformation across the ecotoxicology and environmental health landscape.

Accelerated Hazard Assessment & Reduced Animal Testing: FAIR data enables the development and validation of New Approach Methodologies (NAMs) like qAOPs. The study on aromatase inhibitors demonstrates how in vitro data, made interoperable through standardized reporting, can be used to predict in vivo outcomes [17]. This directly supports the 3Rs (Replacement, Reduction, Refinement) by providing reliable, mechanistically grounded alternatives to traditional whole-animal testing.

Empowered Computational Toxicology and AI: Machine learning and artificial intelligence require large, high-quality, and interoperable training datasets. FAIR data provides this fuel. For example, the FAIREHR platform creates machine-discoverable metadata that can be leveraged by AI tools to identify exposure patterns or predict health risks [13]. Similarly, a FAIRified database like eTOX becomes a powerful resource for training predictive toxicology models [12].

Strengthened Regulatory and Policy Decision-Making: Regulatory bodies like the European Food Safety Authority (EFSA) are actively interpreting FAIR principles for mechanistic models used in risk assessment [15]. FAIR data ensures that the evidence supporting regulations is transparent, reproducible, and based on the integrated totality of available science. This builds greater trust and efficacy in public health and environmental protection measures.

Catalyzed Global Collaboration and Innovation: FAIR breaks down barriers between academia, industry, and government. It allows disparate research groups to build upon each other's work efficiently, turning individual studies into interconnected parts of a global evidence network. This collaborative environment is essential for tackling complex challenges like chemical mixtures, environmental justice, and planetary health.

Diagram 2: Aromatase Inhibition Adverse Outcome Pathway (AOP) and FAIR Data Integration. This diagram visualizes the biological pathway from molecular initiation to adverse outcome, highlighting how FAIR in vitro and in vivo data are integrated to build and validate predictive quantitative models (qAOPs) [17].

The adoption of FAIR principles represents a necessary and transformative evolution for ecotoxicology and environmental health research. It moves the field beyond isolated, single-use data generation toward a future where research outputs are integrated, foundational assets. By making data Findable, Accessible, Interoperable, and Reusable, scientists can accelerate the pace of discovery, enhance the reliability of risk assessments, reduce reliance on animal testing, and provide policymakers with a more robust, integrated evidence base. The tools, standards, and platforms—from reporting formats and ontologies to registries like FAIREHR—are now available. The challenge and opportunity lie in their widespread adoption, embedding FAIR practices into the very fabric of the research lifecycle to build a more sustainable, collaborative, and impactful science for environmental and public health.

A Deep Dive into Findability, Accessibility, Interoperability, and Reusability

Ecotoxicology, the science of understanding the impacts of chemicals on ecosystems, is undergoing a data-driven revolution. The field generates vast amounts of complex data from high-throughput in vitro assays, omics technologies, environmental monitoring, and computational models. The central challenge is no longer data generation but effective data stewardship. The Findable, Accessible, Interoperable, and Reusable (FAIR) principles have emerged as the critical framework to transform this heterogeneous data from isolated results into a cohesive, actionable knowledge asset [18].

Within the broader goal of advancing animal-free safety assessment and robust environmental risk analysis, implementing FAIR is essential for computational toxicology models [18]. FAIR ensures that models and the data underpinning them are transparent, trustworthy, and integrable across studies and institutions. This guide provides a technical deep dive into each FAIR pillar, translating the principles into actionable protocols and tools for researchers, scientists, and drug development professionals dedicated to building a sustainable, data-centric future for ecotoxicology.

Deconstructing the FAIR Principles: A Technical Analysis

The FAIR principles provide a structured approach to data management. The following table breaks down each principle into its core technical requirements, implementation examples from ecotoxicology, and key enabling technologies.

Table 1: Technical Specification and Implementation of FAIR Principles in Ecotoxicology

| FAIR Principle | Core Technical Requirement | Ecotoxicology Implementation Example | Key Enabling Technology / Standard |
|---|---|---|---|
| Findable | Rich, machine-readable metadata with a globally unique and persistent identifier. | Assigning a DOI to a dataset from a Daphnia magna toxicity transcriptomics study. Metadata includes chemical identifier (e.g., InChIKey), exposure conditions, and sequencing platform. | Digital Object Identifier (DOI), DataCite Metadata Schema, ECOTOX Knowledgebase identifiers. |
| Accessible | Data is retrievable by their identifier using a standardized, open communication protocol. | Storing data in a public repository like Figshare or GEO (Gene Expression Omnibus) with a standard HTTPS protocol, even if access requires authentication/authorization. | HTTPS/HTTP, OAuth 2.0, FAIR Data Point, Repository APIs. |
| Interoperable | Data uses formal, accessible, shared, and broadly applicable languages and vocabularies. | Using the ECOTOX ontology to describe "LC50" and the OBO Relation Ontology for "has_result" instead of free-text column headers like "result1". | Ontologies (e.g., ECOTOX, EnvO, ChEBI), JSON-LD, RDF data models, controlled vocabularies. |
| Reusable | Data are richly described with multiple relevant attributes, clear usage licenses, and detailed provenance. | A QSAR model package includes the training data (with license), algorithm parameters, validation results, and a clear provenance trail from raw data to final model [18]. | Research Resource Identifiers (RRIDs), PROV-O ontology, Creative Commons licenses, detailed README files. |

A refined concept known as FAIR Lite has been proposed specifically for computational toxicology models. It condenses the principles into four actionable criteria: a unique identifier for citation, comprehensive model capture and curation, detailed metadata for variables and data, and storage on a searchable, interoperable platform [18]. This pragmatic approach ensures models are not just theoretically FAIR but are practically usable by risk assessors.

Experimental Protocols for FAIR Data Generation in Ecotoxicology

Implementing FAIR begins at the experimental design phase. The following protocols outline methodologies for generating data with inherent FAIRness.

Protocol 1: Generating FAIR-Compliant Data for an Omics-Based Ecotoxicity Study

This protocol details the steps for a transcriptomics experiment to assess the molecular impact of a contaminant on zebrafish (Danio rerio) embryos.

- Pre-Experimental Registration: Before beginning, register the study design in a publicly accessible registry (e.g., via the FAIRsharing.org resource for ecotoxicology). Define and document all variables (chemical, concentration, exposure duration, biological replicates) using controlled terms.

- Sample Processing & Data Generation:

  - Expose zebrafish embryos to the test chemical and a control according to OECD Test Guideline 236.

  - Extract RNA, prepare libraries, and perform RNA-sequencing.

  - Generate raw sequencing reads (FASTQ files) and processed gene count matrices.

- Metadata Curation: Simultaneously, create a machine-readable metadata file (e.g., in JSON-LD format). This must include:

  - Unique Identifier: A reserved DOI for the dataset.

  - Provenance: Detailed protocol steps, software versions (e.g., Trim Galore! v0.6.10, DESeq2 v1.40.2).

  - Context: Chemical identifier (InChIKey), organism (NCBI Taxonomy ID: 7955), exposure conditions with units, and links to the registered study design.

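- Data Deposition: Upload the raw FASTQ files, processed count matrix, and the JSON-LD metadata file to a specialized repository like the Gene Expression Omnibus (GEO) or the European Nucleotide Archive (ENA). These archives assign persistent accession numbers upon public release rather than DOIs; where a DOI is required, deposit the metadata file and processed data in a DOI-minting repository such as Zenodo and cross-reference the accession numbers.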
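The metadata curation step of Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed schema: the DOI `10.1234/example-dataset`, the `ex:` namespace, the exposure values, and the CC BY 4.0 license are all assumptions made for the example, and the InChIKey shown is caffeine's, used only as a stand-in for the test chemical. The schema.org terms (`Dataset`, `PropertyValue`, `variableMeasured`) are one possible vocabulary among several.

```python
import json


def build_dataset_metadata(doi, inchikey, taxon_id, exposure, software):
    """Assemble a JSON-LD description of one ecotoxicity omics dataset."""
    return {
        "@context": {
            "@vocab": "https://schema.org/",
            # Hypothetical namespace for terms schema.org does not define.
            "ex": "https://example.org/ecotox-terms/",
        },
        "@type": "Dataset",
        "identifier": f"https://doi.org/{doi}",
        # Assumed license; the protocol itself does not prescribe one.
        "license": "https://creativecommons.org/licenses/by/4.0/",
        "measurementTechnique": "RNA-seq",
        "variableMeasured": [
            {"@type": "PropertyValue", "name": "chemical_inchikey",
             "value": inchikey},
            {"@type": "PropertyValue", "name": "ncbi_taxonomy_id",
             "value": taxon_id},
            {"@type": "PropertyValue", "name": "exposure_duration",
             "value": exposure["duration"],
             "unitText": exposure["duration_unit"]},
            {"@type": "PropertyValue", "name": "exposure_concentration",
             "value": exposure["concentration"],
             "unitText": exposure["concentration_unit"]},
        ],
        # Provenance: software names and versions used in processing.
        "ex:softwareVersion": [f"{n} {v}" for n, v in software.items()],
    }


metadata = build_dataset_metadata(
    doi="10.1234/example-dataset",              # placeholder DOI
    inchikey="RYYVLZVUVIJVGH-UHFFFAOYSA-N",     # caffeine, as a stand-in
    taxon_id=7955,                              # Danio rerio
    exposure={"duration": 96, "duration_unit": "h",
              "concentration": 10.0, "concentration_unit": "mg/L"},
    software={"Trim Galore!": "0.6.10", "DESeq2": "1.40.2"},
)
print(json.dumps(metadata, indent=2))
```

Keeping units in separate `unitText` fields, rather than embedding them in value strings, is what lets downstream software compare exposure conditions across studies without parsing free text.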

Protocol 2: Implementing FAIR Lite for a QSAR Ecotoxicity Model [18]

This protocol follows the FAIR Lite framework for a Quantitative Structure-Activity Relationship (QSAR) model predicting fish acute toxicity.

- Model Identification & Capture: Assign a unique identifier (e.g., a DOI or a model ID in the QSAR Model Reporting Format). Exhaustively document the model in a structured format, including: the mathematical algorithm, software code (e.g., Python/R script), and all dependencies.

- Variable & Data Metadata: For the training dataset, specify metadata for all dependent (e.g., LC50 value, unit: mg/L, species: Pimephales promelas) and independent variables (e.g., molecular descriptors like logP, topological surface area). Where possible, provide or link to the underlying experimental data.

- Model Curation & Packaging: Package the model into a reusable container (e.g., a Docker image, a Python package on PyPI). Include a clear human- and machine-readable manifest file listing all components, their roles, and relationships.

- Storage in a Searchable Platform: Deposit the entire model package, including code, data metadata, and documentation, into a searchable platform such as the JRC QSAR Model Database, GitHub with a Zenodo DOI, or a specialized computational toxicology platform. Ensure the platform's metadata is harvestable via standard APIs.
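As a rough illustration of how the four FAIR Lite criteria could be checked before deposition, the sketch below tests a model manifest for completeness. The manifest keys, file names, and the example DOI are hypothetical and are not part of the FAIR Lite proposal itself; a real check would also validate the content of each entry, not just its presence.

```python
# The four FAIR Lite criteria, keyed by hypothetical manifest field names.
FAIR_LITE_CRITERIA = {
    "identifier": "unique identifier for citation",
    "components": "captured and curated model components",
    "variable_metadata": "metadata for variables and data",
    "platform": "storage on a searchable, interoperable platform",
}


def missing_fair_lite_criteria(manifest):
    """Return the manifest fields that are absent or empty."""
    return [key for key in FAIR_LITE_CRITERIA if not manifest.get(key)]


model_manifest = {
    "identifier": "https://doi.org/10.1234/example-qsar-model",  # placeholder
    "components": ["train_model.py", "requirements.txt", "Dockerfile"],
    "variable_metadata": {
        "LC50": {"role": "dependent", "unit": "mg/L",
                 "species": "Pimephales promelas"},
        "logP": {"role": "independent", "unit": "dimensionless"},
    },
    "platform": "",  # not yet deposited
}

for key in missing_fair_lite_criteria(model_manifest):
    print(f"Missing: {FAIR_LITE_CRITERIA[key]}")
```

Running the check as part of a release script gives a simple gate: the model package is only published once the list of missing criteria is empty.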

Table 2: The Scientist's Toolkit: Essential Research Reagent Solutions for FAIR Ecotoxicology

| Tool / Reagent Category | Specific Example | Primary Function in FAIR Context |
|---|---|---|
| Persistent Identifier Services | DataCite DOI, RRID (Research Resource ID) | Provides globally unique, persistent references for datasets, models, and antibodies, ensuring Findability and Reusability. |
| Metadata Specification Tools | ISA (Investigation-Study-Assay) framework, DataCite Metadata Schema, MIAME (Minimum Information About a Microarray Experiment) | Provides standardized templates to create rich, structured metadata, enabling Interoperability and Reusability. |
| (Meta)Data Repositories | Zenodo (general), GEO (genomics), NORMAN Digital Sample Freezing Platform (environmental chemistry), JRC QSAR Model Database | Offers FAIR-compliant storage with curation, identifiers, and access protocols, addressing Accessibility and Findability. |
| Controlled Vocabularies & Ontologies | ECOTOX Ontology, Environmental Ontology (EnvO), Chemical Entities of Biological Interest (ChEBI) | Provides shared, unambiguous language to describe experiments, organisms, and chemicals, which is the foundation of Interoperability. |
| Data Modeling & Serialization Formats | JSON-LD, RDF (Resource Description Framework), netCDF (for environmental data) | Structures data and metadata in machine-readable, linked formats, facilitating data integration and Interoperability. |
| Provenance Tracking Tools | PROV-O ontology, electronic lab notebooks (ELNs) like RSpace or LabArchives | Documents the complete history of data from generation to publication, which is a critical component for Reusability. |

Visualizing the FAIR Data Lifecycle and Workflows

Diagrams are effective for summarizing large amounts of data and illustrating complex relationships and workflows at a glance [19] [20]. The following diagrams visualize key processes in FAIR ecotoxicology.

Diagram: FAIR Data Lifecycle in Ecotoxicology Research

Diagram: Computational Toxicology Model Workflow with FAIR Lite [18]

Diagram: FAIR-Based Integrated Analysis of Emerging Contaminants

The adoption of FAIR principles represents a foundational shift toward robust, collaborative, and efficient science. In ecotoxicology, the tangible benefits are already emerging: reduced duplication of expensive and ethically charged animal testing, accelerated risk assessment of chemicals through reusable models [18], and the unlocking of novel insights via the integration of disparate datasets. While challenges in implementation remain—such as the need for cultural change, training, and sustained resources—the trajectory is clear. By embedding FAIR and FAIR Lite [18] practices into the core of research design, the ecotoxicology community can build a resilient, interconnected knowledge ecosystem. This will empower researchers and regulators to better understand and mitigate the complex impacts of chemicals on the environment, ultimately supporting more effective drug development and environmental protection.

Ecotoxicology research stands at a critical juncture. The field is tasked with assessing the risks of thousands of chemicals to environmental and human health, a challenge magnified by ethical and financial pressures to reduce vertebrate animal testing [21]. Computational models, including quantitative structure-activity relationships (QSARs) and more advanced machine learning (ML), offer a promising path forward. However, their potential is hamstrung by a fundamental data problem: most existing data, even when digitized, are not readily processable by computational agents without significant human intervention [21].

This is the challenge that machine-actionability addresses. Going beyond a purely human-centric reading of the FAIR principles (Findable, Accessible, Interoperable, and Reusable), machine-actionability ensures that data and metadata are structured and annotated so that software can automatically find, access, interpret, and use them with minimal human effort. In the context of FAIR data for ecotoxicology, machine-actionability is the logical and necessary evolution, transforming well-managed data into a utility for automated discovery and analysis [15] [18].

The stakes are high. Regulatory frameworks like the European Union's Registration, Evaluation, Authorisation and Restriction of Chemicals (REACH) require extensive safety data. The global annual use of fish and birds for chemical hazard assessment is estimated between 440,000 and 2.2 million individuals, at a cost exceeding $39 million [21]. Machine-actionable data pipelines are essential for building the next generation of in silico models that can reduce this burden. Furthermore, as seen in initiatives by the European Food Safety Authority (EFSA), applying FAIR principles to mechanistic effect models in pesticide risk assessment can lead to a more efficient review process and better model integration [15]. This guide details the technical foundations, implementation strategies, and practical applications of machine-actionability specifically for advancing ecotoxicology research and regulatory science.

Core Principles: From FAIR to Machine-Actionable

The transition from FAIR data to machine-actionable data requires operationalizing each principle for computational agents. The following table contrasts the human-oriented FAIR objective with its machine-actionable implementation.

Table: Translating FAIR Principles into Machine-Actionable Requirements

| FAIR Principle | Human-Centric Interpretation | Machine-Actionable Requirement |
|---|---|---|
| Findable | A researcher can search a repository and locate a dataset. | Unique, persistent identifiers (PIDs) like DOIs or accession numbers are embedded in metadata in a globally parsable schema (e.g., DataCite). Metadata is indexed in searchable registries with standardized APIs for programmatic querying [22]. |
| Accessible | A user can retrieve data after authentication if required. | Data and metadata are retrievable via standardized, open, and free protocols (e.g., HTTPS, FTP) using the PID. Authentication and authorization are managed through machine-to-machine protocols (e.g., OAuth) [23]. |
| Interoperable | Data is in a format that can be opened with available software. | Data uses formal, accessible, and broadly applicable knowledge representation languages (e.g., RDF, JSON-LD). It employs shared, resolvable vocabularies, ontologies (e.g., ECOTOX ontology, ChEBI), and qualified references to other data [22] [23]. |
| Reusable | Metadata provides enough information for a scientist to understand and reuse the data. | Metadata is rich, uses domain-specific community standards (e.g., MIAME, CRED), and includes clear, machine-readable licensing and provenance information detailing origin and processing steps [21] [24]. |
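To make the "Accessible" row concrete, the sketch below prepares an HTTPS request that asks a DOI resolver for machine-readable metadata instead of a landing page. It assumes the DOI is registered with DataCite and that the resolver honours content negotiation with the `application/vnd.datacite.datacite+json` media type; the DOI and the bearer token are placeholders. The request is only constructed here, not sent, so the example runs offline.

```python
from urllib.request import Request

# Media type assumed to be supported by DataCite content negotiation.
DATACITE_JSON = "application/vnd.datacite.datacite+json"


def metadata_request(doi, token=None):
    """Build (but do not send) a request for a dataset's metadata by PID."""
    headers = {"Accept": DATACITE_JSON}
    if token:
        # Machine-to-machine authorization, e.g. an OAuth 2.0 bearer token.
        headers["Authorization"] = f"Bearer {token}"
    return Request(f"https://doi.org/{doi}", headers=headers, method="GET")


req = metadata_request("10.1234/example-dataset")  # placeholder DOI
print(req.get_method(), req.full_url)
print("Accept:", req.get_header("Accept"))
```

Passing the prepared request to `urllib.request.urlopen` would perform the retrieval. The point of the pattern is that the identifier alone, plus a standard protocol, is enough for software to locate the metadata.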

A simplified "FAIR Lite" framework has been proposed for computational toxicology models, distilling the requirements to four key points: a globally unique identifier, captured/curated model components, metadata for variables, and storage in a searchable platform [18]. This pragmatic approach aligns well with achieving machine-actionability by focusing on the minimal essential elements for automated use.

The logical progression from managed data to a utility for automation is depicted below.

Diagram 1: The Data Utility Pipeline: From Raw Data to Automated Discovery. This workflow illustrates the transformation of data into an automated utility through stages of curation, FAIR implementation, and machine-actionable standardization.

Technical Implementation: Architecting Machine-Actionable Systems

Implementing machine-actionability requires a cohesive technical architecture built on standardized metadata, persistent identifiers, and interoperable knowledge structures.

Foundational Components

- Persistent Identifiers (PIDs): Every digital object (dataset, model, workflow) must have a unique, persistent identifier like a DOI, accession number, or a Life Science Identifier (LSID). This allows for reliable, permanent referencing [22] [18].

- Structured Metadata Schemas: Metadata must conform to community-agreed, machine-parsable schemas. For ecotoxicology, this involves extending general schemas (e.g., DataCite, ISO 19115) with domain-specific fields for test organisms (species, life stage), experimental conditions (duration, endpoint like LC50), and chemical identifiers (CAS, InChIKey, SMILES) [21] [24].

- Controlled Vocabularies and Ontologies: Interoperability is achieved by using resolvable, standard terms. Key resources include:

  - Chemical Identifiers: IUPAC International Chemical Identifier (InChI), Simplified Molecular Input Line Entry System (SMILES), DSSTox Substance ID (DTXSID) [21].

  - Taxonomic Ontologies: NCBI Taxonomy, World Register of Marine Species (WoRMS).

  - Ecotoxicology Parameters: Ontologies defining endpoints (e.g., "LC50"), effects (e.g., "mortality"), and test guidelines (e.g., "OECD Test Guideline 203").
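Identifiers only support automation if they are well-formed. As a small illustration, the following sketch performs syntactic checks on two of the chemical identifiers mentioned above: the standard InChIKey layout (14, 10, and 1 uppercase letters separated by hyphens) and the CAS Registry Number check digit. These checks catch typos and truncation; they do not confirm that an identifier actually resolves to a substance, which would require a lookup service.

```python
import re

INCHIKEY_RE = re.compile(r"^[A-Z]{14}-[A-Z]{10}-[A-Z]$")
CAS_RE = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")


def is_wellformed_inchikey(value):
    """Check the 14-10-1 uppercase-letter layout of a standard InChIKey."""
    return bool(INCHIKEY_RE.match(value))


def is_valid_cas(value):
    """Check CAS format and check digit.

    The check digit equals the weighted sum of the preceding digits
    (weights 1, 2, 3, ... counted from the right) modulo 10.
    """
    match = CAS_RE.match(value)
    if not match:
        return False
    digits = match.group(1) + match.group(2)
    total = sum(int(d) * w for w, d in enumerate(reversed(digits), start=1))
    return total % 10 == int(match.group(3))


print(is_valid_cas("7732-18-5"))                              # water
print(is_wellformed_inchikey("XLYOFNOQVPJJNP-UHFFFAOYSA-N"))  # water
```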

The Role of Knowledge Graphs and Computational Workflows

A knowledge graph is a powerful tool for achieving machine-actionability. It represents entities (chemicals, species, tests) and their relationships as a network, enabling sophisticated, context-aware queries. As implemented by organizations like AstraZeneca, a knowledge graph built on semantic web standards (RDF, OWL, SPARQL) integrates fragmented data silos, allowing researchers to ask complex questions across integrated data in minutes rather than weeks [23].

Computational workflows are another critical component. They are formal specifications of multi-step data analysis pipelines, crucial for reproducibility and scalability [22]. A FAIR and machine-actionable workflow should itself be findable (with a PID), accessible, interoperable (using standard languages like Common Workflow Language or Nextflow DSL), and reusable (with detailed, machine-readable provenance) [22]. Workflows automate the use of machine-actionable data, creating a virtuous cycle where data fuels automated analyses whose outputs are, in turn, new FAIR data.
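The idea behind a knowledge-graph query can be shown without any semantic web tooling. The sketch below is a deliberately simplified stand-in for an RDF triplestore and a SPARQL query: facts are stored as (subject, predicate, object) tuples and matched by pattern. All `ex:` names are hypothetical, and a production system would use resolvable IRIs and a real triplestore instead.

```python
# Toy triples; "ex:" terms are illustrative, not from a published ontology.
TRIPLES = [
    ("ex:test1", "ex:hasChemical", "ex:chemA"),
    ("ex:test1", "ex:hasSpecies", "taxon:7955"),
    ("ex:test1", "ex:hasEndpoint", "ex:LC50"),
    ("ex:test2", "ex:hasChemical", "ex:chemA"),
    ("ex:test2", "ex:hasSpecies", "ex:speciesB"),
    ("ex:test2", "ex:hasEndpoint", "ex:EC50"),
    ("ex:test3", "ex:hasChemical", "ex:chemB"),
    ("ex:test3", "ex:hasSpecies", "taxon:7955"),
    ("ex:test3", "ex:hasEndpoint", "ex:LC50"),
]


def match(triples, s=None, p=None, o=None):
    """Return triples matching a pattern; None acts as a wildcard."""
    return [t for t in triples
            if (s is None or t[0] == s)
            and (p is None or t[1] == p)
            and (o is None or t[2] == o)]


def species_tested(triples, chemical, endpoint):
    """Species for which the chemical has a result with the given endpoint."""
    by_chem = {t[0] for t in match(triples, p="ex:hasChemical", o=chemical)}
    by_endpoint = {t[0] for t in match(triples, p="ex:hasEndpoint", o=endpoint)}
    tests = by_chem & by_endpoint
    return sorted(t[2] for t in match(triples, p="ex:hasSpecies")
                  if t[0] in tests)


print(species_tested(TRIPLES, "ex:chemA", "ex:LC50"))
```

The query joins three relationship types across records, which is the kind of question that is awkward to ask of disconnected spreadsheets but natural once the data share identifiers.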

Table: Key Components of a Machine-Actionable Data System Architecture

| Component | Function | Examples & Standards |
|---|---|---|
| PID System | Provides permanent, unique references to digital objects. | DOI, Handle, ARK, LSID. |
| Metadata Repository | Stores and indexes structured metadata for discovery. | DataCite API, EDI Metadata Repository, custom Elasticsearch indices. |
| Knowledge Graph Engine | Stores semantic triples and enables complex graph queries. | Blazegraph, GraphDB, Neptune, powered by RDF/OWL. |
| Vocabulary Service | Hosts and resolves controlled terms and ontologies. | BioPortal, OLS, Identifiers.org. |
| Workflow Management System | Executes and records computational pipelines. | Nextflow, Snakemake, Galaxy, Common Workflow Language [22]. |

The interaction of these components within an operational architecture is shown below.

Diagram 2: Technical Architecture for Machine-Actionable Ecotoxicology Data. This system diagram shows how components like a knowledge graph, APIs, and vocabulary services interact to enable automated data discovery and integration.

Practical Application: Creating and Using Machine-Actionable Data in Ecotoxicology

Case Study: The ADORE Benchmark Dataset

ADORE (A Dataset for Ontology-based Research in Ecotoxicology) is a prime example of moving towards machine-actionability [21]. Its creation involved:

- Sourcing Core Data: Extracting acute aquatic toxicity data for fish, crustaceans, and algae from the US EPA's ECOTOX database.

- Data Curation & Expansion: Filtering for relevant endpoints (LC50, EC50), standardizing experimental durations, and enriching records with chemical features (molecular descriptors from SMILES) and phylogenetic data.

- Structuring for Reuse: Providing the data with clear, documented splits for training and testing machine learning models to prevent data leakage and enable fair benchmarking [21].
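The leakage concern behind pre-defined splits can be illustrated with a simple group-wise split: every record for a given chemical is sent to the same partition, so a model is never tested on a substance it saw during training. This is a generic hash-based sketch, not the splitting procedure used for ADORE; the field name `inchikey`, the placeholder chemical keys, and the 20 percent test share are assumptions.

```python
import hashlib


def assign_split(chemical_id, test_percent=20):
    """Deterministically map a chemical identifier to 'train' or 'test'."""
    digest = hashlib.sha256(chemical_id.encode("utf-8")).hexdigest()
    return "test" if int(digest, 16) % 100 < test_percent else "train"


def split_records(records, key="inchikey", test_percent=20):
    """Partition records so each chemical appears in exactly one split."""
    splits = {"train": [], "test": []}
    for record in records:
        splits[assign_split(record[key], test_percent)].append(record)
    return splits


# Illustrative records: 30 placeholder chemicals, two species each.
records = [
    {"inchikey": f"CHEM-{i:03d}", "species": species, "lc50_mg_per_l": 1.0 + i}
    for i in range(30)
    for species in ("Danio rerio", "Daphnia magna")
]
splits = split_records(records)
print(len(splits["train"]), len(splits["test"]))
```

Because the assignment depends only on the identifier, the split can be regenerated by anyone from the published record list, which supports the reproducible benchmarking described above.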

To be fully machine-actionable, a dataset like ADORE would benefit from:

- A detailed data dictionary in a machine-readable format (e.g., JSON Schema).

- Provenance metadata tracing each record back to its source in ECOTOX.

- Explicit licensing in a machine-readable form (e.g., SPDX).

- Hosting with a standardized API for programmatic subsetting and retrieval.
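A machine-readable data dictionary with per-record validation might look like the sketch below. It is a simplified illustration using only the standard library; the field names, the allowed endpoint values, and the `CC-BY-4.0` SPDX identifier are assumptions for the example and do not describe ADORE's actual schema or license.

```python
import json

DATA_DICTIONARY = {
    "license": "CC-BY-4.0",  # SPDX license identifier (assumed for the example)
    "fields": {
        "result_id": {"type": "integer",
                      "description": "Identifier of the source record"},
        "endpoint": {"type": "string", "allowed": ["LC50", "EC50"]},
        "duration_h": {"type": "number", "unit": "h"},
        "conc_mg_per_l": {"type": "number", "unit": "mg/L"},
    },
}

PYTHON_TYPES = {"integer": int, "string": str, "number": (int, float)}


def validate_record(record, dictionary=DATA_DICTIONARY):
    """Return a list of human-readable problems; an empty list means valid."""
    errors = []
    for name, spec in dictionary["fields"].items():
        if name not in record:
            errors.append(f"{name}: missing")
        elif not isinstance(record[name], PYTHON_TYPES[spec["type"]]):
            errors.append(f"{name}: expected {spec['type']}")
        elif "allowed" in spec and record[name] not in spec["allowed"]:
            errors.append(f"{name}: value not in controlled list")
    return errors


good = {"result_id": 101, "endpoint": "LC50",
        "duration_h": 96, "conc_mg_per_l": 2.5}
bad = {"result_id": "101", "endpoint": "NOEC", "duration_h": 96}

print(validate_record(good))
print(validate_record(bad))
print(json.dumps(DATA_DICTIONARY["fields"]["endpoint"]))
```

Because the dictionary is plain JSON-serializable data, it can be published alongside the dataset and reused by any consumer, whatever language they validate in.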

The protocol for generating such a benchmark resource is outlined below.

Diagram 3: Protocol for Creating a Machine-Actionable Benchmark Dataset. This workflow details the steps from raw data sourcing to the publication of a reusable, well-documented benchmark resource for model development.

Table: Characteristics of the ADORE Benchmark Dataset for Machine Learning [21]

| Feature | Description | Machine-Actionability Consideration |
|---|---|---|
| Core Data | 41,477 acute toxicity records for fish, crustaceans, algae. | Each record should link to a stable source identifier (e.g., ECOTOX result_id). |
| Chemical Information | CAS, DTXSID, InChIKey, SMILES for ~1,900 unique substances. | Use of standard, resolvable identifiers enables linking to external compound databases. |
| Taxonomic Information | Phylogenetic hierarchy for test species. | Use of standard taxonomic identifiers (e.g., NCBI TaxID) would enhance interoperability. |
| Experimental Parameters | Endpoint (LC50/EC50), duration, concentration units. | Values should be paired with ontology terms (e.g., OBA:LC50, UO:milligram_per_liter). |
| Pre-defined Splits | Training/test splits based on chemical scaffold & taxonomy. | Splits should be published as separate, clearly identified lists of record PIDs. |

To work effectively with machine-actionable data, researchers require a set of tools and resources.

Table: Research Reagent Solutions for Machine-Actionable Ecotoxicology

| Tool/Resource | Category | Function in Machine-Actionable Research |
|---|---|---|
| ECOTOX Knowledgebase | Data Source | Primary source of curated ecotoxicity data; provides a structured download format that can be the starting point for creating FAIR datasets [21]. |
| CompTox Chemicals Dashboard | Chemical Identifier Resolver | Provides access to DSSTox IDs (DTXSID), a stable identifier system for chemicals, and links to associated properties and toxicity data. |
| BioPortal / OLS | Ontology Service | Platforms to find, browse, and resolve ontology terms (e.g., for species, endpoints, units) essential for annotating metadata [23]. |
| Nextflow / Snakemake | Workflow Management System | Enables the creation of reproducible, scalable computational workflows that can automatically process machine-actionable data [22]. |
| RDF Triplestore (e.g., GraphDB) | Knowledge Graph Platform | Software to store and query data as a semantic knowledge graph, enabling complex, linked data queries. |
| JSON-LD / Schema.org | Metadata Standard | Lightweight formats for embedding structured, linked data metadata into web resources and datasets. |

Challenges and Future Directions

Despite clear benefits, significant challenges hinder widespread adoption of machine-actionability in ecotoxicology.

- Legacy Data and Heterogeneity: Vast amounts of historical data are trapped in PDFs, spreadsheets, or proprietary formats with inconsistent terminology, making retrospective curation costly. Initiatives like the Minimum Information Requirements for Human Biomonitoring (MIR-HBM) seek to harmonize future data collection [24], but legacy data remains a hurdle.

- Data Gaps and Accessibility: Critical data, such as geographically resolved plant protection product usage in the EU, are often not collected or made accessible in a usable format, impairing exposure assessment and model development [25].

- Cultural and Technical Skill Gaps: Shifting research culture to value data stewardship as highly as publication requires training and incentive structures. Technical expertise in semantic technologies and data engineering is not yet widespread in the domain.

Future progress depends on:

- Community-Agreed Standards: Wider adoption of minimal information checklists and metadata schemas specific to ecotoxicology studies and models [15] [24].

- Tool Development and Integration: Creating user-friendly tools that lower the barrier for scientists to annotate data with ontologies and publish FAIR, machine-actionable datasets [23].

- Incentive Structures: Funders and journals mandating the deposition of both data and computational models (e.g., QSARs) in machine-actionable formats as a condition of grant funding or publication [18].

Machine-actionability is the key that unlocks the full potential of the FAIR principles for ecotoxicology. It transforms data from a static record into a dynamic, interoperable resource that can power automated meta-analyses, feed next-generation predictive models, and accelerate evidence-based environmental risk assessment. The technical path is clear, involving persistent identifiers, semantic knowledge graphs, standardized metadata, and executable workflows. While challenges of legacy data, culture, and skills persist, the imperative to make more efficient use of existing data and reduce animal testing provides strong motivation. By implementing machine-actionable data systems, the ecotoxicology community can enhance the reproducibility, transparency, and predictive power of its research, ultimately leading to more robust and timely protection of environmental and human health.

In the data-intensive field of ecotoxicology, the terms "FAIR data" and "open data" are often conflated, yet they represent distinct—and sometimes orthogonal—paradigms for research data management. This whitepaper clarifies the core differences between the two frameworks, framing the discussion within the urgent need for advanced data stewardship in environmental health science. While open data prioritizes unrestricted public access to foster transparency and collaboration, FAIR (Findable, Accessible, Interoperable, Reusable) principles provide a technical blueprint to ensure data are machine-actionable and reliably reusable, even when access must be restricted. We argue that for ecotoxicology to effectively address complex challenges like chemical mixture toxicity and cross-species extrapolation, a nuanced strategy that strategically integrates both FAIR and open approaches is essential. The paper provides quantitative comparisons, detailed implementation protocols, and a toolkit of essential resources to guide researchers, scientists, and drug development professionals in building a robust, future-proof data ecosystem.

Ecotoxicology research generates vast, complex datasets critical for chemical risk assessment, regulatory decision-making, and protecting ecosystem health. However, the field faces a "data scarcity problem," not due to a lack of studies, but because existing data are often siloed, poorly described, and impossible to integrate or reuse[reference:0]. This limits the ability to conduct powerful meta-analyses and apply advanced computational methods like machine learning.

In response, two major movements have emerged: the Open Science/Open Data movement, advocating for free and unrestricted access to research outputs, and the FAIR data principles, a technical framework designed to optimize data for both human and machine use[reference:1]. These concepts are complementary but not synonymous. Confusing them can lead to poorly implemented data management that fails to achieve either true openness or functional reusability.

This paper, situated within a broader thesis on applying FAIR principles to ecotoxicology, delineates the fundamental distinctions between FAIR and open data. It provides actionable guidance for researchers to navigate this landscape, ensuring their data management practices not only comply with growing funder mandates but genuinely accelerate scientific discovery.

Core Concepts Defined

FAIR Data: A Framework for Machine-Actionable Reuse

FAIR is an acronym for four guiding principles:

- Findable: Data and metadata are assigned persistent, unique identifiers (e.g., DOIs) and are indexed in searchable resources.

- Accessible: Data are retrievable using standardized, open protocols. Metadata remain accessible even if the data itself is under restricted access.

- Interoperable: Data use formal, shared, and broadly applicable vocabularies and formats to enable integration with other datasets.

- Reusable: Data are richly described with provenance, clear usage licenses, and domain-relevant community standards[reference:2].

Crucially, FAIR does not mandate that data be "open." The "A" stands for "Accessible under well-defined conditions," which can include authentication for privacy, security, or intellectual property reasons[reference:3].

Open Data: A Philosophy of Unrestricted Access

Open data is defined by its licensing and availability. Its key tenets are that data must be:

- Freely available to anyone, without cost beyond reproduction.

- Free to reuse and redistribute, with minimal restrictions (often under licenses like Creative Commons).

- Promotive of transparency in collection and processing[reference:4].

While open data can be FAIR, openness alone does not guarantee findability, interoperability, or reusability. A dataset can be openly posted online yet be in a proprietary format, lacking essential metadata, and thus be virtually useless for automated reuse.

Quantitative Comparison: Objectives, Adoption, and Impact

The following tables synthesize key differences and current adoption metrics.

Table 1: Conceptual & Operational Comparison

| Aspect | FAIR Data | Open Data |
|---|---|---|
| Primary Goal | Ensure data are machine-readable and reusable for both humans and computational systems. | Promote unrestricted sharing, transparency, and democratization of access. |
| Access Requirement | Can be open, restricted, or embargoed based on ethical, legal, or commercial constraints. | Must be freely accessible to all, by definition. |
| Focus on Metadata | Rich, structured metadata is a strict requirement for findability and reusability. | Metadata may be present but is not a formal requirement. |
| Interoperability | Emphasizes standardized vocabularies and formats (e.g., RDF, JSON-LD) as a core principle. | Does not inherently require standardization, though it is beneficial. |
| Typical Licensing | Varies; can range from open licenses to bespoke data use agreements. | Typically uses standard open licenses (e.g., CC0, CC-BY). |
| Ideal Application | Structured data integration in R&D, reproducible computational workflows, sensitive data. | Democratizing access to large public datasets, fostering public trust, accelerating collaborative research. |

Source: Synthesis from comparative literature[reference:5].

Table 2: Adoption Metrics and Trends (2024-2025)

| Metric | FAIR Data | Open Data |
|---|---|---|
| Awareness Among Funders | 73% of international research software funders are "extremely familiar" with FAIR principles[reference:6]. | N/A (broader cultural movement) |
| Global Sharing Rate | N/A (varies by discipline and policy) | Average ~25% repository sharing rate in the US, UK, Germany, and France; significantly lower in many Global South nations[reference:7]. |
| Annual Output Volume | N/A (integrated into various outputs) | ~2 million datasets published openly each year, comparable to global article output in the year 2000[reference:8]. |
| Key Driver for Researchers | Funder and publisher mandates, need for reproducibility and meta-analysis. | Funder requirements (primary in the US) and the desire for data citation (primary in Japan, Ethiopia)[reference:9]. |
| Major Challenge | Gap between policy and practice; complexity of creating rich metadata and using standards[reference:10]. | Resource disparities, lack of institutional support, and discipline-specific community practices[reference:11]. |

Sources: Scientific Data (2025)[reference:12], State of Open Data 2024 report[reference:13][reference:14].

Experimental Protocols for Implementation

Protocol 1: Implementing the ATTAC Workflow for Wildlife Ecotoxicology Data

The ATTAC (Access, Transparency, Transferability, Add-ons, Conservation sensitivity) workflow is a discipline-specific protocol that operationalizes FAIR and open principles for integrating scattered wildlife ecotoxicology data[reference:15].

Objective: To homogenize and integrate heterogeneous data from primary studies for subsequent meta-analysis.

Materials: Literature databases (e.g., Web of Science, PubMed), data extraction sheets, controlled vocabularies (e.g., ECOTOX ontology), statistical software (e.g., R, Python).

Procedure:

- Access: Systematically search and identify all relevant primary studies using predefined search strings. Record the provenance of each data point.

- Transparency: Fully document all steps of data collection, inclusion/exclusion criteria, and any data transformations applied. Publish this protocol as a methodological supplement.

- Transferability: Extract data into a standardized template. Convert all units and measurements to a common system. Map all reported species, chemicals, and endpoints to persistent identifiers or controlled terms.

- Add-ons: Enhance the dataset by linking to additional resources (e.g., species trait databases, chemical property databases) where possible.

- Conservation sensitivity: Apply appropriate statistical methods that account for data structure (e.g., phylogenetic relatedness of species, study weighting) and ensure the integrated data supports conservation-relevant conclusions[reference:16].

Deliverable: A fully curated, documented, and publicly archived dataset ready for meta-analysis.
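The Transferability step of the procedure above (common units, mapped identifiers, preserved provenance) can be sketched as a small harmonization function. The conversion table covers only mass-per-volume units; molar concentrations would additionally need a molecular weight and are deliberately left out. The record layout and the one-entry species lookup are assumptions for the example.

```python
# Conversion factors to mg/L for mass-per-volume units only.
TO_MG_PER_L = {"g/L": 1e3, "mg/L": 1.0, "ug/L": 1e-3, "ng/L": 1e-6}

# Minimal species-to-identifier lookup (NCBI Taxonomy ID); illustrative only.
TAXON_IDS = {"Danio rerio": 7955}


def harmonize(record):
    """Convert one extracted record to the common template.

    Unknown units raise an error instead of being guessed; unknown species
    are kept with taxon_id=None so they can be curated manually.
    """
    unit = record["unit"]
    if unit not in TO_MG_PER_L:
        raise ValueError(f"no conversion defined for unit {unit!r}")
    return {
        "source": record["source"],  # provenance of the data point
        "species": record["species"],
        "taxon_id": TAXON_IDS.get(record["species"]),
        "conc_mg_per_l": record["value"] * TO_MG_PER_L[unit],
    }


row = harmonize({"source": "study-A, table 2", "species": "Danio rerio",
                 "value": 250, "unit": "ug/L"})
print(row)
```

Failing loudly on unmapped units, rather than passing values through unchanged, keeps silent unit errors out of the integrated dataset.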

Protocol 2: Assessing the FAIRness of an Ecotoxicology Dataset

Objective: To evaluate and score the degree to which a given dataset adheres to the FAIR principles.

Materials: Dataset and its associated metadata; a FAIR assessment tool (e.g., FAIR Evaluator, F-UJI, or community-specific checklists); a computational environment if using automated tools.

Procedure:

- Tool Selection: Choose an assessment tool appropriate for the data type. Semi-automated tools (e.g., F-UJI) are practical for evaluating entire databases, while self-assessment surveys are suitable for a quick scan[reference:17].

- Metadata Inspection: Manually or automatically check for the presence of: a persistent identifier (Findable), a standardized access protocol (Accessible), the use of community standards and vocabularies (Interoperable), and detailed provenance and licensing information (Reusable).

- Machine-Actionability Test: Verify that metadata is structured in a machine-readable format (e.g., XML, JSON-LD) and not just present in a PDF document.

- Scoring and Reporting: Generate a report detailing compliance with each FAIR sub-principle. Note that different tools may produce varying results due to different interpretations of the principles[reference:18].

- Improvement Plan: Based on the report, create an action plan to enhance FAIRness, such as depositing in a certified repository, adding missing metadata using a standard like ISA-Tab, or applying a clear usage license.

Deliverable: A FAIRness assessment report with a quantitative score and qualitative recommendations for improvement.
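The metadata inspection, machine-actionability test, and scoring steps can be sketched as a simple self-assessment script. This is an illustration only: it does not reproduce the metrics or interface of F-UJI or the FAIR Evaluator, and the field names (`identifier`, `access_url`, `vocabularies`, `license`, `provenance`) are assumptions chosen for the example.

```python
import json

# One illustrative check per FAIR pillar; field names are hypothetical.
CHECKS = {
    "findable": lambda m: bool(m.get("identifier")),
    "accessible": lambda m: str(m.get("access_url", "")).startswith(
        ("http://", "https://")
    ),
    "interoperable": lambda m: bool(m.get("vocabularies")),
    "reusable": lambda m: bool(m.get("license")) and bool(m.get("provenance")),
}


def assess(metadata_text):
    """Score a metadata document supplied as text.

    The text must parse as a JSON object to count as machine-readable;
    otherwise no pillar can be checked and the score is 0.
    """
    try:
        metadata = json.loads(metadata_text)
    except json.JSONDecodeError:
        metadata = None
    if not isinstance(metadata, dict):
        return {"machine_readable": False, "passed": {}, "score": 0.0}
    passed = {name: check(metadata) for name, check in CHECKS.items()}
    return {
        "machine_readable": True,
        "passed": passed,
        "score": sum(passed.values()) / len(passed),
    }
```

The per-pillar `passed` dictionary gives the qualitative gap list for the improvement plan, while `score` is the fraction of checks passed. Real assessment tools evaluate many sub-principles per pillar, so a score from this sketch is not comparable to theirs.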

Visualizing Workflows and Relationships

Diagram 1: The FAIR Data Principles Cycle

This diagram illustrates the iterative, interconnected nature of the FAIR principles, where each pillar supports the others to enable reusable data ecosystems.

Diagram 2: The ATTAC Workflow for Data Integration

This diagram outlines the five-step ATTAC workflow, a specific implementation for making wildlife ecotoxicology data both FAIR and open for meta-analysis.

This table lists key tools, standards, and platforms essential for implementing FAIR data practices in ecotoxicology research.

| Category | Tool/Resource | Function in FAIR Ecotoxicology |
|---|---|---|
| Repositories & Identifiers | Zenodo / Figshare | General-purpose repositories that mint DOIs, providing persistent identifiers and long-term archiving (Findable, Accessible). |
| | DataCite | Provides the infrastructure for creating and managing DOIs, connecting data to citations. |
| Metadata Standards | ISA-Tab | A framework for capturing metadata from multi-omics and other biomedical investigations, adaptable for ecotoxicology assays [reference:19]. |
| | Ecological Metadata Language (EML) | A widely used standard for describing ecological and environmental data. |
| Vocabularies & Ontologies | ECOTOXicology Knowledgebase | A curated database providing standard toxicity endpoints and controlled terms for data harmonization [reference:20]. |
| | Environment Ontology (ENVO) / Chemical Entities of Biological Interest (ChEBI) | Ontologies for standardizing descriptions of environments and chemical entities. |
| Software & Packages | ecotoxr R Package | Facilitates reproducible and transparent retrieval of data from the EPA ECOTOX database, promoting interoperability and reuse [reference:21]. |
| | FAIR assessment tools (e.g., F-UJI) | Automated services to evaluate the FAIRness of a dataset against community-agreed metrics. |
| Reporting Guidelines | FAIRsharing.org | A registry to discover and select appropriate standards, databases, and policies for your data type [reference:22]. |
| | Minimum Information Checklists (e.g., MIAME/Tox) | Discipline-specific reporting standards to ensure data are sufficiently described for reuse [reference:23]. |

The distinction between FAIR and open data is not merely semantic but foundational to effective data stewardship. For ecotoxicology, where data sensitivity (e.g., proprietary chemical data) and complexity are high, a blanket "open everything" approach is neither feasible nor optimal. Conversely, data that is merely "available" but not FAIR fails to unlock its full potential for computational reuse and integration.

The path forward lies in a strategic integration of both paradigms. Researchers should aim to make all data as FAIR as possible, applying rich metadata and standards from the point of creation. Data should then be made as open as possible, shared via repositories under appropriate licenses while respecting necessary restrictions. Frameworks like the ATTAC workflow demonstrate how this integration can be achieved in practice.

Embracing this nuanced approach will transform ecotoxicology from a field hampered by scattered data into one powered by a reusable, interconnected knowledge base. This is essential for tackling grand challenges, from assessing the risks of emerging contaminants to protecting biodiversity in a changing world.

This whitepaper is part of a thesis on "Implementing FAIR Data Principles to Overcome Data Fragmentation in Ecotoxicology." All cited sources were accessed in December 2024. The tools and protocols described are intended as a starting point for researchers and institutions developing their data management strategies.

A Practical Roadmap: Implementing FAIR Data Principles in Ecotoxicology Workflows

Ecotoxicology research generates critical data for understanding the impacts of chemicals, nanomaterials, and other stressors on ecosystems and human health. However, the full potential of this data is often unrealized due to inconsistencies in formatting, incomplete metadata, and a lack of standardization, which hinder data discovery, integration, and reuse [26]. The FAIR (Findable, Accessible, Interoperable, and Reusable) Guiding Principles provide a framework to address these challenges by making data machine-actionable and widely reusable [1] [2]. For ecotoxicology, FAIRification is not merely a data management exercise but a foundational step toward advancing New Approach Methodologies (NAMs), enabling predictive computational toxicology, and supporting 21st-century, evidence-based environmental risk assessment [27] [28].

This guide presents a practical, three-phase framework for the FAIRification of ecotoxicology data. It is grounded in the broader thesis that systematically applied FAIR principles are essential for building robust, interconnected knowledge systems—such as Adverse Outcome Pathway (AOP) networks and integrated testing strategies—that can accelerate the safety assessment of chemicals and reduce reliance on animal testing [27] [14]. By translating FAIR from theory into actionable steps, this framework aims to empower researchers, data stewards, and risk assessors to enhance the quality, utility, and longevity of their scientific data.

The FAIRification Framework: A Three-Phase Approach

The FAIRification of legacy or newly generated ecotoxicology data is a structured process that requires planning, execution, and integration. The following three-phase framework breaks down this process into manageable steps, providing clear checkpoints and deliverables.

Phase 1: Pre-FAIRification Assessment and Planning

This initial phase focuses on evaluating the current state of the data and designing a tailored FAIRification plan. It ensures that resources are allocated efficiently and that the process aligns with both scientific and data stewardship goals.

Key Steps and Methodologies:

- Data Inventory and Curation: Conduct a comprehensive audit of all datasets, including raw data, processed results, and associated metadata (e.g., protocols, instrument parameters). Identify missing information, correct obvious errors, and document known issues or limitations in a "README" file [26] [8].

- FAIR Maturity Assessment: Evaluate the dataset against each FAIR pillar. A simple checklist can be used:

  - Findable: Do datasets have persistent identifiers? Are they described with rich metadata?

  - Accessible: Is data stored in a trusted repository with a clear access protocol?

  - Interoperable: Are community-standard vocabularies and formats used?

  - Reusable: Is provenance (experimental methods, processing steps) thoroughly documented with clear licensing? [1] [2]

- Semantic Mapping Design: Identify the core data entities (e.g., "chemical," "assay," "organism," "endpoint") and their relationships. Map these to existing ontologies and controlled vocabularies (e.g., ChEBI for chemicals, OBI for assays, ENVO for environmental media) to ensure semantic interoperability [29] [8]. This step is critical for linking ecotoxicology data to broader AOP frameworks [27].

Table 1: Phase 1 - Assessment Steps and Deliverables

| Step | Primary Action | Key Deliverable | Checkpoint Question |
|---|---|---|---|
| 1. Inventory | Catalog all files and metadata. | A detailed inventory spreadsheet. | Is the scope of the FAIRification project clearly defined? |
| 2. Curation | Clean data and document issues. | Curated data files and a provenance "README". | Are the data and metadata accurate and complete enough to proceed? |
| 3. Maturity Assessment | Score data against FAIR criteria. | A FAIR maturity scorecard with gap analysis. | What are the biggest barriers to FAIRness for this dataset? |
| 4. Semantic Design | Map data concepts to ontologies. | A semantic mapping diagram or schema. | Are the key concepts linkable to community-accepted terms? |

Phase 2: Core FAIRification Execution

This phase involves the technical implementation of the plan developed in Phase 1. The focus is on transforming data into standardized, annotated, and machine-readable formats.

Key Steps and Methodologies:

- Metadata Enhancement: Using templates or guided forms, populate metadata fields that make data findable and reusable. This includes administrative metadata (creator, license), descriptive metadata (abstract, keywords), and most importantly, rich structural and methodological metadata detailing the experimental design [8] [13]. For ecotoxicology, this must capture information on the test substance (e.g., nanomaterial characterization [29]), test organism (species, life stage), exposure regimen, and measured endpoints.

- Data Structuring and Formatting: Convert data from unstructured formats (e.g., free-text notes, idiosyncratic spreadsheets) into structured formats. A highly effective method is to use community-developed reporting formats that specify required and optional fields for common data types [8]. For spreadsheet data, tools like the NMDataParser can be configured to automatically map custom layouts to a standard data model, such as the eNanoMapper ontology used in nanosafety [29].

- Identifier Assignment and Linking: Assign Persistent Identifiers (PIDs), such as Digital Object Identifiers (DOIs), to the finalized dataset. Within the dataset, use resolvable identifiers for key entities (e.g., a chemical's InChIKey or a protocol's DOI) instead of plain text names. This creates a web of linked data, enhancing both findability and interoperability [13] [2].
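The metadata and identifier steps above can be sketched as a small script that assembles a machine-readable dataset description. It is a minimal example, assuming schema.org terms serialized as JSON-LD. The choice of the `about` property with `PropertyValue` entries is one possible modelling, not a community requirement, and the function names are hypothetical. The only check performed on the InChIKey is its fixed layout (14, 10, and 1 uppercase letters separated by hyphens), not whether the key resolves to a real substance.

```python
import json
import re

# InChIKey layout: 14 letters, 10 letters, 1 letter, hyphen-separated.
INCHIKEY_PATTERN = re.compile(r"^[A-Z]{14}-[A-Z]{10}-[A-Z]$")


def build_dataset_record(title, doi, license_url, inchikey, taxon_id):
    """Build a JSON-LD style description of a dataset.

    Key entities are referenced by identifiers rather than plain-text
    names so that other systems can link to them.
    """
    if not INCHIKEY_PATTERN.match(inchikey):
        raise ValueError(f"Not a syntactically valid InChIKey: {inchikey!r}")
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": title,
        "identifier": f"https://doi.org/{doi}",  # resolvable form of the DOI
        "license": license_url,
        "about": [
            {"@type": "PropertyValue", "propertyID": "InChIKey", "value": inchikey},
            {"@type": "PropertyValue", "propertyID": "NCBITaxon", "value": taxon_id},
        ],
    }


def to_jsonld(record):
    """Serialize the record as indented JSON text for deposition."""
    return json.dumps(record, indent=2, sort_keys=True)
```

A call such as `build_dataset_record("Acute toxicity assay", "10.1234/placeholder", "https://creativecommons.org/licenses/by/4.0/", "AAAAAAAAAAAAAA-BBBBBBBBBB-C", "0000")` uses placeholder values throughout; real DOIs, InChIKeys, and taxon identifiers would come from the repository and the curated dataset.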

Table 2: Phase 2 - Structured Templates for Key Ecotoxicology Data Types

| Data Type | Core Metadata Requirements (Examples) | Suggested Reporting Format / Standard | Linked AOP Element [27] |
|---|---|---|---|
| Chemical/Nanomaterial Characterization | Substance name, CAS RN/InChIKey, core size, surface coating, purity, supplier. | ISA-Tab-Nano, eNanoMapper data model [29]. | Molecular Initiating Event (MIE) |
| Ecotoxicological Assay Data | Test guideline (e.g., OECD), species/strain, exposure duration/concentration, endpoint (e.g., LC50, growth inhibition), statistical results. | OECD Harmonised Templates (OHTs), ISA-Tab extensions [29] [8]. | Key Event (KE) |
| Omics Data (Transcriptomics, Metabolomics) | Platform (e.g., RNA-Seq), sample preparation protocol, raw/processed data file locations, differential expression lists. | MINSEQE, ESS-DIVE reporting formats for biological data [8]. | Key Event Relationship (KER) |

Phase 3: Post-FAIRification Publication and Integration

The final phase ensures that the FAIRified data is published, validated, and connected to broader knowledge systems to maximize its impact and reuse.

Key Steps and Methodologies:

- Repository Deposition and Publication: Deposit the FAIRified dataset, along with its enhanced metadata, into a disciplinary or general-purpose repository that assigns PIDs and provides long-term preservation. Suitable options include disciplinary repositories such as the Environmental System Science Data Infrastructure for a Virtual Ecosystem (ESS-DIVE) and the eNanoMapper database, or generalist repositories such as Zenodo [29] [8]. The metadata should be published under an open license (e.g., CC-BY) to permit reuse.

- Validation and Quality Assurance: Use both automated and expert-driven checks. Automated validators can check for format compliance and required metadata fields. Expert review, potentially through community peer-review platforms, should assess the scientific soundness, clarity of descriptions, and appropriateness of the semantic annotations [13].

- Integration with Knowledge Systems: Actively link the published dataset to relevant external resources. This linkage is what allows the data to contribute to interconnected knowledge systems such as AOP networks. For example, assay data documenting a specific toxic effect can be linked to a Key Event in an Adverse Outcome Pathway within the AOP-Wiki [27] [28]. Data from human biomonitoring or environmental monitoring studies can be registered in platforms like the FAIR Environmental and Health Registry (FAIREHR) to enhance findability and support the science-policy interface [14] [13].
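The automated part of the validation step can be sketched as a format check on a tabular data file. This is a minimal example, not the validator of any particular repository: the required column names are assumptions made for illustration, and an actual repository would publish its own reporting format.

```python
import csv
import io

# Hypothetical required columns for an assay results table.
REQUIRED_COLUMNS = {"species", "chemical_id", "endpoint", "value", "unit"}


def validate_csv(text):
    """Return a list of problems found in CSV text; empty means it passed."""
    reader = csv.DictReader(io.StringIO(text))
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        # Without the expected header, row-level checks are meaningless.
        return [f"missing columns: {', '.join(sorted(missing))}"]
    problems = []
    for row in reader:
        line_no = reader.line_num  # line of the source text just read
        for column in sorted(REQUIRED_COLUMNS):
            if not (row[column] or "").strip():
                problems.append(f"line {line_no}: empty {column}")
        value = (row["value"] or "").strip()
        if value:
            try:
                float(value)
            except ValueError:
                problems.append(f"line {line_no}: value is not numeric")
    return problems
```

Checks of this kind only confirm that a file is structurally complete. Whether the reported endpoint is scientifically sound, or the semantic annotation appropriate, remains a matter for expert review.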

Table 3: Phase 3 - Validation and Integration Tools

| Tool / Resource Name | Primary Function in FAIRification | Applicable Data Type / Field |
|---|---|---|
| NMDataParser [29] | Converts custom spreadsheets into structured, semantic data (JSON, RDF). | Nanosafety, ecotoxicology assay data. |
| FAIREHR Platform [13] | Preregistration and metadata registry for studies; enables prospective FAIRification. | Human biomonitoring, environmental exposure studies. |
| AOP-Wiki / FAIR AOP Tools [27] | Allows annotation and linkage of mechanistic data to established AOP frameworks. | In vitro and in vivo data supporting Key Events. |
| Repository-Specific Validators (e.g., ESS-DIVE) [8] | Checks metadata and file format compliance against community standards. | Diverse environmental and ecological data types. |

Detailed Experimental Protocol: FAIRification of an In Vitro Comet Assay Dataset

The following protocol details the FAIRification process for a specific, common ecotoxicology endpoint: genotoxicity data from an in vitro Comet assay, based on a published case study [26].

1. Pre-FAIRification Assessment: