A Practical Guide to Calculating LD50 with Probit Analysis: From Theory to Statistical Implementation


Abstract

This article provides a comprehensive guide for researchers and toxicology professionals on determining the median lethal dose (LD50) using probit analysis. It progresses from foundational concepts—including the definition of LD50, the history of the probit method, and its underlying statistical theory—to a detailed, practical methodology for conducting the analysis, covering experimental design, data transformation, and regression[citation:2][citation:5]. The guide further addresses common troubleshooting scenarios, validation techniques, and a comparative evaluation with alternative methods like logit regression and modern computational models[citation:6]. It concludes by synthesizing the role of classical probit analysis within the contemporary landscape of computational toxicology and predictive safety science[citation:1].

Understanding LD50 and Probit Analysis: Core Concepts for Toxicology Research

Core Definition and Historical Context

The median lethal dose (LD₅₀) is defined as the amount of a material, administered in a single dose, that causes the death of 50% of a group of test animals within a specified observation period [1]. It is a quantal measurement of acute toxicity, meaning it records an effect (death) that either occurs or does not occur [1]. This value is typically expressed as the mass of substance per unit body weight of the test animal (e.g., milligrams per kilogram, mg/kg) [1] [2].

The concept was developed in 1927 by J.W. Trevan to provide a standardized method for comparing the relative poisoning potency of drugs and chemicals that harm the body in diverse ways [1] [2]. By using death as a clear, unambiguous endpoint, LD₅₀ allows for the comparison of toxicity across different chemical classes [1].

A related term, LC₅₀ (Lethal Concentration 50), refers to the concentration of a chemical in air (or water) that kills 50% of test animals over a set exposure period, commonly 4 hours [1].

Regulatory Framework and Significance

LD₅₀ testing is governed by internationally recognized guidelines to ensure consistency, reliability, and ethical compliance. Key regulatory bodies include:

- OECD (Organisation for Economic Co-operation and Development): Its Test Guidelines are the global standard for chemical safety testing, promoting the Mutual Acceptance of Data (MAD) across member countries [3]. The guidelines are continuously updated to incorporate scientific advancements and the 3Rs principles (Replacement, Reduction, and Refinement of animal testing) [3].

- U.S. Environmental Protection Agency (EPA): Maintains the Health Effects Test Guidelines (Series 870), which include specific protocols for acute oral (870.1100), dermal (870.1200), and inhalation (870.1300) toxicity studies [4].

- U.S. Food and Drug Administration (FDA): Provides guidance through its Redbook 2000, outlining general principles for designing and conducting toxicity studies for food ingredients and additives, emphasizing Good Laboratory Practice (GLP) [5].

The primary significance of the LD₅₀ value lies in hazard classification and labeling. It is used to place substances into toxicity categories, which dictate handling precautions, personal protective equipment (PPE) requirements, and transportation regulations [1] [6]. In drug development, it helps establish the therapeutic index (the ratio between toxic and effective doses) [2] [7].

Table 1: Common Toxicity Classification Systems Based on LD₅₀ Values (Oral, Rat)

| Toxicity Rating | Common Term | Oral LD₅₀ (mg/kg) | Probable Lethal Dose for 70 kg Human |
|---|---|---|---|
| 1 (Hodge & Sterner) [1] | Extremely Toxic | ≤ 1 | A taste, a drop (~1 grain) |
| 2 [1] | Highly Toxic | 1 – 50 | 1 teaspoon (~4 ml) |
| 3 [1] | Moderately Toxic | 50 – 500 | 1 ounce (~30 ml) |
| 4 [1] | Slightly Toxic | 500 – 5000 | 1 pint (~600 ml) |
| 5 [1] | Practically Non-toxic | 5000 – 15000 | > 1 quart (~1 L) |
| 6 (Gosselin et al.) [1] | Super Toxic | < 5 | < 7 drops |

The Mathematical Foundation: Probit Analysis

Probit analysis is a classical statistical method for analyzing binomial response data (like death/survival) in relation to a quantitative stimulus (like dose) [8] [9]. It transforms the sigmoidal dose-response curve into a straight line, enabling precise calculation of the LD₅₀ and its confidence intervals.

The core transformation uses the probit (probability unit), which is derived from the inverse of the cumulative standard normal distribution. The percentage mortality is converted to a probit value [9]. A linear model is then fitted: Probit(Y) = k + (m * log₁₀(Dose)) [8] Where 'k' and 'm' are constants. The LD₅₀ is calculated as the dose at which the probit equals 5 (corresponding to 50% mortality).
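As a worked illustration of the last step, the arithmetic can be written out with hypothetical fitted constants. The values k = 2.0 and m = 1.5 are assumed purely for illustration and do not come from any dataset in this article.

```latex
\begin{aligned}
5 &= k + m \log_{10}(\mathrm{LD}_{50}) \\
\log_{10}(\mathrm{LD}_{50}) &= \frac{5 - k}{m} = \frac{5 - 2.0}{1.5} = 2.0 \\
\mathrm{LD}_{50} &= 10^{2.0} = 100\ \text{mg/kg}
\end{aligned}
```

A steeper slope m narrows the dose range over which mortality rises from low to high, but it does not by itself move the LD₅₀ unless k changes as well.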

Probit Analysis Workflow for LD50 Calculation

Evolving Paradigms: Alternative and In Silico Methods

Traditional LD₅₀ testing requires significant numbers of animals. Modern toxicology emphasizes New Approach Methodologies (NAMs) to reduce, refine, and replace animal use [6].

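- Alternative Tests: The OECD Up-and-Down Procedure (UDP) doses animals one at a time, adjusting each dose according to the previous outcome, and so estimates an LD₅₀ from far fewer animals. The Fixed Dose Procedure (FDP) relies on signs of evident toxicity rather than death as its endpoint and assigns the substance to a toxicity range instead of producing a point LD₅₀ [4].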

- In Silico (Computational) Models: Quantitative Structure-Activity Relationship (QSAR) and machine learning models predict LD₅₀ based on chemical structure.

- Collaborative Acute Toxicity Modeling Suite (CATMoS): A consensus platform that leverages multiple machine learning models (e.g., random forest, support vector machines, deep learning) and has demonstrated high accuracy in predicting rat oral LD₅₀ [6].

- Model Requirements: Regulatory-use models must have a defined endpoint, unambiguous algorithm, applicability domain, and measures of goodness-of-fit and predictivity [6].

Table 2: Examples of Acute Oral LD₅₀ Values in Rats [2]

| Substance | Approx. LD₅₀ (mg/kg) | Relative Toxicity Category |
|---|---|---|
| Botulinum toxin | 0.000001 | Extremely Toxic |
| Sodium cyanide | 6-8 | Highly Toxic |
| Arsenic | 763 | Slightly Toxic |
| Paracetamol (Acetaminophen) | 2000 | Slightly Toxic |
| Ethanol | 7060 | Practically Non-toxic |
| Sucrose (Table Sugar) | 29700 | Relatively Harmless |

Detailed Experimental Protocol for Acute Oral Toxicity (OECD/EPA Guideline-Based)

This protocol outlines the key steps for determining an acute oral LD₅₀ in rodents using a multi-dose design suitable for probit analysis.

5.1 Pre-Study Preparations

- Test Article: Use a well-characterized, pure substance. Prepare dosing solutions/suspensions daily in a suitable vehicle (e.g., water, methylcellulose) [5].

- Animals: Use healthy, young adult rats (e.g., 6-8 weeks old). Common strains include Sprague-Dawley or Wistar. Both sexes should be tested, using separate groups [5].

- Housing: House animals individually under standard conditions (controlled temp/humidity, 12h light/dark cycle) with ad libitum access to certified rodent diet and water [5].

- Acclimation: Acclimate animals for at least 5 days prior to dosing [5].

- Randomization: Assign animals to control and dose groups using a stratified random method based on body weight to ensure comparable group means [5].

5.2 Study Design

- Dose Selection: Based on a range-finding study, select at least 3-5 logarithmically spaced doses expected to produce mortality between 0% and 100%.

- Group Size: A minimum of 5-10 animals per sex per dose group is typical. Control groups receive the vehicle only.

- Dosing: Administer the test article in a single dose by oral gavage. Use a constant dosing volume (e.g., 10 mL/kg body weight). Record exact dose for each animal (mg/kg).

5.3 In-Life Observations and Data Collection

- Clinical Observations: Observe animals frequently on day 0, and at least daily for 14 days. Record signs of toxicity, morbidity, and time of death [1].

- Body Weight: Record individual animal weights at dosing, and periodically during the observation period.

- Necropsy: Perform gross necropsy on all animals found dead or sacrificed moribund, and on all survivors at terminal sacrifice.

5.4 Data Analysis via Probit Method

- Tabulate dose (converted to log₁₀) against the number dead/total per group.

- Calculate percentage mortality and convert percentages to probit values using a standard probit table [9].

- Perform weighted linear regression of probits on log₁₀(dose). Software like SPSS, R, or specialized toxicology packages is used.

- From the regression line, calculate the LD₅₀ (log₁₀ dose where probit=5) and its 95% confidence limits [8] [9].

- Report the LD₅₀ value with species, strain, sex, route, and confidence intervals (e.g., LD₅₀ (oral, rat, female) = 250 mg/kg (95% C.I. 200-310 mg/kg)).
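The classical hand calculation in the steps above can be sketched in a few lines of Python. This is a simplified single-pass version under stated assumptions: the weights are computed from the observed proportions rather than from iteratively updated expected probits, groups with 0% or 100% mortality are skipped, and the dose and mortality figures are hypothetical. The function name `weighted_probit_line` is illustrative only.

```python
from math import log10
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def weighted_probit_line(doses, n_tested, n_dead):
    """Weighted least-squares line through empirical probits vs log10(dose)."""
    xs, ys, ws = [], [], []
    for dose, n, r in zip(doses, n_tested, n_dead):
        p = r / n
        if p <= 0.0 or p >= 1.0:
            continue  # empirical probit is undefined at 0% and 100%
        z = STD_NORMAL.inv_cdf(p)
        xs.append(log10(dose))
        ys.append(5.0 + z)  # classical probit = normal deviate + 5
        # Probit weight n * Z^2 / (P * Q), using the observed proportion.
        ws.append(n * STD_NORMAL.pdf(z) ** 2 / (p * (1.0 - p)))
    sw = sum(ws)
    x_bar = sum(w * x for w, x in zip(ws, xs)) / sw
    y_bar = sum(w * y for w, y in zip(ws, ys)) / sw
    sxx = sum(w * (x - x_bar) ** 2 for w, x in zip(ws, xs))
    sxy = sum(w * (x - x_bar) * (y - y_bar) for w, x, y in zip(ws, xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ld50 = 10 ** ((5.0 - intercept) / slope)  # dose at which probit = 5
    return intercept, slope, ld50


# Hypothetical data: five dose groups (mg/kg), 10 animals each.
example_doses = [100, 160, 250, 400, 630]
example_n = [10, 10, 10, 10, 10]
example_dead = [1, 3, 5, 7, 9]
intercept, slope, ld50 = weighted_probit_line(example_doses, example_n, example_dead)
print(f"intercept={intercept:.3f} slope={slope:.3f} LD50={ld50:.1f} mg/kg")
```

Dedicated software normally refines this line iteratively and also reports confidence limits, so a sketch like this is best treated as a cross-check on tabulated probits rather than a final analysis.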

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for LD₅₀ Studies

| Item / Reagent | Function / Purpose | Key Considerations |
|---|---|---|
| Test Substance | The chemical entity whose acute toxicity is being assessed. | Purity and stability must be characterized. Requires safe handling per MSDS [5]. |
| Vehicle (e.g., Water, 0.5% Methylcellulose, Corn Oil) | To dissolve or suspend the test substance for accurate dosing. | Must be non-toxic, not react with test article, and ensure homogenous dosing solution [5]. |
| Clinical Pathology Kits (Serum Biochemistry, Hematology) | To evaluate organ dysfunction and systemic effects in survivors. | Used in satellite groups or main study survivors to provide mechanistic toxicity data. |
| Fixative (10% Neutral Buffered Formalin) | For tissue preservation during necropsy for potential histopathology. | Essential for identifying target organs of toxicity [5]. |
| Reference Control Compound | A substance with a known, stable LD₅₀. | Used occasionally to validate assay sensitivity and laboratory performance. |
| Software for Probit Analysis | To perform statistical calculation of LD₅₀, confidence limits, and regression parameters. | Examples include EPA's BMDS, commercial stats packages, or validated in-house scripts [8]. |

LD50 as a Convergence Point in Toxicology

The concept of the Median Lethal Dose (LD₅₀), introduced by J.W. Trevan in 1927, was born from a need to standardize the assessment of drug and chemical potency [1] [10]. Trevan's innovation was to use death as a universal, measurable endpoint, allowing for the comparison of substances with vastly different mechanisms of action [1]. This foundational work established dose-response as a core principle in toxicology. The LD₅₀ is defined as the statistically derived single dose of a substance expected to cause death in 50% of a defined animal population under specific test conditions [1].

Subsequent statistical refinements, most notably Finney's probit analysis, transformed Trevan's concept into a robust quantitative tool [11] [10] [12]. Probit analysis linearizes the sigmoidal dose-response relationship, allowing for precise calculation of the LD₅₀ and its confidence intervals [11] [12]. While modern toxicology increasingly emphasizes mechanistic understanding and alternative testing strategies, the LD₅₀ derived from probit analysis remains a critical benchmark in regulatory science for classifying chemical hazards, setting safety thresholds, and prioritizing risk assessments [1] [10]. This article details the experimental and computational protocols that underpin this enduring metric, framing them within a thesis on probit analysis as the statistical bridge between empirical observation and regulatory decision-making.

Foundational Concepts and Toxicity Classification

The core purpose of LD₅₀ determination is to quantify and compare acute toxicity. It is crucial to understand that the LD₅₀ value is inversely related to toxicity: a lower LD₅₀ indicates a more toxic substance [1] [13]. The value is typically expressed as the mass of substance per unit body weight of the test animal (e.g., mg/kg) [1]. For inhalation studies, the analogous metric is the Lethal Concentration 50 (LC₅₀), expressed as concentration in air (e.g., ppm) over a specified duration, usually 4 hours [1].

To standardize communication of hazard, LD₅₀ values are classified using established toxicity scales. Two prominent systems are shown below, highlighting how the same numerical value can be described by different terms. It is imperative to reference which scale is being used [1].

Table 1: Toxicity Classification by the Hodge and Sterner Scale [1]

| Toxicity Rating | Commonly Used Term | Oral LD₅₀ in Rats (mg/kg) | Probable Lethal Dose for an Adult Human |
|---|---|---|---|
| 1 | Extremely Toxic | ≤ 1 | A taste, a drop (~1 grain) |
| 2 | Highly Toxic | 1 – 50 | 1 teaspoon (4 ml) |
| 3 | Moderately Toxic | 50 – 500 | 1 ounce (30 ml) |
| 4 | Slightly Toxic | 500 – 5000 | 1 pint (600 ml) |
| 5 | Practically Non-toxic | 5000 – 15,000 | 1 quart (1 liter) |
| 6 | Relatively Harmless | ≥ 15,000 | > 1 quart |
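The Hodge and Sterner bands lend themselves to a simple lookup. The sketch below is illustrative only: the function name `hodge_sterner_class` is hypothetical, and because adjacent bands in the table share their boundary values, it assumes a boundary value falls into the more toxic class (except 15,000 mg/kg, which the table places in class 6).

```python
def hodge_sterner_class(ld50_mg_per_kg):
    """Return (rating, term) for a rat oral LD50 on the Hodge and Sterner scale."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be a positive dose in mg/kg")
    # (upper bound in mg/kg, rating, commonly used term)
    bands = [
        (1.0, 1, "Extremely Toxic"),
        (50.0, 2, "Highly Toxic"),
        (500.0, 3, "Moderately Toxic"),
        (5000.0, 4, "Slightly Toxic"),
    ]
    for upper, rating, term in bands:
        if ld50_mg_per_kg <= upper:  # shared boundaries go to the more toxic class
            return rating, term
    if ld50_mg_per_kg < 15000.0:
        return 5, "Practically Non-toxic"
    return 6, "Relatively Harmless"  # the table lists this class as >= 15,000


for value in (0.5, 250, 7060, 29700):
    print(value, hodge_sterner_class(value))
```

Any report that uses such a lookup should still name the scale, since the same number maps to a different term on the Gosselin, Smith and Hodge scale.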

Table 2: Toxicity Classification by the Gosselin, Smith and Hodge Scale [1]

| Toxicity Class | Probable Oral Lethal Dose (Human) | For a 70-kg Person |
|---|---|---|
| 6, Super Toxic | < 5 mg/kg | < 7 drops |
| 5, Extremely Toxic | 5 – 50 mg/kg | 7 drops – 1 tsp |
| 4, Very Toxic | 50 – 500 mg/kg | 1 tsp – 1 oz |
| 3, Moderately Toxic | 0.5 – 5 g/kg | 1 oz – 1 pint |
| 2, Slightly Toxic | 5 – 15 g/kg | 1 pint – 1 quart |
| 1, Practically Non-Toxic | > 15 g/kg | > 1 quart |

The LD₅₀ for a substance is not a fixed property; it can vary significantly based on the route of exposure (e.g., oral, dermal, inhalation) and the test species. For example, the insecticide dichlorvos shows differing toxicities: Oral LD₅₀ (rat): 56 mg/kg; Dermal LD₅₀ (rat): 75 mg/kg; Inhalation LC₅₀ (rat): 1.7 ppm (4-hour exposure) [1]. This underscores the importance of specifying test conditions when reporting or using LD₅₀ data.

Core Statistical Methodology: Probit Analysis

Probit analysis is the statistical engine for deriving the LD₅₀ from quantal dose-response data (where the outcome is binary, e.g., dead/alive) [11] [12]. It linearizes the sigmoidal cumulative normal distribution of responses to dose.

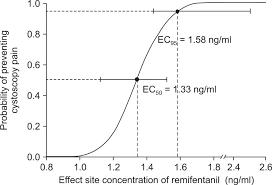

3.1 The Probit Transformation

The proportion of subjects responding (p) at a given dose is converted to a "probit" (probability unit). The transformation is: Y = 5 + Φ⁻¹(p), where Φ⁻¹(p) is the inverse of the cumulative standard normal distribution [11] [12]. The addition of 5 is a historical convention to avoid negative values. A probit of 5 corresponds to the median response (p=0.5, i.e., LD₅₀), a probit of 6.64 corresponds to ~95% response, and 3.36 to ~5% response [11].
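The transformation and the three reference values quoted above can be checked with Python's standard library. This is a minimal sketch; the helper names `probit` and `inverse_probit` are illustrative and not part of any toxicology package.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def probit(p):
    """Classical probit: inverse standard normal CDF of p, plus 5."""
    return 5.0 + STD_NORMAL.inv_cdf(p)


def inverse_probit(y):
    """Proportion responding that corresponds to a classical probit value y."""
    return STD_NORMAL.cdf(y - 5.0)


for proportion in (0.05, 0.50, 0.95):
    print(proportion, round(probit(proportion), 2))
```

Note that `inv_cdf` is only defined for proportions strictly between 0 and 1, which is why groups with 0% or 100% response cannot be transformed directly.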

3.2 Model Fitting and LD₅₀ Calculation

Transformed probits (Y) are regressed against the logarithm of the dose (log₁₀(dose)) using maximum likelihood estimation, fitting a linear model: Y = a + b * log₁₀(dose) [12]. The slope (b) represents the steepness of the dose-response curve. The LD₅₀ is calculated by setting Y=5 and solving for dose: log₁₀(LD₅₀) = (5 - a) / b. Software packages provide the LD₅₀ and its confidence intervals, which are essential for understanding the estimate's precision [12].

3.3 Goodness-of-Fit Assessment

A critical step is evaluating the model's fit using a chi-square (χ²) heterogeneity test [12]. A non-significant p-value (typically >0.05) indicates the data do not deviate significantly from the fitted probit model. A significant result suggests the model is a poor fit, possibly due to underlying non-normal tolerance distribution or experimental issues, and inferences like the LD₅₀ may be unreliable [12].
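One common form of this check is the Pearson chi-square statistic, which compares observed deaths with the deaths expected under the fitted line, on (number of dose groups minus 2) degrees of freedom. The sketch below assumes that form; the fitted intercept and slope and the data are hypothetical, and the 5% critical values are hard-coded for 1 to 5 degrees of freedom because the standard library has no chi-square distribution.

```python
from math import log10
from statistics import NormalDist

# Upper 5% critical values of the chi-square distribution, keyed by df.
CHI2_CRITICAL_05 = {1: 3.841, 2: 5.991, 3: 7.815, 4: 9.488, 5: 11.070}


def probit_chi_square(doses, n_tested, n_dead, intercept, slope):
    """Pearson chi-square for a fitted line Y = intercept + slope * log10(dose)."""
    chi2 = 0.0
    for dose, n, r in zip(doses, n_tested, n_dead):
        # Expected mortality: convert the fitted probit back to a proportion.
        expected_p = NormalDist().cdf(intercept + slope * log10(dose) - 5.0)
        chi2 += (r - n * expected_p) ** 2 / (n * expected_p * (1.0 - expected_p))
    df = len(doses) - 2  # two parameters (intercept and slope) were estimated
    return chi2, df


# Hypothetical data and hypothetical fitted constants, for illustration only.
chi2, df = probit_chi_square(
    doses=[100, 160, 250, 400, 630],
    n_tested=[10, 10, 10, 10, 10],
    n_dead=[1, 3, 5, 7, 9],
    intercept=-2.68,
    slope=3.2,
)
adequate_fit = chi2 < CHI2_CRITICAL_05[df]
print(f"chi2={chi2:.3f} df={df} adequate_fit={adequate_fit}")
```

When the statistic exceeds the critical value, a frequent remedy in the classical literature is to widen the confidence limits with a heterogeneity factor, although a poor fit is also a prompt to re-examine the design and the data.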

Detailed Experimental Protocols

Protocol A: Classical In Vivo LD₅₀ Determination (OECD Guideline-Informed)

Objective: To determine the acute oral LD₅₀ of a test substance in rodents using a multiple-dose-group design and probit analysis.

Materials & Subjects:

- Test Substance: Pure compound of known concentration/potency [1].

- Animals: Healthy young adult rats (e.g., Sprague-Dawley or Wistar), typically 8-12 weeks old. A common design uses 5-6 dose groups, with 5-10 animals per sex per group [1] [10].

- Housing: Standard laboratory conditions with ad libitum access to food and water (fasting period of 4-6 hours prior to dosing may be standard).

- Dosing Vehicle: An appropriate solvent/suspending agent (e.g., water, corn oil, methylcellulose).

Procedure:

- Dose Selection: Based on a pilot range-finding study, select at least four doses that are expected to produce mortality between 0% and 100%, ideally spanning the 10%-90% range.

- Randomization & Group Assignment: Randomly assign animals to dose groups and control group(s). Control groups receive the dosing vehicle only.

- Dosing: Administer the test substance in a single bolus via oral gavage. The dose is calculated based on the most recent body weight (mg/kg). Record the exact volume administered.

- Post-Dosing Observation: Observe animals frequently (e.g., at 30 min, 1, 2, 4, 6, and 24 hours) on the first day, and at least daily for a total of 14 days [1]. Record detailed clinical observations: signs of toxicity, onset/duration, morbidity, and mortality.

- Necropsy: Perform gross necropsy on all animals found dead and those euthanized at the end of the study.

Data Analysis:

- Tabulate the number of animals dosed (N) and the number deceased (R) at each dose level at the end of the 14-day observation period.

- Calculate the proportion responding (mortality) at each dose: p = R/N.

- Input data (Dose, N, R) into statistical software capable of probit analysis (e.g., StatsDirect, specific R packages, or the USDA Probit programs) [14] [12].

- Perform probit regression (log₁₀ dose transformation) and obtain the LD₅₀ estimate with 95% confidence limits.

- Report the slope of the probit line, the χ² goodness-of-fit statistic, and the final LD₅₀ (mg/kg) with confidence limits. The result should be reported as, for example, "Oral LD₅₀ (rat) = 250 mg/kg (95% C.I. 195 – 320 mg/kg)" [1].

Protocol B: Modern Application - Determining Limit of Detection (LoD) for a Diagnostic Assay via Probit Analysis

Objective: To determine the 95% detection limit (LoD or C95) of a qualitative diagnostic assay (e.g., SARS-CoV-2 RT-PCR) using probit regression, as per CLSI EP17-A2 guidelines [11].

Materials:

- Target Analyte: SARS-CoV-2 virus stock of known concentration (e.g., in plaque-forming units per mL, PFU/mL).

- Clinical Matrix: Negative nasopharyngeal swab (NPS) transport medium.

- Assay: Xpert Xpress SARS-CoV-2 test kit or equivalent.

- Instrumentation: Appropriate PCR detection system.

Procedure [11]:

- Sample Preparation: Serially dilute the virus stock in the negative NPS matrix to create 5-7 concentration levels near the expected LoD (e.g., spanning from 0.0001 to 0.02 PFU/mL).

- Replicate Testing: For each concentration level, test a minimum of 20 independent replicates. Include at least 20 replicates of the negative matrix as a control.

- Run Assay: Perform the test according to the manufacturer's instructions. Record results as positive or negative for each replicate.

Data Analysis:

- For each concentration level, calculate the proportion of positive replicates (hit rate).

- Convert hit rates to probits using the formula: Y = 5 + NORMSINV(P), where P is the hit rate [11].

- Perform linear regression of probits (Y) against log₁₀(concentration).

- Calculate the C95 concentration (LoD) by solving the regression equation for Y = 6.64 (the probit for 95% response). LoD = 10^[(6.64 - a) / b], where 'a' is the intercept and 'b' is the slope.

- Verification: Prepare samples at the calculated LoD concentration and test at least 20 replicates. A hit rate of ≥95% verifies the LoD [11].
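The data analysis steps above can be sketched as follows. The sketch follows the simple linear-regression route described in this protocol (ordinary least squares on probits), assumes that levels with 0% or 100% hit rates are left out because their probits are undefined, and uses hypothetical concentrations and hit counts. The function name `probit_lod` is illustrative.

```python
from math import log10
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def probit_lod(concentrations, n_replicates, n_positive, hit_rate=0.95):
    """Estimate the concentration detected at the given hit rate (default C95)."""
    xs, ys = [], []
    for conc, n, hits in zip(concentrations, n_replicates, n_positive):
        p = hits / n
        if 0.0 < p < 1.0:  # skip levels whose probit would be infinite
            xs.append(log10(conc))
            ys.append(5.0 + STD_NORMAL.inv_cdf(p))
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    target = 5.0 + STD_NORMAL.inv_cdf(hit_rate)  # about 6.64 for a 95% hit rate
    return 10 ** ((target - intercept) / slope)


# Hypothetical dilution series (PFU/mL), 20 replicates per level.
lod = probit_lod(
    concentrations=[0.0005, 0.001, 0.002, 0.005, 0.01, 0.02],
    n_replicates=[20, 20, 20, 20, 20, 20],
    n_positive=[4, 9, 14, 18, 19, 20],
)
print(f"Estimated C95 (LoD): {lod:.4f} PFU/mL")
```

The estimate is only a candidate LoD until the verification step confirms a hit rate of at least 95% at that concentration.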

Advanced Models: From Descriptive to Mechanistic

While probit analysis descriptively models the dose-response relationship, Toxicokinetic-Toxicodynamic (TK-TD) models represent a paradigm shift toward mechanistic prediction [15].

5.1 The GUTS Framework

The General Unified Threshold Model of Survival (GUTS) integrates two processes [15]:

- Toxicokinetics (TK): Describes the time-course of substance uptake, distribution, and elimination within the organism (internal dose).

- Toxicodynamics (TD): Describes the processes of damage accumulation and repair leading to the observed effect (death).

5.2 Core Mechanistic Hypotheses

GUTS operates under two alternative survival models [15]:

- Stochastic Death (SD): All individuals are assumed identical. At any moment, the probability of death is the same for all living individuals and increases with increasing internal damage.

- Individual Tolerance (IT): Individuals differ in their sensitivity (threshold). An individual dies instantly when its internal damage exceeds its personal threshold. The distribution of thresholds in the population is modeled.

These advanced models allow for extrapolation to time-variable exposures and can provide insights into the mode of toxic action, moving beyond the single-point estimate of the LD₅₀.

Visual Synthesis of Concepts and Workflows

Diagram 1: From Historical Concept to Modern Protocols and Models

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Reagents, Software, and Resources for LD₅₀ and Probit Analysis Research

| Item | Function & Application | Specific Examples / Notes |
|---|---|---|
| Standard Laboratory Animals | In vivo bioassay subjects for classical acute toxicity testing. | Rat (Rattus norvegicus), mouse (Mus musculus). Specific strains (Sprague-Dawley, Wistar) are standard [1]. |
| Dosing Vehicles | To solubilize or suspend test compounds for accurate oral or parenteral administration. | Corn oil, carboxymethylcellulose (CMC), saline, dimethyl sulfoxide (DMSO, with caution) [1]. |
| Statistical Software with Probit | To perform probit regression, calculate LD₅₀/LC₅₀, and generate confidence intervals. | StatsDirect [12], R packages (e.g., ecotoxicology, drc), USDA Probit Programs (require Mathematica) [14]. |
| Online Calculators | For preliminary analysis and educational purposes. | AAT Bioquest LD₅₀ Calculator [13]. Note: Peer-reviewed analysis requires full statistical software. |
| Reference Toxins | Positive controls to validate experimental and analytical protocols. | Standardized chemicals with known, published LD₅₀ values (e.g., potassium cyanide, sodium dichromate). |
| CLSI & OECD Guidelines | Authoritative protocols for experimental design and data analysis to ensure regulatory acceptance. | OECD Test Guideline 425 (Up-and-Down Procedure), CLSI EP17-A2 (for LoD determination via probit) [11]. |
| Alternative Testing Matrices | For modern, reductionist approaches to toxicity screening. | In vitro cell lines, 3D tissue models, computational QSAR platforms [10]. |

The dose-response relationship, a cornerstone of toxicology and pharmacology, is fundamental for quantifying the biological effect of a chemical agent. When plotting the proportion of a population exhibiting a binary response (e.g., death/survival) against the logarithm of the dose, the data typically form an S-shaped sigmoid curve [12]. This shape reflects the cumulative distribution of individual tolerances within the population [16]. The primary challenge for researchers is to accurately determine key summary statistics, such as the median lethal dose (LD50)—the dose required to kill 50% of a test population—from this non-linear relationship [1].

Probit analysis is the established statistical method designed to solve this challenge. Developed primarily for biological assay work, it linearizes the sigmoid curve by transforming the observed proportions into "probability units" or probits [12] [11]. A probit is derived from the inverse of the cumulative standard normal distribution; essentially, it converts a proportion (p) into the equivalent number of standard deviations from the mean of a normal distribution, with 5 added for historical convenience to avoid negative numbers [12] [17]. The resulting linear model, Probit(p) = a + b * Log(Dose), can be analyzed using maximum likelihood estimation, providing robust estimates for the LD50 and its confidence intervals [12] [18]. This method is preferred for quantal (binary) data with a binomial error structure, distinguishing it from techniques suited for continuous response data [12].

Core Protocol: Designing and Executing an LD50 Probit Analysis Experiment

This protocol outlines the standardized procedure for determining the LD50 of a substance using probit analysis, in accordance with established toxicological principles [1] [17].

Pre-Experimental Design and Reagent Toolkit

A successful experiment requires careful planning and the following essential materials.

Table 1: Research Reagent Solutions & Essential Materials for LD50 Probit Analysis

| Item Category | Specific Items & Examples | Primary Function in Protocol |
|---|---|---|
| Test Substance | Pure chemical compound, purified toxin (e.g., snake venom) [19]. | The agent whose toxicity is being quantified. Must be of known and stable composition [1]. |
| Vehicle/Solvent | Phosphate-buffered saline (PBS), sterile water, corn oil, dimethyl sulfoxide (DMSO). | To dissolve or suspend the test substance for accurate dosing. Must be non-toxic at administered volumes. |
| Biological System | Inbred strain of laboratory animals (e.g., mice, rats). Defined cell culture for in vitro assays. | Provides the standardized, responsive population for the dose-response experiment [1]. |
| Dosing Apparatus | Oral gavage needles, calibrated syringes, inhalation chambers, topical application devices. | Ensures precise and consistent delivery of the test substance via the chosen route (oral, dermal, intravenous, etc.) [1]. |
| Data Collection Tools | Animal monitoring sheets, clinical scoring systems, laboratory information management system (LIMS). | Records binomial outcomes (dead/alive, affected/unaffected) and all associated metadata for statistical analysis. |

Experimental Procedure

Step 1: Animal Assignment and Dose Preparation. Healthy, acclimatized animals of a single species, strain, sex, and age range are randomly assigned to treatment groups (typically 5-8 animals per group) [1]. A control group receives the vehicle only. Prepare a logarithmic series of 5-7 test doses. The range should be estimated from preliminary studies to span from a dose expected to cause ~0% response to one causing ~100% response [17].

Step 2: Substance Administration and Observation. Administer the single, prepared dose to each animal in the corresponding group via the specified route (e.g., oral gavage, intravenous injection) [1]. Observe all animals, including controls, meticulously for a predefined period (often 24, 48, or 72 hours, depending on the substance's toxicokinetics). Record the binomial endpoint (e.g., dead or alive at 48 hours) for each subject. Clinical observations of morbidity should also be noted.

Step 3: Data Compilation. Compile the raw data into a grouped format suitable for analysis. For each dose level, record: the dose (D), the total number of animals tested (N), and the number of animals responding (R, e.g., died) [18]. The proportional response is calculated as p = R/N.

Data Analysis Protocol: From Raw Counts to LD50 Estimate

Following data collection, statistical transformation and analysis are performed.

Step 1: Data Transformation.

First, apply a logarithmic transformation to the dose values (X = Log(D)). This step is critical as the relationship between probit and dose is typically linear on a logarithmic scale [12] [20]. Next, transform the observed proportion (p) for each dose group to a probit value (Y). This can be done using statistical software, published probit tables, or the Excel function: Y = 5 + NORMSINV(p), where NORMSINV is the inverse standard normal function [11] [18]. Proportions of 0% or 100% have no finite probit and must be adjusted before transformation (e.g., replacing 0 with 0.25/N and 1 with (N-0.25)/N) [17]. Mortality in the vehicle control group is a separate issue and is conventionally corrected with Abbott's formula before the analysis.
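The two adjustments that usually accompany this step can be sketched as small helpers. The 0.25/N replacement is the rule quoted in the text; Abbott's formula is shown in its usual role as a correction for control-group mortality, (p_treated - p_control) / (1 - p_control). The function names and the example figures are hypothetical.

```python
def adjust_extreme_proportion(n_responding, n_tested):
    """Replace 0% with 0.25/N and 100% with (N - 0.25)/N; leave others unchanged."""
    if n_responding == 0:
        return 0.25 / n_tested
    if n_responding == n_tested:
        return (n_tested - 0.25) / n_tested
    return n_responding / n_tested


def abbott_corrected(p_treated, p_control):
    """Abbott's formula: mortality attributable to treatment, given control mortality."""
    if not 0.0 <= p_control < 1.0:
        raise ValueError("control mortality must be in [0, 1)")
    # Clamp at zero so a treated group with less mortality than control is not negative.
    return max(0.0, (p_treated - p_control) / (1.0 - p_control))


print(adjust_extreme_proportion(0, 10))   # all ten animals survived
print(adjust_extreme_proportion(10, 10))  # all ten animals died
print(abbott_corrected(0.6, 0.2))         # 60% treated vs 20% control mortality
```

These substitutions matter mainly when empirical probits are computed by hand; maximum likelihood fitting works from the raw counts and can use 0% and 100% groups without adjustment.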

Step 2: Model Fitting via Maximum Likelihood Estimation (MLE).

Fit the linear model Y = a + bX using MLE, not ordinary least squares. MLE is the standard method for probit analysis as it correctly accounts for the binomial nature of the data and provides the best estimates for the intercept (a) and slope (b) [12] [18]. This process is iterative and is performed automatically by specialized software (e.g., StatsDirect, MedCalc, SAS, R).
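To make the iterative process concrete, the following is a minimal sketch of a maximum likelihood probit fit by Fisher scoring (iteratively reweighted least squares), written with the standard library only. It is not the algorithm of any particular package named above; the function name `fit_probit_mle`, the starting values, and the data are assumptions for illustration.

```python
from math import log10
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal distribution (mean 0, sd 1)


def fit_probit_mle(doses, n_tested, n_dead, max_iter=100, tol=1e-10):
    """Fit P(death) = Phi(alpha + beta * log10(dose)) by Fisher scoring.

    Returns (a, b, ld50): a = alpha + 5 is the intercept on the classical
    probit scale, b is the slope, and ld50 is in the original dose units.
    """
    xs = [log10(d) for d in doses]
    alpha, beta = 0.0, 0.0  # start from a flat line (P = 0.5 at every dose)
    for _ in range(max_iter):
        sw = swx = swz = swxx = swxz = 0.0
        for x, n, r in zip(xs, n_tested, n_dead):
            eta = alpha + beta * x
            # Keep fitted probabilities strictly inside (0, 1) for stability.
            p_fit = min(max(STD_NORMAL.cdf(eta), 1e-10), 1.0 - 1e-10)
            density = STD_NORMAL.pdf(eta)
            weight = n * density ** 2 / (p_fit * (1.0 - p_fit))
            working = eta + (r / n - p_fit) / density  # working response
            sw += weight
            swx += weight * x
            swz += weight * working
            swxx += weight * x * x
            swxz += weight * x * working
        # Solve the 2x2 weighted least-squares normal equations.
        det = sw * swxx - swx ** 2
        new_beta = (sw * swxz - swx * swz) / det
        new_alpha = (swxx * swz - swx * swxz) / det
        converged = abs(new_alpha - alpha) < tol and abs(new_beta - beta) < tol
        alpha, beta = new_alpha, new_beta
        if converged:
            break
    return alpha + 5.0, beta, 10 ** (-alpha / beta)


# Hypothetical data: five dose groups (mg/kg), 10 animals each.
a, b, ld50 = fit_probit_mle([100, 160, 250, 400, 630], [10] * 5, [1, 3, 5, 7, 9])
print(f"a={a:.3f} b={b:.3f} LD50={ld50:.1f} mg/kg")
```

Unlike a regression on empirical probits, this fit works directly from the counts, so dose groups with 0% or 100% mortality can stay in the data set.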

Step 3: Calculating LD50 and Confidence Intervals.

The fitted model is used to calculate the LD50. Since the LD50 corresponds to a probit value of 5 (representing the 50% point on the standard normal distribution), the formula is derived from the regression equation: Log(LD50) = (5 - a) / b. The anti-log of this value gives the LD50 in the original dose units [18] [17]. Software will also calculate 95% confidence intervals for the LD50 using Fieller's theorem or similar methods, which are essential for stating the precision of the estimate [12].
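For readers who want to see what the software is doing, Fieller's theorem can be applied by solving a quadratic in the log LD50. The sketch below assumes a normal-approximation critical value and takes the fitted intercept, slope, and their variances and covariance as inputs; the numbers passed in are hypothetical and the function name `fieller_ld50_interval` is illustrative.

```python
from math import sqrt
from statistics import NormalDist


def fieller_ld50_interval(a, b, var_a, var_b, cov_ab, level=0.95):
    """Fieller limits for the LD50 from Y = a + b * log10(dose), with Y = 5 at LD50.

    Returns (lower, point_estimate, upper) in the original dose units.
    """
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    alpha = a - 5.0  # subtracting a constant leaves var_a and cov_ab unchanged
    # The limits m solve (alpha + m*b)^2 = z^2 * (var_a + 2*m*cov_ab + m^2*var_b).
    qa = b * b - z * z * var_b
    qb = 2.0 * (alpha * b - z * z * cov_ab)
    qc = alpha * alpha - z * z * var_a
    if qa <= 0.0:
        raise ValueError("slope is not significantly non-zero; interval is unbounded")
    root = sqrt(qb * qb - 4.0 * qa * qc)
    lower_log = (-qb - root) / (2.0 * qa)
    upper_log = (-qb + root) / (2.0 * qa)
    return 10 ** lower_log, 10 ** (-alpha / b), 10 ** upper_log


# Hypothetical fit results, for illustration only.
lower, ld50, upper = fieller_ld50_interval(
    a=-2.5, b=3.125, var_a=3.757, var_b=0.645, cov_ab=-1.548
)
print(f"LD50 = {ld50:.1f} mg/kg (95% C.I. {lower:.1f} to {upper:.1f} mg/kg)")
```

The interval is asymmetric on the dose scale because it is constructed on the log scale, and it becomes unbounded when the slope cannot be distinguished from zero.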

Step 4: Goodness-of-Fit and Model Validation.

Assess the model's fit using a chi-square heterogeneity test. A non-significant p-value (e.g., p > 0.05) indicates the observed data do not deviate significantly from the fitted probit model, validating the analysis [12]. Significant heterogeneity suggests the model is a poor fit, possibly due to an incorrect dose spacing, outliers, or non-binomial variance, and results should be interpreted with extreme caution [12].

Figure 1: Probit Analysis Workflow: From Raw Data to LD50.

Applications, Interpretation, and Advanced Context

Interpreting Results and Toxicity Classification

The calculated LD50 is a primary metric for acute toxicity. Lower LD50 values indicate higher toxicity [1]. To standardize communication, results are often classified using established toxicity scales.

Table 2: Toxicity Classification Based on Oral LD50 in Rats (Hodge and Sterner Scale) [1]

| Toxicity Rating | Common Term | Oral LD50 (mg/kg) | Probable Lethal Dose for Humans |
|---|---|---|---|
| 1 | Extremely Toxic | ≤ 1 | A taste, a drop (~1 grain) |
| 2 | Highly Toxic | 1 – 50 | 4 mL (~1 teaspoon) |
| 3 | Moderately Toxic | 50 – 500 | 30 mL (~1 fluid ounce) |
| 4 | Slightly Toxic | 500 – 5000 | 600 mL (~1 pint) |
| 5 | Practically Non-toxic | 5000 – 15000 | >1 Litre |

It is crucial to report the species, route of exposure, and observation time alongside the LD50 value (e.g., LD50 (oral, rat, 48h) = 250 mg/kg), as these factors dramatically influence the result [1]. Furthermore, while probit analysis is the gold standard for quantal data, alternative methods like logistic regression (based on the logistic distribution) or the non-parametric trimmed Spearman-Kärber method are used when the data do not fit a probit model or when responses do not span the 0-100% range [16].

Critical Cautions and Modern Applications

Researchers must heed key cautions. Probit analysis is not a universal solution; some dose-response relationships are not adequately described by a Gaussian sigmoid [12]. It is designed for binomial data only; continuous response data (e.g., enzyme activity, percent body weight change) require different regression methods [12]. For complex analyses like comparing relative potencies of multiple compounds, expert statistical guidance is recommended [12].

Beyond traditional toxicology, probit analysis has found a vital modern application in clinical diagnostics, particularly for determining the Limit of Detection (LoD) of qualitative tests (e.g., for viruses like SARS-CoV-2) [11]. Here, the "dose" is the analyte concentration, and the "response" is a positive test result. The concentration at which 95% of replicates test positive (C95) is estimated via probit regression and reported as the LoD, following guidelines such as CLSI EP17-A2 [11] [18].

Figure 2: The Logic of Probit Transformation: From Sigmoid to Straight Line.

Probit analysis remains an indispensable statistical tool for transforming the sigmoid dose-response curve into a tractable linear model, enabling the precise calculation of the LD50 and other critical quantiles. Its proper application requires stringent experimental design, appropriate binomial data, and rigorous validation of model fit. While its roots are in toxicology, the core mathematical principle of linearizing a cumulative distribution function ensures its continued relevance in modern scientific fields, from eco-toxicology to the validation of cutting-edge diagnostic tests. Mastery of this technique equips researchers with a powerful method for quantifying biological potency and risk.

Mathematical Foundation and Conceptual Framework

The probit model is a specialized type of regression analysis designed for binary outcome variables (e.g., alive/dead, success/failure) [21]. Its core purpose is to estimate the probability that an observation with given characteristics falls into one of the two possible categories [21]. The model is specified as:

( P(Y = 1 | X) = \Phi(\alpha + \beta X) )

where Φ represents the cumulative distribution function (CDF) of the standard normal distribution [21] [22]. The term (α + βX) is a linear predictor, but the response probability is a non-linear function of this predictor.

A powerful way to motivate this model is through the latent variable framework. Suppose an unobserved, continuous latent variable Y* determines the binary outcome Y [21]. This latent variable is modeled as:

( Y^* = \alpha + \beta X + \epsilon )

where ε ~ N(0, 1). The observed binary outcome Y is then defined as:

( Y = \begin{cases} 1 & \text{if } Y^* > 0 \\ 0 & \text{otherwise} \end{cases} ) [21]

Consequently, the probability that Y=1 is:

( P(Y=1|X) = P(\alpha + \beta X + \epsilon > 0) = P(\epsilon > -\alpha - \beta X) = \Phi(\alpha + \beta X) ) [21]

This formulation directly links the linear model for the latent variable to the probit function for the observed binary outcome.
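
The latent-variable argument can be checked numerically. The sketch below uses only the Python standard library and hypothetical values for α, β, and X: it draws ε from N(0, 1), counts how often Y* exceeds zero, and compares that frequency with Φ(α + βX).

```python
import random
from statistics import NormalDist

def simulate_response_rate(alpha, beta, x, n_draws=100_000, seed=0):
    """Fraction of draws where Y* = alpha + beta*x + eps exceeds 0, with eps ~ N(0, 1)."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n_draws)
               if alpha + beta * x + rng.gauss(0.0, 1.0) > 0)
    return hits / n_draws

# Hypothetical parameter values chosen only for illustration.
alpha, beta, x = -1.0, 0.8, 2.0
simulated = simulate_response_rate(alpha, beta, x)
theoretical = NormalDist().cdf(alpha + beta * x)  # Phi(alpha + beta * x)
print(f"simulated={simulated:.3f}  theoretical={theoretical:.3f}")
```

The two numbers should agree up to Monte Carlo noise, which shrinks as `n_draws` grows.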

The probit transformation itself is the inverse of this process. It converts an observed probability p into a "probit" or a z-score from the standard normal distribution [23] [24]:

( \text{probit}(p) = \Phi^{-1}(p) )

In historical toxicological work, a value of 5 was often added to the probit (probit = 5 + z) to avoid working with negative numbers [24]. This transformation is key to linearizing a sigmoidal dose-response relationship: by converting mortality proportions to probits and doses to logarithms, the relationship becomes approximately linear (Y = α + βX), enabling analysis by linear regression [16] [25].
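
As an illustration of the transformation, here is a small sketch built on `statistics.NormalDist` from the Python standard library. The helper name `probit` and its `add_five` flag are hypothetical conveniences, not an established API.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # mean 0, standard deviation 1

def probit(p, add_five=True):
    """Convert a proportion 0 < p < 1 to a probit; add_five applies the historical +5 offset."""
    if not 0.0 < p < 1.0:
        raise ValueError("probit is only finite for 0 < p < 1")
    z = _STD_NORMAL.inv_cdf(p)  # inverse of the standard normal CDF
    return z + 5.0 if add_five else z

for p in (0.10, 0.50, 0.90):
    print(p, round(probit(p), 3))
```

Because the normal distribution is symmetric, the probits of p and 1 - p are mirror images around 5 (or around 0 without the offset).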

Application in LD50 Calculation: Protocols and Procedures

Probit analysis is the standard parametric method for calculating the median lethal dose (LD50) or concentration (LC50) from dose-response bioassay data [16]. The following protocol details the steps from experimental design to final calculation.

Experimental Design and Data Collection Protocol

A valid probit analysis for LD50 determination requires careful experimental design.

- Test Organisms & Grouping: Use healthy, genetically similar organisms of a defined life stage. Randomly assign individuals to dose groups and a control group [16].

- Dose Selection: Administer at least 5-6 geometrically spaced doses (e.g., doubling concentrations) expected to produce mortality rates between 10% and 90%. Include a negative control (vehicle only) [25].

- Replication: Each dose group must contain an adequate number of organisms (typically 20-100, depending on the organism) to reliably estimate the proportion affected [16].

- Exposure & Observation: Under standardized conditions (temperature, humidity), expose groups for a specified duration. Record the number of organisms showing the defined adverse effect (e.g., death) in each group after the observation period [16].

Data Preparation and Transformation Protocol

Raw mortality counts must be transformed for linear regression.

- Calculate Observed Proportion (p): For each dose group, ( p = \frac{\text{Number Dead}}{\text{Total in Group}} ).

- Apply Control Mortality Correction: If control group mortality (c) exceeds a threshold (e.g., 10%), correct proportions using Abbott's or Schneider-Orelli's formula [25]: ( p_{\text{corrected}} = \frac{p - c}{1 - c} )

- Transform Dose: Convert administered doses to log10(dose) (X-axis variable) [25].

- Transform Proportion to Empirical Probit (Y): Convert each corrected proportion p to an empirical probit value Y. This can be done using statistical tables or software functions: Y = Φ⁻¹(p) [23] [24]. For manual calculation, Y = 5 + normsinv(p) in spreadsheet software provides the traditional probit value [11].
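
The preparation steps can be sketched in a few lines of Python. The counts below are invented for illustration, the helper names are hypothetical, and flooring the corrected proportion at zero is an assumption made here to keep it a valid proportion.

```python
import math
from statistics import NormalDist

def abbott_correct(p, c):
    """Abbott's formula (p - c) / (1 - c), floored at 0 (the floor is an assumption)."""
    return max(0.0, (p - c) / (1.0 - c))

def prepare_rows(doses, dead, totals, control_mortality):
    """Return (log10 dose, corrected proportion, empirical probit or None) per dose group."""
    rows = []
    for dose, r, n in zip(doses, dead, totals):
        p = abbott_correct(r / n, control_mortality)
        # Empirical probits are undefined at p = 0 or 1, so those are reported as None.
        y = 5.0 + NormalDist().inv_cdf(p) if 0.0 < p < 1.0 else None
        rows.append((math.log10(dose), p, y))
    return rows

# Hypothetical bioassay counts, invented for illustration only.
rows = prepare_rows(doses=[10, 20, 40, 80], dead=[4, 11, 22, 30],
                    totals=[30, 30, 30, 30], control_mortality=0.10)
for row in rows:
    print(row)
```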

Computational Analysis Protocol for LD50

The core analysis involves iterative weighted least-squares regression.

- Initial Regression: Perform a simple linear regression of Empirical Probits (Y) on log10(dose) (X) to obtain an initial intercept (α) and slope (β).

- Calculate Expected Probits (Ŷ): For each dose, compute ( Ŷ = \alpha + \beta \times \log10(\text{dose}) ).

- Calculate Weighting Coefficients (w): The weight for each data point is critical for handling the non-constant variance of binomial data. It is calculated as [25]:

( w = \frac{Z^2}{P \times Q} )

where:

Z is the ordinate (height) of the standard normal distribution at the expected probit Ŷ; P is the expected probability corresponding to Ŷ (P = Φ(Ŷ - 5)); and Q = 1 - P.

- Iterative Weighted Regression: Perform a weighted least squares regression using the weights w. This yields new, more precise estimates for α and β [21] [25].

- Iterate to Convergence: Recalculate expected probits and weights using the new coefficients. Repeat the weighted regression until the parameter estimates stabilize (converge).

- Calculate LD50 and Confidence Intervals:

- LD50: The log10(dose) at which the expected probit Ŷ = 5 (corresponding to 50% mortality). Solve the final regression equation: ( \log_{10}(\text{LD50}) = (5 - \alpha) / \beta ). The antilog gives the LD50 [25].

- Standard Error and CI: The standard error of the log10(LD50) is calculated from the weighted regression variance-covariance matrix. The approximate 95% fiducial confidence limits are [25]: ( \text{Antilog} [ \log_{10}(\text{LD50}) \pm 1.96 \times \text{SE}(\log_{10}(\text{LD50})) ] )

- Goodness-of-Fit Test: Assess model fit using a Chi-square test comparing observed versus expected numbers of affected organisms across doses. A non-significant p-value indicates an adequate fit [25].
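
To make the weighting step concrete, the sketch below evaluates w = Z²/(P × Q) for a given expected probit on the +5 scale. The function name is hypothetical; the calculation follows the definitions of Z, P, and Q given in the protocol.

```python
import math
from statistics import NormalDist

_STD_NORMAL = NormalDist()

def weighting_coefficient(expected_probit):
    """w = Z^2 / (P * Q) for an expected probit expressed on the +5 scale."""
    z_score = expected_probit - 5.0
    Z = _STD_NORMAL.pdf(z_score)  # ordinate (height) of the standard normal curve
    P = _STD_NORMAL.cdf(z_score)  # expected proportion responding
    Q = 1.0 - P
    return Z * Z / (P * Q)

for probit_value in (3.0, 4.0, 5.0, 6.0, 7.0):
    print(probit_value, round(weighting_coefficient(probit_value), 4))
```

The coefficient peaks at a probit of 5 and falls off symmetrically, which is why dose groups near 50% mortality carry the most information about the LD50.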

Table 1: Key Steps in Computational Probit Analysis for LD50 [16] [25]

| Step | Action | Purpose | Output |

|---|---|---|---|

| 1. Transformation | Convert dose to log10, proportion to probit. | Linearize sigmoidal dose-response curve. | Linear coordinates (X, Y). |

| 2. Initial Fit | Simple linear regression. | Obtain starting estimates for parameters. | Initial α, β. |

| 3. Weight Calculation | Compute weighting coefficient w for each point. | Account for binomial variance, giving more weight to precise points. | Weights (w). |

| 4. Weighted Regression | Perform weighted least squares regression. | Obtain efficient, minimum-variance parameter estimates. | Refined α, β. |

| 5. Iteration | Repeat steps 3-4 until convergence. | Achieve final stable parameter estimates. | Final α, β. |

| 6. LD50 Estimation | Solve 5 = α + β*log10(Dose). | Calculate median lethal dose. | LD50 point estimate. |

| 7. Uncertainty Quantification | Calculate standard error from final model. | Establish confidence in the LD50 estimate. | 95% Fiducial Limits. |

Comparative Analysis with Alternative Models

While probit is standard, other models are applicable to binary dose-response data. The choice depends on the underlying distribution of tolerance within the test population [16].

Table 2: Comparison of Binary Dose-Response Models [16] [22]

| Feature | Probit Model | Logit Model | Trimmed Spearman-Karber |

|---|---|---|---|

| Mathematical Foundation | Based on cumulative standard normal distribution. | Based on cumulative logistic distribution. | Non-parametric method; does not assume a specific distribution. |

| Link Function | ( \Phi^{-1}(p) = \alpha + \beta X ) | ( \ln(p/(1-p)) = \alpha + \beta X ) | Not applicable. |

| Assumption | Population tolerance follows a log-normal distribution. | Population tolerance follows a log-logistic distribution. | Minimal; only requires monotonic dose-response. |

| Primary Use Case | Standard toxicology, especially when tolerances are normally distributed. | Widely used in epidemiology and general statistics; tails are slightly heavier than normal. | When data does not fit parametric models or responses are not normally distributed. |

| Output | LD50 with confidence intervals. | LD50 with confidence intervals. | LD50 with confidence intervals. |

| Software/Implementation | Available in most statistical packages (SAS, R, SPSS, specialized tools) [25]. | Available in all standard statistical packages. | Available in ecotoxicology and specific statistical software. |
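
How close the probit and logit curves are in practice can be seen numerically. This sketch compares the cumulative normal with a cumulative logistic rescaled by 1.702, a conventional alignment constant that is introduced here as an assumption and does not come from the cited sources.

```python
import math
from statistics import NormalDist

def probit_response(z):
    """Cumulative standard normal: response probability under the probit model."""
    return NormalDist().cdf(z)

def logit_response(z, scale=1.702):
    """Cumulative logistic; scale=1.702 is a conventional constant aligning it with the normal CDF."""
    return 1.0 / (1.0 + math.exp(-scale * z))

grid = [i / 10 for i in range(-40, 41)]  # z from -4 to 4 in steps of 0.1
max_gap = max(abs(probit_response(z) - logit_response(z)) for z in grid)
print(f"largest gap on [-4, 4]: {max_gap:.4f}")
print("tail at z=-4:", probit_response(-4.0), logit_response(-4.0))
```

The central parts of the curves nearly coincide, while the logistic curve assigns more probability to the extreme tails, consistent with the "heavier tails" entry in the table.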

Advanced Applications and Validation in Research

Beyond classic toxicology, probit analysis is vital in method validation for clinical and analytical laboratories, particularly for determining the Limit of Detection (LoD) for qualitative assays like PCR [11].

Protocol for LoD Determination using Probit Analysis [11]:

- Sample Preparation: Create a series of 5-7 samples with analyte concentrations near the expected LoD, serially diluted in a negative matrix.

- Replicate Testing: Analyze each concentration level a minimum of 20 times (recommended), including negative controls.

- Calculate Hit Rate: For each concentration, compute the proportion of positive results (Detection Probability, Dᵢ).

- Probit Regression: Regress the probit-transformed hit rates against log10(concentration).

- Estimate LoD: The LoD is defined as the concentration corresponding to a detection probability of 0.95. From the fitted model, calculate the concentration where the predicted probit equals 5 + Φ⁻¹(0.95) ≈ 6.645 (using the +5 convention) or Φ⁻¹(0.95) ≈ 1.645 (without it) [11].
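
The final LoD step amounts to inverting the fitted line at the 95% hit rate. A minimal sketch, assuming a probit model without the +5 offset and using invented coefficients (the function name and the values are hypothetical):

```python
import math
from statistics import NormalDist

def concentration_at_hit_rate(intercept, slope, hit_rate=0.95):
    """Invert Phi^-1(hit rate) = intercept + slope * log10(concentration), no +5 offset."""
    z_target = NormalDist().inv_cdf(hit_rate)  # about 1.645 for a 0.95 hit rate
    return 10.0 ** ((z_target - intercept) / slope)

# Hypothetical fitted coefficients, not taken from any real assay.
lod = concentration_at_hit_rate(intercept=-3.0, slope=2.0)

# Round trip: the predicted detection probability at the LoD should be 0.95.
check = NormalDist().cdf(-3.0 + 2.0 * math.log10(lod))
print(f"LoD = {lod:.1f} (concentration units), predicted hit rate = {check:.3f}")
```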

Validation of Disinfestation Treatments: In phytosanitary treatment research, a Probit 9 efficacy standard is often required (99.9968% mortality). To demonstrate this with 95% confidence requires testing approximately 93,600 insects with zero survivors [16]. This extreme level of validation underscores the role of probit analysis in confirming the safety and efficacy of treatments for international trade.
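
The sample size quoted above follows from a short calculation. If the true mortality were only 99.9968%, the chance of seeing no survivors among n independent insects is 0.999968ⁿ, and the requirement is that this chance be at most 5%. The sketch below assumes independence between insects; the function name is hypothetical.

```python
import math

def zero_survivor_sample_size(mortality, confidence=0.95):
    """Smallest n for which zero survivors in n insects supports `mortality` at `confidence`."""
    if not (0.0 < mortality < 1.0 and 0.0 < confidence < 1.0):
        raise ValueError("mortality and confidence must lie strictly between 0 and 1")
    # Chance of zero survivors when true mortality equals `mortality` is mortality**n;
    # require that chance to be no larger than 1 - confidence.
    return math.ceil(math.log(1.0 - confidence) / math.log(mortality))

probit9_n = zero_survivor_sample_size(0.999968)
print(probit9_n)  # on the order of 93,600
```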

Visualizing the Probit Workflow and Transformation

Workflow for LD50 Calculation via Probit Analysis

Probit Transformation Linearizes the Dose-Response Curve

The Researcher's Toolkit: Essential Materials and Reagents

Table 3: Essential Research Toolkit for Probit Analysis [16] [11] [25]

| Category | Item / Solution | Specification / Function |

|---|---|---|

| Statistical Software | R, SAS, SPSS, Stata | Core platforms for performing probit regression, maximum likelihood estimation, and calculating confidence intervals. glm() function in R is commonly used [22]. |

| Specialized Tools | POLO, LeOra Software, EPA BMDS | Specialized suites for dose-response analysis, often including probit, logit, and other models with advanced benchmarking features [25]. |

| Spreadsheet Implementation | Custom Excel Spreadsheet | User-friendly templates implementing Finney's method of iterative weighted regression for calculating LD50/LC50, accessible without advanced software [25]. |

| Laboratory & Data Collection | Test Organisms | Standardized, healthy populations (e.g., Daphnia magna, Oncorhynchus mykiss, specific insect strains) of defined age/size [16]. |

| Laboratory & Data Collection | Test Compound/Vehicle | High-purity analytical standard of the toxicant. Appropriate solvent/vehicle for serial dilution (e.g., acetone, DMSO, water) [16]. |

| Laboratory & Data Collection | Controlled Environment Chambers | For maintaining constant temperature, humidity, and light cycles during exposure to minimize stress-related variability [16]. |

| Reference Materials | Probit Transformation Tables | Historical tables for converting proportions to probits (e.g., Finney's tables), useful for manual calculation or verification [11] [24]. |

| Reference Materials | Standard Operating Procedures (SOPs) | Protocols for acute toxicity testing (e.g., OECD, EPA, ASTM guidelines) ensuring regulatory compliance and reproducibility [16]. |

Within the framework of calculating the median lethal dose (LD₅₀), the selection of an appropriate statistical model is foundational to valid and interpretable results. Probit analysis emerges as the specialized statistical tool for this purpose when the core assumptions of the experimental data and research question align with its mathematical underpinnings [26] [16]. Originally developed by Bliss in 1934 and formalized by Finney, probit analysis was designed to solve the fundamental challenge in toxicology and bioassay: transforming the sigmoidal (S-shaped) relationship between the logarithm of a dose and the probability of a quantal response (e.g., death or survival) into a linear form suitable for regression [27] [26].

The broader thesis of LD₅₀ determination posits that an agent's toxicity can be summarized by the dose required to kill half of a test population. Probit analysis directly serves this thesis by providing a robust method to estimate this dose and its confidence limits, but its appropriateness is conditional [28]. It is specifically indicated when the tolerance distribution of the test subjects to the toxicant is normally distributed—that is, when the individual doses required to elicit the response are distributed symmetrically around a mean [16]. When this core assumption holds, the cumulative distribution of responses follows the cumulative normal distribution, which probit analysis leverages through its transformation of proportions to "probability units" or probits [11]. Consequently, the tool is most powerful and accurate in fields like entomology, pharmacology, and toxicology for acute lethality testing, where the binary outcome aligns with the model's structure and the underlying biological variability often approximates normality on a logarithmic dose scale [27] [16].

Table 1: Core Assumptions of Probit Analysis and Diagnostic Checks

| Assumption | Theoretical Basis | How to Validate | Consequence of Violation |

|---|---|---|---|

| Normally Distributed Tolerance | Individual effective doses are normally distributed, leading to a cumulative normal dose-response curve [16]. | Goodness-of-fit test (e.g., Chi-square); inspect standardized residuals for systematic patterns [28]. | Biased estimates of LD₅₀ and inaccurate confidence limits. |

| Linear Relationship (Log Dose vs. Probit) | The probit transformation linearizes the sigmoidal cumulative normal curve [27] [26]. | Visual inspection of the probit plot; significance test of the regression slope [28]. | Regression model is misspecified; predictions are unreliable. |

| Independent Responses | The outcome for one subject does not influence the outcome for another [28]. | Controlled experimental design; assessing over-dispersion in the goodness-of-fit statistic. | Inflated variance, leading to underestimation of standard errors. |

| Stimulus is Quantifiable | The independent variable (dose/concentration) is known and measured on a continuous scale [26]. | Experimental protocol verification. | Fundamental regression requirement cannot be met. |

Quantitative Comparison: Probit Analysis vs. Alternative Methods

Selecting the correct analytical tool requires a clear understanding of the methodological landscape. While probit analysis is a standard for LD₅₀ calculation, other methods are applicable under different data conditions or assumptions [16]. The choice among probit, logit, and non-parametric methods fundamentally hinges on the distribution of the underlying tolerance and the nature of the data collected.

Logistic regression, or logit analysis, is the most direct alternative, designed for binary outcome data but based on the cumulative logistic distribution [16]. The Spearman-Karber method, particularly the trimmed version, provides a non-parametric alternative that does not assume a specific distribution shape but has stricter data coverage requirements [16]. The relative potency test is a specific application used for comparing two agents under the stringent assumption of parallel dose-response curves [28].

Table 2: Comparison of Statistical Methods for Quantal Bioassay Data

| Method | Key Principle | Data Requirements | Primary Output | Best Used When |

|---|---|---|---|---|

| Probit Analysis | Transforms proportions using the inverse cumulative normal distribution to linearize the relationship [11] [26]. | Multiple dose groups with partial responses (e.g., % kill between 5% and 95%) [11]. | LD₅₀, LD₉₅, slope, and confidence intervals [26]. | The tolerance distribution is assumed or verified to be normal (e.g., standard toxicology assays). |

| Logit Analysis | Transforms proportions using the inverse cumulative logistic distribution [16]. | Same as probit analysis. | LD₅₀, ED₅₀, and odds ratios. | The tolerance distribution has heavier tails than the normal distribution; results are often similar to probit. |

| Trimmed Spearman-Karber | Non-parametric method estimating the mean of the tolerance distribution [16]. | At least one response proportion ≤50% and one ≥50% [16]. | LD₅₀ with confidence interval. | Data do not fit a normal distribution; a distribution-free estimate is preferred. |

| Relative Potency (Parallel Lines) | Compares two probit/logit lines constrained to have the same slope [28]. | Two full dose-response datasets. | Relative potency ratio (e.g., Drug B is X times more potent than Drug A). | The primary question is comparative potency and the dose-response curves are parallel. |
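
For contrast with the parametric methods, the sketch below implements the simpler untrimmed Spearman-Karber estimator, not the trimmed variant listed in the table. It assumes the responses rise monotonically from 0 at the lowest dose to 1 at the highest; the data and function name are hypothetical.

```python
def spearman_karber_log_ld50(log_doses, proportions):
    """Untrimmed Spearman-Karber: sum of (p[i+1] - p[i]) times the midpoint of adjacent log doses."""
    if proportions[0] != 0.0 or proportions[-1] != 1.0:
        raise ValueError("untrimmed estimator needs responses spanning 0 to 1")
    if any(later < earlier for earlier, later in zip(proportions, proportions[1:])):
        raise ValueError("proportions must be non-decreasing")
    return sum((p1 - p0) * (x0 + x1) / 2.0
               for x0, x1, p0, p1 in zip(log_doses, log_doses[1:],
                                         proportions, proportions[1:]))

# Hypothetical, perfectly symmetric responses on a log10 dose scale.
log_ld50 = spearman_karber_log_ld50([0.0, 1.0, 2.0, 3.0, 4.0],
                                    [0.0, 0.25, 0.5, 0.75, 1.0])
print(log_ld50, 10.0 ** log_ld50)
```

The estimate is the mean of the tolerance distribution implied by the observed step increases in response, which is why no distributional shape has to be assumed.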

Detailed Experimental Protocols for LD₅₀ Determination

Protocol 1: Foundational Bioassay for Probit Analysis

This protocol outlines the standard procedure for generating the quantal response data required for probit-based LD₅₀ calculation [27].

Experimental Design & Dose Selection:

- Define a minimum of five dose concentrations, logarithmically spaced where possible. The range should bracket the expected LD₅₀, with the lowest dose yielding a response near 0% (e.g., <10%) and the highest dose yielding a response near 100% (e.g., >90%) [11] [27].

- Include a control group (dose = 0) to account for natural mortality or background response.

- Assign a sufficient number of test subjects (n) to each dose group. A minimum of 20-30 subjects per dose is common for initial assays, though some standardized methods, such as EPA protocols, require more [11]. Replication is critical for robust proportion estimates.

Data Collection:

- Administer the treatment and record the number of subjects exhibiting the defined quantal response (r_i) at each dose level (i) after a specified observation period.

- Record the total number of subjects treated (n_i) at each dose.

Data Preparation for Analysis:

- Calculate the observed proportion responding: ( p^*_i = r_i / n_i ) [28].

- Abbott's Correction: If a response occurs in the control group (natural mortality, c), correct the proportions using Abbott's formula: ( p_i = (p^*_i - c) / (1 - c) ) [27] [28]. This step is crucial for obtaining an unbiased estimate of the treatment effect.

- Prepare a data table with columns for: Dose, log10(Dose), n, r, and corrected p [27].

Protocol 2: Computational LD₅₀ Calculation via Maximum Likelihood Probit Regression

This protocol details the steps to perform the probit analysis, progressing from manual estimation to software implementation [27] [28].

Initial (Empirical) Probit Transformation:

- Convert each corrected proportion ( p_i ) to an empirical probit: ( y_i = 5 + \Phi^{-1}(p_i) ). Proportions of exactly 0 or 1 have no finite probit.

Preliminary Linear Regression:

- Perform a least-squares linear regression of the empirical probits (y) against the log10(dose) (x), excluding any points where p_i was 0 or 1. This yields an initial slope (β₀) and intercept (α₀) [28].

Iterative Maximum Likelihood Fitting (Finney's Method):

- This iterative process refines the estimates [28].

- Step A: Using α₀ and β₀, calculate the expected probit (Y_i) for *all* dose levels: ( Y_i = \alpha_0 + \beta_0 x_i ).

- Step B: Calculate the expected proportion (P_i) for the Abbott-corrected data: ( P_i = \Phi(Y_i - 5) ). The corresponding expected uncorrected proportion, which includes natural mortality c, is ( P_i (1 - c) + c ).

- Step C: Calculate the working probit (y'_i): ( y'_i = Y_i + (p_i - P_i) / Z_i ), where Z_i is the ordinate (height) of the standard normal distribution at the expected probit Y_i, that is, at the z-score Y_i - 5 [28].

- Step D: Calculate a weighting coefficient (w_i) for each dose: ( w_i = Z_i^2 / [ (P_i + c/(1-c)) (1 - P_i) ] ) [28].

- Step E: Perform a weighted least-squares regression of the working probits (y') on log10(dose) (x), using weights ( n_i w_i ). This yields new parameters α₁ and β₁.

- Step F: Use α₁ and β₁ as new starting values and repeat steps A through E. The process iterates until the estimates converge (i.e., the change in the Chi-square statistic between iterations is minimal).

Model Diagnostics & LD₅₀ Calculation:

- Assess the model's goodness-of-fit using a Chi-square test: ( \chi^2 = \sum [ n_i (p_i - P_i)^2 / (P_i (1 - P_i)) ] ) with (k-2) degrees of freedom (k = number of doses) [28]. A non-significant p-value (e.g., >0.05) indicates the model fits the data adequately.

- Calculate the log(LD₅₀) (denoted as m): ( m = (5 - \alpha) / \beta ), where 5 is the probit for a 50% response [27].

- Calculate the variance of m: ( V(m) = [1/\beta^2] [ (1 / \sum n_i w_i) + ( (m - \bar{x})^2 / \sum n_i w_i (x_i - \bar{x})^2 ) ] ), where ( \bar{x} = \sum n_i w_i x_i / \sum n_i w_i ) is the weighted mean log(dose) [27].

- Calculate the 95% confidence interval for LD₅₀: ( \text{Antilog}[ m \pm t_{0.05, df} * \sqrt{V(m)} ] ), where t is the critical value from the t-distribution [27].
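
Steps A through F and the diagnostics above can be sketched in standard-library Python. This is an illustrative outline under stated assumptions, not a validated implementation: natural mortality c is taken as 0, convergence is judged by the change in α and β rather than in the Chi-square statistic, the normal critical value 1.96 stands in for the t value, and the data are invented. All function names are hypothetical.

```python
import math
from statistics import NormalDist

N01 = NormalDist()

def weighted_line(x, y, w):
    """Weighted least squares: intercept, slope, weighted mean of x, Sxx, sum of weights."""
    sw = sum(w)
    xbar = sum(wi * xi for wi, xi in zip(w, x)) / sw
    ybar = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - xbar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - xbar) * (yi - ybar) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return ybar - slope * xbar, slope, xbar, sxx, sw

def finney_probit(log_doses, n, r, max_iter=50, tol=1e-10):
    """Iterated working-probit regression, assuming natural mortality c = 0."""
    p = [ri / ni for ri, ni in zip(r, n)]
    # Starting line: unweighted fit to empirical probits, skipping p = 0 or 1.
    start = [(xi, 5.0 + N01.inv_cdf(pi)) for xi, pi in zip(log_doses, p) if 0.0 < pi < 1.0]
    xs, ys = zip(*start)
    alpha, beta, *_ = weighted_line(xs, ys, [1.0] * len(xs))
    for _ in range(max_iter):
        Y = [alpha + beta * xi for xi in log_doses]                 # Step A
        P = [N01.cdf(Yi - 5.0) for Yi in Y]                         # Step B
        Z = [N01.pdf(Yi - 5.0) for Yi in Y]
        work = [Yi + (pi - Pi) / Zi for Yi, pi, Pi, Zi in zip(Y, p, P, Z)]       # Step C
        nw = [ni * Zi ** 2 / (Pi * (1.0 - Pi)) for ni, Zi, Pi in zip(n, Z, P)]   # Step D
        new_alpha, new_beta, xbar, sxx, snw = weighted_line(log_doses, work, nw)  # Step E
        converged = abs(new_alpha - alpha) + abs(new_beta - beta) < tol
        alpha, beta = new_alpha, new_beta                           # Step F
        if converged:
            break
    P = [N01.cdf(alpha + beta * xi - 5.0) for xi in log_doses]
    chi2 = sum(ni * (pi - Pi) ** 2 / (Pi * (1.0 - Pi)) for ni, pi, Pi in zip(n, p, P))
    m = (5.0 - alpha) / beta
    var_m = (1.0 / beta ** 2) * (1.0 / snw + (m - xbar) ** 2 / sxx)
    half = 1.96 * math.sqrt(var_m)  # assumption: normal critical value instead of t
    return {"alpha": alpha, "beta": beta, "chi2": chi2, "df": len(n) - 2,
            "ld50": 10.0 ** m, "ci95": (10.0 ** (m - half), 10.0 ** (m + half))}

# Hypothetical data: five doubling doses, 30 subjects each, responses symmetric about 40.
result = finney_probit([math.log10(d) for d in (10, 20, 40, 80, 160)],
                       [30] * 5, [3, 8, 15, 22, 27])
print(result)
```

Because the invented responses are symmetric around the middle dose on the log scale, the estimated LD₅₀ lands on that dose, which gives a quick sanity check of the arithmetic.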

Visual Workflow: From Experiment to LD₅₀ Estimate

Workflow for Probit Analysis in LD₅₀ Determination

The Researcher's Toolkit: Essential Reagents & Software

Table 3: Essential Research Toolkit for Probit Bioassay & Analysis

| Category | Item / Solution | Specification / Example | Primary Function in Protocol |

|---|---|---|---|

| Biological Materials | Test Organisms | Species/strain with defined age, weight, and health status (e.g., Drosophila, lab mice, mosquito larvae). | Standardized biological unit for dose-response. |

| Biological Materials | Negative Control Matrix | Vehicle solution (e.g., saline, acetone, water) without toxicant. | Administers control dose; assesses natural mortality. |

| Biological Materials | Positive Control Substance | A reference toxicant with known LD₅₀ (e.g., potassium dichromate). | Validates assay system performance and organism sensitivity. |

| Test Substances & Prep | Serial Dilution Series | Log-spaced concentrations of the analyte in appropriate solvent [11]. | Creates the range of doses needed to define the sigmoidal curve. |

| Test Substances & Prep | Analytical Grade Solvents | DMSO, ethanol, distilled water, etc. | Dissolves and dilutes test substance without inducing toxicity. |

| Software for Analysis | Statistical Packages | SPSS, SAS, R (glm with probit link), Polo-Plus [26] [16] [28]. | Performs iterative maximum likelihood probit regression efficiently. |

| Software for Analysis | General Analysis Tools | Microsoft Excel (with NORM.S.INV, etc., for manual method) [11] [28]. | Useful for data organization, initial calculations, and implementing custom scripts. |

| Reference Materials | Statistical Tables | Finney's tables of probits, weighting coefficients, and empirical probits [11] [27]. | Legacy resource for manual transformation and calculation. |

| Reference Materials | Standard Protocols | CLSI EP17-A2, OECD Test Guidelines for chemical toxicity [11]. | Guides experimental design, dose selection, and replicate numbers. |

Step-by-Step Guide: Conducting Probit Analysis for LD50 Determination

The accurate determination of the median lethal dose (LD50)—the dose required to kill half of a test population—is a cornerstone of toxicological research and drug development. This parameter is critical for understanding the safety profile of chemical compounds, pharmaceuticals, and agrochemicals. The reliability of an LD50 estimate is not merely a function of statistical calculation but is fundamentally dependent on the initial experimental design. This includes the strategic selection of dose levels, the appropriate number and type of subjects, and the proper implementation of controls [12].

Probit analysis is the preferred statistical method for analyzing quantal (all-or-nothing) dose-response data, such as death or a specific toxic effect, to derive the LD50 and its confidence intervals [12] [16]. It operates on the principle that individual tolerances to a substance follow a log-normal distribution. A well-designed experiment provides the high-quality, binomial response data (e.g., number dead vs. number tested at each dose) that probit analysis requires for a robust and reliable fit [29] [18]. Poor design choices can lead to heterogeneous data, inadequate model fitting, and ultimately, unreliable or misleading potency estimates that compromise scientific validity and safety assessments.

This protocol details the fundamental components of experimental design for classical dose-response studies aimed at calculating LD50 via probit analysis. It integrates statistical theory with practical laboratory application, providing a structured framework for researchers.

Theoretical Foundation of Probit Analysis for Dose-Response

Probit analysis is a specialized form of regression analysis designed for binomial response variables. It linearizes the sigmoidal (S-shaped) relationship typically observed when the proportion of responding subjects is plotted against the logarithm of the dose [12] [16].

The core transformation converts observed proportions (p) into "probability units" or probits, which correspond to the inverse of the cumulative standard normal distribution (the z-score). The standard transformation is: Probit(p) = Φ⁻¹(p) + 5, where the addition of 5 is a historical convention to avoid negative values [12]. The analysis then fits a linear model: Probit(p) = a + b × Log(Dose) where a is the intercept and b is the slope, which represents the steepness of the dose-response curve [18].

The method relies on maximum likelihood estimation (MLE) rather than ordinary least squares, as MLE is more appropriate for binomial-distributed data [29]. The output provides an estimate of the LD50 (the dose corresponding to a probit of 5, or a 50% response rate) along with its confidence intervals, and a statistical test for goodness-of-fit (often a chi-square test) to assess whether the data adequately conform to the probit model [12] [18].
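
To show what maximum likelihood estimation optimizes here, the sketch below writes out the grouped binomial log-likelihood for the model Probit(p) = a + b × Log(Dose), with constant terms dropped. The data and candidate coefficients are hypothetical; a real fit would search for the a and b that maximize this function.

```python
import math
from statistics import NormalDist

def probit_log_likelihood(a, b, log_doses, n, r):
    """Binomial log-likelihood (constants dropped) for Probit(p) = a + b * log10(dose)."""
    total = 0.0
    for x, ni, ri in zip(log_doses, n, r):
        P = NormalDist().cdf(a + b * x - 5.0)  # undo the +5 offset before applying Phi
        total += ri * math.log(P) + (ni - ri) * math.log(1.0 - P)
    return total

# Hypothetical grouped data: log10 dose, number tested, number responding.
log_doses, n, r = [1.0, 1.5, 2.0], [20, 20, 20], [2, 10, 18]
plausible = probit_log_likelihood(1.25, 2.5, log_doses, n, r)  # line passing through 50% at x = 1.5
flat = probit_log_likelihood(5.0, 0.0, log_doses, n, r)        # no dose effect at all
print(plausible, flat)
```

The line that tracks the observed proportions scores a higher log-likelihood than the flat line, which is the criterion MLE uses to prefer one set of coefficients over another.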

Experimental Protocol for LD50 Determination

Dose Selection and Preparation

The selection of dose levels is the most critical step in defining the experimental scope and ensuring an accurate probit fit.

Table 1: Dose Selection Criteria and Recommendations

| Criterion | Objective | Practical Recommendation |

|---|---|---|

| Number of Doses | To adequately define the sigmoid curve. | A minimum of 5 dose levels, plus a negative control. 6-8 levels are preferred for robust regression [12]. |

| Range | To encompass the full range from 0% to 100% response. | Preliminary range-finding studies are essential. The final experiment should include doses expected to cause ≈10% and ≈90% mortality. |

| Spacing | To ensure even distribution of information across the curve. | Use a geometric progression (e.g., doubling doses: 10, 20, 40, 80 mg/kg). Logarithmic spacing creates evenly spaced points on the log-dose axis. |

| Vehicle & Formulation | To ensure accurate and consistent delivery of the test agent. | The test substance must be soluble or homogenously suspendable in a vehicle (e.g., saline, corn oil, 0.5% carboxymethylcellulose). The formulation must be stable for the duration of the study. |
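
The spacing recommendation is easy to generate programmatically. A minimal sketch with a hypothetical helper name:

```python
import math

def geometric_doses(lowest, ratio, count):
    """Return `count` doses starting at `lowest`, each `ratio` times the previous one."""
    if lowest <= 0 or ratio <= 1 or count < 1:
        raise ValueError("need lowest > 0, ratio > 1, count >= 1")
    return [lowest * ratio ** i for i in range(count)]

doses = geometric_doses(10, 2, 4)  # the doubling series from the table: 10, 20, 40, 80
log_steps = [math.log10(b) - math.log10(a) for a, b in zip(doses, doses[1:])]
print(doses, [round(step, 4) for step in log_steps])
```

Every step on the log10 axis equals log10 of the ratio, which is what "evenly spaced points on the log-dose axis" means in practice.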

Subject Selection and Group Allocation

The test subjects must be appropriate for the research question and handled consistently to minimize variability.

Table 2: Subject Selection and Group Allocation Protocol

| Factor | Consideration | Standardization Protocol |

|---|---|---|

| Species & Strain | Relevance to research question and genetic uniformity. | Use a defined, healthy strain (e.g., Sprague-Dawley rats, CD-1 mice, Drosophila melanogaster). Justify choice based on metabolic or physiological relevance. |

| Age, Weight, & Sex | To reduce within-group variability in response. | Use subjects from a narrow age/weight range. Conduct separate assays for males and females, or stratify by sex if pooling is justified. |

| Health Status | To ensure responses are due to the test agent, not underlying illness. | Acquire subjects from reputable suppliers. Allow for a minimum 5-7 day acclimatization period in the test facility under standard conditions. |

| Randomization | To avoid systematic bias in group assignment. | Randomly assign each subject to a dose group or control group after acclimatization, using a computer-generated random number sequence. |

| Group Size (n) | To achieve sufficient statistical power and precision for the LD50 estimate. | A common starting point is n=8-12 subjects per dose group. Larger groups (n=20+) narrow confidence intervals but increase animal use [11]. |
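
Computer-generated randomization, as recommended in the table, might look like the following sketch. The group labels, subject numbering, and seed are hypothetical; recording the seed is one way to make the allocation reproducible for audit.

```python
import random

def randomize_to_groups(subject_ids, group_labels, seed=None):
    """Shuffle subjects, then deal them round-robin so group sizes differ by at most one."""
    rng = random.Random(seed)
    shuffled = list(subject_ids)
    rng.shuffle(shuffled)
    allocation = {label: [] for label in group_labels}
    for position, subject in enumerate(shuffled):
        allocation[group_labels[position % len(group_labels)]].append(subject)
    return allocation

# Hypothetical study: 60 animals, a vehicle control plus five dose groups.
groups = ["control", "10 mg/kg", "20 mg/kg", "40 mg/kg", "80 mg/kg", "160 mg/kg"]
allocation = randomize_to_groups(range(1, 61), groups, seed=2024)
for label, members in allocation.items():
    print(label, sorted(members))
```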

Control Groups and Blinding

Controls are non-negotiable for validating the experimental results.

- Negative (Vehicle) Control: Subjects receive only the vehicle in the same volume and via the same route as dosed groups. This controls for effects of the administration procedure and the vehicle itself.

- Positive Control (Optional but Recommended): For some established testing frameworks (e.g., insecticide testing), a group may receive a reference compound with a known LD50 to verify the sensitivity and performance of the assay system.

- Handling & Sham Controls: If the administration route is invasive (e.g., injection, gavage), a sham group that undergoes the handling and procedure without any substance may be necessary.

- Blinding: The personnel responsible for observing endpoints (e.g., mortality, clinical signs) and recording data should be blinded to the group allocation of each subject to prevent observational bias.

The Scientist's Toolkit: Essential Reagent Solutions

Table 3: Key Research Reagent Solutions for Dose-Response Studies

| Item | Function | Key Considerations |

|---|---|---|

| Test Article | The active substance whose toxicity is being quantified. | Characterize purity, stability, and solubility. Store under appropriate conditions (e.g., -20°C, desiccated, protected from light). |

| Vehicle/Solvent | Medium for dissolving or suspending the test article for administration. | Must be non-toxic at the administered volumes. Common examples: physiological saline, 0.5-1% sodium carboxymethylcellulose (CMC), corn oil, dimethyl sulfoxide (DMSO) with caution. |

| Formulation Matrix | Simulates the final product form (e.g., for agrochemicals or pharmaceuticals). | May include emulsifiers, stabilizers, or excipients. These components must be accounted for in control formulations. |

| Analytical Standard | A certified reference material of the test article. | Used to verify the concentration and purity of dosing solutions via HPLC, GC-MS, or other analytical methods. |

| Clinical Chemistry Assays | For supplemental toxicological data (e.g., liver/kidney injury panels). | Kits for measuring biomarkers like ALT, AST, BUN, and creatinine in serum/plasma can provide mechanistic insight. |

Experimental Workflow for an LD50 Study

Statistical Analysis Protocol: From Data to LD50

Following the in-life phase, data is compiled for probit analysis. The grouped data format requires three variables per dose level: the dose, the total number of subjects tested (n), and the number responding (r) [12] [18].

Step 1: Data Preparation and Transformation

- Tabulate data: Dose, n (tested), r (responders).

- Calculate the observed proportion responding: p = r / n.

- Most software (e.g., MedCalc, SAS, R, specialized scripts) will automatically perform the probit transformation and, if selected, the log transformation of the dose [18] [30].

Step 2: Model Fitting and Validation

- Fit the probit model using Maximum Likelihood Estimation (MLE). The model is: Probit(p) = a + b × Log₁₀(Dose), where a is the intercept and b is the slope [18].

- Assess goodness-of-fit using the Chi-square heterogeneity test. A non-significant p-value (e.g., p > 0.05) indicates the data do not deviate significantly from the fitted probit model, supporting its use [12].

- If heterogeneity is significant (p < 0.05), investigate outliers or consider using a heterogeneity factor to adjust confidence intervals, or explore alternative models (e.g., logit, complementary log-log) [16] [30].
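
The fitting and goodness-of-fit steps above can be sketched in plain Python. This is a minimal illustration, not the algorithm used by any particular package: it assumes Fisher scoring as the MLE routine, a Pearson chi-square as the heterogeneity statistic, and the function name `fit_probit_mle` and the example counts are hypothetical.

```python
from math import log10
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal: mean 0, sd 1


def fit_probit_mle(doses, n_tested, n_dead, max_iter=100, tol=1e-10):
    """Fit P(death) = Phi(alpha + beta * log10(dose)) by Fisher scoring.

    Returns (a, b, chi_square, df), where a = alpha + 5 and b = beta match
    the classical form Probit(p) = a + b * log10(dose).
    """
    x = [log10(d) for d in doses]
    alpha, beta = 0.0, 0.0  # start from p = 0.5 at every dose
    for _ in range(max_iter):
        u0 = u1 = i00 = i01 = i11 = 0.0
        for xi, n, r in zip(x, n_tested, n_dead):
            eta = alpha + beta * xi
            p = min(max(STD_NORMAL.cdf(eta), 1e-12), 1.0 - 1e-12)
            z = STD_NORMAL.pdf(eta)
            score = (r - n * p) * z / (p * (1.0 - p))   # gradient term
            weight = n * z * z / (p * (1.0 - p))        # information term
            u0 += score
            u1 += score * xi
            i00 += weight
            i01 += weight * xi
            i11 += weight * xi * xi
        # Solve the 2x2 system (information matrix) * step = score vector
        det = i00 * i11 - i01 * i01
        step_alpha = (i11 * u0 - i01 * u1) / det
        step_beta = (i00 * u1 - i01 * u0) / det
        alpha += step_alpha
        beta += step_beta
        if abs(step_alpha) + abs(step_beta) < tol:
            break

    # Pearson chi-square heterogeneity statistic on (groups - 2) df
    chi_square = 0.0
    for xi, n, r in zip(x, n_tested, n_dead):
        p = STD_NORMAL.cdf(alpha + beta * xi)
        chi_square += (r - n * p) ** 2 / (n * p * (1.0 - p))
    df = len(x) - 2
    return alpha + 5.0, beta, chi_square, df


# Hypothetical grouped data: dose (mg/kg), subjects tested, deaths
a, b, chi_square, df = fit_probit_mle(
    doses=[10, 32, 100, 320, 1000],
    n_tested=[10, 10, 10, 10, 10],
    n_dead=[1, 3, 5, 8, 9],
)
ld50 = 10 ** ((5.0 - a) / b)
print(f"a = {a:.3f}, b = {b:.3f}, LD50 = {ld50:.1f} mg/kg")
print(f"chi-square = {chi_square:.3f} on {df} df")
```

The chi-square value is compared with the critical value for the stated degrees of freedom (7.815 at the 5% level for 3 df). Dividing the statistic by its degrees of freedom gives the heterogeneity factor mentioned above.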

Step 3: LD50 Calculation and Reporting

- The LD50 is the dose corresponding to a probit value of 5 (50% response). For the model Probit = a + b × Log₁₀(Dose), LD50 = 10^[(5 - a) / b] [18]. Software that reports probits without the historical +5 offset uses a target of 0 instead, giving LD50 = 10^(-a / b).

- Report the LD50 with its 95% confidence interval (CI). The CI, not just the point estimate, is critical for communicating the precision of the estimate.

- The slope (b) of the probit line should also be reported, as it indicates the steepness of the dose-response relationship. A steeper slope suggests less variability in individual tolerance.
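
As a small illustration of the back-calculation in Step 3, the sketch below inverts the fitted line for any response fraction. The helper name `dose_for_response` and the fitted values `a_hat` and `b_hat` are hypothetical, and the +5 probit offset is assumed.

```python
from statistics import NormalDist


def dose_for_response(a, b, fraction=0.5):
    """Dose at which Probit = a + b * log10(dose) reaches the given fraction."""
    target_probit = 5.0 + NormalDist().inv_cdf(fraction)  # 5.0 when fraction = 0.5
    return 10 ** ((target_probit - a) / b)


a_hat, b_hat = 2.46, 1.30  # hypothetical fitted intercept and slope
for fraction in (0.10, 0.50, 0.90):
    dose = dose_for_response(a_hat, b_hat, fraction)
    print(f"LD{int(fraction * 100)} = {dose:.1f} mg/kg")
```

With a larger slope the LD10 and LD90 move closer to the LD50, which is the numerical counterpart of the statement that a steeper slope reflects less variability in individual tolerance.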

Figure: Probit Regression Analysis Workflow

Advanced Considerations and Troubleshooting

- Natural Mortality and Immunity: In some bioassays, control subjects may die naturally or a subset may be immune. Advanced probit procedures allow for the estimation of natural mortality and natural immunity parameters to correct the dose-response curve accordingly [30].

- Parallelism Testing: When comparing the potency of two compounds (e.g., a test article vs. a standard), probit analysis can test if their dose-response curves are parallel (have equal slopes). A significant difference in slopes invalidates a simple potency ratio comparison and requires more complex analysis [16] [30].

- Beyond LD50: Probit analysis can estimate any effective dose level (e.g., LD10, LD90). In diagnostic testing, it is used to determine the Limit of Detection (LoD), defined as the concentration corresponding to a 95% detection probability (often called C95) [11] [18].

- Cautions and Limitations: Probit analysis assumes tolerance is log-normally distributed. If the data systematically deviate from this model, the estimates may be biased. It is designed for quantal (binomial) data and should not be used for continuous data without expert statistical consultation [12] [16]. The method is a tool for well-designed experiments, not a remedy for poor design.
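
For the natural-mortality point above, a common simpler alternative to estimating a natural mortality parameter inside the model is Abbott's formula, which adjusts each observed proportion by the control mortality before analysis. The sketch below shows that swapped-in technique only; the function name `abbott_correct` and the example counts are hypothetical.

```python
def abbott_correct(n_tested, n_dead, control_mortality):
    """Adjust observed mortality proportions for background (control) deaths.

    Abbott's formula: p_adj = (p_obs - c) / (1 - c), floored at 0.
    """
    if not 0.0 <= control_mortality < 1.0:
        raise ValueError("control_mortality must be in [0, 1)")
    adjusted = []
    for n, r in zip(n_tested, n_dead):
        p_obs = r / n
        p_adj = (p_obs - control_mortality) / (1.0 - control_mortality)
        adjusted.append(max(p_adj, 0.0))  # negative values are set to zero
    return adjusted


# Hypothetical example: 10% of control subjects died
print(abbott_correct(n_tested=[20, 20, 20], n_dead=[3, 11, 19],
                     control_mortality=0.10))
```

This adjustment treats the control mortality as known without error, so confidence intervals computed afterwards will tend to be too narrow when the control group is small.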

Data Preparation: Formatting Mortality Data for Analysis

Formatting mortality data for analysis is the foundational step in reliably determining the median lethal dose (LD₅₀), a critical metric in toxicology and drug development. The LD₅₀ is defined as the amount of a substance that, administered in a single dose, causes the death of 50% of a test animal population [1]. This protocol details the systematic process for collecting, structuring, and validating mortality data for subsequent probit analysis, a specialized statistical method designed for quantal (all-or-nothing) response data [29].

Probit analysis is a nonlinear estimation procedure that fits a cumulative normal distribution to dose-response data, overcoming the limitations of linear regression when the dependent variable is dichotomous (e.g., dead/alive) [29]. Its use is mandated in standardized guidelines for determining limits of detection in diagnostic tests, and it remains the gold standard for calculating LD₅₀ values with confidence intervals [11]. The core of the analysis involves transforming observed mortality proportions into "probability units" (probits), which are linearly related to the logarithm of the dose, enabling the calculation of the dose corresponding to 50% mortality [11].

Core Data Structure and Collection Protocol

The integrity of the LD₅₀ calculation is entirely dependent on the quality of the raw experimental data. The following protocol ensures data is collected in a structured, consistent manner suitable for probit analysis.

Experimental Design and Data Collection Table

All mortality data must be recorded at the level of the individual test subject but aggregated for analysis. The following table defines the minimal data structure.

Table 1: Essential Data Structure for LD₅₀ Mortality Trials

| Data Field | Description | Format & Example | Critical Notes |

|---|---|---|---|

| Test Group ID | Unique identifier for each dose/concentration group. | Alphanumeric (e.g., G1, G2, LowDose) | Links individual subjects to a specific dose. |

| Dose/Concentration | The absolute amount or concentration of test substance administered. | Numerical value with unit (e.g., 5.0 mg/kg, 100 ppm) | Must be recorded precisely. Log10 transformation is typically used in analysis [11]. |

| Log10(Dose) | Base-10 logarithm of the dose. | Numerical value (e.g., 0.699 for 5.0 mg/kg) | Calculated field; essential for linearizing the probit model. |

| Subject ID | Unique identifier for each animal or test unit. | Alphanumeric (e.g., A01, Mouse_12) | Ensures traceability and prevents duplicate records. |

| Observation Period | Time from administration to final observation. | Fixed duration (e.g., 14 days, 4 hours) [1] | Must be consistent across all subjects for valid comparison. |

| Mortality Status | Primary dichotomous (quantal) outcome. | Binary: 0 = Alive / 1 = Dead [29] | Must be clearly defined (e.g., confirmed cessation of vital signs). |

| Route of Administration | Method of substance delivery. | Categorical: Oral, Dermal, Intravenous, Inhalation [1] | LD₅₀ values are route-specific and cannot be compared directly across routes [1]. |

| Species/Strain | Biological model used. | Categorical: e.g., Sprague-Dawley rat, CD-1 mouse | Toxicity can vary significantly by species and strain [1]. |

| Sex & Age | Demographics of test subjects. | Categorical & Numerical (e.g., Male, 8 weeks) | Critical for interpreting and comparing results, as sensitivity can vary. |

Step-by-Step Experimental Protocol

- Dose Selection: Prepare a minimum of 4-5 test doses, spaced logarithmically (e.g., half-log intervals), expected to produce mortality between 5% and 95%. Include a vehicle-only control group (0 dose) [11].

- Subject Randomization: Randomly assign a sufficient number of healthy, acclimatized animals to each dose group. OECD guidelines typically recommend a minimum of 5 subjects per sex per dose for initial range-finding, and 8-10 for definitive testing.

- Administration & Monitoring: Administer the test substance uniformly according to the chosen route. Observe all subjects meticulously and consistently throughout the predetermined observation period (commonly 14 days for oral studies) [1]. Record the day of death for time-to-event analysis, if applicable.

- Data Recording: For each subject, record all fields specified in Table 1. Record data directly into a structured electronic system (e.g., spreadsheet or database) to prevent transcription errors.
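
The dose selection and randomization steps above can be sketched as follows. This is a minimal illustration under stated assumptions: the helper names `half_log_doses` and `randomize_subjects`, the subject IDs, and the fixed seed are all hypothetical, and equal group sizes are assumed.

```python
import random


def half_log_doses(lowest_dose, n_levels, step_log10=0.5):
    """Return doses spaced by a constant log10 step (0.5 = half-log)."""
    return [lowest_dose * 10 ** (step_log10 * i) for i in range(n_levels)]


def randomize_subjects(subject_ids, group_labels, seed=None):
    """Shuffle subjects and deal them into equal-sized groups."""
    if len(subject_ids) % len(group_labels) != 0:
        raise ValueError("subjects must divide evenly among groups")
    rng = random.Random(seed)  # a recorded seed makes the allocation reproducible
    shuffled = list(subject_ids)
    rng.shuffle(shuffled)
    size = len(shuffled) // len(group_labels)
    return {
        label: shuffled[i * size:(i + 1) * size]
        for i, label in enumerate(group_labels)
    }


doses = half_log_doses(lowest_dose=10, n_levels=5)
groups = ["control"] + [f"{d:.3g} mg/kg" for d in doses]
subjects = [f"A{i:02d}" for i in range(1, 61)]  # 60 hypothetical animals
allocation = randomize_subjects(subjects, groups, seed=2024)
for label, members in allocation.items():
    print(label, members)
```

Starting at 10 mg/kg, half-log spacing gives approximately 10, 31.6, 100, 316, and 1000 mg/kg, which Table 2 rounds to 10, 32, 100, 320, and 1000.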

Data Cleaning, Validation, and Transformation Protocol

Raw data must be rigorously checked and formatted before analysis.

Data Cleaning and Validation Checklist

- Completeness Check: Verify no missing values for Dose, Subject ID, or Mortality Status.

- Outlier Investigation: Confirm any extreme responses (e.g., death in the lowest dose group, survival in the highest). Review experimental notes for technical errors (e.g., dosing mishap). Do not discard data without justification.

- Dose-Response Consistency: Visually inspect for a monotonic increase in mortality proportion with increasing dose. Inversions can occur due to biological variability but should be noted.

- Control Group Validation: Confirm the mortality rate in the vehicle control group is zero (or within expected background levels).
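
The checklist above lends itself to automation. The sketch below is one possible implementation, assuming subject-level records stored as dictionaries with the hypothetical keys `subject_id`, `dose`, and `dead`; it flags problems for review and never discards data.

```python
def validate_records(records, max_control_mortality=0.0):
    """Return a list of human-readable issues found in subject-level records."""
    required = ("subject_id", "dose", "dead")
    issues, complete, seen_ids = [], [], set()

    # Completeness and duplicate checks
    for index, rec in enumerate(records):
        missing = [key for key in required if rec.get(key) is None]
        if missing:
            issues.append(f"record {index}: missing {', '.join(missing)}")
            continue
        if rec["subject_id"] in seen_ids:
            issues.append(f"record {index}: duplicate subject_id")
            continue
        seen_ids.add(rec["subject_id"])
        complete.append(rec)

    # Aggregate to (subjects, deaths) per dose
    groups = {}
    for rec in complete:
        n, deaths = groups.get(rec["dose"], (0, 0))
        groups[rec["dose"]] = (n + 1, deaths + rec["dead"])

    # Control group validation (dose 0 is taken to be the vehicle control)
    if 0 in groups:
        n, deaths = groups[0]
        if deaths / n > max_control_mortality:
            issues.append(f"control mortality {deaths}/{n} exceeds allowed level")

    # Dose-response consistency: note any inversion between adjacent doses
    treated = sorted((d, deaths / n) for d, (n, deaths) in groups.items() if d > 0)
    for (low_dose, low_p), (high_dose, high_p) in zip(treated, treated[1:]):
        if high_p < low_p:
            issues.append(
                f"inversion: mortality {high_p:.2f} at dose {high_dose} "
                f"is below {low_p:.2f} at dose {low_dose}"
            )
    return issues


example = [
    {"subject_id": "A01", "dose": 0, "dead": 0},
    {"subject_id": "A02", "dose": 10, "dead": 1},
    {"subject_id": "A03", "dose": 10, "dead": 0},
    {"subject_id": "A04", "dose": 100, "dead": 0},
    {"subject_id": "A05", "dose": 100, "dead": None},
]
for issue in validate_records(example):
    print(issue)
```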

Data Aggregation and Probit Transformation

For probit analysis, individual subject data is aggregated by dose group.

Table 2: Aggregated Data Format for Probit Analysis

| Dose (mg/kg) | Log10(Dose) | N (Total Subjects) | r (Number Dead) | Mortality Proportion (p = r/N) | Empirical Probit (Yₚ) |

|---|---|---|---|---|---|

| 10 | 1.000 | 10 | 1 | 0.10 | 3.72 |

| 32 | 1.505 | 10 | 3 | 0.30 | 4.48 |

| 100 | 2.000 | 10 | 5 | 0.50 | 5.00 |

| 320 | 2.505 | 10 | 8 | 0.80 | 5.84 |

| 1000 | 3.000 | 10 | 9 | 0.90 | 6.28 |
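
Going from subject-level records to the grouped layout of Table 2 is a simple count per dose. The sketch below assumes each record is a hypothetical `(dose, dead)` pair with `dead` coded 0 or 1; the function name `aggregate_by_dose` is likewise an assumption.

```python
from collections import defaultdict
from math import log10


def aggregate_by_dose(records):
    """Collapse (dose, dead) pairs into one row per dose group."""
    totals = defaultdict(lambda: [0, 0])  # dose -> [subjects, deaths]
    for dose, dead in records:
        totals[dose][0] += 1
        totals[dose][1] += dead
    table = []
    for dose in sorted(totals):
        n, r = totals[dose]
        table.append({
            "dose": dose,
            # log10 is undefined for the zero-dose control group
            "log10_dose": round(log10(dose), 3) if dose > 0 else None,
            "n": n,
            "r": r,
            "p": r / n,
        })
    return table


# Hypothetical subject-level data: 10 animals at each of two doses
records = [(10, 1)] + [(10, 0)] * 9 + [(32, 1)] * 3 + [(32, 0)] * 7
for row in aggregate_by_dose(records):
    print(row)
```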

Calculating Empirical Probits: The mortality proportion (p) is transformed to an empirical probit (Yₚ).

- Formula: Yₚ = 5 + NORMSINV(p), where NORMSINV is the inverse of the standard normal cumulative distribution function [11].

- Adjustment for 0% or 100% Mortality: These values have undefined probits. Apply a correction (e.g., replace p = 0 with p = 0.5/N, and p = 1 with p = (N-0.5)/N) or use statistical software that handles censored data.
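
The empirical probit calculation can be reproduced with Python's standard library, where `NormalDist().inv_cdf` plays the role of the spreadsheet function NORMSINV. The function name `empirical_probit` is hypothetical, and the 0% and 100% adjustment is the 0.5/N correction described above.

```python
from statistics import NormalDist


def empirical_probit(r, n):
    """Empirical probit for r deaths among n subjects (with 0%/100% correction)."""
    if r == 0:
        p = 0.5 / n          # replaces p = 0
    elif r == n:
        p = (n - 0.5) / n    # replaces p = 1
    else:
        p = r / n
    return 5.0 + NormalDist().inv_cdf(p)


# Deaths per group of 10, as in Table 2
for deaths in (1, 3, 5, 8, 9):
    print(deaths, round(empirical_probit(deaths, 10), 2))
```

Run on the counts in Table 2, this reproduces the tabulated probits of 3.72, 4.48, 5.00, 5.84, and 6.28.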

Analysis Workflow and Visualization

The analysis follows a logical progression from raw data to a calculated LD₅₀ value with confidence intervals. The following diagram illustrates the complete experimental and analytical workflow.

Figure: Workflow for LD50 Determination via Probit Analysis

Statistical Analysis via Probit Model Fitting

The core analysis involves fitting a linear model between the transformed variables.

Probit Model Equation

The fundamental relationship is: Probit (Y) = Intercept + Slope × Log₁₀(Dose) [11]. The LD₅₀ is the dose at which Y = 5 (the probit corresponding to 50%). The formula is derived from the linear model: Log₁₀(LD₅₀) = (5 - Intercept) / Slope

Protocol for Model Fitting and Calculation

- Perform Weighted Regression: Using statistical software (R, SAS, or specialized toxicology packages), fit a linear regression of Empirical Probits (Y) on Log₁₀(Dose). The regression should be weighted to account for the unequal variance of proportions; weights are typically inversely proportional to the variance of the probit.

- Calculate LD₅₀ and Confidence Intervals: From the fitted model parameters, calculate the Log₁₀(LD₅₀) using the formula above, then back-transform to the original dose units. Use Fieller's theorem or the delta method to calculate the 95% confidence interval for the LD₅₀, which is essential for stating the precision of the estimate.

- Assess Model Goodness-of-Fit: Evaluate the model using:

- Chi-square test for heterogeneity: A non-significant result (p > 0.05) indicates the model adequately fits the data.

- Visual inspection of residuals: Plot residuals versus fitted values to check for patterns.
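
The protocol above can be sketched as a single-pass weighted regression. This is a simplified illustration, not the full iterative procedure used by statistical packages: it assumes weights of n·z²/(p(1 − p)) computed from the observed proportions, a delta-method confidence interval with a normal quantile of 1.96, and groups with neither 0% nor 100% mortality. The function name `weighted_probit_line` is hypothetical.

```python
from math import log10, sqrt
from statistics import NormalDist

STD_NORMAL = NormalDist()


def weighted_probit_line(doses, n_tested, n_dead):
    """Weighted regression of empirical probits on log10(dose), with LD50 and CI."""
    x = [log10(d) for d in doses]
    p = [r / n for r, n in zip(n_dead, n_tested)]   # requires 0 < r < n
    y = [5.0 + STD_NORMAL.inv_cdf(pi) for pi in p]  # empirical probits
    # Weight = n * z^2 / (p * (1 - p)), z = normal density at the probit
    w = [n * STD_NORMAL.pdf(yi - 5.0) ** 2 / (pi * (1.0 - pi))
         for n, yi, pi in zip(n_tested, y, p)]

    sum_w = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / sum_w
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / sum_w
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))

    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    log_ld50 = (5.0 - intercept) / slope

    # Delta-method standard error of log10(LD50)
    se = sqrt(1.0 / sum_w + (log_ld50 - x_bar) ** 2 / sxx) / slope
    return {
        "intercept": intercept,
        "slope": slope,
        "ld50": 10 ** log_ld50,
        "ci95": (10 ** (log_ld50 - 1.96 * se), 10 ** (log_ld50 + 1.96 * se)),
    }


fit = weighted_probit_line(
    doses=[10, 32, 100, 320, 1000],
    n_tested=[10, 10, 10, 10, 10],
    n_dead=[1, 3, 5, 8, 9],
)
print(f"Probit = {fit['intercept']:.3f} + {fit['slope']:.3f} x log10(dose)")
print(f"LD50 = {fit['ld50']:.1f} mg/kg, 95% CI {fit['ci95'][0]:.1f} to {fit['ci95'][1]:.1f}")
```

The interval is symmetric on the log scale and therefore asymmetric in dose units. Fieller's theorem is generally preferred when the slope is poorly determined, because the delta method can understate the uncertainty in that case.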

The relationship between data transformation, model fitting, and final output is shown in the following diagram.

Figure: Probit Analysis Data Flow to LD50

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for LD₅₀ Mortality Studies

| Item | Function/Description | Critical Application Notes |

|---|---|---|

| Test Substance (API) | The active pharmaceutical ingredient or chemical of known, high purity (>95-98%) [1]. | The foundation of the study; purity must be documented. Impurities can significantly alter toxicity. |

| Vehicle/Solvent | Agent to dissolve or suspend the test substance (e.g., methylcellulose, saline, corn oil). | Must be non-toxic at administration volumes and compatible with both the test substance and the route of administration. A vehicle control group is mandatory. |

| Reference Toxicant | A standard chemical with a known, stable LD₅₀ (e.g., potassium dichromate for oral studies). | Used for periodic validation of experimental animal strain sensitivity and overall laboratory procedure. |

| Clinical Chemistry & Hematology Assays | Kits for analyzing blood parameters (e.g., liver enzymes, creatinine, CBC). | Not for LD₅₀ calculation itself, but for identifying target organ toxicity and providing mechanistic context to mortality. |

| Statistical Analysis Software | Software capable of probit analysis (e.g., R with ecotoxicology package, SAS PROC PROBIT, EPA BMDS). | Essential for performing the weighted regression, calculating the LD₅₀, and deriving reliable confidence intervals. |

| Animal Diet & Bedding | Standardized, certified feed and housing materials. | Ensures animal health and prevents confounding toxicity from environmental contaminants. |

Data Presentation and Toxicity Classification

The final results should be presented clearly. The calculated LD₅₀ value should always be reported with its 95% confidence interval, route of administration, species, and sex [1]. To contextualize the finding, it can be classified using established toxicity scales.

Table 4: Toxicity Classification Based on Oral LD₅₀ in Rats [1]

| Toxicity Rating | Commonly Used Term | Oral LD₅₀ (mg/kg) | Probable Lethal Dose for 70 kg Human |

|---|---|---|---|

| 1 | Extremely Toxic | ≤ 1 | A taste (< 7 drops) |

| 2 | Highly Toxic | 1 – 50 | 1 teaspoon (4 ml) |

| 3 | Moderately Toxic | 50 – 500 | 1 ounce (30 ml) |

| 4 | Slightly Toxic | 500 – 5000 | 1 pint (600 ml) |

| 5 | Practically Non-toxic | 5000 – 15000 | > 1 quart (1 L) |
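
A lookup against Table 4 can be written as a short function. The sketch below assumes each band includes its upper bound, since the table leaves the shared boundaries (for example, exactly 50 mg/kg) ambiguous, and it returns `None` above 15000 mg/kg because the table defines no class there. The function name `classify_oral_ld50` is hypothetical.

```python
# (upper bound in mg/kg, rating, term) taken from Table 4
TOXICITY_BANDS = [
    (1, 1, "Extremely Toxic"),
    (50, 2, "Highly Toxic"),
    (500, 3, "Moderately Toxic"),
    (5000, 4, "Slightly Toxic"),
    (15000, 5, "Practically Non-toxic"),
]


def classify_oral_ld50(ld50_mg_per_kg):
    """Return (rating, term) for a rat oral LD50, or None above the table range."""
    if ld50_mg_per_kg <= 0:
        raise ValueError("LD50 must be positive")
    for upper, rating, term in TOXICITY_BANDS:
        if ld50_mg_per_kg <= upper:  # assumption: upper bounds are inclusive
            return rating, term
    return None


print(classify_oral_ld50(95))
```

Because the LD₅₀ is an estimate, it is worth classifying both ends of the 95% confidence interval as well; an interval that spans two bands should be reported as such.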