A Complete Guide to Systematic Evidence Maps: Revolutionizing Chemical Risk Assessment for Researchers



Abstract

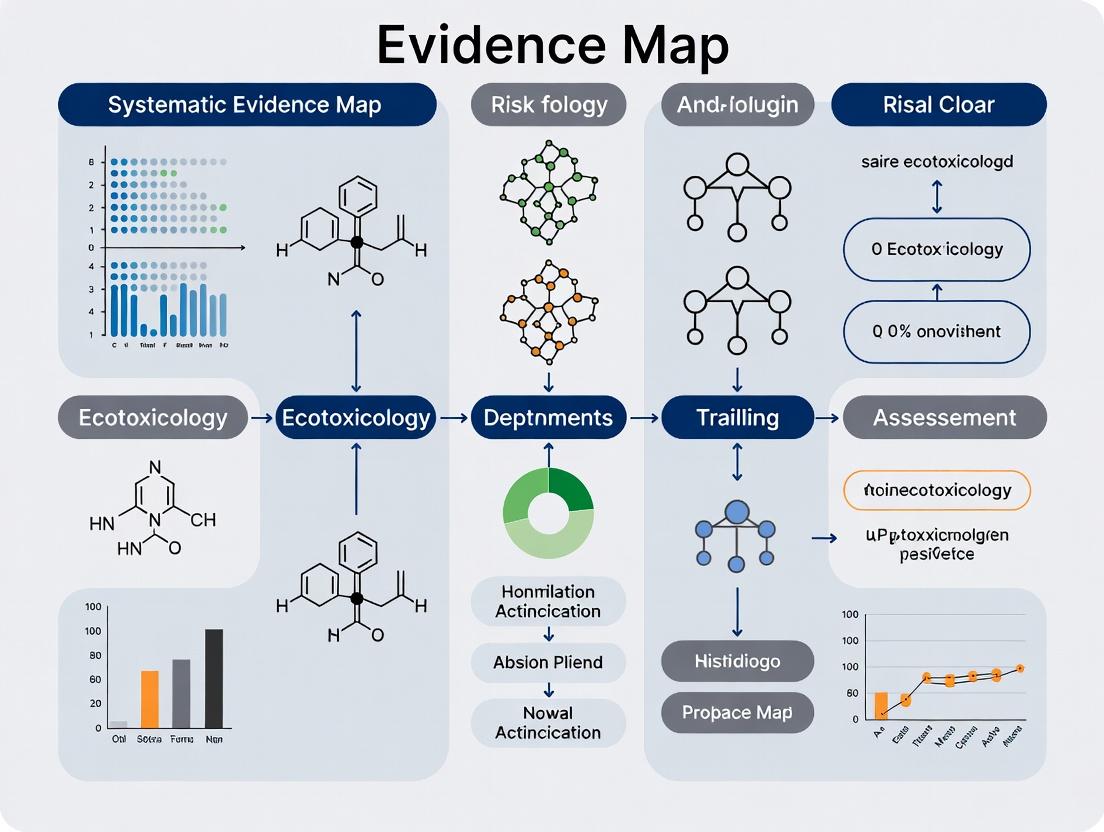

This article provides a comprehensive guide to Systematic Evidence Maps (SEMs), a transformative methodology for organizing and visualizing complex toxicological data in chemical risk assessment. Aimed at researchers, scientists, and drug development professionals, the content explores SEMs from foundational principles to advanced applications. It details how SEMs function as queryable databases to systematically characterize broad evidence bases, identify critical research gaps, and prioritize resources for subsequent systematic reviews or primary studies[citation:1][citation:5]. The article covers core methodological steps, including protocol development and data extraction, and presents real-world case studies from agencies like the US EPA[citation:6][citation:7]. It further addresses common implementation challenges, optimization strategies using knowledge graphs and automation[citation:2][citation:10], and validates SEMs by comparing them with other evidence synthesis tools. The conclusion synthesizes key takeaways and outlines future directions for integrating SEMs into biomedical and clinical research workflows to enhance evidence-based decision-making.

What Are Systematic Evidence Maps? Core Concepts and Evolution in Risk Science

In the field of chemical risk assessment, researchers and regulators are tasked with making critical decisions based on an expansive, complex, and often contradictory body of scientific evidence. Systematic Evidence Maps (SEMs) have emerged as a pivotal methodological tool to navigate this landscape. An SEM is defined as a form of evidence synthesis that offers a structured approach to categorizing and organizing scientific evidence to identify overarching trends and critical knowledge gaps [1]. Unlike a traditional systematic review, which aims to synthesize findings to answer a specific, narrow question, an SEM provides a broad, visual overview of an entire evidence base [2].

The application of SEMs is particularly valuable in environmental health and chemical risk management. Regulatory bodies, including the U.S. Environmental Protection Agency (EPA) and the Agency for Toxic Substances and Disease Registry (ATSDR), now routinely employ SEMs as problem-formulation tools and to support priority-setting in their assessment programs [3] [4]. For example, within the EPA's Integrated Risk Information System (IRIS), SEMs are used to systematically capture and screen literature on chemicals, creating an interactive inventory of research that informs subsequent, more targeted analyses [3]. By mapping the available evidence—including mammalian bioassays, epidemiological studies, and New Approach Methodologies (NAMs)—SEMs help decision-makers understand what is known, where robust evidence exists for systematic review, and where significant gaps warrant new primary research [2]. This "big picture" perspective is essential for efficient and transparent evidence-informed decision-making in chemical policy.

Core Methodology of Systematic Evidence Mapping

The methodological framework for conducting an SEM is rigorous and systematic, sharing several steps with traditional systematic reviews but differing in its objectives and final output. The process is designed to be comprehensive yet manageable for broad topic areas [1] [5]. The following workflow outlines the key stages.

Table: Systematic Evidence Map (SEM) Workflow

Defining Scope and Eligibility (PECO Framework)

The process begins with formulating a clear, often broad, research question. In chemical risk assessment, this is typically structured using the PECO framework (Population, Exposure, Comparator, Outcome) [3]. For an SEM, the PECO criteria are kept intentionally broad to capture a wide swath of potentially relevant evidence. The scope may also define supplemental content to track, such as in vitro studies, pharmacokinetic data, or evidence from New Approach Methodologies (NAMs) [3].

Systematic Search Strategy

A comprehensive and systematic search is conducted across multiple bibliographic databases and other sources. The challenge is balancing comprehensiveness with feasibility due to the broad scope [6]. Search strategies are designed to be sensitive, often requiring collaboration with information specialists. Key databases for environmental health topics typically include PubMed/MEDLINE, Embase, and Web of Science, with subject-specific databases added as needed [6] [7].

Screening and Data Coding

Identified records are screened against the eligibility criteria in multiple phases (title/abstract, then full-text), usually with two independent reviewers to minimize error [3]. Included studies then undergo data coding, where key metadata is extracted. This focuses on study characteristics (e.g., chemical, study type, model system, health outcome) rather than detailed quantitative results [1] [5]. This coded data forms the foundation for the evidence map.
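Agreement between the two independent screeners is often summarized with Cohen's kappa before conflicts go to consensus. The sketch below is illustrative only: it assumes each reviewer's title/abstract decisions are stored as "include"/"exclude" labels keyed by hypothetical record IDs, computes kappa, and lists the records on which the reviewers disagree.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two reviewers' categorical decisions on the same records."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: share of records where both reviewers chose the same label.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement expected from each reviewer's own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(freq_a[k] * freq_b[k] for k in freq_a.keys() | freq_b.keys()) / (n * n)
    if p_e == 1.0:
        return 1.0  # both reviewers used one identical label throughout
    return (p_o - p_e) / (1 - p_e)

def conflicts(decisions_a, decisions_b):
    """Record IDs where the reviewers disagree; these are resolved by consensus."""
    return sorted(rid for rid in decisions_a if decisions_a[rid] != decisions_b[rid])

# Hypothetical screening decisions for five records.
reviewer_1 = {"r1": "include", "r2": "exclude", "r3": "include", "r4": "exclude", "r5": "exclude"}
reviewer_2 = {"r1": "include", "r2": "exclude", "r3": "exclude", "r4": "exclude", "r5": "exclude"}
ids = sorted(reviewer_1)
kappa = cohens_kappa([reviewer_1[i] for i in ids], [reviewer_2[i] for i in ids])
print(round(kappa, 3), conflicts(reviewer_1, reviewer_2))
```

With these toy decisions the script prints `0.545 ['r3']`: the reviewers agree on four of five records, but kappa discounts the agreement expected by chance when most records are excluded.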

Critical Appraisal (Risk of Bias Assessment)

Critical appraisal of individual studies is considered an optional step in an SEM [1] [3]. It is typically conducted when studies are categorized by the direction of effect or when the SEM is intended to directly inform a subsequent systematic review. When performed, it follows standard risk-of-bias assessment tools relevant to the study designs in question.

Data Visualization and Synthesis

The final and defining stage is the creation of interactive visualizations. Unlike a systematic review's narrative or meta-analytic synthesis, an SEM synthesizes evidence by categorizing and mapping it visually [2]. This is often achieved through heatmaps, interactive databases, or network diagrams that allow users to explore the evidence landscape, instantly see clusters of research, and identify empty cells representing evidence gaps [1].
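The counting behind a basic heatmap is a cross-tabulation of coded studies. A minimal sketch, assuming each coded study is a dict with hypothetical `chemical` and `outcome` fields: every cell of the chemical-by-outcome grid is counted, and cells with zero studies are flagged as candidate gaps. A real SEM would pass the same matrix to a dashboard tool instead of printing it.

```python
from collections import Counter
from itertools import product

def evidence_matrix(coded_studies, row_key, col_key, rows, cols):
    """Count coded studies in every (row, col) cell of the map, including empty cells."""
    counts = Counter((study[row_key], study[col_key]) for study in coded_studies)
    return {(r, c): counts[(r, c)] for r, c in product(rows, cols)}

def evidence_gaps(matrix):
    """Cells with zero studies: candidate evidence gaps."""
    return sorted(cell for cell, n in matrix.items() if n == 0)

def print_heatmap(matrix, rows, cols, width=10):
    """Plain-text stand-in for a heatmap: one row per chemical, one column per outcome."""
    print("".ljust(width) + "".join(c.ljust(width) for c in cols))
    for r in rows:
        print(r.ljust(width) + "".join(str(matrix[(r, c)]).ljust(width) for c in cols))

# Hypothetical coded metadata for four included studies.
studies = [
    {"chemical": "Chem A", "outcome": "hepatic"},
    {"chemical": "Chem A", "outcome": "hepatic"},
    {"chemical": "Chem A", "outcome": "neuro"},
    {"chemical": "Chem B", "outcome": "hepatic"},
]
chemicals, outcomes = ["Chem A", "Chem B"], ["hepatic", "neuro", "renal"]
matrix = evidence_matrix(studies, "chemical", "outcome", chemicals, outcomes)
print_heatmap(matrix, chemicals, outcomes)
print("gaps:", evidence_gaps(matrix))
```

Enumerating the full grid with `product` matters: a cell that never appears in the coded data still shows up with a count of zero, which is exactly what makes the gap visible.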

Data Presentation: Evaluating Search Strategies for Evidence Mapping

A critical methodological challenge in SEMs is designing an efficient yet comprehensive search. Search Summary Tables (SSTs) provide transparent data on the performance of different information sources, guiding resource allocation in future projects [6] [7]. The following table summarizes data from a case study on peer support interventions, illustrating the relative yield of different databases for identifying systematic reviews (SRs) and randomized controlled trials (RCTs)—study designs also relevant to chemical risk assessment [6].

Table: Search Summary Table (SST) for an Evidence and Gap Map Case Study [6]

| Information Source | Total References Retrieved | Included SRs Found, n (% of all included SRs) | Included RCTs Found, n (% of all included RCTs) | Key Function for Evidence Mapping |
|---|---|---|---|---|
| MEDLINE | 1,123 | 27 (84%) | 55 (90%) | Core biomedical database; essential for both SRs and primary studies. |
| PsycINFO | 581 | 15 (47%) | 42 (69%) | Key for subject-specific (e.g., neurotoxicology) behavioral outcomes. |
| CINAHL | 877 | 23 (72%) | 36 (59%) | Useful for public health and community exposure outcomes. |
| Embase | 1,484 | 25 (78%) | Not Reported | Broad biomedical coverage, strong for pharmacological/toxicological data. |
| CENTRAL | Not Reported | Not Applicable | 53 (87%) | Primary resource for identifying controlled clinical trials. |
| Forward Citation Searching | N/A | 1 (3%) | 14 (23%) | Highly effective for finding newer RCTs citing key older studies. |
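Search Summary Tables typically report each source's sensitivity (the share of all included studies it retrieved) and precision (the share of its retrieved records that were ultimately included). The sketch below recomputes the percentages in the SST, assuming totals of 32 included SRs and 61 included RCTs; these totals are not stated in the source but are implied by the reported figures (27/32 ≈ 84%, 55/61 ≈ 90%). Pooling SRs and RCTs in the precision numerator is a further assumption.

```python
# Counts transcribed from the SST; None marks "Not Reported", "Not Applicable", or "N/A".
TOTAL_SRS, TOTAL_RCTS = 32, 61  # assumed totals implied by the reported percentages

SST = {  # source -> (records retrieved, included SRs found, included RCTs found)
    "MEDLINE": (1123, 27, 55),
    "PsycINFO": (581, 15, 42),
    "CINAHL": (877, 23, 36),
    "Embase": (1484, 25, None),
    "CENTRAL": (None, None, 53),
    "Forward citation searching": (None, 1, 14),
}

def sensitivity(found, total):
    """Share of all included studies of one design that a source retrieved."""
    return None if found is None else found / total

def precision(retrieved, srs, rcts):
    """Included studies (SRs + RCTs pooled) per record retrieved; None if any count is missing."""
    if retrieved is None or srs is None or rcts is None:
        return None
    return (srs + rcts) / retrieved

def pct(x):
    """Format a proportion as a whole-number percentage, or 'n/a' when unavailable."""
    return "n/a" if x is None else f"{x:.0%}"

for source, (retrieved, srs, rcts) in SST.items():
    print(f"{source:28} SR {pct(sensitivity(srs, TOTAL_SRS)):>4}  "
          f"RCT {pct(sensitivity(rcts, TOTAL_RCTS)):>4}  "
          f"precision {pct(precision(retrieved, srs, rcts))}")
```

Low precision (for example, roughly 7% for MEDLINE under these assumptions) is expected for sensitive SEM searches; the sensitivity columns are what guide decisions about which sources can be dropped in future projects.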

Experimental Protocols: Detailed SEM Methodology for Chemical Risk Assessment

The U.S. EPA has developed a standardized template for conducting SEMs within its chemical risk assessment programs [3]. The protocol below details the steps, incorporating standard systematic review practices adapted for mapping objectives.

Table: Detailed Experimental Protocol for an EPA Systematic Evidence Map [3]

| Protocol Stage | Detailed Methodology | Tools & Standards | Purpose in Chemical Risk Assessment |
|---|---|---|---|
| 1. Protocol Development | Define broad PECO; list supplemental evidence types (e.g., in vitro, NAMs, genotoxicity); pre-register plan. | PECO framework; ROSES checklist [5]. | Ensures transparency, reduces bias, and sets manageable scope for broad chemical topics. |
| 2. Search Strategy | Execute search in core databases (PubMed, TOXLINE, Embase); supplement with grey literature searches. | Boolean operators; controlled vocabularies (MeSH, Emtree). | Maximizes capture of all potentially relevant toxicological and epidemiological literature. |
| 3. Screening | Dual-independent review at title/abstract and full-text levels using pre-defined forms; resolve conflicts by consensus. | Abstract screening software (e.g., Rayyan, SWIFT-Review). | Ensures reproducible and unbiased selection of studies against broad eligibility criteria. |
| 4. Data Extraction & Coding | Extract metadata (study design, chemical, dose, model, outcome) into structured web-based forms; no synthesis of results. | Custom database platforms (e.g., Health Assessment Workspace Collaborative). | Creates a queryable database of study characteristics for visualization and gap analysis. |
| 5. Study Evaluation (Optional) | Apply risk-of-bias tools (e.g., OHAT, NTP RoB) on a case-by-case basis if needed for prioritization. | Risk-of-bias assessment tools. | Provides a layer of quality assessment to inform confidence in evidence clusters. |
| 6. Visualization & Reporting | Generate interactive heatmaps and evidence atlases; publish data in open-access formats. | Data visualization software (e.g., Tableau, R Shiny). | Enables stakeholders to interact with the evidence landscape and identify gaps intuitively. |

SEMs vs. Systematic Reviews: A Functional Contrast

Understanding the distinction between SEMs and traditional systematic reviews (SRs) is crucial for selecting the appropriate evidence synthesis tool. The following diagram and table contrast their primary functions, processes, and outputs within the context of chemical risk assessment [1] [2].

Table: Functional Contrast Between Systematic Evidence Maps and Systematic Reviews [1] [3] [2]

| Aspect | Systematic Evidence Map (SEM) | Traditional Systematic Review (SR) |
|---|---|---|
| Primary Question | Broad: "What is the extent and distribution of evidence on this chemical/outcome?" | Focused: "What is the effect of exposure X on health outcome Y?" |
| PECO Scope | Intentionally broad to capture all relevant evidence. | Highly specific to limit evidence to directly comparable studies. |
| Core Process | Systematic identification, categorization, and visual mapping of studies. | Systematic identification, critical appraisal, and statistical/narrative synthesis. |
| Data Extraction | Descriptive metadata (study design, population, exposure, outcome). | Detailed quantitative results and study characteristics for synthesis. |
| Critical Appraisal | Optional; not required for mapping purpose. | Mandatory; integral to interpreting findings and grading evidence. |
| Key Output | Interactive evidence atlas or heatmap showing evidence clusters and gaps. | Qualitative summary or meta-analysis with a strength-of-evidence conclusion. |
| Role in Decision-Making | Priority-setting: Identifies needs for future SRs or primary research. | Risk characterization: Directly informs hazard identification and dose-response. |

Conducting a robust SEM requires a suite of methodological tools and resources. The following table details key "research reagent solutions" essential for the SEM process in chemical risk assessment.

Table: Essential Toolkit for Conducting Systematic Evidence Maps in Chemical Risk Assessment

| Tool Category | Specific Item/Resource | Function in SEM Process | Example/Note |
|---|---|---|---|
| Protocol & Reporting Standards | ROSES (Reporting Standards for Systematic Evidence Syntheses) [5] | Provides a checklist for planning and reporting SEMs, ensuring methodological transparency. | Equivalent to PRISMA for systematic reviews but tailored for mapping. |
| Eligibility Framework | PECO (Population, Exposure, Comparator, Outcome) Statement [3] | Structures the broad research question and defines the boundaries for study inclusion. | In chemical risk, P: human/animal; E: specific chemical; C: unexposed/low dose; O: health outcome. |
| Search Resources | Core Biomedical Databases (PubMed/MEDLINE, Embase, Web of Science) [6] [7] | Primary sources for identifying published toxicological and epidemiological literature. | MEDLINE and Embase are considered essential for comprehensive retrieval [6]. |
| Search Resources | Toxicology-Specific Databases (TOXLINE, ECOTOX) | Capture specialized literature on chemical effects not fully indexed in core biomedical databases. | Critical for environmental risk assessments. NLM retired TOXLINE in 2019 and moved its records into PubMed. |
| Screening & Automation Tools | Machine Learning-Aided Screening Software (e.g., SWIFT-Review, ASReview) | Prioritizes references during screening, increasing efficiency for large result sets [3]. | Learns from reviewer decisions to rank likely relevant records higher. |
| Data Management | Systematic Review Management Platforms (e.g., HAWC, DistillerSR) | Manages the flow of references, facilitates dual-independent screening, and stores extracted data [3]. | EPA's Health Assessment Workspace Collaborative (HAWC) is specifically designed for risk assessment. |
| Visualization Software | Interactive Dashboard Tools (e.g., Tableau, R Shiny, Python Dash) | Transforms coded metadata into interactive heatmaps and evidence gap maps for exploration [1]. | Allows end-users to filter and explore the mapped evidence by chemical, outcome, or study type. |

The Problem: Volume, Velocity, and Variability

Modern toxicology is experiencing a fundamental crisis of information. The evidence base for assessing chemical risks has expanded exponentially due to factors including more sensitive analytical techniques, increased regulatory data requirements, and the move away from regulatory reliance on traditional in vivo toxicity testing [8]. The result is overwhelming volume, high velocity of new data generation, and significant variability in data types and quality. Consequently, locating, organizing, and evaluating all relevant data for informed decision-making has become a formidable challenge [8].

The regulatory landscape is simultaneously becoming more complex. Global frameworks are evolving toward stricter sustainability mandates, broader restrictions on substances like PFAS, and the digitalization of compliance reporting [9]. For instance, the European Union's Chemicals Strategy for Sustainability (CSS) and initiatives like the Safe-and-Sustainable-by-Design (SSbD) framework demand more comprehensive, predictive, and mechanistic data [9] [10]. This creates a critical gap: the need for robust, evidence-based decisions is greater than ever, but the traditional tools for evidence synthesis are ill-equipped to handle the modern data deluge.

This data overload directly impedes core toxicological and regulatory workflows, including:

- Hazard Identification & Characterization: Difficulty in aggregating fragmented evidence from high-throughput in vitro assays, omics technologies, and traditional studies to form a coherent hazard profile.

- Risk Assessment of Mixtures: Assessing cumulative exposure and "cocktail effects" is nearly intractable with conventional methods, despite evidence that simultaneous exposure to low doses of different pesticides can result in additive or synergistic effects [11].

- Application of New Approach Methodologies (NAMs): Integrating data from diverse NAMs—such as in silico modeling, high-throughput screening, and toxicogenomics—into a unified assessment framework [11] [10].

- Regulatory Prioritization & Scoping: Identifying critical data gaps and prioritizing chemicals for thorough risk evaluation amidst vast datasets.

Table 1: Key Data Challenges in Modern Chemical Risk Assessment

| Challenge Dimension | Specific Manifestation | Impact on Risk Assessment |
|---|---|---|
| Volume | Exponential growth in published studies, regulatory dossiers (e.g., IUCLID), and high-throughput screening data [8]. | Key evidence is overlooked; systematic review becomes prohibitively resource-intensive. |
| Variability (Heterogeneity) | Data from diverse sources (academic, regulatory, industry), study types (in vivo, in vitro, in silico), and reporting formats [8]. | Difficult to compare, combine, or synthesize findings across the evidence base. |
| Velocity | Rapid generation of new data from automated platforms and evolving scientific techniques [8]. | Evidence assessments are outdated by the time they are completed. |
| Veracity (Uncertainty) | Variable study quality, reporting completeness, and relevance of model systems to human health [11]. | Undermines confidence in conclusions and complicates weight-of-evidence analyses. |
| Regulatory Complexity | Evolving requirements under EU CSS, TSCA, GHS revisions, and mixture assessment mandates [9] [11]. | Increases the breadth of data required for compliance and safe-by-design innovation. |

Systematic Evidence Maps: A Foundational Solution

Systematic Evidence Mapping (SEM) emerges as a foundational methodology to address these challenges. An SEM is defined as a queryable database of systematically gathered and structured evidence, designed to organize and characterize a broad evidence base for exploration by diverse end-users [8]. Unlike a systematic review, which aims to answer a specific, narrow question with synthesis, an SEM aims to provide a map of the available evidence landscape. It enables users to identify clusters of research, glaring gaps, and trends without initially committing to a single synthesis question [8].

The core value proposition of SEM in toxicology is its role in facilitating evidence-based approaches while managing scale. It provides a transparent, auditable, and reusable resource that:

- Collates fragmented data into a single access point.

- Structures unstructured data (e.g., extracting key parameters from PDFs into defined fields).

- Codes data using controlled vocabularies and ontologies, enabling meaningful comparison across heterogeneous studies [8].

This is particularly vital for toxicology, where framing a single, narrow systematic review question is often difficult or uninformative for broad policy or prioritization needs [8]. An SEM serves as the critical first step in a tiered evidence-synthesis strategy, enabling efficient prioritization of resources for full systematic review where it is most needed.

From Flat Tables to Knowledge Graphs: An Architectural Evolution

Traditional SEMs, often built on relational databases with rigid, flat table structures, are insufficient for modern toxicology's interconnected data. This "schema-on-write" approach struggles with the highly connected and heterogeneous nature of toxicological data, where relationships (e.g., between a chemical, a molecular target, an adverse outcome pathway, and a disease) are as important as the entities themselves [8].

The next-generation architecture for SEMs is the knowledge graph. A knowledge graph is a flexible, schemaless data model that stores information as a network of nodes (entities/concepts) and edges (relationships). This "schema-on-read" approach is inherently suited for toxicology because it can easily accommodate [8]:

- Diverse and evolving data types without pre-defined table structures.

- Complex, multi-step relationships (e.g., part of an Adverse Outcome Pathway).

- Integration with formal ontologies (shared, logically related controlled vocabularies), which provide semantic meaning and enable sophisticated computational reasoning [8].

Table 2: Relational Database vs. Knowledge Graph for Toxicological SEMs

| Feature | Traditional Relational (Schema-on-Write) | Knowledge Graph (Schema-on-Read) |
|---|---|---|
| Data Structure | Rigid, predefined tables and columns. | Flexible, graph-based (nodes/edges). |
| Schema Definition | Required before data ingestion. | Applied during data querying and interpretation. |
| Relationship Handling | Handled via foreign keys between tables; complex relationships are cumbersome. | Relationships are first-class citizens, easily representing multi-step pathways. |
| Adaptability | Poor; adding new data types requires schema modification. | High; new node and relationship types can be added dynamically. |
| Query Focus | "What are the properties of X?" | "How is X connected to Y through Z?" |
| Suitability for Toxicology | Low; struggles with interconnected, heterogeneous data [8]. | High; ideal for AOPs, mechanistic networks, and integrated data [8]. |
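The "How is X connected to Y?" query style in Table 2 can be illustrated without a graph database. A minimal sketch, assuming an in-memory store of (subject, relation, object) triples with hypothetical entity and relation names loosely following an AOP chain: a breadth-first search returns every simple path between two nodes, and a new relation type is added later without any schema change. Production systems would express the same traversal in Cypher or SPARQL.

```python
from collections import defaultdict, deque

class TinyGraph:
    """Schemaless triple store: nodes and relation types need no predefined schema."""

    def __init__(self):
        self.out = defaultdict(list)  # subject -> [(relation, object), ...]

    def add(self, subject, relation, obj):
        self.out[subject].append((relation, obj))

    def paths(self, start, goal, max_hops=4):
        """Breadth-first search for simple paths; each path is a list of (node, relation, node) hops."""
        found, queue = [], deque([(start, [])])
        while queue:
            node, path = queue.popleft()
            if node == goal and path:
                found.append(path)
                continue
            if len(path) >= max_hops:
                continue
            visited = {start} | {hop[2] for hop in path}  # no node repeats within one path
            for relation, nxt in self.out.get(node, []):
                if nxt not in visited:
                    queue.append((nxt, path + [(node, relation, nxt)]))
        return found

# Hypothetical entities and relations.
g = TinyGraph()
g.add("Chemical X", "ACTIVATES", "Receptor R")
g.add("Receptor R", "TRIGGERS", "Key event K")
g.add("Key event K", "LEADS_TO", "Liver steatosis")
g.add("Study S1", "TESTED", "Chemical X")
# A new relation type added later, with no schema migration.
g.add("Chemical X", "ASSOCIATED_WITH", "Liver steatosis")

for path in g.paths("Chemical X", "Liver steatosis"):
    print(" -> ".join([path[0][0]] + [f"[{rel}] {obj}" for _, rel, obj in path]))
```

Because the search is breadth-first, the direct one-hop association is reported before the three-hop mechanistic route; in a relational schema the same question would require a chain of joins whose length is fixed in advance.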

The following diagram illustrates the architectural shift and workflow for building a toxicological knowledge graph.

Diagram 1: Systematic Evidence Mapping Workflow & Architecture Evolution

Implementing a Toxicological SEM: A Technical Protocol

The development of a fit-for-purpose SEM for toxicology requires a meticulous, protocol-driven approach. The following workflow, derived from established methodology [8], outlines the key stages.

Protocol Development & Stakeholder Engagement

- Define Map Scope & Objectives: Clearly articulate the chemical, toxicological, or regulatory domain (e.g., "endocrine disruption potential of pesticides"). Engage regulatory scientists, risk assessors, and researchers to ensure relevance.

- Develop a Detailed A Priori Protocol: Publish a protocol specifying the search strategy, data sources, inclusion/exclusion criteria, data extraction fields, and coding strategy. This is critical for transparency and reproducibility [8].

Evidence Search, Screening & Extraction

- Systematic Searching: Execute searches across multiple bibliographic (PubMed, Scopus, Embase) and regulatory (ECHA, EPA) databases. Search strings must balance sensitivity and specificity.

- Screening: Implement a two-stage (title/abstract, then full-text) screening process using tools like Rayyan or Covidence. At least two independent reviewers mitigate bias.

- Data Extraction: Extract structured data into a predefined template. Critical fields for toxicology include: chemical identifier (CAS, name), study type (in vivo/in vitro/in silico), test system, endpoint measured, dose/response data, and reported outcome.
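A predefined extraction template can also enforce basic validity at entry time. The sketch below assumes a hypothetical record layout built from the fields listed above and checks the CAS Registry Number check digit: each digit before the check digit, counted from the right, is weighted 1, 2, 3, and so on, and the weighted sum modulo 10 must equal the final digit.

```python
import re
from dataclasses import dataclass

CAS_PATTERN = re.compile(r"^(\d{2,7})-(\d{2})-(\d)$")
STUDY_TYPES = {"in vivo", "in vitro", "in silico"}

def is_valid_cas(cas: str) -> bool:
    """Check CAS RN format (e.g. 7732-18-5) and its check digit."""
    match = CAS_PATTERN.match(cas)
    if not match:
        return False
    body = match.group(1) + match.group(2)
    # Weight digits 1, 2, 3, ... starting from the rightmost digit before the check digit.
    total = sum(i * int(d) for i, d in enumerate(reversed(body), start=1))
    return total % 10 == int(match.group(3))

@dataclass
class ExtractionRecord:
    """One study's extracted metadata (hypothetical field names)."""
    study_id: str
    chemical_name: str
    cas_rn: str
    study_type: str
    test_system: str
    endpoint: str
    dose: str
    reported_outcome: str

    def __post_init__(self):
        # Reject records that would corrupt downstream chemical matching or coding.
        if not is_valid_cas(self.cas_rn):
            raise ValueError(f"{self.study_id}: invalid CAS RN {self.cas_rn!r}")
        if self.study_type not in STUDY_TYPES:
            raise ValueError(f"{self.study_id}: unknown study type {self.study_type!r}")

record = ExtractionRecord("S001", "bisphenol A", "80-05-7", "in vivo",
                          "rat", "uterine weight", "50 mg/kg-day", "increased")
```

Validating at entry catches transcription errors (a swapped digit in a CAS RN almost always fails the check) before they propagate into chemical nodes that later need to be disambiguated.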

Data Coding & Ontology Alignment

This is the most critical step for enabling interoperability and sophisticated querying.

- Code Development: Create a codebook of controlled terms for key variables (e.g., species, sex, target organ).

- Ontology Integration: Map codes to established biomedical ontologies. For example:

  - Chemicals: ChEBI (Chemical Entities of Biological Interest)
  - Assays & Endpoints: OBI (Ontology for Biomedical Investigations), BAO (BioAssay Ontology)
  - Diseases & Phenotypes: Mondo (Monarch Disease Ontology), MP (Mammalian Phenotype Ontology)
  - Adverse Outcome Pathways: AOP-Wiki concepts

- This semantic alignment allows the graph to "understand" that "hepatocellular carcinoma" (from Mondo) and "liver tumor" (from a study abstract) are related concepts.
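Alignment of this kind rests on synonym lists plus the ontology's is_a hierarchy. A toy sketch, assuming a hand-built fragment with illustrative labels in place of real ontology identifiers (a production pipeline would resolve terms through a lookup service such as OLS): free-text terms are normalized to a concept, and two terms count as related when one concept subsumes the other.

```python
# Illustrative fragment only: plain labels stand in for real ontology IDs.
IS_A = {
    "hepatocellular carcinoma": "liver carcinoma",
    "liver carcinoma": "liver neoplasm",
    "liver neoplasm": "neoplasm",
    "renal cell carcinoma": "kidney neoplasm",
    "kidney neoplasm": "neoplasm",
}
SYNONYMS = {
    "liver tumor": "liver neoplasm",
    "liver tumour": "liver neoplasm",
    "hcc": "hepatocellular carcinoma",
}

def normalize(term):
    """Map a free-text term to its concept label (case-insensitive)."""
    t = term.strip().lower()
    return SYNONYMS.get(t, t)

def ancestors(concept):
    """The concept plus every is_a ancestor up to the root (fragment assumed acyclic)."""
    chain = [concept]
    while chain[-1] in IS_A:
        chain.append(IS_A[chain[-1]])
    return chain

def related(term_a, term_b):
    """True if one normalized term is the same as, or an ancestor of, the other."""
    a, b = normalize(term_a), normalize(term_b)
    return a in ancestors(b) or b in ancestors(a)

print(related("Hepatocellular carcinoma", "liver tumor"))
```

Requiring subsumption rather than any shared ancestor keeps "hepatocellular carcinoma" and "renal cell carcinoma" apart, even though both ultimately roll up to "neoplasm".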

Graph Construction & Quality Assurance

- Node & Edge Creation: Using a graph database platform (e.g., Neo4j, Amazon Neptune, or a triplestore like Stardog), transform the coded data into a graph. Each study, chemical, and outcome becomes a node. Relationships like `Chemical-[CAUSES]->Effect` or `Study-[USES_ASSAY]->Assay` become edges.

- Data Integrity Checks: Implement rigorous quality control to ensure accurate extraction, coding, and graph population. Review a random sample of entries.
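Graph population is commonly written as parameterized Cypher `MERGE` statements so that re-running an import does not duplicate nodes or edges. The sketch below only builds the (query, parameters) pairs and draws a reproducible QA sample; the node labels, property names, and row layout are hypothetical, and sending the statements to a server (for example with the Neo4j Python driver's `session.run(query, params)`) is omitted so the example runs without a database.

```python
import random

# Hypothetical coded rows exported from the extraction forms.
ROWS = [
    {"study_id": "S001", "dtxsid": "DTXSID_HYPO_1", "assay": "ASSAY_A", "outcome": "liver steatosis"},
    {"study_id": "S002", "dtxsid": "DTXSID_HYPO_2", "assay": "ASSAY_B", "outcome": "uterine weight"},
    {"study_id": "S003", "dtxsid": "DTXSID_HYPO_1", "assay": "ASSAY_B", "outcome": "liver steatosis"},
]

# MERGE matches an existing node/edge or creates it, so repeated imports stay idempotent.
ROW_QUERY = """
MERGE (c:Chemical {dtxsid: $dtxsid})
MERGE (s:Study {study_id: $study_id})
MERGE (a:Assay {name: $assay})
MERGE (e:Effect {name: $outcome})
MERGE (s)-[:TESTED]->(c)
MERGE (s)-[:USES_ASSAY]->(a)
MERGE (s)-[:REPORTS]->(e)
"""

def build_statements(rows):
    """One parameterized statement per coded row; values are passed as parameters, not spliced in."""
    return [(ROW_QUERY, dict(row)) for row in rows]

def qa_sample(rows, fraction=0.1, seed=42):
    """Reproducible random sample of rows (at least one) for manual double-checking."""
    k = max(1, round(len(rows) * fraction))
    return random.Random(seed).sample(rows, k)

statements = build_statements(ROWS)
for row in qa_sample(ROWS, fraction=0.5):
    print("check against source PDF:", row["study_id"])
```

This sketch routes effects through the reporting study (`REPORTS`) rather than writing a direct `CAUSES` edge per row, which keeps every chemical-effect link traceable to its evidence; an aggregated `CAUSES` edge could be derived later once the evidence has been weighed. A fixed seed makes the QA sample auditable.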

Table 3: Experimental Protocol for a High-Throughput Screening (HTS) Data Integration Pilot

| Protocol Stage | Action | Tools & Standards | Output/Deliverable |
|---|---|---|---|
| 1. Scope Definition | Focus on estrogen receptor (ER) activity HTS data from Tox21/ToxCast. | – | Published study protocol. |
| 2. Data Acquisition | Download curated data from EPA's CompTox Chemicals Dashboard. | CSV/JSON formats, DTXSIDs (chemical identifiers). | Raw HTS response data. |
| 3. Data Extraction & Curation | Extract chemical ID, assay name (e.g., ATG_ERa_TRANS), AC50 values, hit-call. | Python/R scripts, OECD QSAR Toolbox. | Cleaned, structured dataset. |
| 4. Ontological Coding | Map assay ATG_ERa_TRANS to BAO: BAO_0002179 (nuclear receptor transcription assay). Map "active" hit-call to OBI: OBI_0000312 (positive result). | Ontology lookup services (OLS), manual curation. | Annotated dataset with ontology URIs. |
| 5. Graph Ingestion | Ingest data into Neo4j: create Chemical nodes, Assay nodes, and HAS_ACTIVITY relationships with properties (AC50, hit-call). | Neo4j Cypher queries, Python driver. | Populated knowledge graph subset. |
| 6. Query & Validation | Execute query: "Find all chemicals active in ERα assays and link to known ERα agonists from peer-reviewed literature." | Cypher query language. | Validated subgraph connecting HTS predictions to legacy knowledge. |
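Stages 3 and 6 of the pilot reduce to filtering curated rows and comparing them with a reference list. A minimal pure-Python sketch, assuming hypothetical chemical IDs, assay names, AC50 values in µM, and a hit-call coded as 1 (active) or 0 (inactive); the set of known agonists is likewise invented for illustration and would in practice come from the literature-derived part of the graph.

```python
# Hypothetical curated HTS rows: (chemical_id, assay_name, ac50_uM or None, hit_call)
HTS_ROWS = [
    ("CHEM_1", "ER_ASSAY_1", 0.8, 1),
    ("CHEM_1", "ER_ASSAY_2", 1.5, 1),
    ("CHEM_2", "ER_ASSAY_1", None, 0),
    ("CHEM_3", "ER_ASSAY_2", 12.0, 1),
    ("CHEM_4", "AR_ASSAY_1", 3.0, 1),
]
KNOWN_ER_AGONISTS = {"CHEM_1", "CHEM_2"}  # hypothetical literature-derived reference set

def er_actives(rows, assay_prefix="ER_"):
    """Chemicals active in at least one ER assay, mapped to their lowest AC50.

    Rows without an AC50 are skipped in this sketch.
    """
    best = {}
    for chem, assay, ac50, hit in rows:
        if hit == 1 and assay.startswith(assay_prefix) and ac50 is not None:
            best[chem] = min(ac50, best.get(chem, ac50))
    return best

def compare_to_reference(actives, reference):
    """Split chemicals into HTS-confirmed, HTS-only, and reference-only groups."""
    hts = set(actives)
    return {
        "confirmed": sorted(hts & reference),
        "hts_only": sorted(hts - reference),
        "reference_only": sorted(reference - hts),
    }

actives = er_actives(HTS_ROWS)
print(compare_to_reference(actives, KNOWN_ER_AGONISTS))
```

The "hts_only" group is the interesting output for validation: these are candidate actives with no supporting literature node, which is exactly where the graph can direct follow-up review.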

The Scientist's Toolkit: Research Reagent Solutions for SEM Implementation

Building and utilizing a modern SEM requires a suite of technical and informatics "reagents."

Table 4: Essential Research Reagent Solutions for Toxicological SEMs

| Tool Category | Specific Item/Technology | Function & Role in SEM |
|---|---|---|
| Data Storage & Management | Graph Database (Neo4j, Amazon Neptune, Stardog) | Core infrastructure for storing the knowledge graph, enabling efficient traversal of complex relationships [8]. |
| Ontology Resources | BioPortal / OLS (Ontology Lookup Service), ChEBI, BAO, AOP-Wiki | Provides standardized, machine-readable vocabularies for coding toxicological entities and processes, ensuring semantic interoperability [8]. |
| Data Extraction & Curation | Text Mining & NLP Tools (e.g., custom Python/R scripts, CLAMP) | Automates the extraction of key entities (chemicals, endpoints) from unstructured text in study abstracts and reports. |
| Chemical Registry | EPA CompTox Chemicals Dashboard, PubChem | Provides authoritative chemical identifiers (DTXSID, CID), structures, and links to associated property and toxicity data, crucial for node disambiguation. |
| Evidence Synthesis Platforms | Systematic Review Management Software (Rayyan, Covidence, DistillerSR) | Facilitates the collaborative screening and data extraction phases of the SEM workflow, managing reviewer conflict resolution. |
| Query & Visualization | Graph Query Languages (Cypher, SPARQL), Visualization Libraries (Cytoscape, Gephi) | Allows researchers to interrogate the graph (e.g., "find paths between chemical X and disease Y") and visualize complex networks. |
| Computational Toxicology Integration | OECD QSAR Toolbox, EPA OPERA, KNIME Analytics Platform | Enriches chemical nodes with predicted properties and read-across hypotheses, bridging the SEM with New Approach Methodologies (NAMs) [10]. |

Applications and Impact on Chemical Risk Assessment

When operationalized, a graph-based SEM transforms key toxicological and regulatory workflows. Its primary power lies in enabling complex, relationship-focused queries that are difficult or impractical to express in traditional relational databases.

Application 1: Accelerated Problem Formulation & Scoping

- Scenario: A regulator needs to prioritize chemicals for evaluation of potential developmental neurotoxicity (DNT).

- SEM Action: Query the graph for chemicals with at least two weak associative links to DNT outcomes (e.g., active in a DNT-relevant in vitro assay AND structurally similar to a known DNT toxicant).

- Impact: Rapid identification of candidate chemicals for further evaluation, making priority-setting evidence-based and transparent.
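The query in the SEM Action bullet amounts to counting independent lines of evidence per chemical. A minimal sketch, assuming the relevant graph links have already been flattened into hypothetical (chemical, evidence_type) pairs; in a graph database the same logic would be a pattern match followed by a count of distinct evidence types.

```python
from collections import defaultdict

# Hypothetical weak links to DNT outcomes pulled from the graph.
DNT_LINKS = [
    ("CHEM_A", "active_in_dnt_in_vitro_assay"),
    ("CHEM_A", "structurally_similar_to_known_dnt_toxicant"),
    ("CHEM_B", "active_in_dnt_in_vitro_assay"),
    ("CHEM_B", "active_in_dnt_in_vitro_assay"),  # same line of evidence twice
    ("CHEM_C", "structurally_similar_to_known_dnt_toxicant"),
    ("CHEM_C", "epidemiological_association"),
    ("CHEM_C", "active_in_dnt_in_vitro_assay"),
]

def prioritize(links, min_lines=2):
    """Chemicals with at least `min_lines` distinct evidence types, most-supported first."""
    lines = defaultdict(set)
    for chem, evidence_type in links:
        lines[chem].add(evidence_type)  # a set collapses repeated links of one type
    ranked = [(chem, len(types)) for chem, types in lines.items() if len(types) >= min_lines]
    return sorted(ranked, key=lambda pair: (-pair[1], pair[0]))

print(prioritize(DNT_LINKS))
```

Counting distinct evidence types, rather than raw links, prevents a chemical tested repeatedly in one assay type (CHEM_B here) from outranking a chemical supported by genuinely different lines of evidence.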

Application 2: Mechanistic Hypothesis Generation for Mixture Risk

- Scenario: Assessing the potential for synergistic effects of a chemical mixture found in drinking water.

- SEM Action: For each chemical, retrieve its known molecular initiating events (MIEs) and key events (KEs) from linked AOP knowledge. Query for shared or interconnected KEs within the mixture.

- Impact: Identifies plausible mechanistic bases for additive or synergistic interactions, guiding targeted testing. This addresses the critical challenge of "cocktail effects" [11].
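The mixture query can be sketched as set operations over each component's linked key events. This assumes hypothetical chemical-to-KE annotations; a shared KE flags a plausible point of convergence for additive or synergistic action, which is a hypothesis to guide targeted testing rather than evidence that an interaction occurs.

```python
from itertools import combinations

# Hypothetical chemical -> key events linked via AOP knowledge.
KES = {
    "CHEM_P": {"receptor activation", "cell proliferation"},
    "CHEM_Q": {"receptor activation", "oxidative stress"},
    "CHEM_R": {"oxidative stress", "mitochondrial dysfunction"},
}

def shared_key_events(ke_map):
    """Pairs of mixture components that converge on at least one key event."""
    shared = {}
    for a, b in combinations(sorted(ke_map), 2):
        overlap = ke_map[a] & ke_map[b]
        if overlap:
            shared[(a, b)] = sorted(overlap)
    return shared

def convergence_counts(ke_map):
    """Number of mixture components hitting each key event, keeping only KEs hit by more than one."""
    counts = {}
    for kes in ke_map.values():
        for ke in kes:
            counts[ke] = counts.get(ke, 0) + 1
    return {ke: n for ke, n in sorted(counts.items()) if n > 1}

print(shared_key_events(KES))
print(convergence_counts(KES))
```

Pairwise overlaps identify which combinations to test together, while the per-KE counts highlight events where several components converge at once.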

Application 3: Bridging New Approach Methodologies (NAMs) with Traditional Evidence

- Scenario: Validating a novel in vitro assay intended to predict liver steatosis.

- SEM Action: Assemble a "ground truth" subgraph of chemicals known to cause steatosis from legacy in vivo studies. Connect these to their in vitro bioactivity profiles from HTS.

- Impact: Enables the systematic evaluation of the assay's predictive capacity across a broad chemical space, supporting the regulatory acceptance of NAMs [10].
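Once the ground-truth subgraph is assembled, the assay's predictive capacity is usually summarized with a confusion matrix. A minimal sketch, assuming hypothetical chemical IDs with binary in vivo steatosis labels and binary in vitro calls; only chemicals present in both sets are scored, and the metrics say nothing about the assay's applicability domain.

```python
def assay_performance(in_vivo, in_vitro):
    """Compare binary in vitro calls against in vivo reference labels on shared chemicals."""
    chems = in_vivo.keys() & in_vitro.keys()
    tp = sum(in_vivo[c] and in_vitro[c] for c in chems)
    tn = sum(not in_vivo[c] and not in_vitro[c] for c in chems)
    fp = sum(not in_vivo[c] and in_vitro[c] for c in chems)
    fn = sum(in_vivo[c] and not in_vitro[c] for c in chems)
    sens = tp / (tp + fn) if tp + fn else float("nan")  # true positive rate
    spec = tn / (tn + fp) if tn + fp else float("nan")  # true negative rate
    return {"n": len(chems), "tp": tp, "tn": tn, "fp": fp, "fn": fn,
            "sensitivity": sens, "specificity": spec,
            "balanced_accuracy": (sens + spec) / 2}

# Hypothetical labels: True = causes steatosis (in vivo) / positive call (in vitro).
in_vivo = {"C1": True, "C2": True, "C3": True, "C4": False, "C5": False, "C6": True}
in_vitro = {"C1": True, "C2": True, "C3": False, "C4": False, "C5": True, "C7": True}
print(assay_performance(in_vivo, in_vitro))
```

Balanced accuracy is reported alongside sensitivity and specificity because legacy in vivo datasets are often dominated by one class, which can make plain accuracy misleading.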

The internal structure of such a knowledge graph, focusing on the integration of diverse evidence streams, is shown below.

Diagram 2: Knowledge Graph Structure Integrating Diverse Evidence Streams

The driving need in modern toxicology is not merely for more data, but for intelligent data architecture. The complexity and volume of information have outstripped the capacity of traditional, linear review processes. Systematic Evidence Mapping, particularly when implemented using flexible, graph-based architectures, provides a transformative solution. It shifts the paradigm from static literature reviews to dynamic, queryable evidence ecosystems.

By moving from rigid tables to interconnected knowledge graphs, toxicologists and risk assessors can navigate the evidence landscape with unprecedented efficiency. This enables them to ask and answer complex, systems-level questions about chemical hazards, mixture risks, and mechanistic pathways. As regulatory frameworks evolve toward greater demands for safety, sustainability, and transparency [9] [10], investing in the development of these robust evidence-mapping infrastructures is not just an academic exercise—it is a fundamental prerequisite for achieving evidence-based chemical risk assessment in the 21st century.

The field of chemical risk assessment is undergoing a fundamental shift in how it synthesizes and utilizes scientific evidence. The traditional paradigm, anchored by the systematic review (SR), is being supplemented and transformed by the emergence of systematic evidence maps (SEMs). This evolution responds directly to the pressing needs of modern regulatory science: to manage vast, heterogeneous evidence bases efficiently, support priority-setting, and inform decisions within realistic timeframes [2] [3]. This guide details the historical context, methodological core, and practical application of this evolution, framing it within the critical domain of chemical risk assessment research.

The Catalysts for Evolution: Limitations of Systematic Review in Regulatory Science

Systematic reviews established the gold standard for evidence-based decision-making by introducing rigorous, protocol-driven methods to minimize bias and maximize transparency [2]. In chemical risk assessment, their adoption promised to address challenges like selective use of data ("cherry-picking") and inconsistent application of scientific judgment [2]. The core steps and advantages of SR are well-defined, as summarized in Table 1.

Table 1: Core Steps and Advantages of Systematic Review (SR) in Chemical Risk Assessment [2]

| Systematic Review Step | Primary Advantage in Risk Assessment |
|---|---|
| Pre-published protocol | Reduces expectation bias; allows for external peer review of methods. |
| Clear PECO statement | Provides a structured, focused framework for the research question. |
| Comprehensive search | Reduces risk of partial retrieval of the relevant evidence base. |
| Screening against eligibility criteria | Reduces selection bias in deciding which evidence to include. |
| Data extraction & critical appraisal | Ensures consistent, valid interpretation of individual study findings. |
| Evidence synthesis & confidence rating | Increases power to identify trends; transparently communicates overall reliability of the body of evidence. |
| Drawing conclusions | Provides direct, synthesized answers to focused health risk questions. |

However, the practical application of SR in regulatory workflows revealed significant limitations [2]:

- Resource Intensity: Full SRs are time-consuming and costly, ill-suited for the rapid pace of regulatory decision-making and the volume of chemicals requiring assessment.

- Narrow Scope: The focused PECO (Population, Exposure, Comparator, Outcome) format answers specific questions but is poorly suited for scoping broad evidence landscapes, identifying research trends, or prioritizing which chemicals or health endpoints merit a full SR.

- Static Output: SRs provide a snapshot in time and are difficult to update continuously amid a rapidly growing scientific literature.

These limitations created a methodological gap, particularly for agencies like the U.S. EPA, which must triage and evaluate thousands of chemicals under statutes like TSCA [2] [12]. The need was for a tool that retained the systematicity and transparency of SR but offered a broader, more flexible, and resource-efficient overview of the evidence. This need catalyzed the evolution toward systematic evidence mapping.

Defining the Paradigm Shift: Systematic Evidence Maps as a Strategic Tool

A Systematic Evidence Map (SEM) is defined as a systematically gathered database that characterizes broad features of an evidence base [2]. Unlike an SR, which synthesizes findings to answer a specific question, an SEM organizes and catalogs evidence to visualize the extent, distribution, and characteristics of available research.

The evolution from SR to SEM represents a shift from a definitive answer-generating engine to a strategic intelligence and planning tool. This shift is characterized by key differences in objectives, processes, and outputs, as detailed in Table 2.

Table 2: Comparative Analysis: Systematic Review vs. Systematic Evidence Map [2] [1] [3]

| Feature | Systematic Review (SR) | Systematic Evidence Map (SEM) |
|---|---|---|
| Primary Objective | To synthesize evidence to answer a specific, narrow question (e.g., "Does chemical X cause outcome Y?"). | To survey, categorize, and visualize the broad landscape of evidence on a topic (e.g., "What is known about all health effects of chemical class Z?"). |
| Research Question | Tightly focused, defined by a precise PECO statement. | Broadly scoped, often using a modified PECO to capture a wide range of evidence. |
| Eligibility Criteria | Strict, designed to include only studies directly relevant to the synthesis. | More inclusive, often capturing studies for characterization even if not suitable for meta-analysis. |
| Critical Appraisal | Mandatory; risk of bias assessment is central to interpreting synthesized results. | Optional or streamlined; often conducted later if the map informs a subsequent SR [1]. |
| Core Output | A quantitative or qualitative synthesis (e.g., meta-analysis) with a graded confidence assessment. | A searchable database and interactive visualizations (e.g., heatmaps, network diagrams) showing evidence clusters and gaps [1]. |
| Key Utility | Provides a direct, evidence-based answer for risk management decisions. | Informs research prioritization, identifies needs for primary research or targeted SRs, and supports problem formulation in risk assessment [2] [3]. |

In chemical risk assessment, SEMs are now routinely used as problem formulation tools. They help assessors understand what types of studies exist (e.g., in vivo, in vitro, epidemiological), for which health endpoints, and for which exposure scenarios [3]. This allows for "fit-for-purpose" assessments where the depth of analysis can be tailored to the likelihood of risk, a principle reflected in recent regulatory proposals [12]. For example, the U.S. EPA's IRIS and PPRTV programs use SEMs as a critical first step in assessment development [3].

Methodological Protocols for Systematic Evidence Mapping

The strength of an SEM lies in its rigorous, protocol-driven methodology, which inherits the systematic search and transparency standards of SR while adapting other steps for mapping purposes. The following workflow, derived from established guidance and protocols, details the core steps [1] [3] [13].

Systematic Evidence Mapping (SEM) Standard Workflow [1] [13]

Step 1: Define Scope and Develop Protocol. The process begins with a broad, strategic question. A pre-published protocol defines the objectives and methods. Key stakeholders, including research communities or affected interest groups, are often engaged to ensure relevance and utility [13]. The PECO criteria are kept broad to capture a wide swath of evidence. For example, a map on environmental chemicals and autism (the aWARE project) includes human, non-human primate, and rodent studies across all exposure categories and ASD-related outcomes [13].

Step 2: Conduct Systematic Search. A comprehensive, reproducible search strategy is developed for multiple bibliographic databases (e.g., PubMed, Web of Science, Scopus) without restrictive date or language filters [13]. This ensures the map captures the full breadth of relevant literature.
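A broad search string is usually assembled by combining synonyms within each PECO concept with OR, then joining the concepts with AND. No date or language limits are added. The sketch below assumes generic Boolean syntax with quoted phrases. The helper `build_query` and the concept lists are hypothetical. A real strategy would also add each database's own field tags and truncation rules.

```python
def build_query(concepts: dict[str, list[str]]) -> str:
    """Combine search terms: OR within each concept block, AND across blocks."""
    blocks = []
    for name, terms in concepts.items():
        if not terms:
            raise ValueError(f"concept {name!r} has no search terms")
        # Quote multi-word terms so databases treat them as phrases.
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        blocks.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(blocks)


# Hypothetical concept blocks for a broad chemical-exposure/autism map.
concepts = {
    "exposure": ["phthalate*", "bisphenol*", "flame retardants"],
    "outcome": ["autism", "autism spectrum disorder", "ASD"],
}
print(build_query(concepts))
# (phthalate* OR bisphenol* OR "flame retardants") AND (autism OR "autism spectrum disorder" OR ASD)
```

Keeping concepts as named lists makes the strategy easy to report in a protocol and to rerun unchanged when the map is updated.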

Step 3: Screen Studies. Records are screened in two phases (title/abstract, then full text) against the eligibility criteria, typically using specialized systematic review software (e.g., DistillerSR) and following best practices to minimize bias [13].
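A common best practice is independent dual screening followed by a check of agreement between reviewers. As one illustration, consider Cohen's kappa, which compares observed agreement with the agreement expected by chance from each reviewer's include rate. It is not necessarily the statistic any particular SEM reports. The function names and example decisions below are hypothetical.

```python
def cohens_kappa(rater_a: list[bool], rater_b: list[bool]) -> float:
    """Cohen's kappa for two reviewers' include (True) / exclude (False) calls."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty decision lists of equal length")
    n = len(rater_a)
    # Observed agreement: share of records both reviewers decided the same way.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each reviewer's marginal include rate.
    inc_a, inc_b = sum(rater_a) / n, sum(rater_b) / n
    p_chance = inc_a * inc_b + (1 - inc_a) * (1 - inc_b)
    if p_chance == 1:
        # Both reviewers gave one identical decision to every record.
        return 1.0
    return (p_observed - p_chance) / (1 - p_chance)


def conflicts(rater_a, rater_b):
    """Indices of records needing reconciliation by discussion or a third reviewer."""
    return [i for i, (a, b) in enumerate(zip(rater_a, rater_b)) if a != b]


reviewer_1 = [True, True, False, False, False, False, False, False, False, True]
reviewer_2 = [True, False, False, False, False, False, False, False, False, True]
print(round(cohens_kappa(reviewer_1, reviewer_2), 2))  # 0.74
print(conflicts(reviewer_1, reviewer_2))  # [1]
```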

Step 4: Extract and Code Data. This is the core mapping activity. Data from included studies is extracted into structured, web-based forms. Coding focuses on characteristics needed for categorization and visualization, such as:

- Chemical/exposure class

- Study type (e.g., cohort, case-control, animal bioassay, in vitro)

- Health system or outcome assessed

- Model organism (if applicable)

- Study population demographics [3] [13]

The U.S. EPA template also codes for supplemental content such as New Approach Methodologies (NAMs) data and pharmacokinetic studies [3].

Step 5: Critical Appraisal (Optional). Formal risk-of-bias assessment is not always required for mapping. It may be conducted later if the map is used to select studies for a subsequent SR, or performed in a streamlined way to categorize studies by general reliability [1].

Step 6: Develop Interactive Visualization and Database. The coded data is uploaded to interactive visualization platforms (e.g., Tableau, bespoke web applications) to create the SEM. Outputs are designed to be queryable, allowing users to filter and explore the evidence base dynamically [3] [13]. The aWARE project, for instance, is building a Web-based tool for this purpose [13].

Step 7: Narrative Summary and Report. The final step involves interpreting the visualization to produce a narrative summary. This report identifies key evidence clusters (well-studied areas), critical evidence gaps (unstudied or understudied areas), and trends in the literature. This analysis directly informs recommendations for future primary research or targeted systematic reviews [2] [1].
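Steps 4, 6, and 7 can be illustrated together with a minimal sketch. It assumes each included study has already been coded into a flat record. The `StudyRecord` fields and helper names are hypothetical and do not come from a published extraction template. Counting studies per (study type, health system) cell produces the matrix behind an evidence heatmap, and the empty cells are candidate gaps.

```python
from collections import Counter
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class StudyRecord:
    # Hypothetical coding fields mirroring Step 4.
    study_id: str
    chemical: str
    study_type: str      # e.g. "cohort", "animal bioassay", "in vitro"
    health_system: str   # e.g. "hepatic", "neurological"


def filter_records(records, **criteria):
    """Step 6 query: keep records whose fields equal every given criterion."""
    return [r for r in records
            if all(getattr(r, field) == value for field, value in criteria.items())]


def evidence_matrix(records):
    """Step 6 heatmap data: count studies per (study_type, health_system) cell."""
    return Counter((r.study_type, r.health_system) for r in records)


def find_gaps(records, study_types, health_systems):
    """Step 7: list cells of the full grid that contain no studies."""
    counts = evidence_matrix(records)
    return [cell for cell in product(study_types, health_systems) if counts[cell] == 0]


records = [
    StudyRecord("S1", "chemical X", "cohort", "hepatic"),
    StudyRecord("S2", "chemical X", "animal bioassay", "hepatic"),
    StudyRecord("S3", "chemical X", "animal bioassay", "neurological"),
    StudyRecord("S4", "chemical Y", "in vitro", "hepatic"),
]
x_only = filter_records(records, chemical="chemical X")
print(evidence_matrix(x_only))
print(find_gaps(x_only, ["cohort", "animal bioassay", "in vitro"],
                ["hepatic", "neurological"]))
```

An empty cell is only a candidate gap. It may reflect the map's search scope rather than a true absence of research, which is why the narrative summary in Step 7 interprets the visualization rather than reporting it raw.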

Advanced Integration: SEMs with Adverse Outcome Pathways (AOPs) and New Approach Methodologies (NAMs)

The most advanced application of SEMs in chemical risk assessment is their integration with mechanistic toxicology frameworks. This represents the forward edge of the evolution from evidence synthesis to evidence-based predictive toxicology.

Systematic maps can be powerfully coupled with Adverse Outcome Pathway (AOP) development [14]. An AOP is a conceptual framework linking a molecular initiating event (MIE) through key biological events to an adverse outcome relevant to risk assessment. SEMs can be used to systematically survey and catalogue the literature supporting each key event relationship within a proposed AOP.

Integration of SEMs with AOPs and NAMs for Risk Assessment [3] [14]

This integration creates a data-driven, transparent bridge between mechanistic data and apical outcomes. For instance, an SEM on a liver toxicant would catalog not just traditional animal studies showing liver necrosis, but also in vitro studies showing receptor activation, omics studies revealing pathway perturbation, and epidemiological data. When mapped onto an AOP for liver fibrosis, this reveals which key event relationships are strongly supported and which are weak or missing [14].
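The mapping described above can be sketched as a tally over each key event relationship (KER) in a proposed AOP. For each KER, the sketch counts how many mapped studies address it and which evidence streams they come from. The AOP below is a simplified, hypothetical linear chain for liver fibrosis, not an AOP-Wiki entry. The `min_studies` threshold is an arbitrary illustration, not a regulatory criterion.

```python
from collections import defaultdict

# Hypothetical, simplified AOP: key events ordered from the molecular
# initiating event (MIE) to the adverse outcome.
AOP_EVENTS = ["receptor activation", "hepatocyte injury",
              "stellate cell activation", "liver fibrosis"]
# Key event relationships (KERs) link consecutive events in this linear chain.
KERS = list(zip(AOP_EVENTS, AOP_EVENTS[1:]))


def ker_support(study_tags, min_studies=2):
    """Summarise mapped evidence per KER.

    study_tags: (study_id, upstream_event, downstream_event, evidence_stream)
    tuples produced during coding; pairs that are not KERs are ignored.
    """
    by_ker = defaultdict(list)
    for study_id, upstream, downstream, stream in study_tags:
        by_ker[(upstream, downstream)].append((study_id, stream))
    report = {}
    for ker in KERS:
        hits = by_ker.get(ker, [])
        n_studies = len({study_id for study_id, _ in hits})
        if n_studies == 0:
            status = "missing"
        elif n_studies < min_studies:
            status = "weak"
        else:
            status = "supported"
        report[ker] = (status, sorted({stream for _, stream in hits}))
    return report


tags = [
    ("S1", "receptor activation", "hepatocyte injury", "in vitro"),
    ("S2", "receptor activation", "hepatocyte injury", "in vivo"),
    ("S3", "hepatocyte injury", "stellate cell activation", "in vivo"),
]
for ker, (status, streams) in ker_support(tags).items():
    print(" -> ".join(ker), status, streams)
```

Counting studies only shows where evidence exists. Judging whether a KER is actually well supported still requires weight-of-evidence evaluation of the studies themselves.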

Furthermore, SEMs explicitly track the availability of New Approach Methodologies (NAMs)—including high-throughput screening, transcriptomics, and in silico models—as supplemental content [3]. This practice directly supports the regulatory transition toward more efficient, human-relevant toxicity testing strategies by clarifying where traditional data can be supplemented or replaced with mechanistic NAM data.

Conducting a high-quality SEM requires a suite of specialized tools and reagents. The following table details key components of the modern evidence mapper's toolkit.

Table 3: Research Reagent Solutions for Systematic Evidence Mapping

| Tool Category | Specific Item / Software | Function in SEM Process |
|---|---|---|
| Protocol & Project Management | Pre-registration platforms (e.g., PROSPERO, Open Science Framework) | Ensures transparency, reduces bias, and allows for peer review of the SEM plan before work begins. |
| Search & Screening Automation | Bibliographic databases (PubMed, Scopus, Web of Science); AI-assisted screening tools (e.g., SWIFT-Review, RobotAnalyst) | Enables comprehensive literature retrieval and uses machine learning to prioritize records during title/abstract screening, increasing efficiency [3]. |
| Dedicated Review Software | DistillerSR, Rayyan, EPPI-Reviewer | Manages the entire review process (from reference importing, de-duplication, and multi-phase screening to data extraction and reporting) in a single, audit-ready platform [13]. |
| Data Extraction & Coding | Custom web-based extraction forms (e.g., DEXTR); Standardized taxonomy ontologies | Provides structured, consistent fields for data capture (e.g., chemical, study design, outcome). Ontologies ensure standardized terminology across mappers [13]. |
| Visualization & Database Creation | Business Intelligence software (Tableau, Power BI); Interactive web frameworks (R Shiny, Python Dash) | Transforms coded data into interactive heatmaps, bubble plots, and network diagrams. Allows creation of public-facing, queryable evidence databases [1] [13]. |
| Integration with Toxicity Frameworks | AOP-Wiki (aopwiki.org); CompTox Chemicals Dashboard | Provides formal AOP structures to map evidence against and gives access to curated chemical data to inform coding and analysis [14]. |

Quantitative Applications and Impact in Chemical Risk Assessment

The value of SEMs is demonstrated through concrete applications and measurable outcomes in regulatory and research settings. The following table summarizes key quantitative insights and applications derived from the methodology.

Table 4: Quantitative Applications and Impact of Systematic Evidence Maps

| Application Area | Quantitative Insight / Impact | Example from Evidence |
|---|---|---|
| Research Prioritization | Identifies the proportion of studies focused on specific health endpoints vs. others, revealing relative investment and attention. | An SEM on a chemical class may show 60% of studies investigate cancer, 20% investigate reproductive effects, and only 5% investigate neurotoxicity, clearly highlighting the latter as a priority gap [2]. |
| Efficiency in Systematic Review | Reduces the resource burden of subsequent SRs by pre-identifying and categorizing the relevant evidence base. | The U.S. EPA uses SEMs as a mandated first step in IRIS assessments, allowing teams to quickly scope the available literature before committing to a full, resource-intensive SR [3]. |
| Trend Analysis | Tracks the growth of specific research areas (e.g., NAMs) over time through publication year analysis. | A map can quantify the annual increase in publications using high-throughput transcriptomics for endocrine disruptors, demonstrating the field's evolution [3]. |
| Regulatory "Fit-for-Purpose" Analysis | Informs the scope and depth of risk evaluations by categorizing evidence volume and type. | Supports proposed regulatory changes where analysis can be tailored: detailed assessment for high-exposure/high-hazard uses, and streamlined review for low-exposure, data-poor uses [12]. |
| Stakeholder Communication | Provides visual, accessible summaries of complex evidence landscapes for policymakers and the public. | Projects like aWARE develop interactive web tools to communicate the state of science on autism and environment to the research community and interested public [13]. |
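The research-prioritization and trend-analysis rows above reduce to simple descriptive statistics over coded records. Here is a minimal sketch. It assumes each record carries hypothetical `endpoint`, `year`, and `tags` fields. The records are illustrative, not data from any real map.

```python
from collections import Counter


def endpoint_shares(records):
    """Share of studies per endpoint, most-studied first, to expose neglected endpoints."""
    counts = Counter(r["endpoint"] for r in records)
    total = sum(counts.values())
    return {endpoint: n / total for endpoint, n in counts.most_common()}


def annual_counts(records, tag=None):
    """Publications per year, optionally restricted to records carrying a tag (e.g. "NAM")."""
    years = Counter(r["year"] for r in records
                    if tag is None or tag in r.get("tags", ()))
    return dict(sorted(years.items()))


# Illustrative coded records (hypothetical).
records = [
    {"endpoint": "cancer", "year": 2019, "tags": []},
    {"endpoint": "cancer", "year": 2020, "tags": []},
    {"endpoint": "cancer", "year": 2021, "tags": ["NAM"]},
    {"endpoint": "reproductive", "year": 2021, "tags": ["NAM"]},
    {"endpoint": "neurotoxicity", "year": 2022, "tags": ["NAM"]},
]
print(endpoint_shares(records))
print(annual_counts(records, tag="NAM"))
```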

The evolution from systematic review to systematic evidence mapping represents more than a methodological tweak; it is a strategic adaptation of evidence-based science to the realities of modern chemical regulation. SEMs address the core challenges of volume, velocity, and variety in scientific data by providing a rigorous, transparent system for evidence triage and landscape visualization.

The future of this evolution points toward greater automation, integration, and dynamic updating. Machine learning and natural language processing will further streamline screening and data extraction [1]. The integration of SEMs with AOPs and NAMs will mature, creating living, evidence-linked knowledge frameworks that continuously incorporate new data [14]. Finally, the concept of "living" evidence maps that are periodically updated will transform SEMs from static reports into continuous evidence surveillance systems.

For researchers and assessors in chemical risk assessment, mastering SEM methodology is no longer optional but essential. It provides the critical link between the overwhelming deluge of primary research and the actionable, synthesized evidence required to protect public health efficiently and credibly.

Systematic Evidence Maps (SEMs) represent a transformative methodological advancement within chemical risk assessment, designed to characterize broad evidence landscapes and identify critical research gaps with greater efficiency than traditional systematic reviews [2] [15]. Functioning as queryable databases of systematically gathered research, SEMs provide a comprehensive overview of available evidence, supporting priority-setting for risk management and guiding targeted primary research or deeper systematic reviews [8] [3]. This technical guide details the core objectives, methodologies, and applications of SEMs, framing them within the evolving paradigm of evidence-based chemical regulation. It outlines standardized protocols for SEM construction, including problem formulation, evidence retrieval, and data extraction, while introducing advanced analytical techniques such as non-targeted analysis and knowledge graph integration for managing complex, heterogeneous data [16] [8]. The integration of SEMs into regulatory workflows, as exemplified by frameworks from the US EPA IRIS program and the European PARC initiative, demonstrates their critical role in enhancing the transparency, efficiency, and scientific robustness of global chemical safety decisions [3] [17].

The field of chemical risk assessment is characterized by an exponentially growing and heterogeneous evidence base, encompassing toxicological, epidemiological, exposure, and mechanistic data. Traditional narrative reviews and even rigorous systematic reviews (SRs) face significant challenges in this context. While SRs provide a gold standard for synthesizing evidence to answer a specific, focused question (e.g., "Does chemical X cause outcome Y in population Z?"), they are resource-intensive and their narrow scope can be misaligned with the broad evidence needs of regulators and risk managers tasked with evaluating thousands of substances [2] [18].

Systematic Evidence Maps (SEMs) have emerged as a novel tool to bridge this gap. An SEM is defined as a queryable database of systematically gathered research that characterizes the broad features of an evidence base [2] [15]. The core objectives of an SEM are twofold:

- Characterizing the Evidence Landscape: To systematically catalog and describe the available scientific literature on a given chemical, class of chemicals, or health outcome, including the volume, distribution, and key characteristics of studies (e.g., study designs, model systems, exposure levels, endpoints measured).

- Identifying Critical Gaps and Clusters: To visually and analytically reveal where sufficient evidence exists to support a definitive SR (evidence clusters) and where significant knowledge gaps or uncertainties remain, thereby guiding future research and resource allocation [8] [3].

Unlike an SR, an SEM does not aim to synthesize data to estimate a pooled effect size or provide a definitive hazard conclusion. Instead, it serves as a critical precursor and prioritization tool, making the evidence landscape navigable and informing where the application of more intensive SR methods would be most valuable [2]. This approach aligns with the needs of modern regulatory initiatives like the EU's REACH and the US TSCA, which require efficient, transparent, and evidence-based management of large chemical inventories [15] [17].

Methodological Framework for SEM Development

Core Workflow and Problem Formulation

The development of an SEM follows a rigorous, protocol-driven workflow to ensure transparency, reproducibility, and minimization of bias. The process begins with problem formulation, where a broad but structured review question is established. This is often framed using a modified PECO (Population, Exposure, Comparator, Outcome) statement, which is kept broader than in an SR to capture a wide swath of relevant evidence [3]. For example, an SEM on a class of pesticides might define its PECO as: Population (all mammalian laboratory animals and human epidemiological cohorts), Exposure (any study investigating exposure to chemicals within the defined class), Comparator (unexposed or differently exposed controls), and Outcome (any health or biological endpoint) [3].
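A broad PECO like the pesticide example above can be encoded as explicit eligibility sets, so that screening decisions are reproducible and each exclusion carries a stated reason. In the minimal sketch below, the vocabularies, the choice of organophosphates as the "class", and the `peco_eligible` helper are all hypothetical. Real screening also relies on reviewer judgment that a lookup cannot capture.

```python
from dataclasses import dataclass

# Hypothetical broad PECO for a map of one pesticide class (organophosphates
# used purely as an illustration). None means "any value is eligible".
PECO = {
    "population": {"rat", "mouse", "rabbit", "dog", "non-human primate", "human"},
    "exposure": {"chlorpyrifos", "diazinon", "malathion"},
    "comparator": {"unexposed", "vehicle control", "lower exposure"},
    "outcome": None,  # any health or biological endpoint
}


@dataclass
class Candidate:
    population: str
    exposure: str
    comparator: str
    outcome: str


def peco_eligible(candidate, peco=PECO):
    """Return (eligible, failed_elements); failures document the exclusion reason."""
    failed = [element for element, allowed in peco.items()
              if allowed is not None and getattr(candidate, element) not in allowed]
    return not failed, failed


print(peco_eligible(Candidate("rat", "chlorpyrifos", "vehicle control", "thyroid weight")))
print(peco_eligible(Candidate("zebrafish", "diazinon", "unexposed", "mortality")))
```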

The subsequent workflow involves searching multiple bibliographic databases with a comprehensive search strategy, systematic screening of titles/abstracts and full texts against pre-defined eligibility criteria, and finally, data extraction and coding of included studies into a structured database [2].

Diagram 1: Systematic Evidence Map (SEM) Development Workflow

Data Extraction and Structuring: From Flat Tables to Knowledge Graphs

Traditionally, extracted data from systematic maps have been stored in flat, tabular formats (e.g., spreadsheets). However, the complex, interconnected nature of chemical risk assessment data—linking chemicals, molecular targets, toxicological outcomes, study models, and endpoints—makes this approach limiting [8].

The cutting-edge evolution in SEM methodology involves structuring data as a knowledge graph. A knowledge graph is a flexible, schemaless network of entities (nodes) and their relationships (edges) [8]. This model is inherently suited for environmental health data because it can represent complex relationships intuitively (e.g., "Chemical A activates Receptor B, which leads to Outcome C, as reported in Study D") [8]. Knowledge graphs support sophisticated querying and trend analysis that are cumbersome with flat tables, enabling a more dynamic and insightful characterization of the evidence landscape. This graph-based approach also supports long-term goals of interoperability and reusability of evidence across different assessment bodies [8].
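The example relationship in the text can be sketched as a tiny in-memory graph. In this graph, every edge records the study that reported it, so provenance travels with each link. The class name and the predicate names `activates` and `leads_to` are hypothetical. A production map would use a graph database or RDF store rather than Python dictionaries.

```python
from collections import defaultdict


class EvidenceGraph:
    """Tiny in-memory graph in which each edge keeps the studies that reported it."""

    def __init__(self):
        # (subject, predicate) -> {object: set of supporting study IDs}
        self._edges = defaultdict(lambda: defaultdict(set))

    def add(self, subject, predicate, obj, study):
        self._edges[(subject, predicate)][obj].add(study)

    def neighbours(self, subject, predicate):
        """Objects linked from subject by predicate, with their supporting studies."""
        return {obj: set(studies)
                for obj, studies in self._edges.get((subject, predicate), {}).items()}

    def paths(self, chemical, first="activates", second="leads_to"):
        """Two-hop query: chemical -first-> intermediate -second-> outcome."""
        found = []
        for mid, studies_1 in self.neighbours(chemical, first).items():
            for outcome, studies_2 in self.neighbours(mid, second).items():
                found.append((mid, outcome, sorted(studies_1 | studies_2)))
        return sorted(found)


g = EvidenceGraph()
g.add("Chemical A", "activates", "Receptor B", "Study D")
g.add("Receptor B", "leads_to", "Outcome C", "Study D")
g.add("Receptor B", "leads_to", "Outcome C", "Study E")
g.add("Chemical A", "activates", "Receptor F", "Study G")
print(g.paths("Chemical A"))
```

The two-hop query shows the advantage over flat tables. "Which outcomes are linked to this chemical through any receptor, and by which studies?" becomes a graph traversal instead of a chain of table joins.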

Diagram 2: Knowledge Graph Schema for Interconnected Evidence

Experimental Protocols for Evidence Generation and Analysis

Protocol for Evidence Retrieval and Screening (EPA IRIS/PPRTV Template)

The US EPA's Integrated Risk Information System (IRIS) program has developed a standardized template for SEMs that emphasizes rapid, "fit-for-purpose" production [3]. A key component is the use of machine learning-assisted screening.

- Step 1 – Broad Search & De-duplication: Execute search strings across multiple databases (e.g., PubMed, Scopus, Web of Science). Remove duplicates using algorithmic tools.

- Step 2 – Machine Learning Prioritization: Import titles/abstracts into specialized software (e.g., SWIFT-Review, DistillerSR). A small subset (~500) is manually screened by two reviewers to generate a training set. The machine learning model then scores and ranks the remaining records, placing the most likely relevant studies at the top of the workflow.

- Step 3 – Dual-Screen Review: Reviewers screen the prioritized list. This "active learning" approach significantly accelerates the identification of PECO-relevant studies (e.g., mammalian bioassays, epidemiology) while ensuring comprehensive coverage [3].

- Step 4 – Supplemental Tracking: In parallel, studies containing supplemental information (e.g., in vitro assays, toxicokinetic data, New Approach Methodologies - NAMs) are tagged for separate tracking, providing a full panorama of available evidence types [3].
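Steps 1 to 3 can be sketched with the standard library. The sketch assumes records are dicts with `title`, an optional `abstract`, and an optional `doi`. De-duplication keys on DOI or normalized title. The ranker is a small multinomial naive Bayes model trained on the manually screened subset. It stands in to show the prioritization idea and is not the model used by SWIFT-Review or DistillerSR. In a real active-learning loop the model is retrained as reviewers screen more records; this shows a single round.

```python
import math
import re
from collections import Counter


def tokens(text):
    """Lower-case alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def deduplicate(records):
    """Step 1: drop records whose DOI or normalized title was already seen."""
    seen, unique = set(), []
    for rec in records:
        keys = {"title:" + " ".join(tokens(rec["title"]))}
        if rec.get("doi"):
            keys.add("doi:" + rec["doi"].lower())
        if keys & seen:
            continue
        seen |= keys
        unique.append(rec)
    return unique


def train_naive_bayes(labelled):
    """Step 2: multinomial naive Bayes on (text, is_relevant) training pairs."""
    word_counts = {True: Counter(), False: Counter()}
    doc_counts = Counter()
    for text, label in labelled:
        word_counts[label].update(tokens(text))
        doc_counts[label] += 1
    vocab = set(word_counts[True]) | set(word_counts[False])
    model = {}
    for label in (True, False):
        total = sum(word_counts[label].values())
        model[label] = {
            # Laplace (+1) smoothing keeps unseen class/word pairs from zeroing out.
            "prior": math.log((doc_counts[label] + 1) / (len(labelled) + 2)),
            "log_prob": {w: math.log((word_counts[label][w] + 1) / (total + len(vocab)))
                         for w in vocab},
        }
    return model


def relevance_score(model, text):
    """Log-odds of relevance; words outside the training vocabulary are ignored."""
    words = [w for w in tokens(text) if w in model[True]["log_prob"]]
    log_p = {label: model[label]["prior"] + sum(model[label]["log_prob"][w] for w in words)
             for label in (True, False)}
    return log_p[True] - log_p[False]


def rank_for_screening(model, records):
    """Step 3: order unscreened records so the likeliest-relevant come first."""
    return sorted(records,
                  key=lambda r: relevance_score(model, r["title"] + " " + r.get("abstract", "")),
                  reverse=True)


# Hypothetical training set from the manually screened subset.
training = [
    ("oral exposure liver toxicity in rat bioassay", True),
    ("chronic rat study of liver tumours", True),
    ("market analysis of chemical production costs", False),
    ("economic survey of industry supply chains", False),
]
model = train_naive_bayes(training)
unscreened = deduplicate([
    {"title": "Supply chain costs", "abstract": "an economic market survey"},
    {"title": "Liver toxicity", "abstract": "a rat oral bioassay", "doi": "10.1000/x1"},
    {"title": "LIVER TOXICITY.", "abstract": "duplicate entry", "doi": None},
])
for rec in rank_for_screening(model, unscreened):
    print(rec["title"])
```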

Protocol for Non-Targeted Chemical Analysis (NTA) via High-Resolution Mass Spectrometry

Generating new exposure evidence, a frequent gap identified by SEMs, relies on advanced analytical chemistry. Non-targeted analysis (NTA) using liquid chromatography-high-resolution mass spectrometry (LC-HRMS) is a key protocol [16].

- Step 1 – Sample Preparation: Extract chemicals from matrices (e.g., water, serum, dust) using solid-phase extraction (SPE). Include internal standards for quality control.

- Step 2 – LC-HRMS Analysis: Chromatographically separate compounds, followed by full-scan MS1 and data-dependent MS2 fragmentation in positive and negative electrospray ionization modes. Use a calibration standard for mass accuracy.

- Step 3 – Data Processing: Process raw files using software (e.g., MS-DIAL, XCMS). Perform peak picking, alignment, and adduct/isotope annotation.

- Step 4 – Compound Annotation: Query the acquired mass spectra against empirical spectral libraries (e.g., MassBank, NIST, GNPS) and in silico fragmentation tools (e.g., MetFrag, CFM-ID). Use suspect screening lists (e.g., the NORMAN Suspect List Exchange, NORMAN-SLE) for known chemicals of emerging concern. Confidence levels (Level 1-5) are assigned based on the strength of the match [16].

- Step 5 – Prioritization: Prioritize detected but unidentified features (Level 5) based on prevalence, exposure metrics, or link to biological activity via effect-directed analysis (EDA), guiding further structure elucidation efforts [16].
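The mass-accuracy check behind suspect screening can be sketched in three steps. First compute the monoisotopic mass from a molecular formula. Then derive the expected [M+H]+ or [M-H]- m/z. Finally, accept a feature if its error in parts per million (ppm) is within tolerance. The 5 ppm tolerance and the one-entry suspect list are assumptions for illustration, and the formula parser only handles simple formulas built from the listed elements. A mass-only hit is just a tentative candidate. Higher confidence requires MS2 spectral evidence, and Level 1 requires a reference standard.

```python
import re

# Monoisotopic masses (u) of the most abundant isotope of each element.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048,
                "O": 15.99491461956, "F": 18.99840322, "P": 30.97376163,
                "S": 31.97207100, "Cl": 34.96885268}
PROTON = 1.007276466812  # proton mass (u)


def monoisotopic_mass(formula):
    """Neutral monoisotopic mass of a simple formula such as 'C8HF15O2' (no parentheses)."""
    mass = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        mass += MONOISOTOPIC[element] * (int(count) if count else 1)
    return mass


def adduct_mz(neutral_mass, mode):
    """m/z of the singly charged protonated (positive) or deprotonated (negative) ion."""
    if mode == "positive":
        return neutral_mass + PROTON
    if mode == "negative":
        return neutral_mass - PROTON
    raise ValueError("mode must be 'positive' or 'negative'")


def ppm_error(observed, theoretical):
    return (observed - theoretical) / theoretical * 1e6


def suspect_hits(features, suspects, mode, tol_ppm=5.0):
    """Match observed feature m/z values to suspect formulas within a ppm tolerance."""
    hits = []
    for mz in features:
        for name, formula in suspects.items():
            err = ppm_error(mz, adduct_mz(monoisotopic_mass(formula), mode))
            if abs(err) <= tol_ppm:
                hits.append((mz, name, round(err, 2)))
    return hits


# PFOA (C8HF15O2) as a single illustrative suspect, negative ionization mode.
print(suspect_hits([412.9660, 300.1234], {"PFOA": "C8HF15O2"}, "negative"))
```

Features with no hit in any library or suspect list remain unidentified (Level 5) and enter the prioritization step described above.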

Data Presentation: Characterizing Landscapes and Gaps

The value of an SEM is realized through the systematic presentation of quantitative data that summarizes the evidence base. The following tables exemplify core outputs.

Table 1: Evidence Distribution and Characterization from a Hypothetical SEM on "Chemical X". This table provides a high-level summary of the volume and type of evidence available, immediately highlighting areas of abundance and scarcity.

| Evidence Category | Number of Studies | Key Study Characteristics (Examples) | Evidence Strength Indicator |
|---|---|---|---|
| Human Epidemiology | 12 | Cohort studies (n=8), Case-control (n=4); Outcomes: Liver enzyme elevation (n=7), Thyroid hormones (n=5) | Moderate (consistent findings) |
| In Vivo Mammalian Toxicology | 45 | Rodents (n=42), non-rodents (n=3); Exposure duration: Sub-chronic (n=30), Chronic (n=15) | High (extensive testing) |
| In Vitro / Mechanistic Studies | 118 | Endpoints: Receptor activation (n=45), Cytotoxicity (n=38), Genotoxicity (n=35) | High (mechanistic clarity) |
| Environmental Exposure & Fate | 25 | Matrices: Water (n=15), Soil (n=7), Air (n=3); Regions: North America (n=18), Europe (n=7) | Moderate |
| Toxicokinetics (ADME) | 8 | Studies in rats (n=6), in vitro hepatic metabolism (n=2) | Critical Gap |
| Toxicity to Aquatic Organisms | 5 | Acute toxicity to daphnia (n=3), fish early-life stage (n=2) | Substantial Gap |

Hypothetical data; the table structure follows the methodology described in [3].

Table 2: Methodological Comparison of Evidence Synthesis Frameworks. This table contrasts SEMs with other review types, clarifying their distinct role in the assessment ecosystem.

| Feature | Systematic Evidence Map (SEM) | Systematic Review (SR) for Hazard ID | Traditional Narrative Review |
|---|---|---|---|
| Primary Objective | Characterize evidence extent, distribution, and gaps | Synthesize evidence to answer a focused hazard question | Summarize evidence based on expert selection |
| Research Question Scope | Broad (e.g., "What evidence exists on chemical X?") | Narrow, specific PECO (e.g., "Does X cause liver toxicity?") | Variable, often broad |
| Evidence Synthesis | No quantitative synthesis; descriptive summary | Quantitative (meta-analysis) and/or qualitative synthesis required | Selective, qualitative description |
| Resource Intensity | Moderate to High (broader search, less synthesis) | High (intensive search, appraisal, synthesis) | Low to Moderate |
| Key Output | Interactive database; visual evidence maps; gap analysis report | Hazard conclusion; confidence rating; dose-response analysis | Scholarly article summarizing current understanding |
| Regulatory Use Case | Priority-setting; problem formulation; informing SR scoping | Hazard identification; derivation of toxicity reference values | Background context; hypothesis generation |
| Example Framework | US EPA IRIS SEM Template [3]; CEE Guidelines [8] | Navigation Guide [19]; OHAT Approach [19] | Common in academic journals |

Information synthesized from [2] [19] [8]

Visualization of Evidence Landscapes and Relationships

The PECO Framework in Evidence Mapping

The PECO framework structures the research question and eligibility criteria. In an SEM, each element is defined broadly to capture the evidence landscape.

Diagram 3: Broad PECO Framework for Systematic Evidence Mapping

The Scientist's Toolkit: Essential Research Reagents and Materials

The execution of protocols highlighted in this guide, from literature synthesis to laboratory analysis, relies on specialized tools and materials.

Table 3: Research Reagent Solutions for Evidence Mapping and Generation

| Item / Solution | Function in SEM/Evidence Generation | Example & Notes |
|---|---|---|
| Systematic Review Software | Manages the SEM workflow: reference import, deduplication, dual-screen review, data extraction, and reporting. | DistillerSR, Rayyan, CADIMA. Essential for transparency and reproducibility [3]. |
| Machine Learning Prioritization Tools | Accelerates title/abstract screening by learning from reviewer decisions and ranking remaining records by predicted relevance. | Available in tools such as SWIFT-Review and Abstrackr. Reduces screening workload by 50-70% [3]. |
| Graph Database Platform | Stores and queries the SEM knowledge graph, allowing for complex, relationship-based exploration of the evidence network. | Neo4j, Amazon Neptune. Enables moving beyond flat tables to interconnected data models [8]. |
| Liquid Chromatography-HRMS System | The core analytical instrument for non-targeted and suspect screening analysis to identify unknown chemicals in exposure assessment. | Orbitrap or Q-TOF mass spectrometers coupled to UHPLC. Provides high mass accuracy and resolution [16]. |
| Solid-Phase Extraction (SPE) Cartridges | Isolate and concentrate a wide range of organic chemicals from complex environmental or biological samples prior to LC-HRMS analysis. | Mixed-mode (C18/SAX/SCX) cartridges are common for broad-spectrum extraction [16]. |
| Chemical Reference Standard Libraries | Essential for confirming the identity of suspected chemicals (Level 1 identification) in non-targeted analysis and for quantification. | Commercial suites (e.g., PFAS, pesticide mixes) and custom-synthesized standards for emerging compounds [16]. |
| Toxico-Ontologies | Controlled, hierarchical vocabularies that provide standardized terms for annotating evidence (e.g., for outcomes, pathways). | The Adverse Outcome Pathway (AOP) ontology; BioAssay Ontology (BAO). Promotes data interoperability [8]. |
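Workload reductions like the 50-70% figure quoted for machine-learning prioritization tools in the table above are commonly expressed as the fraction of records reviewers did not need to screen once a target recall was reached in the ranked list. This idea is related to the "work saved over sampling" metric. The sketch assumes the true relevance labels of the ranked list are known, as in a retrospective evaluation. It is not necessarily how [3] computed its figure, and the target recall is a user choice (95% is common).

```python
import math


def screening_savings(ranked_labels, target_recall=0.95):
    """Fraction of records left unscreened once target recall is first reached.

    ranked_labels: true relevance labels (True/False) in the order a
    prioritization tool ranked the records, as known in a retrospective test.
    """
    if not 0 < target_recall <= 1:
        raise ValueError("target_recall must be in (0, 1]")
    total_relevant = sum(ranked_labels)
    if total_relevant == 0:
        raise ValueError("no relevant records to recall")
    # Small tolerance guards against float products landing just above an integer.
    needed = math.ceil(target_recall * total_relevant - 1e-9)
    found = 0
    for screened, is_relevant in enumerate(ranked_labels, start=1):
        found += is_relevant
        if found >= needed:
            return 1 - screened / len(ranked_labels)
    raise AssertionError("unreachable: needed never exceeds total_relevant")


ranked = [True, True, False, True, False, False, True, False, False, False]
print(screening_savings(ranked, target_recall=0.75))
```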

Integration with Regulatory Decision-Making and Future Directions

SEMs are increasingly embedded in regulatory science. The US EPA uses them as a required first step in its IRIS and PPRTV assessments to scope the literature and determine the feasibility and focus of subsequent SRs [3]. In Europe, the Partnership for the Assessment of Risks from Chemicals (PARC) is leveraging SEM-like approaches alongside innovative monitoring to build a next-generation risk assessment paradigm [16] [17].

The 2025 revision of the EU's REACH regulation emphasizes the need for "simpler, faster, bolder" processes [17]. SEMs directly contribute to these goals by enabling rapid evidence surveillance and efficient prioritization of assessment resources. Furthermore, the push for greater transparency through tools like the Digital Product Passport under the EU's Ecodesign Regulation will create new streams of chemical use data that can be integrated into evidence maps [20] [9].

Future advancements will focus on:

- Automation and Living Maps: Integrating natural language processing and machine learning more deeply to create "living" SEMs that continuously update with new publications [8] [3].

- Quantitative Gap Analysis: Moving beyond qualitative gap identification to quantitative models that predict the impact of specific data gaps on risk assessment uncertainty.

- Global Evidence Integration: Linking SEM databases across international regulatory bodies (e.g., EPA, ECHA, OECD) to create a unified global evidence landscape for high-priority substances.

Systematic Evidence Maps represent a fundamental evolution in evidence-based chemical risk assessment. By systematically characterizing broad evidence landscapes and pinpointing critical gaps, they provide an indispensable tool for rational priority-setting, efficient resource allocation, and strategic research planning. Their integration with advanced computational methods like knowledge graphs and machine learning, coupled with cutting-edge analytical protocols for evidence generation, positions SEMs as a cornerstone of a more transparent, agile, and scientifically robust regulatory future. As global chemical production and complexity grow, the role of SEMs in ensuring that risk management decisions are informed by a comprehensive and clear-sighted view of the available science will only become more vital.

In modern chemical risk assessment and research, the volume of scientific literature is vast and growing exponentially. Traditional narrative reviews or narrowly focused systematic reviews, while valuable, often fail to provide the comprehensive, queryable overview required for proactive decision-making in regulatory and research prioritization [15]. Systematic Evidence Maps (SEMs) have emerged as a critical methodology to address this gap. An SEM is defined as a queryable database of systematically gathered research that characterizes broad features of an evidence base, providing a comprehensive summary of large bodies of policy-relevant research [15].

The core function of an SEM is not to perform a full synthesis or meta-analysis, as in a systematic review, but to systematically identify, catalogue, and characterize available evidence. This mapping enables forward-looking predictions, trendspotting, and the efficient identification of evidence clusters and critical gaps [15]. In chemical risk assessment research, SEMs are therefore foundational tools: they transform disconnected studies into structured, accessible knowledge assets. The primary outputs of an SEM (interactive databases, tailored visualizations, and detailed evidence inventories) are what deliver its value to researchers, risk assessors, and policy-makers, enabling evidence-based prioritization and hypothesis generation in fields such as toxicology and drug safety [21] [22].

Defining the Core Outputs of a Systematic Evidence Map

The utility of a Systematic Evidence Map is realized through three interconnected, digital-first outputs. Each serves a distinct purpose in making complex evidence bases accessible and actionable.

- Interactive Databases: These are the foundational, structured repositories of all extracted study data. They allow users to query the evidence base using multiple filters (e.g., chemical, outcome, study population, study type) to dynamically retrieve a customized subset of studies. This interactivity moves beyond static PDF tables, empowering users to ask their own questions of the data [23].

- Dynamic Visualizations: These are graphical representations derived from the database. Effective visualizations translate complex metadata and findings into intuitive charts, heat maps, and network diagrams. They are designed not merely for presentation but for exploration, often containing interactive elements that are linked to the underlying database [24] [25].

- Structured Evidence Inventories: This output is a comprehensive, standardized catalogue of all included studies. It typically includes core metadata (e.g., citation, study design), a summary of key elements relevant to the map's scope (e.g., exposure parameters, endpoints measured), and tags or codes applied during the screening process. It serves as the definitive index and source for the other two outputs [15] [21].

The relationship between these outputs is synergistic. The evidence inventory is populated through the systematic review workflow. Its structured data feeds the interactive database, which powers the backend of dynamic visualizations. Users can start their exploration with a visualization to spot a trend, then query the database to see the contributing studies, and finally examine the detailed record for each study in the inventory. This ecosystem transforms a literature collection into an explorable knowledge system.

Diagram 1: The Synergistic Relationship Between Core SEM Outputs. The systematic workflow creates an inventory, which feeds a queryable database that powers visualizations for end-users.

In-Depth Analysis of Core Outputs

Interactive Databases: Architecture and Implementation

An interactive database is the engine of an SEM. Its architecture is designed for flexibility and user autonomy, allowing stakeholders to navigate the evidence without relying on the original research team.

A robust technical architecture follows a layered approach:

- Data Layer: A relational database (e.g., PostgreSQL or SQLite, queried with SQL) or a NoSQL document store containing all extracted data points from the evidence inventory.

- Application Logic Layer: This layer handles user requests, processes queries, and retrieves data. It is often built using frameworks like R Shiny or Python Dash, or embedded within business intelligence tools like Tableau.

- Presentation Layer: The user interface (UI), typically a web-based dashboard, where users select filters and view results [23].
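A minimal sketch of the data and application-logic layers follows, assuming an in-memory SQLite table. The schema, the column names, the example records, and the helper `filter_studies` are all hypothetical; in a real SEM the same query logic would sit behind a framework such as R Shiny or Python Dash, which would also supply the presentation layer.

```python
import sqlite3

# Data layer: an in-memory SQLite table stands in for the SEM database.
# Table and column names are illustrative assumptions, not a standard schema.
SCHEMA = """
CREATE TABLE studies (
    study_id   TEXT PRIMARY KEY,
    chemical   TEXT NOT NULL,
    outcome    TEXT NOT NULL,
    study_type TEXT NOT NULL,  -- e.g. 'in vivo', 'in vitro', 'epidemiological'
    population TEXT,           -- population or test system
    year       INTEGER
)
"""

# Only whitelisted columns can be filtered, so user input never becomes SQL text.
FILTERABLE = {"chemical", "outcome", "study_type", "population"}


def build_database(records):
    """Create the data layer and load evidence-inventory records (dicts)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    conn.executemany(
        "INSERT INTO studies VALUES "
        "(:study_id, :chemical, :outcome, :study_type, :population, :year)",
        records,
    )
    return conn


# Application logic layer: translate dashboard filter selections into a query.
def filter_studies(conn, **filters):
    """Return IDs of studies matching every supplied filter (AND semantics)."""
    unknown = set(filters) - FILTERABLE
    if unknown:
        raise ValueError(f"unsupported filter(s): {sorted(unknown)}")
    sql = "SELECT study_id FROM studies"
    if filters:
        sql += " WHERE " + " AND ".join(f"{col} = ?" for col in filters)
    sql += " ORDER BY study_id"
    # Values are passed as bound parameters, in the same order as the columns.
    return [row[0] for row in conn.execute(sql, list(filters.values()))]


# Hypothetical example records.
RECORDS = [
    {"study_id": "S1", "chemical": "inorganic arsenic", "outcome": "genotoxicity",
     "study_type": "in vitro", "population": "hepatic cell line", "year": 2019},
    {"study_id": "S2", "chemical": "inorganic arsenic", "outcome": "genotoxicity",
     "study_type": "in vivo", "population": "mouse", "year": 2015},
    {"study_id": "S3", "chemical": "inorganic arsenic", "outcome": "skin lesions",
     "study_type": "epidemiological", "population": "adults", "year": 2021},
    {"study_id": "S4", "chemical": "inorganic arsenic", "outcome": "genotoxicity",
     "study_type": "in vitro", "population": "lymphocytes", "year": 2020},
]

conn = build_database(RECORDS)
# Presentation-layer stand-in: a dashboard would render these rows instead.
print(filter_studies(conn, study_type="in vitro", outcome="genotoxicity",
                     population="hepatic cell line"))
```

Whitelisting filterable columns and binding values as parameters is one simple way to let users combine arbitrary filters without exposing the database to SQL injection.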

Key interactive functionalities must include:

- Multi-dimensional Filtering: Allowing users to filter studies simultaneously by chemical, health outcome, study type (in vivo, in vitro, epidemiological), population, and other relevant tags.

- Linked Highlighting: Selecting a study in a results table highlights its position on a corresponding visualization (e.g., a bubble chart), and vice versa.

- Dynamic Search and Export: A keyword search across study metadata and abstracts, with the ability to export filtered results to standard formats (CSV, PDF).
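The search and export functions can be illustrated with a short sketch using only the Python standard library. The field names, sample records, and helper names (`keyword_search`, `export_csv`) are hypothetical, and plain substring matching is a simplification; a production dashboard would more likely use a full-text index (for example, SQLite's FTS5 extension).

```python
import csv
import io

# Illustrative inventory rows; field names are assumptions for this sketch.
STUDIES = [
    {"study_id": "S1", "title": "Arsenic-induced DNA damage in HepG2 cells",
     "abstract": "Comet assay showed dose-dependent genotoxicity.",
     "study_type": "in vitro"},
    {"study_id": "S2", "title": "Arsenic exposure and skin lesions in a cohort",
     "abstract": "Prospective cohort of adults in an exposed region.",
     "study_type": "epidemiological"},
    {"study_id": "S3", "title": "Hepatic gene expression after arsenic dosing in mice",
     "abstract": "Microarray analysis of liver tissue.",
     "study_type": "in vivo"},
]


def keyword_search(studies, query, fields=("title", "abstract")):
    """Case-insensitive search: every query term must occur in the searched fields."""
    terms = query.lower().split()
    hits = []
    for study in studies:
        text = " ".join(study.get(f, "") for f in fields).lower()
        if all(term in text for term in terms):
            hits.append(study)
    return hits


def export_csv(studies, columns):
    """Serialize a filtered subset to CSV text; unlisted fields are dropped."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(studies)
    return buffer.getvalue()


hits = keyword_search(STUDIES, "arsenic genotoxicity")
print(export_csv(hits, ["study_id", "title", "study_type"]))
```

In practice the CSV text would be written to a file or offered as a download; PDF export would require a separate reporting library.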

For example, an SEM on inorganic arsenic could allow a user to filter for only in vitro studies that investigated genotoxicity as an endpoint in hepatic cell lines, instantly generating a list of relevant studies and a summary plot [21].

Dynamic Visualizations: Principles of Effective Design

Visualizations translate database queries into intuitive graphics. Beyond simple charts, they must be designed for clarity, accuracy, and inclusivity.

Core Design Principles:

- Perceptual Uniformity: Use perceptually uniform color maps (e.g., viridis, batlow), in which equal steps in the data appear as equal steps in perceived color. Avoid rainbow color maps, which create artificial boundaries and can mislead readers [26].

- Universal Readability: Ensure visualizations are interpretable by people with color vision deficiencies and remain effective when printed in black and white [26].

- Accessibility Compliance: Adhere to the Web Content Accessibility Guidelines (WCAG). At Level AA, graphical objects and UI components need a contrast ratio of at least 3:1 against adjacent colors (Success Criterion 1.4.11), and text within graphics needs at least 4.5:1, or 3:1 for large text (Success Criterion 1.4.3) [27] [28].
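The contrast thresholds above can be checked programmatically. The sketch below implements the WCAG 2.x definitions of relative luminance and contrast ratio for `#RRGGBB` colors; the helper names are hypothetical. It uses the 0.04045 linearization threshold from the sRGB standard (older WCAG texts quote 0.03928, which gives identical results for 8-bit colors).

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel value to linear light."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """WCAG relative luminance of a '#RRGGBB' color: 0 for black, 1 for white."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a, color_b):
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1 to 21."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def meets_wcag(color_a, color_b, kind="graphic"):
    """Compare against the Level AA minimums: 3:1 graphics or large text, 4.5:1 text."""
    threshold = {"graphic": 3.0, "large_text": 3.0, "text": 4.5}[kind]
    return contrast_ratio(color_a, color_b) >= threshold


print(round(contrast_ratio("#777777", "#FFFFFF"), 2))
```

Mid-gray `#777777` on white is a useful edge case: it clears the 3:1 bar for chart elements but falls just short of 4.5:1 for normal-size text labels.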

- Empathetic and Ethical Design: Acknowledge the people behind data points. Avoid aggregation that erases small subgroups; use "near and far" graphics to show both broad trends and individual impacts where possible [24].

Common Visualization Types in SEMs:

- Evidence Heatmaps: Display chemicals on one axis and health outcomes on another, with cells colored by the volume or strength of evidence. This instantly identifies well-studied and data-poor areas.

- Interactive Bubble Charts: Plot studies so that bubble position, color, and size each encode a different dimension (e.g., study quality, sample size, effect direction).

- Temporal Trend Graphs: Show the accumulation of studies over time, which is useful for identifying emerging research topics.
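Behind an evidence heatmap sits a simple count matrix. The sketch below, using hypothetical chemical and outcome codes, builds that chemical-by-outcome grid and lists empty cells as candidate evidence gaps. It counts study-outcome links, so a study reporting two outcomes contributes to two cells; a real SEM would read these pairs from the evidence inventory and pass the grid to a plotting library with a perceptually uniform color map.

```python
from collections import Counter

# Hypothetical coded (chemical, outcome) links, one per study-outcome pair.
LINKS = [
    ("arsenic", "cancer"), ("arsenic", "cancer"), ("arsenic", "neurotoxicity"),
    ("cadmium", "cancer"), ("cadmium", "renal"), ("cadmium", "renal"),
    ("lead", "neurotoxicity"), ("lead", "neurotoxicity"), ("lead", "neurotoxicity"),
]


def evidence_matrix(links):
    """Build the chemical x outcome count grid that a heatmap colors."""
    counts = Counter(links)
    chemicals = sorted({c for c, _ in links})
    outcomes = sorted({o for _, o in links})
    grid = [[counts[(c, o)] for o in outcomes] for c in chemicals]
    return chemicals, outcomes, grid


def evidence_gaps(chemicals, outcomes, grid, threshold=0):
    """Cells with at most `threshold` links: candidate data-poor areas."""
    return [
        (chem, out)
        for chem, row in zip(chemicals, grid)
        for out, n in zip(outcomes, row)
        if n <= threshold
    ]


chemicals, outcomes, grid = evidence_matrix(LINKS)
# Plain-text rendering of the grid; a dashboard would color each cell instead.
print("".ljust(10) + "".join(o.ljust(14) for o in outcomes))
for chem, row in zip(chemicals, grid):
    print(chem.ljust(10) + "".join(str(n).ljust(14) for n in row))
print("gaps:", evidence_gaps(chemicals, outcomes, grid))
```

Raising `threshold` turns the gap list into a "thinly studied" list, which is often more useful for prioritization than strictly empty cells.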

Diagram 2: User Interaction Workflow with an SEM Dashboard. The process is dynamic and user-driven, from initial filtering to exploration and export.

Structured Evidence Inventories: The Foundational Layer

The evidence inventory is the meticulously curated dataset upon which all other outputs depend. It is the product of a rigorous, protocol-driven screening and data extraction process.

Development Protocol:

- Protocol Registration: Define the research question, search strategy, and inclusion/exclusion criteria a priori.

- Search & De-duplication: Execute searches across multiple databases (e.g., PubMed, Web of Science) and remove duplicates [22].

- Screening: Conduct title/abstract and full-text screening, typically by two independent reviewers.

- Data Extraction & Coding: Extract standardized metadata and content from included studies into a piloted form. This includes tagging studies with relevant modifiers (e.g., "susceptibility factor: age" for an arsenic SEM) [21].

- Quality Assurance: Implement consistency checks and inter-rater reliability assessments.
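Two of these steps, de-duplication and inter-rater reliability, lend themselves to a short sketch. The matching rule below (normalized DOI first, otherwise normalized title plus year) is a deliberately simple assumption rather than the method of any particular tool, and Cohen's kappa is one common agreement statistic among several. The function names are hypothetical.

```python
import re
from collections import Counter


def dedup_key(record):
    """Prefer a normalized DOI; fall back to a normalized title plus year."""
    doi = (record.get("doi") or "").strip().lower()
    doi = re.sub(r"^https?://(dx\.)?doi\.org/", "", doi)
    if doi:
        return ("doi", doi)
    title = re.sub(r"[^a-z0-9]+", " ", record.get("title", "").lower()).strip()
    return ("title", title, record.get("year"))


def deduplicate(records):
    """Keep the first occurrence of each key, preserving search-result order."""
    seen, unique = set(), []
    for rec in records:
        key = dedup_key(rec)
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two reviewers' screening decisions."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty decision lists")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / n**2
    if expected == 1:
        # Both reviewers used one identical category throughout; kappa is
        # undefined here and is reported as perfect agreement by convention.
        return 1.0
    return (observed - expected) / (1 - expected)


reviewer_1 = ["inc", "inc", "exc", "exc", "inc", "exc", "exc", "exc", "inc", "exc"]
reviewer_2 = ["inc", "exc", "exc", "exc", "inc", "exc", "exc", "inc", "inc", "exc"]
print(round(cohens_kappa(reviewer_1, reviewer_2), 3))
```

In this example the reviewers agree on 8 of 10 records, but because chance agreement is 0.52, kappa is only about 0.58, which is why raw percent agreement alone can overstate screening consistency.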

Content and Structure: A single record in an evidence inventory extends beyond a citation. It is a structured data object containing fields such as:

- Study Identification: DOI, authors, year.

- Study Characteristics: Design (cohort, case-control, in vitro), population/sample, exposure assessment method.

- Intervention/Exposure: Specific chemical, dose, duration.

- Outcomes: Endpoints measured (e.g., cytotoxicity, gene expression, tumor incidence).

- Modifying Factors: Tags for susceptibility factors like genetics, age, or co-exposures [21] [22].

- Results: Key quantitative findings (e.g., points of departure, variability factors) if extraction is quantitative.

This granular, structured data is what enables the powerful filtering and visualization in the downstream outputs.
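One way to represent such a record in code is a typed data class whose fields mirror the list above. The class name, field names, and example values are hypothetical; actual inventories define their fields in the map's protocol and extraction form.

```python
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class InventoryRecord:
    """One study in the evidence inventory (illustrative field names)."""
    # Study identification
    doi: str
    authors: list[str]
    year: int
    # Study characteristics
    design: str                 # e.g. "cohort", "case-control", "in vitro"
    population: str             # population or test system
    exposure_assessment: str
    # Intervention/exposure
    chemical: str
    dose: Optional[str] = None      # kept as text because units vary across studies
    duration: Optional[str] = None
    # Outcomes and modifying factors
    endpoints: list[str] = field(default_factory=list)
    modifiers: dict[str, str] = field(default_factory=dict)
    # Quantitative results, filled only when extraction is quantitative
    results: dict[str, float] = field(default_factory=dict)

    def has_modifier(self, name: str) -> bool:
        return name in self.modifiers


record = InventoryRecord(
    doi="placeholder-doi",  # hypothetical placeholder, not a real DOI
    authors=["Doe J"],
    year=2021,
    design="in vitro",
    population="HepG2 cells",
    exposure_assessment="nominal medium concentration",
    chemical="sodium arsenite",
    dose="0-10 uM",
    duration="24 h",
    endpoints=["cytotoxicity", "DNA damage"],
    modifiers={"susceptibility factor": "genetics"},
)
# asdict() yields a flat-ish dict ready for loading into the interactive database.
print(asdict(record)["endpoints"])
```

Keeping each record as a typed object, rather than a free-text row, is what makes downstream filtering on fields such as `modifiers` reliable.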

Case Studies & Quantitative Analysis

Recent applications demonstrate the practical value and quantitative findings generated by SEM outputs in chemical risk assessment.

Table 1: Comparison of Two Recent Systematic Evidence Map Studies in Chemical Risk Assessment

| Study Focus | Inorganic Arsenic & Susceptibility [21] | Human Toxicodynamic (TD) Variability [22] |
|---|---|---|
| Primary Objective | To map literature on factors modifying susceptibility to iAs exposure. | To map empirical data on human TD variability to assess default uncertainty factors. |
| Search Yield | Not explicitly stated in abstract. | 2,408 studies retrieved from PubMed/Web of Science (2004-2023). |
| Final Included Studies | Not explicitly stated in abstract. | 23 in vitro studies (only 7 provided a quantitative TD variability factor). |
| Key Gap Identified | Characterization of the distribution and density of evidence on modifiers (e.g., genetics, nutrition). | A severe scarcity of studies designed to isolate and quantify human TD variability. |
| Impact on Assessment | Provides a clear roadmap for future targeted systematic reviews on specific susceptibility factors. | Suggests the default UF of 3.16 for TD variability is based on extremely limited data, highlighting a critical research need. |
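The value of 3.16 follows from the conventional subdivision of the default 10-fold intraspecies (human variability) uncertainty factor into equal toxicokinetic (TK) and toxicodynamic (TD) subfactors, as in the IPCS framework for chemical-specific adjustment factors. The subscript notation below is chosen for this illustration:

```latex
\mathrm{UF}_{\mathrm{H}}
  = \mathrm{UF}_{\mathrm{H,TK}} \times \mathrm{UF}_{\mathrm{H,TD}}
  = 10^{0.5} \times 10^{0.5}
  = 10,
\qquad
10^{0.5} \approx 3.16
```

Under that framework, a data-derived TD factor for a specific chemical would replace the default $10^{0.5}$ TD term while the TK default could be retained, which is why the scarcity of quantitative TD data identified by the map matters.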

Furthermore, regulatory agencies are formally adopting frameworks powered by SEM-like logic. The U.S. FDA's newly proposed Post-Market Assessment Prioritization Tool for food chemicals is a prime example. It employs a Multi-Criteria Decision Analysis (MCDA) approach where chemicals are scored on structured criteria, generating a ranked, evidence-based list for review [29].

Table 2: Criteria from the FDA's Proposed Prioritization Tool (MCDA Framework) [29]

| Criterion Category | Specific Criteria Examples |
|---|---|
| Public Health Criteria | Toxicity (across multiple data types), changes in population exposure, relevance to susceptible subpopulations (e.g., infants), presence of new scientific information. |
| Other Decisional Criteria | Level of external stakeholder attention, regulatory actions by other agencies (e.g., EU, California), potential impact on public confidence, detection in multiple commodities. |

This tool operationalizes the principles of an SEM—systematic gathering and structured scoring of evidence—into a reproducible, transparent regulatory process [29].
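The general MCDA idea can be sketched as a weighted sum of normalized criterion scores followed by a ranking. Everything specific in this example is hypothetical: the criterion keys, the 0-to-1 scoring scale, the weights, and the candidate chemicals are illustrative, and the FDA tool's actual scoring scheme and aggregation method may differ. Weighted-sum aggregation is simply the most basic MCDA method.

```python
# Hypothetical criteria and weights (summing to 1); these are NOT the
# FDA tool's actual scales or weights.
WEIGHTS = {
    "toxicity": 0.35,
    "exposure_change": 0.25,
    "susceptible_subpopulations": 0.20,
    "new_information": 0.10,
    "external_attention": 0.10,
}


def mcda_score(scores, weights=WEIGHTS):
    """Weighted-sum MCDA score; each criterion is pre-scored on a 0-1 scale."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing criterion scores: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


def rank_chemicals(candidates, weights=WEIGHTS):
    """Return (chemical, score) pairs, highest priority first."""
    scored = [(name, mcda_score(s, weights)) for name, s in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical candidates with illustrative criterion scores.
CANDIDATES = {
    "chemical A": {"toxicity": 0.9, "exposure_change": 0.4,
                   "susceptible_subpopulations": 1.0, "new_information": 0.5,
                   "external_attention": 0.2},
    "chemical B": {"toxicity": 0.3, "exposure_change": 0.9,
                   "susceptible_subpopulations": 0.0, "new_information": 1.0,
                   "external_attention": 0.8},
    "chemical C": {"toxicity": 0.5, "exposure_change": 0.5,
                   "susceptible_subpopulations": 0.5, "new_information": 0.5,
                   "external_attention": 0.5},
}

for name, score in rank_chemicals(CANDIDATES):
    print(f"{name}: {score:.3f}")
```

Because the weights are explicit inputs, a sensitivity check (re-ranking under alternative weight sets) is straightforward, which supports the transparency and reproducibility the tool aims for.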

The Scientist's Toolkit: Essential Software & Guidelines

Creating professional SEM outputs requires a combination of specialized software and adherence to best practice guidelines.

Table 3: Essential Toolkit for Developing Systematic Evidence Map Outputs

| Tool Category | Specific Tool / Guideline | Primary Function in SEM Development |
|---|---|---|
| Systematic Review Software | DistillerSR, Rayyan, Covidence | Manages the screening process (title/abstract, full-text), facilitates dual review, and maintains an audit trail. Often serves as the initial repository for the evidence inventory [21]. |
| Data Analysis & Visualization | R (with ggplot2, plotly), Python (with pandas, Matplotlib, Seaborn), Tableau | Performs data wrangling, statistical analysis, and generates static and interactive visualizations. R Shiny and Python Dash are key for building web apps [23]. |
| Dashboard Development | R Shiny, Python Dash, Tableau Public, Power BI | Provides frameworks for building the interactive, web-based dashboard that combines database queries, visualizations, and UI controls into a single application [23]. |
| Color & Accessibility | Scientific Colour Maps (e.g., batlow) [26], WCAG Contrast Checkers [27] [28] | Ensures visualizations are perceptually uniform, accessible to color-blind users, and meet minimum contrast standards for text and graphics. |
| Style & Reproducibility | Urban Institute Style Guide [25], GitHub, RMarkdown/Jupyter | Promotes consistent, professional styling across charts and supports reproducible research practices through version control and literate programming. |

Future Directions & Integration

The future of SEM outputs lies in greater integration, automation, and intelligence. Interoperability between different evidence maps and chemical databases (e.g., EPA's CompTox, ECHA) will create a connected ecosystem of chemical safety evidence. The incorporation of machine learning is advancing rapidly, with models assisting in primary screening (reducing manual workload) and in identifying hidden patterns or predicting novel hazard endpoints across large evidence bases. Furthermore, the line between SEMs and risk assessment is blurring, as seen with the FDA's tool [29]. SEM outputs are evolving from informational resources into direct, decision-support systems that guide resource allocation for both research and regulation. As these tools become more sophisticated and user-friendly, they will be indispensable for navigating the complex evidence landscape of 21st-century chemical risk science.
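To make machine-learning-assisted screening concrete, the sketch below trains a multinomial naive Bayes classifier with Laplace smoothing on abstracts that reviewers have already screened, then ranks unscreened abstracts so the likely-relevant ones are read first. Naive Bayes is a simple stand-in chosen for illustration, not the model used by any particular screening product; the class name, training texts, and labels are hypothetical. Human reviewers still make every inclusion decision; the model only changes the order.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesScreener:
    """Multinomial naive Bayes with add-one smoothing over screened abstracts."""

    def fit(self, texts, labels):
        """labels: True if reviewers included the record, False if excluded."""
        self.doc_counts = Counter(labels)
        if self.doc_counts[True] == 0 or self.doc_counts[False] == 0:
            raise ValueError("training data needs included and excluded examples")
        self.word_counts = {True: Counter(), False: Counter()}
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokenize(text))
        self.vocab = set(self.word_counts[True]) | set(self.word_counts[False])
        self.totals = {k: sum(c.values()) for k, c in self.word_counts.items()}
        return self

    def _log_joint(self, tokens, label):
        n_docs = sum(self.doc_counts.values())
        log_p = math.log(self.doc_counts[label] / n_docs)  # class prior
        denom = self.totals[label] + len(self.vocab)
        for tok in tokens:
            if tok in self.vocab:  # words never seen in training are ignored
                log_p += math.log((self.word_counts[label][tok] + 1) / denom)
        return log_p

    def relevance(self, text):
        """Posterior probability that an unscreened abstract is relevant."""
        tokens = tokenize(text)
        lp_inc = self._log_joint(tokens, True)
        lp_exc = self._log_joint(tokens, False)
        m = max(lp_inc, lp_exc)  # normalize in log space to avoid underflow
        p_inc, p_exc = math.exp(lp_inc - m), math.exp(lp_exc - m)
        return p_inc / (p_inc + p_exc)

    def rank(self, texts):
        """Order unscreened abstracts from most to least likely relevant."""
        return sorted(texts, key=self.relevance, reverse=True)


TRAIN_TEXTS = [
    "arsenic exposure induced genotoxicity in human hepatic cells",
    "cadmium toxicity and renal dysfunction in exposed workers",
    "arsenic and DNA damage in lymphocytes",
    "consumer survey of smartphone usage patterns",
    "marketing strategy for retail food products",
    "economic analysis of crop yields",
]
TRAIN_LABELS = [True, True, True, False, False, False]
UNSCREENED = [
    "arsenic induced DNA damage in liver cells",
    "retail marketing survey of consumers",
]

screener = NaiveBayesScreener().fit(TRAIN_TEXTS, TRAIN_LABELS)
for text in screener.rank(UNSCREENED):
    print(f"{screener.relevance(text):.3f}  {text}")
```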

Building and Using Systematic Evidence Maps: A Step-by-Step Methodology with Case Studies