A Beginner's Roadmap to Systematic Reviews in Ecotoxicology: From Protocol to Publication



Abstract

This article provides a comprehensive, step-by-step guide for researchers and scientists new to conducting systematic reviews (SRs) in ecotoxicology. It translates established evidence-synthesis principles from clinical and biomedical fields to address the specific methodological challenges of environmental health and toxicology. The guide covers the full scope of the review process, beginning with foundational concepts and the formulation of a precise research question. It then details the core methodological steps, including protocol development, comprehensive literature searching, study selection, and data extraction. To address common hurdles, the article offers practical troubleshooting advice for managing heterogeneous data and assessing risk of bias. Finally, it emphasizes validation through rigorous reporting standards, evidence certainty assessment, and the use of field-specific guidelines like COSTER. The goal is to equip beginners with the knowledge to produce transparent, reproducible, and high-quality evidence syntheses that can inform robust scientific conclusions and policy decisions.

Demystifying Systematic Reviews in Ecotoxicology: Laying the Groundwork for Success

Defining Systematic Reviews and Their Core Principles in Evidence-Based Toxicology

A systematic review is a structured, comprehensive, and reproducible methodology for synthesizing all available evidence on a precisely framed research question [1]. In toxicology, this approach is a core tool of Evidence-Based Toxicology (EBT), which aims to improve the field's transparency, objectivity, and consistency to better inform regulatory and policy decisions [1]. Unlike traditional narrative reviews, which may rely on an expert's implicit and selective synthesis of literature, a systematic review employs an explicit, pre-defined protocol to minimize bias and error, ensuring that its conclusions are robust and verifiable [1].

The adoption of systematic review methodology in toxicology and environmental health has grown rapidly, driven by recognition of its rigor and the need for reliable evidence synthesis [2]. This guide outlines the core principles and detailed methodology for conducting systematic reviews, specifically framed for beginners in ecotoxicology research.

Core Principles of Systematic Reviews

The execution of a high-quality systematic review is governed by several foundational principles designed to combat the limitations of traditional narrative reviews.

- Transparency: Every decision, criterion, and step in the review process must be documented and reported explicitly. This allows readers to understand how conclusions were reached and enables the replication of the review [1].

- Minimization of Bias: Systematic reviews employ strategies to reduce systematic error at every stage, from the comprehensive search for studies to the objective, criteria-based selection of evidence and its critical appraisal [1] [2].

- Reproducibility: Detailed reporting of methods means that the same review, conducted independently by a different team, should yield consistent results [1].

- Comprehensiveness: The search strategy is designed to identify all potentially relevant studies on the question, minimizing the risk that important evidence is overlooked [1].

- Reliability: Through the application of standardized tools for quality assessment and evidence synthesis, systematic reviews provide a more reliable summary of the available science than less formal methods [3].

The following table contrasts the key features of narrative and systematic reviews:

Table 1: Comparison of Narrative and Systematic Reviews [1]

| Feature | Narrative Review | Systematic Review |

|---|---|---|

| Research Question | Broad, often not explicitly specified | Focused, specific, and explicitly defined |

| Literature Search | Sources and strategy usually not specified; potentially selective | Comprehensive, multi-source search with explicit, documented strategy |

| Study Selection | Implicit, subjective selection criteria | Explicit, pre-defined eligibility criteria applied consistently |

| Quality Assessment | Usually absent or informal | Critical appraisal using explicit, standardized tools |

| Synthesis | Qualitative summary, susceptible to author perspective | Structured synthesis (qualitative, quantitative, or narrative) based on extracted data |

| Time & Resources | Generally lower | Substantially higher (often >1 year, requiring a team with diverse expertise) [1] |

The Systematic Review Process: A Step-by-Step Methodology

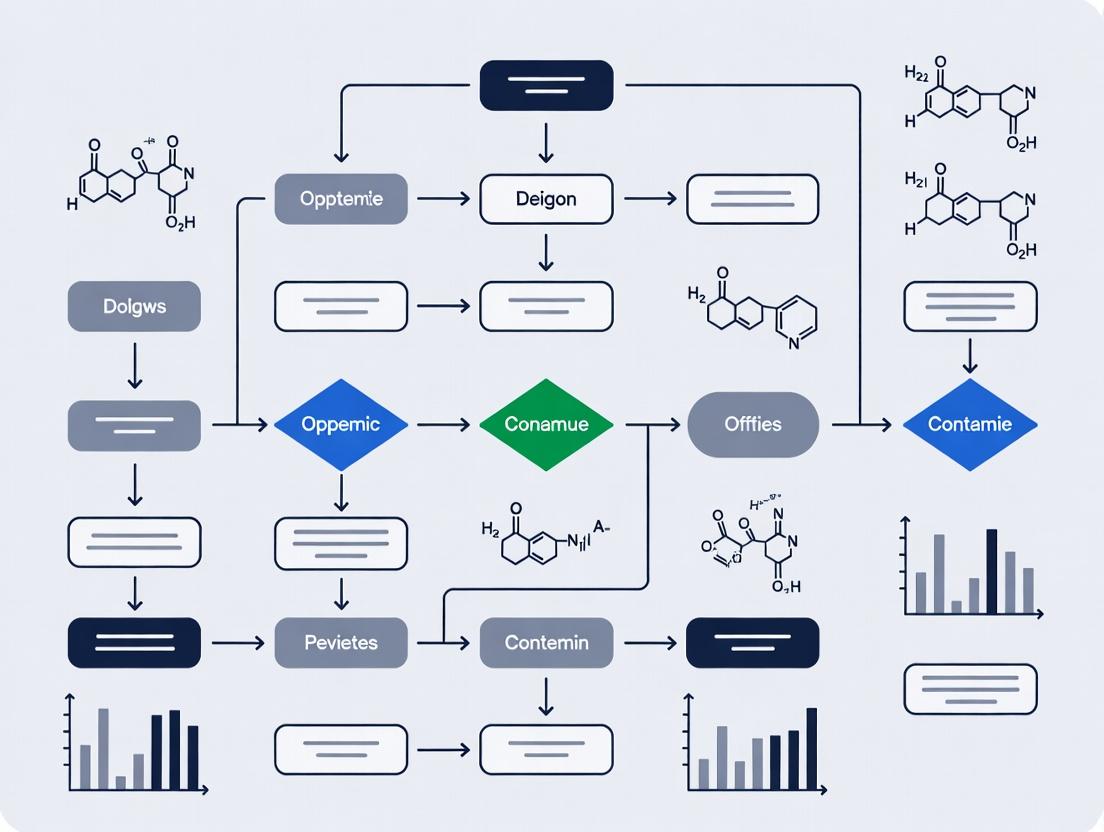

Conducting a systematic review is a multi-stage process that requires careful planning and execution. The following workflow diagrams the key phases.

Planning and Team Assembly

A systematic review must be conducted by a team with complementary expertise. A minimum of three members is recommended to ensure objectivity during screening [4]. Key roles include:

- Subject Experts: Provide domain knowledge in ecotoxicology.

- Methodological Experts: Guide the systematic review process.

- Information Specialist/Librarian: Design and execute comprehensive, reproducible database searches [4].

- Statistician: Essential if a meta-analysis is planned.

Early engagement with relevant stakeholders (e.g., policymakers, community groups) can also enhance the review's relevance and impact [4].

Developing and Registering the Protocol

A detailed, pre-written protocol is the cornerstone of a systematic review, guarding against arbitrary decision-making. It should be registered on a platform like PROSPERO, Open Science Framework (OSF), or the International Platform of Registered Systematic Review and Meta-analysis Protocols (INPLASY) to promote transparency and reduce duplication of effort [5]. The protocol must define:

- A structured research question using a framework like PECO (Population, Exposure, Comparator, Outcome), which is specifically suited for environmental health questions [5]:

  - Population: The organisms or ecological systems of interest (e.g., freshwater fish).

  - Exposure: The chemical or environmental stressor (e.g., glyphosate).

  - Comparator: The comparison condition (e.g., no exposure, lower exposure level).

  - Outcome: The measured effect (e.g., mortality, reproductive impairment).

- Pre-defined eligibility criteria (inclusion/exclusion) for studies.

- The search strategy.

- Data extraction methods.

- Plans for risk-of-bias assessment and evidence synthesis.

The logical relationship of the PECO framework in ecotoxicology is illustrated below.

Comprehensive Literature Search

The goal is to identify all relevant studies. A robust strategy involves:

- Searching multiple electronic databases (e.g., PubMed, Scopus, Web of Science, TOXLINE, GreenFile).

- Developing search strings using keywords and controlled vocabulary (e.g., MeSH, Emtree) for each PECO concept, combined with Boolean operators (AND, OR) [5].

- Supplementing with grey literature searches (theses, reports, conference proceedings) and backward/forward citation tracking [3].

The full search strategy for each database must be documented for reproducibility.
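The way search strings combine synonyms and concepts can be sketched in a few lines of Python. This is a minimal illustration, not a validated search strategy: the function name `build_search_string` and the term lists are hypothetical, the comparator is left out as a simplifying assumption, and real strategies also need database-specific syntax and controlled vocabulary.

```python
def build_search_string(concepts: dict[str, list[str]]) -> str:
    """OR the synonyms within each concept, then AND the concepts together."""
    blocks = []
    for terms in concepts.values():
        # Quote multi-word terms so they are searched as exact phrases.
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        blocks.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(blocks)


# Hypothetical, deliberately short term lists for illustration only.
peco_terms = {
    "population": ["freshwater fish", "zebrafish", "trout"],
    "exposure": ["glyphosate", "Roundup"],
    "outcome": ["mortality", "fecundity", "reproduction"],
}

query = build_search_string(peco_terms)
print(query)
```

Keeping the terms in a structure like this makes it easier to record exactly which synonyms were used for each database.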

Study Screening and Eligibility Assessment

This phase involves applying the pre-defined eligibility criteria to the search results. It is typically performed in two stages [5]:

- Title/Abstract Screening: Reviewers independently screen citations. Conflicts are resolved by consensus or a third reviewer.

- Full-Text Screening: The full text of potentially relevant studies is retrieved and assessed against the criteria.

The process and results are summarized in a PRISMA flow diagram, which transparently reports the number of studies identified, included, and excluded at each stage [4].
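As a rough sketch of the bookkeeping behind dual screening and the flow diagram, the Python below flags records on which two reviewers disagree and derives stage-by-stage counts. The function names, record IDs, and numbers are all hypothetical; dedicated screening software handles this in practice.

```python
from collections import Counter


def reconcile(reviewer_a: dict[str, str], reviewer_b: dict[str, str]) -> dict[str, str]:
    """Keep agreed decisions; mark disagreements for consensus or a third reviewer."""
    combined = {}
    for record_id, decision_a in reviewer_a.items():
        decision_b = reviewer_b[record_id]
        combined[record_id] = decision_a if decision_a == decision_b else "conflict"
    return combined


def prisma_counts(identified: int, duplicates: int,
                  excluded_title_abstract: int, excluded_full_text: int) -> dict[str, int]:
    """Derive the counts reported at each stage of a flow diagram."""
    screened = identified - duplicates
    full_text_assessed = screened - excluded_title_abstract
    included = full_text_assessed - excluded_full_text
    return {"screened": screened,
            "full_text_assessed": full_text_assessed,
            "included": included}


# Hypothetical decisions from two independent reviewers.
a = {"rec1": "include", "rec2": "exclude", "rec3": "include", "rec4": "exclude"}
b = {"rec1": "include", "rec2": "exclude", "rec3": "exclude", "rec4": "exclude"}
decisions = reconcile(a, b)
tally = Counter(decisions.values())
flow = prisma_counts(identified=1200, duplicates=200,
                     excluded_title_abstract=900, excluded_full_text=70)
print(decisions, dict(tally), flow)
```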

Table 2: Key Components of Eligibility Criteria [5]

| Component | Description | Ecotoxicology Example |

|---|---|---|

| Population | Organisms, species, or systems studied. | Freshwater benthic macroinvertebrates. |

| Exposure | The chemical, mixture, or stressor of interest. | Chronic exposure to triclosan in effluent. |

| Comparator | The baseline or control condition for comparison. | Upstream site or laboratory control. |

| Outcome | The measured endpoint or effect. | Species diversity index (e.g., Shannon Index). |

| Study Design | Accepted types of primary studies. | Field monitoring studies, controlled mesocosm experiments. |

Data Extraction

Data from included studies is extracted into standardized forms or software. Dual, independent extraction by two reviewers is best practice to minimize error. Items extracted typically include:

- Study identifiers and characteristics (author, year, location).

- Details on population, exposure, comparator, and outcome.

- Key quantitative results (e.g., mean, standard deviation, sample size, effect estimates).

- Information relevant to risk-of-bias assessment.
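One possible shape for a standardized extraction form is sketched below in Python. The field names, the `problems` check, and the `extraction_mismatches` helper are illustrative assumptions rather than a prescribed template; the point is that a fixed structure makes it straightforward to compare two independent extractions.

```python
from dataclasses import dataclass, replace


@dataclass
class ExtractionRecord:
    study_id: str
    year: int
    species: str
    exposure: str
    comparator: str
    outcome: str
    mean_treatment: float
    sd_treatment: float
    n_treatment: int
    mean_control: float
    sd_control: float
    n_control: int

    def problems(self) -> list[str]:
        """Basic plausibility checks on the extracted numbers."""
        issues = []
        if self.n_treatment < 1 or self.n_control < 1:
            issues.append("sample size must be at least 1")
        if self.sd_treatment < 0 or self.sd_control < 0:
            issues.append("standard deviation cannot be negative")
        return issues


def extraction_mismatches(first: ExtractionRecord, second: ExtractionRecord) -> list[str]:
    """Names of fields where two independent extractions disagree."""
    return [name for name in vars(first) if getattr(first, name) != getattr(second, name)]


# Hypothetical study values entered by two reviewers.
reviewer_1 = ExtractionRecord("Study01", 2019, "Danio rerio", "glyphosate", "no exposure",
                              "fecundity", 8.0, 2.0, 10, 10.0, 2.0, 10)
reviewer_2 = replace(reviewer_1, sd_treatment=2.5)
mismatches = extraction_mismatches(reviewer_1, reviewer_2)
print(mismatches)
```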

Quality and Risk of Bias Assessment

The internal validity (trustworthiness) of each included study is critically appraised using standardized tools. This assesses the risk of bias—the potential for systematic error to distort the study's results. Common tools include:

- ROBINS-I: For assessing risk of bias in non-randomized studies of interventions (or exposures), widely applicable to observational ecotoxicology studies [5].

- SYRCLE’s RoB tool: Adapted for animal (including ecotoxicological) intervention studies.

Assessment is performed independently by two reviewers, with results used to inform the evidence synthesis (e.g., sensitivity analysis).

Evidence Synthesis

The extracted data is synthesized to answer the research question. Synthesis can be:

- Narrative/Descriptive: A structured summary of the findings from individual studies, often tabulated.

- Quantitative (Meta-analysis): The statistical combination of effect estimates from multiple studies to produce a summary measure (e.g., pooled hazard ratio). This requires comparable outcome data across studies.

- Qualitative: Thematic synthesis of findings, common for mixed-methods reviews.

The strength of the body of evidence is also evaluated, considering factors like risk of bias, consistency, precision, and directness of the findings [1].
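To make the idea of statistically combining effect estimates concrete, here is a minimal Python sketch of inverse-variance (fixed-effect) pooling, one common way to compute a summary measure. The function name and the input numbers are hypothetical, and the 95% interval assumes approximate normality; dedicated meta-analysis software should be used for real analyses.

```python
import math


def pool_fixed_effect(effects: list[float], variances: list[float]):
    """Inverse-variance weighted mean with its standard error and 95% CI."""
    weights = [1.0 / v for v in variances]          # more precise studies weigh more
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    se = math.sqrt(1.0 / sum(weights))
    ci = (pooled - 1.96 * se, pooled + 1.96 * se)   # normal approximation
    return pooled, se, ci


# Hypothetical effect estimates and their variances from two studies.
pooled, se, ci = pool_fixed_effect([0.2, 0.4], [0.04, 0.04])
print(round(pooled, 3), round(se, 3), tuple(round(x, 3) for x in ci))
```

With equal variances the two studies receive equal weight, so the pooled estimate is simply their average.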

Reporting and Dissemination

The final review must be reported with full transparency. The PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement is the minimum reporting guideline [4] [6]. The manuscript should include the PRISMA flow diagram, detailed methods, results of all stages, and a discussion placing the findings in context. Results should be shared with relevant stakeholders and decision-makers [4].

Growth and Standards in the Field

The field of systematic reviews in toxicology is evolving rapidly. The number of published systematic reviews in toxicology approximately doubled from 2016 to 2020 [2]. This growth has been accompanied by the development of field-specific guidance to address unique challenges, such as integrating multiple evidence streams (e.g., in vitro, animal, human, ecological data) and assessing complex exposures [1]. Key standards and guidance documents include:

- COSTER (Conduct of Systematic Reviews in Toxicology and Environmental Health Research): A recent cross-sector consensus providing 70 recommendations for best practice [3].

- Navigation Guide: A rigorous methodology for translating environmental health science into policy [6].

- OHAT (Office of Health Assessment and Translation) Handbook: Provides a framework for literature-based health assessment [6].

These standards build upon foundational biomedical resources like the Cochrane Handbook [1] [6].

The Scientist’s Toolkit for Systematic Reviews

Table 3: Essential Tools and Resources for Conducting a Systematic Review in Ecotoxicology

| Tool/Resource Category | Specific Examples | Function/Purpose |

|---|---|---|

| Protocol Registration | PROSPERO, INPLASY, Open Science Framework (OSF) | Publicly register review protocol to establish precedence and reduce duplication. |

| Search Databases | PubMed, Scopus, Web of Science, TOXLINE, GreenFile | Identify relevant primary research studies across disciplines. |

| Reference Management | EndNote, Zotero, Mendeley | Store, deduplicate, and manage large volumes of search results. |

| Screening Software | Rayyan, Covidence, DistillerSR | Facilitate blinded, collaborative title/abstract and full-text screening. |

| Data Extraction & Management | Custom spreadsheets (Excel, Google Sheets), Systematic Review Data Repository (SRDR+) | Systematically extract and store data from included studies. |

| Risk of Bias Assessment | ROBINS-I, SYRCLE’s RoB tool | Critically appraise the methodological quality of included studies. |

| Evidence Synthesis | RevMan, Metafor package in R, Stata | Conduct statistical meta-analysis and create forest plots. |

| Reporting Guideline | PRISMA Checklist & Flow Diagram | Ensure complete and transparent reporting of the review. |
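The deduplication step listed under Reference Management in Table 3 can be illustrated with a short Python sketch. It assumes records are dictionaries with a `title` and an optional `doi` key; the records and DOIs shown are made up, and reference managers use more sophisticated matching than this.

```python
import re


def normalize_title(title: str) -> str:
    """Lowercase and collapse punctuation and whitespace for comparison."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records: list[dict]) -> list[dict]:
    """Keep the first record for each DOI, or for each normalized title if no DOI."""
    seen = set()
    unique = []
    for record in records:
        key = (record.get("doi") or "").lower() or normalize_title(record["title"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical search results exported from two databases.
records = [
    {"title": "Glyphosate effects on fish", "doi": "10.0000/EXAMPLE.1"},
    {"title": "Glyphosate Effects on Fish.", "doi": "10.0000/example.1"},
    {"title": "Triclosan in effluent: a field study", "doi": None},
    {"title": "Triclosan in Effluent - A Field Study", "doi": None},
]
unique_records = deduplicate(records)
print(len(unique_records))
```

A known limitation of this sketch is that a record with a DOI will not match a copy of the same study that lacks one, so manual checking is still needed.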

Systematic reviews represent a fundamental shift toward greater rigor, transparency, and reliability in synthesizing toxicological evidence. By adhering to a structured protocol and core principles—comprehensiveness, minimization of bias, and reproducibility—researchers in ecotoxicology can produce high-quality syntheses that provide a solid foundation for scientific understanding, risk assessment, and environmental policy. For beginners, mastering this methodology involves committing to a team-based, protocol-driven approach and utilizing the growing suite of standards and tools specifically designed for the environmental health sciences.

Understanding the Hierarchy of Evidence and the Role of SRs in Ecotoxicology

Systematic reviews (SRs), pioneered in clinical medicine, provide a transparent, methodologically rigorous, and reproducible means of summarizing all available evidence on a precisely framed research question [1]. Their adoption in ecotoxicology represents a core component of the broader evidence-based toxicology (EBT) movement, which seeks to improve the field's objectivity, consistency, and utility for regulatory decision-making [1]. Unlike traditional narrative reviews, which often rely on implicit, expert-driven selection and synthesis of literature, SRs follow a predefined, explicit protocol to minimize bias and enhance reproducibility [1].

A fundamental concept underpinning SRs is the hierarchy of evidence. This hierarchy ranks different types of scientific studies based on the intrinsic strength of their design to minimize bias and establish causal relationships. In ecotoxicology, this hierarchy informs which studies provide the most reliable evidence for hazard identification and risk assessment. The integration of evidence from different levels of this hierarchy—from controlled laboratory studies to field observations—is formalized through Weight of Evidence (WOE) approaches [7] [8]. These structured methods are critical for tackling complex questions of chemical harm in the environment, where multiple lines of evidence must be coherently assembled to support causal judgments and inform policy [8].

The Hierarchy of Evidence in Ecotoxicology

The hierarchy of evidence provides a framework for assessing the reliability and inferential strength of different study types. It guides researchers in designing primary studies and informs systematic reviewers when evaluating and synthesizing evidence. In ecotoxicology, the hierarchy is adapted to address questions of exposure, hazard, and risk in environmental systems.

Table 1: Hierarchy of Evidence in Ecotoxicology

| Evidence Level | Study Type | Key Characteristics | Primary Strength | Common Limitations |

|---|---|---|---|---|

| Highest | Field Observations & Monitoring (e.g., wildlife population trends linked to measured exposure) | Direct observation of effects in real ecosystems; can establish strong temporal/spatial coherence [8]. | High ecological relevance; can provide definitive proof of real-world impact (e.g., vulture decline from diclofenac) [8]. | Difficult to control confounding variables; establishing causation is challenging without experimental support. |

| | Randomized Field Experiments (e.g., mesocosm studies, plot tests) | Controlled manipulations in semi-natural or natural environments [8]. | Good balance between control and environmental realism. | Limited scale and duration; may not capture long-term or landscape-level effects. |

| | Controlled Laboratory Experiments (in vivo whole organism) | Standardized tests (e.g., OECD guidelines) under controlled conditions [9]. | High internal validity; establishes dose-response; controls confounding factors. | Uncertain ecological relevance; simplified conditions may not reflect complex field interactions [8]. |

| | In Vitro & In Silico Studies (e.g., cell assays, QSAR models) | Mechanistic data on toxicity pathways; high-throughput screening. | Useful for understanding mode of action; rapid and cost-effective for screening. | Significant extrapolation uncertainty to whole organisms and ecosystems. |

| Lowest | Expert Opinion, Case Reports, & Anecdotal Evidence | Unsystematic observations or informal synthesis. | Can identify emerging issues or generate hypotheses. | High risk of bias; not reproducible; susceptible to selective use of information. |

The choice of evidence and its position in the hierarchy depends on the specific review question. For prospective risk assessment of new chemicals, controlled laboratory studies form the primary evidence base. For retrospective risk assessment (impact evaluation) of chemicals already in the environment, a WOE approach that integrates field monitoring, epidemiological data, and laboratory evidence is essential [8]. A critical review of WOE methodologies noted that the best approaches provide a structured synthesis of evidence across these different streams, improving transparency and consistency in hazard identification [7].

Diagram 1: The Ecotoxicology Evidence Hierarchy Pyramid

The Systematic Review Process: A Step-by-Step Methodology

Conducting a systematic review in ecotoxicology is a resource-intensive process typically requiring over a year to complete, demanding expertise in the scientific domain, review methodology, literature search, and data analysis [1]. The process is broken down into sequential steps to ensure rigor and transparency. The following ten-step framework, adapted for ecotoxicology, provides a detailed methodology [1].

Step 1: Planning & Team Assembly

Form a multidisciplinary team including subject matter experts, a review methodologist, an information specialist/librarian, and a statistician if a meta-analysis is anticipated. Define roles, timelines, and resources.

Step 2: Formulating the Research Question

Develop a focused, answerable question. While the PICO (Population, Intervention, Comparator, Outcome) framework is common in clinical reviews, ecotoxicology questions often adapt this to PECO (Population, Exposure, Comparator, Outcome) or similar variants [10]. For example: "In freshwater fish (P), does chronic exposure to glyphosate-based herbicides (E) compared to no exposure (C) lead to reduced fecundity or gonadal histopathology (O)?"
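One way to keep the four PECO elements explicit and reusable across the protocol, search, and eligibility criteria is to store them in a small structure. The Python sketch below is only an illustration; the class name `PECO` and its `as_question` method are hypothetical conveniences, not part of any review standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PECO:
    population: str
    exposure: str
    comparator: str
    outcome: str

    def as_question(self) -> str:
        """Render the four elements as a single structured review question."""
        return (f"In {self.population} (P), does {self.exposure} (E) "
                f"compared to {self.comparator} (C) lead to {self.outcome} (O)?")


question = PECO(
    population="freshwater fish",
    exposure="chronic exposure to glyphosate-based herbicides",
    comparator="no exposure",
    outcome="reduced fecundity or gonadal histopathology",
).as_question()
print(question)
```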

Step 3: Developing & Registering the Protocol

Draft a detailed, publicly accessible protocol that prespecifies all methods for the subsequent steps. This includes the search strategy, study eligibility criteria, data extraction items, and planned approach to risk of bias assessment and synthesis. Registration on platforms like PROSPERO or the Open Science Framework minimizes reporting bias and duplication of effort.

Step 4: Systematic Search for Evidence

The information specialist designs and executes a comprehensive, reproducible search strategy. This involves searching multiple bibliographic databases (e.g., PubMed, Scopus, Web of Science, Environment Complete), specialized sources, and grey literature (governmental reports, theses, conference proceedings). The search strategy uses a combination of controlled vocabulary (e.g., MeSH terms) and free-text keywords related to the PECO elements. Document the search process completely [1].

Step 5: Study Selection

Apply the pre-defined eligibility criteria to the search results in a two-stage process: 1) screening of titles and abstracts, and 2) full-text assessment. At least two reviewers should work independently, with conflicts resolved by consensus or a third reviewer. The process should be documented using a PRISMA flow diagram [7].

Step 6: Data Extraction

Using standardized, piloted forms, extract relevant data from each included study. This typically includes study identifiers, characteristics (species, test substance, exposure regime, endpoints), methodological details, and outcome data (e.g., EC50 values, mean effect sizes with variance measures). Dual independent extraction is recommended for accuracy.
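When studies report group means, standard deviations, and sample sizes rather than a ready-made effect size, one can be derived from the extracted numbers. The Python sketch below uses the log response ratio, a metric often used in ecological meta-analysis, with its usual large-sample variance approximation; the choice of metric, the function name, and the example values are assumptions for illustration, and the formula requires both means to be positive.

```python
import math


def log_response_ratio(mean_t: float, sd_t: float, n_t: int,
                       mean_c: float, sd_c: float, n_c: int) -> tuple[float, float]:
    """Return ln(mean_t / mean_c) and its approximate sampling variance."""
    if mean_t <= 0 or mean_c <= 0:
        raise ValueError("log response ratio needs positive means")
    effect = math.log(mean_t / mean_c)
    variance = sd_t**2 / (n_t * mean_t**2) + sd_c**2 / (n_c * mean_c**2)
    return effect, variance


# Hypothetical extracted values: treated fish produce fewer eggs than controls.
lnrr, var_lnrr = log_response_ratio(mean_t=8.0, sd_t=2.0, n_t=10,
                                    mean_c=10.0, sd_c=2.0, n_c=10)
print(round(lnrr, 4), round(var_lnrr, 5))
```

A negative value here indicates a lower mean in the exposed group than in the control group.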

Step 7: Assessing Risk of Bias (Critical Appraisal)

Evaluate the methodological quality and internal validity of each included study, that is, its "risk of bias." Ecotoxicology-specific tools are used, such as the ToxRTool for in vivo studies or criteria based on OECD Test Guidelines [9]. This step assesses factors like randomization, blinding, exposure verification, and statistical reporting. It does not judge the "relevance" of the study to the review question, which is a separate consideration [9].

Step 8: Evidence Synthesis

Synthesize the findings from the included studies. This can be:

- Narrative Synthesis: A structured summary, often tabulating studies by design or outcome and exploring relationships between study characteristics and findings.

- Quantitative Synthesis (Meta-Analysis): A statistical combination of effect estimates from multiple studies to produce a summary estimate. This requires comparable outcome data and is common for summarizing in vivo toxicity endpoints (e.g., pooled LC50 values). Quantitative scoring systems, like those based on Multi-Criteria Decision Analysis (MCDA), can weight data by their reliability and relevance during synthesis [9].
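Because ecotoxicology studies often differ in species and exposure conditions, a random-effects model is a common choice for quantitative synthesis. The Python sketch below implements the DerSimonian-Laird estimator together with Cochran's Q and the I² statistic; it is a simplified illustration with hypothetical inputs, and packages such as metafor offer more robust estimators.

```python
import math


def random_effects_dl(effects: list[float], variances: list[float]) -> dict[str, float]:
    """DerSimonian-Laird random-effects pooling with Q, tau^2, and I^2."""
    k = len(effects)
    w = [1.0 / v for v in variances]
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))  # Cochran's Q
    df = k - 1
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c)            # between-study variance
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {"pooled": pooled, "se": se, "q": q, "tau2": tau2, "i2": i2}


# Hypothetical effect sizes with equal variances but clearly different results.
result = random_effects_dl([0.1, 0.5, 0.9], [0.04, 0.04, 0.04])
print({name: round(value, 3) for name, value in result.items()})
```

In this made-up example the studies disagree more than sampling error alone would explain, so the estimated between-study variance is positive and the pooled standard error is wider than a fixed-effect analysis would give.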

Step 9: Assessing Certainty of the Evidence

Rate the overall confidence in the body of evidence for each key outcome. The GRADE (Grading of Recommendations Assessment, Development and Evaluation) framework is increasingly adopted for this purpose [10]. In GRADE, evidence from controlled experimental studies (such as randomized laboratory experiments) starts as "high" certainty but can be rated down for risk of bias, inconsistency, indirectness, imprecision, and publication bias. Observational field evidence starts as "low" certainty but can be rated up for strong associations or dose-response gradients [10]. The final rating (High, Moderate, Low, Very Low) communicates how confident reviewers are that the true effect lies close to the estimated effect.

Step 10: Interpretation & Reporting

Interpret the findings in the context of the review's limitations, the certainty of the evidence, and the broader ecotoxicological landscape. Prepare a final report following reporting standards like PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses). Disseminate results to relevant stakeholders, including researchers, regulators, and policymakers.

Diagram 2: Systematic Review Workflow in Ecotoxicology

Application of the GRADE Framework for Evidence Certainty

The GRADE framework provides a transparent and systematic method to move from evidence to decisions in ecotoxicology [10]. Its application is a key part of Step 9 in the SR process. GRADE assesses the certainty of evidence (also called confidence or quality) for each pre-specified critical outcome across a body of studies.

Table 2: Application of GRADE in Ecotoxicology Systematic Reviews

| GRADE Element | Definition | Application in Ecotoxicology | Example Actions |

|---|---|---|---|

| Starting Point | Initial certainty level based on study design. | Controlled lab experiments (in vivo): Start as High. Observational field studies: Start as Low [10]. | A review of lab toxicity tests begins with High certainty for mortality outcomes. |

| Reasons to Rate Down | Factors that reduce confidence in the estimated effect. | | |

| 1. Risk of Bias | Limitations in study design/execution. | Assess using ecotoxicology-specific tools (e.g., lack of solvent control, inadequate exposure verification). | Downgrade by one level if many studies have serious limitations. |

| 2. Inconsistency | Unexplained variability in results across studies. | High heterogeneity in effect sizes (e.g., I² > 50%) not explained by species or exposure conditions. | Downgrade for substantial, unexplained inconsistency. |

| 3. Indirectness | How directly the evidence answers the review question. | Population: Lab species vs. wild population of concern. Exposure: Single chemical vs. environmental mixture. Outcome: Surrogate endpoint vs. population-level effect [10]. | Often downgraded for indirectness due to species extrapolation. |

| 4. Imprecision | Wide confidence intervals suggesting uncertainty. | Small number of studies or subjects; confidence intervals include both meaningful benefit and harm. | Downgrade if optimal information size is not met or CI is too wide. |

| 5. Publication Bias | Unpublished studies missing from the evidence. | Suspected if small-study effects are present (funnel plot asymmetry). | Downgrade if likely, based on funnel plot or knowledge of the field. |

| Reasons to Rate Up | Factors that increase confidence in the estimated effect. | | |

| 1. Large Magnitude of Effect | A very large effect size. | e.g., A highly potent toxin causing 10-fold increases in mortality at low doses. | Consider upgrading if effect is large and consistent. |

| 2. Dose-Response Gradient | Evidence of an increasing effect with increasing exposure. | A clear monotonic relationship across studies. | Upgrade if a precise, consistent gradient is observed. |

| 3. Effect of Plausible Confounding | All plausible biases would reduce the observed effect. | If such confounding is present, the true effect is likely larger than the observed one. | Rare in ecotoxicology; requires strong rationale. |

| Final Certainty Rating | High, Moderate, Low, or Very Low. | Communicates the likelihood that the true effect is close to the estimated effect. | "Moderate certainty that Chemical X reduces growth in freshwater fish." |

GRADE outputs are summarized in a Summary of Findings table, which is integral to a systematic review report. This framework allows decision-makers to understand not just what the evidence shows, but how much trust to place in those findings [10].

Diagram 3: GRADE Framework for Assessing Certainty of Evidence

Conducting or evaluating systematic reviews in ecotoxicology requires familiarity with a suite of methodological tools and resources.

Table 3: Essential Toolkit for Ecotoxicology Systematic Reviews

| Tool/Resource Category | Specific Example(s) | Function & Purpose |

|---|---|---|

| Protocol & Reporting Guidelines | PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) [1] | A checklist and flow diagram standard to ensure transparent and complete reporting of the SR process. |

| | PROSPERO (International prospective register of systematic reviews) | A registry for publishing SR protocols to minimize bias and duplication. |

| Study Reliability/ Risk of Bias Assessment | ToxRTool (Toxicological data Reliability Assessment Tool) [9] | A standardized checklist for evaluating the reliability of toxicological studies (in vivo and in vitro). |

| | ECO-QESST [9] | A quality evaluation system specific to common ecotoxicology tests (fish, Daphnia, algae). |

| | Klimisch Score [9] | A classic, though sometimes criticized, method categorizing study reliability as "1" (reliable without restriction) to "4" (not assignable). |

| Data Evaluation & Scoring | Multi-Criteria Decision Analysis (MCDA) frameworks [9] | Quantitative methodologies to score the reliability and relevance of individual ecotoxicity data points, allowing for weighted analysis. |

| Evidence Certainty Assessment | GRADE Framework [10] | The structured system for rating confidence in a body of evidence (High to Very Low) and creating Summary of Findings tables. |

| Evidence Integration | Weight of Evidence (WOE) Frameworks [7] [8] | Structured approaches (e.g., using Hill's criteria like strength, consistency, temporality) to integrate multiple lines of evidence for hazard identification. |

| Statistical Synthesis Software | R (with packages metafor, meta) | Open-source software for conducting meta-analysis and generating forest plots. |

| | RevMan (Cochrane's Review Manager) | Software designed for preparing and maintaining Cochrane systematic reviews, including meta-analysis. |

| Key Guidance Documents | Cochrane Handbook [1] | Foundational text on SR methodology, adaptable to non-clinical fields. |

| | EFSA Guidance on Systematic Review [1] | European Food Safety Authority guidance for application in food and feed safety. |

| | OHAT Handbook [1] [10] | Office of Health Assessment and Translation (NTP) handbook for evaluating human health evidence, using GRADE. |

Within the field of ecotoxicology, where research informs critical regulatory decisions on chemical safety, the method chosen to synthesize evidence carries significant weight. Historically, narrative reviews have been the predominant form of summarizing knowledge on topics such as the effects of pesticides or emerging contaminants [1]. These traditional reviews rely on an author's expertise to selectively present and interpret literature. However, the rise of evidence-based toxicology has positioned the systematic review as a more rigorous, transparent, and reproducible alternative [1]. For beginner researchers, understanding the fundamental distinctions between these two approaches is essential for selecting the appropriate method to answer their research question, whether it is to broadly explore a field or to definitively assess a chemical's hazard.

Systematic reviews, pioneered in clinical medicine, employ explicit, pre-specified methods to minimize bias, ensuring that all available evidence on a focused question is identified, appraised, and synthesized [11] [1]. In ecotoxicology, this approach is increasingly mandated by regulatory bodies to develop toxicity values and inform risk assessments [12]. Conversely, narrative reviews offer a flexible, broad exploration of a topic, valuable for mapping complex fields, identifying theoretical gaps, and contextualizing research within a wider scientific debate [11] [13]. This guide provides a critical comparison of these two methodologies, framed within the practical context of modern ecotoxicological research.

Core Methodological Divergence: A Comparative Analysis

The fundamental difference between narrative and systematic reviews lies in the formality and transparency of their methodology. A systematic review follows a strict, pre-registered protocol akin to a primary research study, while a narrative review's process is often more implicit and guided by the author's perspective.

The following flowchart illustrates the key decision points and procedural differences between the two review pathways:

Diagram 1: Methodological Pathways for Narrative vs. Systematic Reviews

This procedural divergence leads to distinct outcomes in terms of bias, reproducibility, and utility. The table below summarizes the key comparative features:

Table 1: Core Characteristics of Narrative vs. Systematic Reviews [11] [1] [14]

| Feature | Narrative (Traditional) Review | Systematic Review |

|---|---|---|

| Primary Objective | Provide a broad overview, explore concepts, identify debates and gaps [11]. | Answer a specific, focused research question using all available evidence [11] [14]. |

| Research Question | Often broad, informal, or not explicitly stated [1]. | Clearly specified and structured (e.g., using PICO—Population, Intervention, Comparator, Outcome) [11]. |

| Protocol & Methodology | No standard protocol; methodology is implicit, flexible, and author-dependent [11]. | Explicit, pre-specified, and published protocol; methodology is transparent and reproducible [11] [1]. |

| Literature Search | Not systematic; sources and search strategy often not specified, risk of selective citation [1]. | Comprehensive search across multiple databases with explicit, documented search strategy [11] [1]. |

| Study Selection | Criteria usually not specified; selection can be subjective [1]. | Explicit inclusion/exclusion criteria applied consistently by multiple reviewers [11]. |

| Quality/Risk of Bias Assessment | Usually not performed formally; reliance on author expertise [1]. | Critical appraisal of included studies using standardized tools (e.g., EcoSR in ecotoxicology) [12] [1]. |

| Data Synthesis | Qualitative, narrative summary; may be influenced by author perspective [11] [1]. | Structured synthesis (qualitative and/or quantitative, e.g., meta-analysis); aims to minimize bias [11]. |

| Reporting & Reproducibility | Low reproducibility due to lack of methodological detail [1]. | High reproducibility; follows reporting guidelines (e.g., PRISMA) [1]. |

| Time & Resource Commitment | Generally lower (months) [1]. | Substantially higher (often >1 year) [1]. |

Application in Scientific Literature and Ecotoxicology

Both review types fulfill important but different roles in the scientific ecosystem. A survey of top medical journals found that narrative reviews constituted the majority (73%) of published reviews, suggesting their continued value for providing overviews and commentary [15]. However, the same study found that systematic reviews received more citations on average, indicating their greater utility as definitive references for specific questions [15].

In ecotoxicology, the application of each review type is context-dependent:

- Narrative Reviews are ideal for scoping emerging fields. For example, a narrative review on the environmental impact of pharmaceuticals can explore exposure pathways, regulatory frameworks, and mitigation strategies from a "One Health" perspective, effectively synthesizing a complex, interdisciplinary topic [16]. Similarly, a narrative review on environmental toxins in neurodegeneration can summarize epidemiological and mechanistic evidence across multiple toxin classes and disease outcomes to build a broad conceptual framework [13].

- Systematic Reviews are the gold standard for hazard and risk assessment. They are used to determine if a specific chemical (e.g., a pesticide) causes a specific adverse outcome (e.g., population decline in a fish species) with minimal bias. Their rigorous methodology supports regulatory decision-making by agencies like the EPA or EFSA [12] [1]. The development of frameworks like the Ecotoxicological Study Reliability (EcoSR) framework specifically addresses the need for critical appraisal tools tailored to ecotoxicity studies, underlining the field's commitment to systematic evidence evaluation [12].

Detailed Methodological Protocols

Protocol for a Systematic Review in Ecotoxicology

A systematic review in ecotoxicology typically follows a structured multi-step process adapted from clinical research to address toxicological questions [1]. The following diagram outlines a generalized workflow:

Diagram 2: Systematic Review Workflow in Ecotoxicology

Key Step Details:

- Step 1 - PECO Question: A focused question is essential. Example: "In freshwater fish (P), does chronic exposure to glyphosate-based herbicides (E) compared to no exposure (C) lead to reduced growth rate or survival (O)?" [1].

- Step 3 - Comprehensive Search: The search strategy must be designed with a librarian or information specialist. It includes keywords, controlled vocabulary (e.g., MeSH terms), and searches across multiple electronic databases, trial registries, and organizational websites to mitigate publication bias [1].

- Step 5 - Critical Appraisal with EcoSR: The Ecotoxicological Study Reliability framework is a tiered tool for assessing internal validity. Tier 1 screens for fatal flaws (e.g., lack of control group). Tier 2 evaluates criteria like test organism characterization, exposure verification, endpoint relevance, and statistical reporting to categorize studies as high, medium, or low reliability [12].

Protocol for a Narrative Review

While flexible, a rigorous narrative review should still employ a systematic approach to enhance credibility [17]. A recommended methodology is as follows:

- Define Scope and Purpose: Clearly articulate the review's aim (e.g., "to summarize current knowledge on the sources, fate, and ecotoxicological concerns of trifluoroacetic acid (TFA)" [17]).

- Conduct a Literature Search: Perform searches in key databases (e.g., PubMed, Scopus) using a combination of keywords and Boolean operators. Document the search strategy (databases, keywords, date range) to improve transparency [17].

- Select and Organize Literature: Prioritize recent, high-impact, and seminal studies. Organize content thematically (e.g., by chemical class, mechanism, or ecosystem type) rather than strictly chronologically [13] [17].

- Synthesize Evidence Narratively: Weave findings from selected studies into a coherent story. Compare and contrast results, highlight consensus and controversy, and identify clear knowledge gaps. Discuss implications for policy, regulation, or future research [13] [16].

Table 2: Key Research Reagent Solutions for Conducting Reviews in Ecotoxicology

| Tool/Resource Category | Specific Examples & Functions |

|---|---|

| Protocol & Reporting Guidelines | - PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses): Checklist and flowchart for reporting systematic reviews [1]. - ROSES (RepOrting standards for Systematic Evidence Syntheses): Standards specifically for environmental evidence [11]. - Cochrane Handbook: Foundational guidance on systematic review methodology [11] [1]. |

| Search Automation & Management | - DistillerSR, Rayyan, Covidence: Software platforms to manage screening, deduplication, and data extraction in systematic reviews [11]. - Zotero, EndNote, Mendeley: Reference management software to organize literature [17]. |

| Critical Appraisal Frameworks | - Ecotoxicological Study Reliability (EcoSR) Framework: A two-tiered tool for assessing the reliability and risk of bias in ecotoxicity studies [12]. - OHAT (Office of Health Assessment and Translation) Risk of Bias Tool: Adapted for human and animal toxicology studies [1]. |

| Evidence Integration Resources | - GRADE (Grading of Recommendations, Assessment, Development, and Evaluations): Framework for rating the overall certainty of a body of evidence [1]. - IATA (Integrated Approaches to Testing and Assessment): Framework for integrating multiple lines of evidence (e.g., in vitro, in silico, ecological) for decision-making [18]. |

For beginner researchers in ecotoxicology, the choice between a narrative and systematic review is not about which is inherently better, but about which is fit for purpose.

- Choose a Narrative Review when: Your goal is to explore a broad, complex topic (e.g., "behavioral ecotoxicology in environmental assessments" [19]), to provide a comprehensive introductory overview for educational purposes, to develop conceptual models or theoretical frameworks, or when time and resources are limited.

- Choose a Systematic Review when: You need to answer a specific question to inform a regulation or risk assessment (e.g., "Does chemical X pose a bioaccumulation hazard?" [18]), when a definitive, unbiased summary of the evidence is required, or when you are preparing a thesis chapter that forms the foundational evidence base for your primary research.

Beginners should start by clearly defining their research objective. Engaging with a research librarian is invaluable, especially for designing systematic review searches. For systematic reviews, expect the process to be a major project requiring a team and significant time commitment. For narrative reviews, strive for transparency in your methods to enhance the review's credibility and utility to the field.

Identifying the Unique Challenges and Complexities of Ecotoxicological Questions

This technical guide examines the core challenges in ecotoxicology through the lens of systematic review (SR) methodology. For researchers beginning a thesis on SR methods in ecotoxicology, understanding these foundational complexities is critical. Ecotoxicology inherently deals with highly heterogeneous data from diverse species, complex ecosystems, and varied experimental models, posing significant obstacles to evidence synthesis and risk assessment [20] [21]. Systematic review offers a rigorous, protocol-driven framework to minimize bias and error when aggregating this complex evidence base, providing a pathway toward more transparent and objective chemical risk assessments (CRAs) [21].

Core Ecotoxicological Challenges for Systematic Evidence Synthesis

Ecotoxicological research is defined by several intrinsic complexities that differentiate it from human toxicology and create unique hurdles for systematic review. The table below synthesizes the major challenges into three interrelated categories [22] [20].

Table: Foundational Challenges in Ecotoxicological Research and Evidence Synthesis

| Challenge Category | Specific Challenge | Impact on Evidence Synthesis & Risk Assessment |

|---|---|---|

| Data & Ecological Complexity | Extrapolating from individual to population and ecosystem levels | Laboratory single-species tests provide limited insight into community interactions, indirect effects, and ecosystem function [20]. |

| | Accounting for multiple stressors and variable exposure | Organisms in nature face complex mixtures and fluctuating exposures, complicating lab-to-field extrapolation [20]. |

| | Protecting biodiversity and ecosystem structure | Regulatory targets like the HC5 (protecting 95% of species) may be insufficient for conserving biodiversity on larger scales [20]. |

| Methodological & Regulatory Limitations | Selecting ecologically relevant test species and endpoints | Standard test species may not represent the most sensitive or ecologically critical organisms in a given ecosystem [22]. |

| | Integrating non-standard data (e.g., biomarkers, omics) | While sensitive, the ecological relevance of sub-organismal endpoints for population-level outcomes is often unclear [20]. |

| | Ethical pressures to reduce vertebrate testing | Drives the need for New Approach Methodologies (NAMs) but requires validation for regulatory acceptance [22] [23]. |

| Evidence Integration | Harmonizing disparate data sources and nomenclature | Combining data from studies using different chemical identifiers, units, and reporting formats is a major pre-synthesis hurdle [24]. |

| | Weighing evidence from different study types | SR must integrate in silico, in vitro, in vivo (non-human), and sometimes epidemiological data of varying relevance and reliability [21]. |

These challenges are magnified in a regulatory context. Traditional Ecological Risk Assessment (ERA) often relies on simplified, "worst-case" laboratory data combined with assessment factors to derive a Predicted No Effect Concentration (PNEC) [20]. While pragmatic, this approach lacks ecological realism. Systematic review methodologies are uniquely positioned to address these issues by applying structured, transparent, and objective processes for identifying, selecting, appraising, and synthesizing all available evidence, thereby reducing ambiguity in risk conclusions [21].
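
The assessment-factor approach described above reduces to a single division: the lowest available toxicity value is divided by a factor that reflects how much data supports it. The sketch below illustrates that arithmetic only. The function name, the NOEC values, and the assessment factor of 10 are hypothetical illustrations, not values taken from any cited assessment; the appropriate factor depends on the regulatory framework and the available dataset.

```python
def derive_pnec(toxicity_values_mg_per_l, assessment_factor):
    """Return PNEC = lowest toxicity value / assessment factor.

    Both the input values and the factor are supplied by the assessor;
    nothing here encodes a specific regulatory scheme.
    """
    if not toxicity_values_mg_per_l:
        raise ValueError("at least one toxicity value is required")
    if assessment_factor <= 0:
        raise ValueError("assessment factor must be positive")
    return min(toxicity_values_mg_per_l) / assessment_factor


# Hypothetical chronic NOECs (mg/L) for three trophic levels.
noecs = {"algae": 0.8, "daphnid": 0.25, "fish": 1.2}

# Hypothetical assessment factor chosen for illustration only.
pnec = derive_pnec(list(noecs.values()), assessment_factor=10)
print(f"PNEC = {pnec:.3f} mg/L")
```

Because the result rests on a single most-sensitive value, one unreliable study can drive the whole assessment, which is one reason a transparent appraisal of each contributing study matters.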

The Systematic Review Workflow in Ecotoxicology

Implementing systematic review in ecotoxicology requires adapting the standard SR framework to accommodate the field's specific evidence streams and questions. The following diagram outlines a generalized SR workflow tailored for ecotoxicological questions, such as "What is the effect of chemical X on freshwater invertebrate populations?"

A critical early step is formulating a precise PECO question (Population, Exposure, Comparator, Outcome), which defines the scope [21]. The synthesis phase is particularly complex, as reviewers must integrate heterogeneous evidence streams—from computational predictions to field observations—into a coherent narrative. This integration is guided by assessing the biological relevance and methodological reliability of each study, often using frameworks that consider the evolutionary conservation of a chemical's molecular target across species [23]. The final evidence integration aims to establish a mode of action (MoA), identify the most sensitive taxa or life stages, and explicitly characterize all uncertainties [23] [21].

Key Experimental Approaches & Methodological Hierarchies

Ecotoxicology employs a tiered hierarchy of testing methods, balancing pragmatic constraints with ecological relevance. The following diagram illustrates this hierarchy and its connection to the systematic review process.

Detailed Experimental Protocols:

Standardized Single-Species Laboratory Tests: These form the regulatory core. A typical chronic toxicity test with Daphnia magna follows OECD Guideline 211. Neonates (<24 hours old) are exposed to a geometric series of chemical concentrations in a standardized freshwater medium. Tests run for 21 days, with daily checks for mortality and offspring; in the commonly used semi-static design, test solutions are renewed and food (e.g., green algae) is supplied several times per week. Primary endpoints are survival and reproduction (number of living offspring per surviving adult). The data are used to calculate effect concentrations (e.g., EC₂₀, EC₅₀) and the no-observed-effect concentration (NOEC) [22] [20].

Model Ecosystem Studies (Mesocosms): These are complex, higher-tier experiments. A freshwater pond mesocosm study might involve 20-30 outdoor tanks (e.g., 3,000 liters) with established sediment, macrophytes, and a community of algae, zooplankton, macroinvertebrates, and perhaps fish. After a stabilization period, the chemical is applied to replicate systems at environmentally relevant concentrations. Monitoring occurs over weeks or months, tracking population dynamics of multiple species, community metrics (diversity, abundance), and ecosystem functions (leaf litter decomposition, primary production). Data analysis focuses on deriving a community-level no-observed-effect concentration (NOECcommunity) and observing indirect, food-web mediated effects [20].

Omics-Based Mechanistic Studies: These NAMs investigate sub-organismal responses. In a typical transcriptomics study, model organisms (e.g., fish embryos) are exposed to sub-lethal chemical concentrations. After a defined period, RNA is extracted from whole organisms or target tissues, sequenced, and analyzed bioinformatically. The goal is to identify differentially expressed genes and perturbed biological pathways (e.g., oxidative stress, endocrine disruption). A key challenge for SR is determining how these molecular "biomarkers" relate to adverse outcomes at the individual or population level [23] [20].
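
For the single-species test described above, two small calculations recur when extracting data: generating the geometric concentration series and estimating an effect concentration from the reported responses. The sketch below is a rough illustration under stated assumptions. The concentrations and offspring counts are hypothetical, and the ECx estimate uses simple linear interpolation on log-concentration, which is a crude stand-in for the regression-based dose-response modelling a guideline study would normally report.

```python
import math


def geometric_series(lowest, factor, n):
    """Concentrations spaced by a constant factor, e.g. 0.1, 0.2, 0.4, ..."""
    return [lowest * factor**i for i in range(n)]


def ecx_by_interpolation(concs, responses, control_response, x=50):
    """Estimate ECx by linear interpolation on log10(concentration).

    Returns None if the target response is not bracketed by the data.
    """
    target = control_response * (1 - x / 100)
    points = list(zip(concs, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        if r_lo >= target >= r_hi and r_lo != r_hi:
            frac = (r_lo - target) / (r_lo - r_hi)
            log_ec = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10**log_ec
    return None


# Hypothetical data: mean living offspring per surviving adult after 21 days.
concs = geometric_series(lowest=0.1, factor=2, n=5)  # mg/L
offspring = [98, 90, 70, 30, 5]
control_offspring = 100

ec50 = ecx_by_interpolation(concs, offspring, control_offspring, x=50)
print(f"Interpolated EC50 = {ec50:.3f} mg/L")
```

When a review pools effect concentrations from several studies, recording how each value was derived (interpolation, regression model, hypothesis test) helps explain heterogeneity later.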

Essential Research Toolkit for Ecotoxicological Synthesis

Conducting robust ecotoxicological research and systematic reviews requires a suite of specialized tools. The following table details key resources for experimental work and data synthesis.

Table: Research Reagent & Solution Toolkit for Ecotoxicological Synthesis

| Tool Category | Specific Tool/Reagent | Primary Function in Research/Synthesis |

|---|---|---|

| Test Organisms & Culturing | Standardized algal, invertebrate, and fish strains (e.g., Raphidocelis subcapitata, Daphnia magna, Danio rerio) | Provide consistent, reproducible biological models for toxicity testing under controlled laboratory conditions [22]. |

| Computational (in silico) Tools | VEGA, EPI Suite, OPERA: (Q)SAR platforms for predicting environmental fate and toxicity [25]. ADMETLab 3.0: Predicts absorption, distribution, metabolism, excretion, and toxicity properties [25]. | Prioritize chemicals for testing, fill data gaps for untested substances, and support read-across in regulatory submissions, especially under animal-testing bans [25] [23]. |

| Data Harmonization & Management | MAGIC Graph: A labeled property graph database for chemicals [24]. Chemical Identifiers: CAS RN, DTXSID, SMILES, InChIKey [24]. | Resolves synonyms and structural variants across databases, enabling the linkage of disparate ecotoxicological datasets (exposure, effects, use) for meta-analysis [24]. |

| Evidence Synthesis & Visualization | Systematic Review Software: DistillerSR, Rayyan, CADIMA. | Manage the SR process: de-duplication, blinded screening, data extraction, and risk-of-bias assessment for large numbers of studies [21]. |

| | Geographic & Data Visualization Tools: GIS mapping, time sliders, contour plots [26]. | Visualize spatial and temporal trends in chemical exposure and ecological impacts, aiding in exposure assessment and communication of findings [26]. |

The push toward New Approach Methodologies (NAMs)—including in silico models, in vitro assays, and omics—is reshaping this toolkit. These tools are vital for addressing data gaps and ethical concerns but require rigorous evaluation of their applicability domain and reliability within a systematic review framework before they can robustly inform decision-making [25] [23].

Within the broader methodology of evidence-based toxicology, systematic reviews provide a transparent, rigorous, and reproducible means of synthesizing scientific evidence to answer precise research questions [1]. For beginners in ecotoxicology research, this approach represents a fundamental shift from traditional narrative reviews, which often employ implicit, non-transparent processes for selecting and interpreting literature [1]. A well-conducted systematic review minimizes bias, enhances reproducibility, and provides a reliable foundation for informing regulatory decisions and future research directions [1] [27].

The foundational success of any systematic review hinges on two critical preparatory phases executed before any literature search begins: the careful assembly of a multidisciplinary review team and the precise definition of the review's scope and protocol. Neglecting these steps risks methodological weaknesses, biased conclusions, and ultimately, a review that fails to meet the standards of evidence-based science [1] [28]. This guide details the protocols and considerations for these essential prerequisites within the context of ecotoxicology.

Table 1: Comparison of Narrative and Systematic Review Approaches in Toxicology [1]

| Feature | Narrative Review | Systematic Review |

|---|---|---|

| Research Question | Broad and often not explicitly specified. | Precisely framed and specific. |

| Literature Search | Sources and strategy usually not specified. | Comprehensive, using explicit search strategies across multiple databases. |

| Study Selection | Criteria usually not specified. | Transparent selection based on explicit, pre-defined criteria. |

| Quality Assessment | Often absent or informal. | Critical appraisal using explicit, validated tools. |

| Synthesis | Typically qualitative summary. | Qualitative synthesis, often supplemented with quantitative meta-analysis. |

| Time Investment | Months (typically). | Often greater than one year. |

| Required Expertise | Subject matter expertise. | Subject expertise plus skills in systematic review methodology, searching, and data analysis. |

| Primary Output | Expert opinion summary. | Transparent, reproducible evidence synthesis. |

Phase 1: Assembling the Multidisciplinary Review Team

A systematic review is not a solitary endeavor. It demands a team whose complementary skills cover subject expertise, review methodology, and project management. The core team typically manages the daily work, while an external advisory group provides oversight and resolves conflicts [1].

Table 2: Core Review Team Roles and Responsibilities

| Role | Primary Responsibilities | Essential Skills/Expertise |

|---|---|---|

| Principal Investigator (PI)/Lead Reviewer | Provides overall leadership, ensures protocol adherence, manages timelines and resources, and is the primary author [1]. | Deep ecotoxicology expertise, project management, and strong knowledge of systematic review methodology. |

| Subject Matter Experts (2-3 recommended) | Define the research question, inform inclusion/exclusion criteria (e.g., relevant species, endpoints), and interpret technical findings [1] [27]. | Advanced knowledge in the specific chemical, toxicological pathway, or ecological receptor under review. |

| Systematic Review Methodologist | Designs and oversees the review protocol, ensures methodological rigor, selects quality assessment tools, and guides data synthesis [1] [28]. | Expertise in evidence synthesis methods, statistics (for meta-analysis), and risk of bias assessment frameworks. |

| Information Specialist/Librarian | Develops and executes comprehensive, reproducible search strategies across multiple databases and grey literature sources [1] [28]. | Advanced proficiency with bibliographic databases (e.g., PubMed, Web of Science, Scopus, TOXLINE) and search syntax. |

| Data Analyst/Statistician | Designs data extraction forms, performs meta-analysis (if applicable), assesses heterogeneity, and conducts sensitivity analyses [1]. | Statistical expertise in meta-analytic models and experience with software (e.g., R, RevMan, Stata). |

| Project Coordinator | Manages screening processes, coordinates meetings, maintains documentation, and manages reference software [28]. | Organizational skills, attention to detail, and proficiency with review management tools (e.g., Covidence, Rayyan). |

Diagram 1: Core Systematic Review Team Structure and Key Roles

Key Protocol: Team Onboarding and Conflict Management

- Kick-off Meeting: Convene the entire team to review the proposed research question, discuss the systematic review process, and assign clear roles and responsibilities based on the above framework [1].

- Conflict of Interest (COI) Declaration: All team members must formally disclose any financial, professional, or intellectual conflicts related to the review topic. A pre-defined plan (e.g., recusal from specific decisions) must be established [27].

- Pilot Calibration Exercises: Before full screening begins, all screeners will independently assess the same small sample of titles/abstracts (e.g., 50-100). Inter-rater reliability (e.g., Cohen's Kappa) will be calculated, and disagreements will be discussed to refine inclusion criteria and ensure consistent application [28].
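
The inter-rater reliability check in the pilot calibration exercise can be computed in a few lines. The sketch below implements Cohen's kappa for two screeners from first principles: observed agreement corrected for the agreement expected by chance. The ten screening decisions are hypothetical and far fewer than the 50-100 records suggested above; any threshold for "acceptable" agreement is left to the team's protocol.

```python
def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same, non-empty set of items")
    n = len(rater_a)
    # Proportion of items on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected if each rater labelled at random with their own rates.
    labels = set(rater_a) | set(rater_b)
    expected = sum((rater_a.count(lab) / n) * (rater_b.count(lab) / n) for lab in labels)
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical pilot decisions: "I" = include, "E" = exclude.
screener_1 = ["I", "I", "I", "I", "E", "E", "E", "E", "E", "E"]
screener_2 = ["I", "I", "I", "E", "E", "E", "E", "E", "E", "I"]

kappa = cohens_kappa(screener_1, screener_2)
print(f"Cohen's kappa = {kappa:.2f}")
```

Here the screeners agree on 8 of 10 records, but because each includes 40% of records, chance alone would produce 52% agreement, so kappa is noticeably lower than the raw agreement rate.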

Phase 2: Defining the Scope and Protocol

A precisely framed research question and a detailed, publicly registered protocol are the cornerstones of a reproducible systematic review. They serve as the unchanging blueprint for the entire project, guarding against ad-hoc decisions that introduce bias [1] [28].

Formulating the Research Question

In ecotoxicology, the PECO or PECO(S) framework is highly recommended for structuring a focused, answerable question [1] [27].

- P (Population): The ecological receptor (e.g., Daphnia magna, fathead minnow embryos, soil nematode communities).

- E (Exposure): The chemical or stressor of interest, including specification of dose/concentration and route (e.g., aqueous exposure to microplastic particles <100 μm).

- C (Comparator): The control or reference condition (e.g., clean sediment, solvent control, a different chemical).

- O (Outcome): The measured endpoint (e.g., 48-hr mortality, reproductive inhibition, genotoxicity as measured by comet assay).

- S (Study Design) (Optional): The acceptable type of evidence (e.g., laboratory toxicity tests, field mesocosm studies).

Example Question: "In laboratory studies of freshwater benthic invertebrates (P), what is the effect of chronic sediment exposure to fluoranthene (E) compared to unspiked sediment (C) on growth and reproduction (O)?"

Developing Inclusion/Exclusion Criteria

Explicit criteria flow directly from the PECO framework and are essential for transparent study selection [27].

Table 3: Scope Definition: Example Inclusion/Exclusion Criteria

| Category | Inclusion Criteria | Exclusion Criteria | Rationale |

|---|---|---|---|

| Population | Freshwater fish species at early life stages (embryo, larval). | Marine fish, adult fish, other taxonomic groups. | Focuses the review on a sensitive and ecologically relevant life stage within a defined ecosystem. |

| Exposure | Studies measuring aqueous exposure to ionic silver (Ag⁺). | Studies with silver nanoparticles (AgNPs) or silver complexes; studies with only total silver measurements. | Isolates the toxic effect of the specific bioavailable chemical species of interest. |

| Comparator | Clean, un-amended control conditions. | Studies using only positive toxicant controls (e.g., Cu reference). | Ensures the measured effect is attributable to the exposure of interest. |

| Outcome | Quantified sub-lethal endpoints related to development (e.g., malformation rate, hatch success, growth). | Studies reporting only lethal endpoints (LC50) or biomarker responses without apical effect. | Addresses a specific research gap concerning chronic, population-relevant effects. |

| Study Design | Primary experimental studies with controlled exposures (lab or field mesocosm). | Review articles, modeling studies, field monitoring with uncontrolled confounding factors. | Ensures the synthesis is based on direct, empirical evidence of cause-effect. |

| Publication Status | Peer-reviewed articles, official reports, and relevant grey literature (theses, conference proceedings). | Unpublished data without detailed methods, non-English articles without translatable abstract/data. | Maximizes evidence capture while ensuring a minimum threshold for methodological assessment [28]. |

| Time Frame | Studies published from 2000 to present. | Studies published before 2000. | Reflects modern analytical methods and environmental relevance. |
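
One way to keep screening decisions consistent is to encode the criteria as explicit checks that return documented exclusion reasons. The sketch below mirrors most rows of the example table above (the publication-status row is omitted for brevity). The `StudyRecord` fields, their allowed values, and the reason labels are all hypothetical choices made for illustration; a real review would derive them from its own registered protocol, and human reviewers would still make the judgement for each field.

```python
from dataclasses import dataclass


@dataclass
class StudyRecord:
    habitat: str                  # e.g. "freshwater", "marine"
    taxon: str                    # e.g. "fish", "invertebrate"
    life_stage: str               # e.g. "embryo", "larval", "adult"
    silver_form: str              # e.g. "ionic", "nanoparticle", "complex", "total_only"
    has_clean_control: bool
    reports_sublethal_development: bool
    design: str                   # e.g. "lab", "mesocosm", "review", "model"
    year: int


def exclusion_reasons(study):
    """Return a list of exclusion reasons; an empty list means 'include'."""
    reasons = []
    if not (study.taxon == "fish" and study.habitat == "freshwater"
            and study.life_stage in {"embryo", "larval"}):
        reasons.append("wrong population")
    if study.silver_form != "ionic":
        reasons.append("wrong exposure")
    if not study.has_clean_control:
        reasons.append("no clean control")
    if not study.reports_sublethal_development:
        reasons.append("wrong outcome")
    if study.design not in {"lab", "mesocosm"}:
        reasons.append("wrong study design")
    if study.year < 2000:
        reasons.append("published before 2000")
    return reasons


# Two hypothetical records, as they might look after full-text assessment.
eligible = StudyRecord("freshwater", "fish", "embryo", "ionic", True, True, "lab", 2015)
ineligible = StudyRecord("marine", "fish", "adult", "nanoparticle", True, False, "review", 1998)

print(exclusion_reasons(eligible))
print(exclusion_reasons(ineligible))
```

Returning every failed criterion, rather than stopping at the first, makes it straightforward to tabulate exclusion reasons for the flow diagram.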

Protocol Registration and Reporting Standards

The finalized protocol should be registered on a public platform such as PROSPERO or the Collaboration for Environmental Evidence (CEE) Library. This prevents duplication of effort and guards against outcome reporting bias [1]. The review's final report should adhere to the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement to ensure complete transparency [1].

Diagram 2: Systematic Review Scope Definition and Protocol Workflow

Detailed Methodological Protocols

Based on established frameworks like the TCEQ's six-step process, the following protocols detail how the assembled team executes the defined scope [27].

Protocol 1: Systematic Literature Search and Study Retrieval

- Strategy Development: The information specialist, in consultation with subject experts, develops search strings for each database. Strings combine terms for Population, Exposure, and Outcome using Boolean operators (AND, OR). Truncation and subject headings (e.g., MeSH) are used.

- Database Selection: A minimum of two major databases must be searched (e.g., PubMed, Web of Science, Scopus, TOXLINE, AGRICOLA). Discipline-specific databases (e.g., ECOTOX, EnviroTox) are essential for ecotoxicology.

- Grey Literature Search: This includes searches in regulatory agency websites (e.g., US EPA, ECHA), thesis repositories (e.g., ProQuest Dissertations), and conference proceedings to mitigate publication bias.

- Search Documentation: The exact search string, database, date searched, and number of records retrieved are recorded for full reproducibility.
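
The Boolean logic described in this protocol (synonyms joined with OR inside each concept, concepts joined with AND) can be assembled programmatically so that the same term lists are reused across databases and logged alongside each search. The sketch below is a generic illustration: the term lists are hypothetical examples built around the fluoranthene question, and the output is not valid syntax for any particular database, since field tags, truncation symbols, and phrase handling differ between platforms.

```python
from datetime import date


def or_block(terms):
    """Join synonyms with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_search_string(population, exposure, outcome):
    """Combine the concept blocks with AND."""
    return " AND ".join(or_block(block) for block in (population, exposure, outcome))


# Hypothetical term lists; a real strategy would be far more exhaustive.
population_terms = ["benthic invertebrates", "Chironomus", "Hyalella"]
exposure_terms = ["fluoranthene"]
outcome_terms = ["growth", "reproduc*"]

query = build_search_string(population_terms, exposure_terms, outcome_terms)

# Minimal search log entry covering the items listed under Search Documentation.
search_log_entry = {
    "database": "Web of Science",
    "date_searched": date.today().isoformat(),
    "search_string": query,
    "records_retrieved": None,  # filled in after the search is run
}
print(query)
```

Keeping the term lists in one place means a change agreed by the team propagates to every database-specific string, and the log entry captures exactly what was run.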

Protocol 2: Study Selection and Screening

- Deduplication: Records from all sources are combined, and duplicates are removed using reference management software.

- Pilot Screening: As per the team protocol, a pilot calibration exercise is conducted on a random sample.

- Two-Stage Screening:

  - Stage 1 (Title/Abstract): Two independent reviewers screen each record against inclusion criteria. Conflicts are resolved by consensus or a third reviewer.

  - Stage 2 (Full Text): The full texts of potentially relevant studies are retrieved. Two independent reviewers assess them against all criteria. Reasons for exclusion at this stage are documented (e.g., "wrong exposure," "no control group").

- PRISMA Flow Diagram: The results of the screening process are documented in a PRISMA flow diagram, reporting the numbers of records identified, screened, and included.
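
Reference managers and review platforms normally handle deduplication, but the underlying logic is simple enough to sketch, and doing so shows where the first counts in the flow diagram come from. The example below treats two records as duplicates if they share a DOI (case-insensitive) or a normalised title. The records and DOIs are hypothetical, and the matching rule is an assumption for illustration; real tools use fuzzier matching and usually need a manual check.

```python
import re


def normalise_title(title):
    """Lower-case the title and collapse punctuation and whitespace."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first occurrence of each record, matching on DOI or title."""
    seen_dois, seen_titles, unique = set(), set(), []
    for rec in records:
        doi = (rec.get("doi") or "").strip().lower()
        title = normalise_title(rec["title"])
        if (doi and doi in seen_dois) or title in seen_titles:
            continue  # duplicate of an earlier record
        if doi:
            seen_dois.add(doi)
        seen_titles.add(title)
        unique.append(rec)
    return unique


# Hypothetical combined export from several databases.
records = [
    {"doi": "10.1000/a", "title": "Fluoranthene toxicity in Chironomus"},
    {"doi": "10.1000/A", "title": "Fluoranthene Toxicity in Chironomus."},
    {"doi": None, "title": "Sediment PAH effects on amphipods"},
    {"doi": None, "title": "Sediment PAH Effects on Amphipods"},
    {"doi": "10.1000/c", "title": "Growth of Hyalella under PAH exposure"},
]

unique_records = deduplicate(records)

# First counts needed for a PRISMA-style flow diagram.
flow_counts = {
    "identified": len(records),
    "duplicates_removed": len(records) - len(unique_records),
    "screened": len(unique_records),
}
print(flow_counts)
```

Recording these counts at the moment each step is run avoids having to reconstruct them when the flow diagram is drawn.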

Protocol 3: Data Extraction and Quality Assessment

- Form Design: A standardized, pre-piloted data extraction form is created in a tool like Microsoft Excel or systematic review software (e.g., Covidence).

- Data Extraction: Two reviewers independently extract key data from each included study: citation, study design, population details, exposure characteristics (dose, duration), outcome data (means, SD, sample size), and funding source.

- Risk of Bias/Study Quality Assessment: Two reviewers independently assess each study's internal validity using a tool appropriate to ecotoxicology, such as the Science in Risk Assessment and Policy (SciRAP) tool or the TCEQ's quality assessment criteria [27]. This evaluates elements like blinding, randomization, dose verification, and statistical reporting.
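
Extracting means, standard deviations, and sample sizes matters because they are the inputs to a standardised effect size. As a hedged illustration, the sketch below computes Hedges' g (a standardised mean difference with a small-sample correction) and its approximate sampling variance from the extracted summary statistics. The numbers are hypothetical, and Hedges' g is only one option: other metrics, such as the log response ratio, may suit a given dataset better.

```python
import math


def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Return (g, variance of g) for treatment vs. control summary data."""
    df = n_t + n_c - 2
    # Pooled standard deviation across the two groups.
    pooled_sd = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    d = (mean_t - mean_c) / pooled_sd
    # Small-sample correction factor.
    j = 1 - 3 / (4 * df - 1)
    g = j * d
    # Large-sample approximation of the variance of d, scaled by j squared.
    var_d = (n_t + n_c) / (n_t * n_c) + d**2 / (2 * (n_t + n_c))
    return g, j**2 * var_d


# Hypothetical extracted data: growth (mm) in exposed vs. control organisms.
g, var_g = hedges_g(mean_t=8.0, sd_t=2.0, n_t=10, mean_c=10.0, sd_c=2.0, n_c=10)
print(f"Hedges' g = {g:.3f}, variance = {var_g:.3f}")
```

A negative g here indicates lower growth in the exposed group. The variance is what a later meta-analysis would use to weight each study, which is why missing SDs or sample sizes should be flagged during extraction.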

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Essential Toolkit for Conducting a Systematic Review

| Tool Category | Specific Tool/Resource | Primary Function | Notes for Ecotoxicology |

|---|---|---|---|

| Protocol & Reporting | PROSPERO Registry, PRISMA Checklist | Protocol registration and reporting guidance. | Ensures transparency and meets journal requirements. |

| Reference Management | EndNote, Zotero, Mendeley | Store, deduplicate, and manage search results. | Critical for handling large search yields from multiple databases. |

| Review Management | Covidence, Rayyan, DistillerSR | Facilitate blinded screening, conflict resolution, and data extraction. | Streamlines the collaborative review process, reducing error. |

| Search Databases | Web of Science, Scopus, PubMed, ECOTOX | Primary sources for identifying relevant scientific literature. | ECOTOX is a critical, specialized database for ecotoxicology studies. |

| Grey Literature Sources | Agency Websites (EPA, EFSA), OpenGrey, ProQuest Dissertations | Identify unpublished or regulatory data. | Mitigates publication bias; essential for regulatory contexts [27]. |

| Risk of Bias Assessment | SciRAP Tool, TCEQ Quality Criteria | Standardized assessment of study reliability and internal validity [27]. | More relevant than clinical tools (e.g., Cochrane RoB) for toxicology tests. |

| Data Analysis & Visualization | R (metafor, meta packages), RevMan, Stata | Perform meta-analysis, calculate effect sizes, assess heterogeneity, create forest plots. | Required if quantitative synthesis is planned. |

| AI-Assisted Screening | Sciscoper, ASReview | Use machine learning to prioritize records during title/abstract screening. | Can significantly improve screening efficiency for large datasets [28]. |

Mastering the Core Process: A Step-by-Step Roadmap for Ecotoxicology SRs

Formulating a precise and answerable research question is the foundational and most critical step in conducting a systematic review (SR) [29]. This step determines the entire trajectory of the review process, guiding the development of the protocol, search strategy, inclusion criteria, and data synthesis [1]. In evidence-based toxicology, a well-structured question is paramount for ensuring the review is transparent, methodologically rigorous, and reproducible, thereby providing a reliable summary of evidence to inform regulatory and scientific decisions [1]. For beginners in ecotoxicology, mastering this step is essential to avoid the pitfalls of traditional narrative reviews, which may lack explicit methods, introduce selection bias, and yield irreproducible conclusions [1].

The PICOS framework is the most commonly used tool for structuring research questions in health-related systematic reviews [29]. It provides a standardized format for defining the key components of a clinical or intervention-based question. Toxicological and ecotoxicological research poses additional challenges: reviews evaluate exposures and hazards rather than therapeutic interventions, integrate multiple evidence streams (e.g., in vivo, in vitro, epidemiological), and often deal with complex mixtures. These challenges call for a nuanced understanding of PICOS and its alternatives [1]. This section gives beginners in ecotoxicology a technical overview of formulating research questions with PICOS and other adaptable frameworks.

Deconstructing the PICOS Framework

The PICOS framework breaks down a research question into five essential, searchable elements. This structure forces clarity and completeness, ensuring the resulting question is focused and amenable to a systematic search strategy [30].

Population (P): This refers to the subjects of the research question. In ecotoxicology, this most commonly defines the biological organism(s) under study. Specifications can include species (e.g., Daphnia magna), life stage (e.g., larval zebrafish), sex, specific health status (e.g., immunocompromised models), or environmental context (e.g., benthic organisms). A precisely defined population enhances the review's relevance and applicability [29] [31].

Intervention (I) or Exposure (E): In clinical research, this is the treatment or therapy being investigated. In toxicology and ecotoxicology, this element is more accurately described as the Exposure: the agent whose effects are being studied. It covers the specific chemical or mixture (e.g., glyphosate, PFOS), its physical form, the route of exposure (e.g., aqueous, dietary, sediment), and the dose or concentration [1] [32].

Comparator (C): This is the control or alternative against which the intervention/exposure is compared. This could be a placebo, a standard treatment, an alternative chemical, or, most frequently in toxicology, a non-exposed control group. The choice of comparator is crucial for determining the basis for effect measurement [30] [33].

Outcome (O): These are the measurable endpoints or effects of interest. In ecotoxicology, outcomes are adverse effects or biomarkers of effect. They must be clearly defined, measurable, and relevant to the hypothesis. Examples include mortality (often summarized as an LC50), reproductive output, growth inhibition, genotoxicity (e.g., micronucleus frequency), and changes in specific enzyme activity (e.g., acetylcholinesterase inhibition) [30] [1].

Study Design (S): This optional but highly recommended element specifies the preferred type of primary research studies to be included in the review. Specifying the design (e.g., randomized controlled trial, cohort study, controlled laboratory experiment) helps refine the search and sets eligibility criteria based on methodological rigor [34]. For ecotoxicology, common designs include standardized toxicity tests (e.g., OECD guidelines), field studies, or observational cohort studies in wildlife.

PICOS Question Template for Ecotoxicology: “In [Population], what is the effect of [Exposure] compared to [Comparator] on [Outcome] as measured in [Study Design]?”

Table 1: PICOS Framework Applied to an Ecotoxicology Example

| PICOS Element | Definition | Ecotoxicology Example |
|---|---|---|
| Population | The subjects or biological system under study. | Freshwater amphipods (Hyalella azteca), juvenile stage. |
| Intervention/Exposure | The agent or condition being investigated. | Chronic exposure to microplastics (polyethylene, <100 μm) via sediment. |
| Comparator | The control or alternative for comparison. | Sediment without microplastic addition. |
| Outcome | The measured effect or endpoint. | Growth rate (weight gain), mortality, and reproductive success. |
| Study Design | The preferred methodology of primary studies. | Laboratory-based, controlled toxicity tests following standardized protocols (e.g., EPA, OECD). |
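As a minimal sketch, the question template and the Table 1 example can be combined in a few lines of Python. The class name `PicosQuestion` and its field names are hypothetical, chosen only for this illustration, and the element values are paraphrased from Table 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PicosQuestion:
    """The five PICOS elements, stored as short noun phrases."""

    population: str
    exposure: str
    comparator: str
    outcome: str
    study_design: str

    def to_sentence(self) -> str:
        """Fill the PICOS question template with the stored elements."""
        return (
            f"In {self.population}, what is the effect of {self.exposure} "
            f"compared to {self.comparator} on {self.outcome} "
            f"as measured in {self.study_design}?"
        )


# Element values paraphrased from Table 1 (illustrative only).
question = PicosQuestion(
    population="juvenile freshwater amphipods (Hyalella azteca)",
    exposure="chronic sediment exposure to polyethylene microplastics (<100 μm)",
    comparator="sediment without microplastic addition",
    outcome="growth rate, mortality, and reproductive success",
    study_design="standardized laboratory toxicity tests",
)
print(question.to_sentence())
```

Keeping the elements as separate fields, rather than as one free-text sentence, makes it easier to reuse them later when drafting eligibility criteria and search terms.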

Beyond PICOS: Alternative Frameworks for Different Question Types

While PICOS is ideal for intervention/exposure questions, other frameworks may be better suited for different types of research questions common in ecotoxicology, such as those focused on diagnosis, prognosis, or qualitative phenomena [29] [32]. Selecting the correct framework is key to a well-structured question.

- PECO: A direct adaptation of PICO for exposure-focused fields like environmental science and toxicology. It replaces “Intervention” with “Exposure,” making it intuitively aligned with ecotoxicological questions [32].

- PFO (Population, Factor, Outcome): Used for prognosis or risk factor questions. It examines whether a specific factor predicts a particular outcome over time. In ecotoxicology, this could investigate genetic markers as predictors of population decline in a contaminated habitat [34].

- PIRD (Population, Index Test, Reference Test, Diagnosis): Designed for diagnostic test accuracy questions. This is relevant in ecotoxicology for assessing the validity of new biomarker tests (Index Test) against a traditional, gold-standard toxicity assay (Reference Test) for diagnosing a specific toxic effect [34].

- SPIDER (Sample, Phenomenon of Interest, Design, Evaluation, Research type): Developed for qualitative and mixed-methods research [29] [32]. This is applicable for systematic reviews that seek to synthesize qualitative evidence, such as studies on stakeholder perceptions of chemical risk or the socio-economic impact of pollution.

Table 2: Selecting a Framework Based on Research Question Type

| Question Type / Focus | Recommended Framework | Key Elements | Ecotoxicology Example Question |
|---|---|---|---|
| Exposure / Intervention | PICOS or PECO | Population, Exposure, Comparator, Outcome, Study Design | In honey bees (Apis mellifera), does sublethal exposure to neonicotinoid pesticide (imidacloprid) compared to no exposure reduce foraging efficiency and colony strength? |
| Etiology / Risk | PECO or PFO | Population, Exposure/Factor, Comparator (optional), Outcome | Are amphibian populations in agricultural wetlands with higher pesticide runoff at greater risk of limb malformations? |
| Diagnostic Test Accuracy | PIRD | Population, Index test, Reference test, Diagnosis | In fish, is the induction of vitellogenin in males (Index Test) an accurate diagnostic for estrogenic endocrine disruption compared to histological analysis of gonads (Reference Test)? |
| Qualitative Experience | SPIDER | Sample, Phenomenon of Interest, Design, Evaluation, Research type | What are the perceived barriers and facilitators (Phenomenon) among farmers (Sample) to adopting pesticide alternatives, as explored in qualitative interview studies (Research type)? |

The following diagram illustrates the decision-making process for selecting the most appropriate framework based on the core focus of the research question.
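The same selection logic can also be written as a small lookup, shown here as a hedged sketch. The mapping mirrors Table 2; the short keys (`"exposure"`, `"etiology"`, and so on) and the function name `suggest_frameworks` are assumptions made for this example, not established terminology.

```python
# Mapping taken from Table 2. The dictionary keys are hypothetical short
# labels for the question types, introduced only for this sketch.
FRAMEWORK_BY_QUESTION_TYPE = {
    "exposure": ["PICOS", "PECO"],   # exposure / intervention questions
    "etiology": ["PECO", "PFO"],     # etiology / risk questions
    "diagnostic": ["PIRD"],          # diagnostic test accuracy questions
    "qualitative": ["SPIDER"],       # qualitative experience questions
}


def suggest_frameworks(question_type: str) -> list[str]:
    """Return the candidate frameworks for a question type label."""
    key = question_type.strip().lower()
    if key not in FRAMEWORK_BY_QUESTION_TYPE:
        known = ", ".join(sorted(FRAMEWORK_BY_QUESTION_TYPE))
        raise ValueError(f"Unknown question type {question_type!r}; expected one of: {known}")
    # Return a copy so callers cannot modify the shared mapping.
    return list(FRAMEWORK_BY_QUESTION_TYPE[key])


print(suggest_frameworks("exposure"))
```

Where two frameworks are listed, the final choice still depends on the review team's judgment about which elements the question actually needs.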

Step-by-Step Methodology for Formulating and Refining the Question

Formulating a research question is an iterative process that requires background knowledge and strategic scoping. The following protocol outlines a standard operational procedure for developing a PICOS-based question suitable for a systematic review in ecotoxicology.

Preliminary Scoping and Background Research

Before drafting the question, conduct preliminary scoping searches in key databases (e.g., Web of Science, Scopus, PubMed, and TOXLINE, most of whose records are now available through PubMed) [29]. The goal is to:

- Identify key literature and experts in the field.

- Gauge the volume and nature of existing primary research.

- Understand how key concepts (population, exposure, outcomes) are defined and measured in the literature [1].

- Identify evidence gaps that the review could fill. This step prevents duplicating existing reviews and ensures the question is novel and feasible [30].
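For scoping searches, draft concept terms are often combined into a Boolean string: synonyms within a concept joined by OR, and concepts joined by AND. The sketch below assumes that convention; the function name `build_search_string` and the example terms are illustrative, and truncation symbols, phrase quoting, and field tags differ between databases, so the output would need adapting to each platform.

```python
def build_search_string(concepts: dict[str, list[str]]) -> str:
    """Join synonyms with OR inside each concept and concepts with AND."""
    blocks = []
    for terms in concepts.values():
        if not terms:
            continue  # skip concepts that have no draft terms yet
        # Quote multi-word terms so they are searched as phrases.
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        blocks.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(blocks)


# Illustrative draft terms only, not a validated search strategy.
concepts = {
    "population": ["Hyalella azteca", "amphipod*"],
    "exposure": ["microplastic*", "polyethylene"],
    "outcome": ["growth", "mortality", "reproduc*"],
}
print(build_search_string(concepts))
```

Running a few variants of such a string and comparing hit counts is one quick way to gauge the volume of evidence before the question is fixed.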

Drafting the PICOS Components

Using the insights from scoping, draft each element of the PICOS framework with precision:

- Define Population: Be specific about species, strain, life stage, and health status. Consider relevance to the ecosystem and regulatory context.

- Define Exposure: Specify the chemical/mixture, its form, route, and relevant dose range (e.g., environmentally relevant concentrations). Consider co-exposures if relevant.

- Define Comparator: Clearly state the control condition (e.g., vehicle control, sham exposure, background level).

- Define Outcome: Select primary and secondary outcomes that are biologically meaningful, measurable, and commonly reported. Prioritize standardized endpoints (e.g., OECD guidelines) for better synthesis [1].

- Consider Study Design & Time: Specify the preferred study types (e.g., controlled lab studies, field mesocosm studies) and, if critical, a relevant time frame for outcome measurement (e.g., chronic 21-day exposure) [30] [33].

Assembling the Question and Checking FINER Criteria

Combine the elements into a clear, focused question. Then, evaluate it against the FINER criteria to assess its overall viability [34]:

- Feasible: Is there an adequate number of primary studies, and is the scope manageable?

- Interesting: Does the question matter to the scientific community and potential end-users (e.g., regulators)?

- Novel: Does it address a knowledge gap, or confirm or contradict prior findings?

- Ethical: For a review, does it make ethical use of published data and report them faithfully?

- Relevant: Does it advance ecotoxicology science, policy, or environmental health?

Protocol Registration

Once the question is finalized, document it within a detailed review protocol. The protocol should include the background, the clear research question, and explicit plans for the search strategy, study selection, data extraction, risk of bias assessment, and synthesis methods [29] [35]. Registering this protocol on a platform like PROSPERO (for health-related outcomes) or the Open Science Framework is considered best practice. It enhances transparency, reduces duplication of effort, and minimizes bias by committing to methods before data collection begins [29] [32].
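Before registration, a draft protocol can be checked for the sections listed above. The following is a minimal sketch, assuming the draft is held as a dictionary of section name to text; the key names and the function `missing_sections` are hypothetical and do not correspond to any registry's submission format.

```python
# Section names follow the protocol contents listed in the text.
# The snake_case keys are an assumption made for this sketch.
REQUIRED_SECTIONS = (
    "background",
    "research_question",
    "search_strategy",
    "study_selection",
    "data_extraction",
    "risk_of_bias_assessment",
    "synthesis_methods",
)


def missing_sections(protocol: dict[str, str]) -> list[str]:
    """List required sections that are absent or contain only whitespace."""
    return [name for name in REQUIRED_SECTIONS if not protocol.get(name, "").strip()]


# A partially written draft (placeholder text only).
draft = {
    "background": "Microplastic effects on freshwater invertebrates.",
    "research_question": "In freshwater benthic invertebrates, does chronic exposure affect growth?",
    "search_strategy": "",
}
print(missing_sections(draft))
```

An empty list indicates that every section has at least some content; it says nothing about whether that content is adequate.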

Application in Ecotoxicology: Case Study and Evidence Integration Logic

Case Study: Microplastics and Freshwater Invertebrates

- Background: Microplastic pollution is a global concern, but its effects on freshwater invertebrates are reported across diverse, sometimes conflicting, primary studies.

- PICOS Question: “In freshwater benthic invertebrates (P), does chronic exposure to synthetic microplastic fibers (I) compared to natural particle or no-exposure controls (C) affect growth, mortality, and reproductive success (O) in controlled laboratory studies (S)?”

- Protocol Development: This question leads directly to explicit inclusion/exclusion criteria: studies must involve defined benthic invertebrates, expose them to synthetic (not biodegradable) microplastic fibers, include a relevant control, and measure at least one of the specified outcomes in a controlled lab setting. Studies on marine organisms, other particle shapes (e.g., beads), or field observations would be excluded.
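The eligibility criteria in this case study can be expressed as explicit checks, which helps reviewers apply them consistently. The sketch below is illustrative only: the `StudyRecord` fields, their allowed values, and the wording of the exclusion reasons are assumptions, and in practice reviewers make these judgments from each study's text.

```python
from dataclasses import dataclass


@dataclass
class StudyRecord:
    """Hypothetical screening fields coded by a reviewer for one study."""

    habitat: str             # e.g. "freshwater" or "marine"
    organism_group: str      # e.g. "benthic invertebrate"
    particle_shape: str      # e.g. "fiber" or "bead"
    synthetic_polymer: bool  # True if the particles are synthetic
    has_control: bool        # True if a relevant control group is reported
    outcomes: set[str]       # outcomes measured in the study
    setting: str             # e.g. "laboratory" or "field"


ELIGIBLE_OUTCOMES = {"growth", "mortality", "reproduction"}


def exclusion_reasons(record: StudyRecord) -> list[str]:
    """Return every criterion the study fails; an empty list means include."""
    reasons = []
    if record.habitat != "freshwater":
        reasons.append("not a freshwater organism")
    if record.organism_group != "benthic invertebrate":
        reasons.append("not a benthic invertebrate")
    if record.particle_shape != "fiber" or not record.synthetic_polymer:
        reasons.append("exposure is not synthetic microplastic fibers")
    if not record.has_control:
        reasons.append("no relevant control")
    if not (record.outcomes & ELIGIBLE_OUTCOMES):
        reasons.append("no eligible outcome measured")
    if record.setting != "laboratory":
        reasons.append("not a controlled laboratory study")
    return reasons


candidate = StudyRecord(
    habitat="marine",
    organism_group="benthic invertebrate",
    particle_shape="bead",
    synthetic_polymer=True,
    has_control=True,
    outcomes={"mortality"},
    setting="laboratory",
)
print(exclusion_reasons(candidate))
```

Recording all failed criteria, rather than stopping at the first, makes it simpler to report exclusion reasons at the full-text stage.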

The logic of answering such a question through a systematic review involves integrating evidence from multiple primary studies, each with its own internal validity. The following diagram maps this logical pathway from individual study results to a synthesized review conclusion, highlighting the critical role of quality assessment at each stage.

Table 3: Key Tools and Resources for Research Question Formulation

| Tool / Resource | Type | Primary Function in Question Formulation | Source / Example |
|---|---|---|---|
| PICOS Framework | Conceptual Tool | Provides the standard structure to deconstruct and define all components of a focused, answerable research question. | [30] [33] [31] |
| FINER Criteria | Checklist | A mnemonic to assess the overall viability and worth of a research question (Feasible, Interesting, Novel, Ethical, Relevant). | [34] |
| PROSPERO Database | Protocol Registry | Allows researchers to check for existing or in-progress reviews on their topic to avoid duplication. Registration of a protocol commits to methods a priori. | International Prospective Register of Systematic Reviews [29] [32] |
| Scoping Search | Methodological Step | Preliminary searches in major databases to map existing literature, identify key terms, and gauge the volume of evidence, informing the feasibility and scope of the PICOS question. | Databases: PubMed, Web of Science, Scopus, TOXLINE [29] [1] |
| Systematic Review Protocol Template | Document Template | Provides a structured format to formally document the background, PICOS question, and planned methods for search, selection, appraisal, and synthesis. | Centre for Reviews and Dissemination (CRD), University of York [29] [32] |
| Alternative Frameworks (PECO, SPIDER, etc.) | Conceptual Tools | Provide tailored structures for research questions that do not fit the classic intervention/therapy model of PICO, essential for ecotoxicology and qualitative reviews. | [29] [34] [32] |